吴裕雄 python 机器学习-NBYS(1)

import numpy as np def loadDataSet():

postingList=[['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'],

['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'],

['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'],

['stop', 'posting', 'stupid', 'worthless', 'garbage'],

['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'],

['quit', 'buying', 'worthless', 'dog', 'food', 'stupid']]

classVec = [0,1,0,1,0,1]

return postingList,classVec def createVocabList(dataSet):

vocabSet = set([])

for document in dataSet:

vocabSet = vocabSet | set(document)

return list(vocabSet) def setOfWords2Vec(vocabList, inputSet):

returnVec = [0]*len(vocabList)

for word in inputSet:

if word in vocabList:

returnVec[vocabList.index(word)] = 1

else:

print("the word: %s is not in my Vocabulary!" % word)

return returnVec def trainNB0(trainMatrix,trainCategory):

numTrainDocs = len(trainMatrix)

numWords = len(trainMatrix[0])

pAbusive = sum(trainCategory)/float(numTrainDocs)

p0Num = np.ones(numWords)

p1Num = np.ones(numWords)

p0Denom = 2.0

p1Denom = 2.0

for i in range(numTrainDocs):

if(trainCategory[i] == 1):

p1Num += trainMatrix[i]

p1Denom += sum(trainMatrix[i])

else:

p0Num += trainMatrix[i]

p0Denom += sum(trainMatrix[i])

p1Vect = np.log(p1Num/p1Denom)

p0Vect = np.log(p0Num/p0Denom)

return p0Vect,p1Vect,pAbusive def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):

p1 = sum(vec2Classify * p1Vec) + np.log(pClass1)

p0 = sum(vec2Classify * p0Vec) + np.log(1.0 - pClass1)

if p1 > p0:

return 1

else:

return 0 def bagOfWords2VecMN(vocabList, inputSet):

returnVec = [0]*len(vocabList)

for word in inputSet:

if(word in vocabList):

returnVec[vocabList.index(word)] += 1

return returnVec def testingNB():

listOPosts,listClasses = loadDataSet()

myVocabList = createVocabList(listOPosts)

trainMat=[]

for postinDoc in listOPosts:

trainMat.append(setOfWords2Vec(myVocabList, postinDoc))

p0V,p1V,pAb = trainNB0(np.array(trainMat),np.array(listClasses))

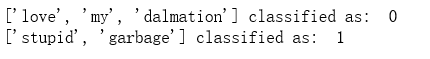

testEntry = ['love', 'my', 'dalmation']

thisDoc = np.array(setOfWords2Vec(myVocabList, testEntry))

print(testEntry,'classified as: ',classifyNB(thisDoc,p0V,p1V,pAb))

testEntry = ['stupid', 'garbage']

thisDoc = np.array(setOfWords2Vec(myVocabList, testEntry))

print(testEntry,'classified as: ',classifyNB(thisDoc,p0V,p1V,pAb)) testingNB()

import re

import numpy as np def createVocabList(dataSet):

vocabSet = set([])

for document in dataSet:

vocabSet = vocabSet | set(document)

return list(vocabSet) def bagOfWords2VecMN(vocabList, inputSet):

returnVec = [0]*len(vocabList)

for word in inputSet:

if(word in vocabList):

returnVec[vocabList.index(word)] += 1

return returnVec def trainNB0(trainMatrix,trainCategory):

numTrainDocs = len(trainMatrix)

numWords = len(trainMatrix[0])

pAbusive = sum(trainCategory)/float(numTrainDocs)

p0Num = np.ones(numWords)

p1Num = np.ones(numWords)

p0Denom = 2.0

p1Denom = 2.0

for i in range(numTrainDocs):

if(trainCategory[i] == 1):

p1Num += trainMatrix[i]

p1Denom += sum(trainMatrix[i])

else:

p0Num += trainMatrix[i]

p0Denom += sum(trainMatrix[i])

p1Vect = np.log(p1Num/p1Denom)

p0Vect = np.log(p0Num/p0Denom)

return p0Vect,p1Vect,pAbusive def textParse(bigString):

listOfTokens = re.split(r'\W*', bigString)

return [tok.lower() for tok in listOfTokens if len(tok) > 2] def spamTest():

docList=[]

classList = []

fullText =[]

for i in range(1,26):

wordList = textParse(open('D:\\LearningResource\\machinelearninginaction\\Ch04\\email\\spam\\%d.txt' % i).read())

docList.append(wordList)

fullText.extend(wordList)

classList.append(1)

wordList = textParse(open('D:\\LearningResource\\machinelearninginaction\\Ch04\\email\\ham\\%d.txt' % i).read())

docList.append(wordList)

fullText.extend(wordList)

classList.append(0)

vocabList = createVocabList(docList)

trainingSet = list(np.arange(50))

testSet=[]

for i in range(10):

randIndex = int(np.random.uniform(0,len(trainingSet)))

testSet.append(trainingSet[randIndex])

del(trainingSet[randIndex])

trainMat=[]

trainClasses = []

for docIndex in trainingSet:

trainMat.append(bagOfWords2VecMN(vocabList, docList[docIndex]))

trainClasses.append(classList[docIndex])

p0V,p1V,pSpam = trainNB0(np.array(trainMat),np.array(trainClasses))

errorCount = 0

for docIndex in testSet:

wordVector = bagOfWords2VecMN(vocabList, docList[docIndex])

if(classifyNB(np.array(wordVector),p0V,p1V,pSpam) != classList[docIndex]):

errorCount += 1

print("classification error",docList[docIndex])

print('the error rate is: ',float(errorCount)/len(testSet)) spamTest()

吴裕雄 python 机器学习-NBYS(1)的更多相关文章

- 吴裕雄 python 机器学习-NBYS(2)

import matplotlib import numpy as np import matplotlib.pyplot as plt n = 1000 xcord0 = [] ycord0 = [ ...

- 吴裕雄 python 机器学习——分类决策树模型

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets from sklearn.model_s ...

- 吴裕雄 python 机器学习——回归决策树模型

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets from sklearn.model_s ...

- 吴裕雄 python 机器学习——线性判断分析LinearDiscriminantAnalysis

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——逻辑回归

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——ElasticNet回归

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——Lasso回归

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets, linear_model from s ...

- 吴裕雄 python 机器学习——岭回归

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets, linear_model from s ...

- 吴裕雄 python 机器学习——线性回归模型

import numpy as np from sklearn import datasets,linear_model from sklearn.model_selection import tra ...

随机推荐

- Solr DocValues详解

前言: 在Lucene4.x之后,出现一个重大的特性,就是索引支持DocValues,这对于广大的solr和elasticsearch用户,无疑来说是一个福音,这玩意的出现通过牺牲一定的磁盘空间带来的 ...

- IE兼容性问题的总结

一.IE6/IE7对display:inline-block的支持欠缺 1.表现描述 这个应该算是很经典的ie兼容性问题了,inline-block作用就是将块级元素以行的等式显示.在主流浏览器中水平 ...

- Maven依赖下载速度慢,不用怕,这么搞快了飞起

一.背景 众所周知,Maven对于依赖的管理让我们程序员感觉爽的不要不要的,但是由于这货是国外出的,所以在我们从中央仓库下载依赖的时候,速度如蜗牛一般,让人不能忍,并且这也是大多数程序员都会遇到的问题 ...

- qt 软件打包

今天呈现的客户端完成了要打包发布,想了一下还不会,就问了一下度娘,在此记录一下学习的程度 1>将QT编译工具的BUG模式切换成Release模式,在Release模式下生成一个*.exe的可执行 ...

- QT_QSlider的总结

当鼠标选中QSlider 上时,通过点击的数值为setpageStep():通过左右方向键按钮移动的数值为setsingleStep(). 鼠标滚轮上面两者都不行,不知道是什么原因! 应用: http ...

- 20165205 2017-2018-2 《Java程序设计》第七周学习总结

20165205 2017-2018-2 <Java程序设计>第七周学习总结 教材学习内容总结 下载XAMPP并完成配置 完成XAMPP与数据库的连接 学会创建一个数据库 学会用java语 ...

- Microsoft Office Access数据库或项目包含一个对文件“dao360.dll”版本5.0.的丢失的或损坏的引用。

今天使用 office 2007 access 打开 2003 的数据库中的表时候,提示这个错误.经过搜索,发现是没有 dao360.dll 的问题. 在 https://cn.dll-files.c ...

- 《算法》第四章部分程序 part 1

▶ 书中第四章部分程序,加上自己补充的代码,包含无向 / 有向图类 ● 无向图类 package package01; import java.util.NoSuchElementException; ...

- tensorflow 入门

1. tensorflow 官方文档中文版(下载) 2. tensorflow mac安装参考 http://www.tuicool.com/articles/Fni2Yr 3. 源码例子目录 l ...

- IIS编辑器错误信息:CS0016解决方案

错误信息: 运行asp.net程序时候,编译器错误消息: CS0016: 未能写入输出文件“c:\Windows\Microsoft.NET\Framework\v2.0.50727\Temporar ...