6.Spark streaming技术内幕 : Job动态生成原理与源码解析

原创文章,转载请注明:转载自 周岳飞博客(http://www.cnblogs.com/zhouyf/)

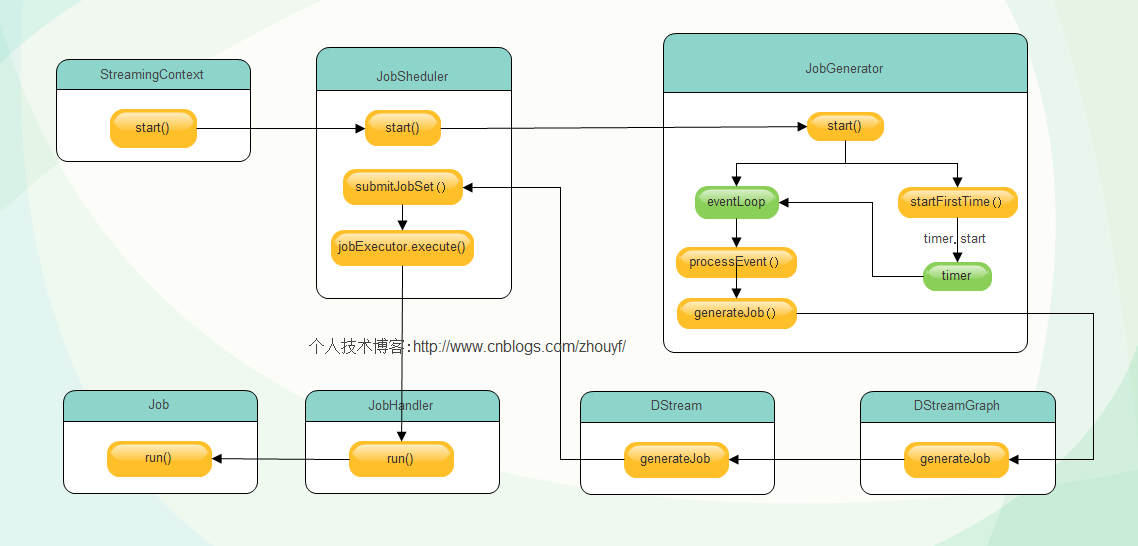

try {validate()// Start the streaming scheduler in a new thread, so that thread local properties// like call sites and job groups can be reset without affecting those of the// current thread.ThreadUtils.runInNewThread("streaming-start") {sparkContext.setCallSite(startSite.get)sparkContext.clearJobGroup()sparkContext.setLocalProperty(SparkContext.SPARK_JOB_INTERRUPT_ON_CANCEL, "false")savedProperties.set(SerializationUtils.clone(sparkContext.localProperties.get()).asInstanceOf[Properties])scheduler.start()}state = StreamingContextState.ACTIVE} catch {case NonFatal(e) =>logError("Error starting the context, marking it as stopped", e)scheduler.stop(false)state = StreamingContextState.STOPPEDthrow e}

eventLoop = new EventLoop[JobSchedulerEvent]("JobScheduler") {override protected def onReceive(event: JobSchedulerEvent): Unit = processEvent(event)override protected def onError(e: Throwable): Unit = reportError("Error in job scheduler", e)}eventLoop.start()// attach rate controllers of input streams to receive batch completion updatesfor {inputDStream <- ssc.graph.getInputStreamsrateController <- inputDStream.rateController} ssc.addStreamingListener(rateController)listenerBus.start()receiverTracker = new ReceiverTracker(ssc)inputInfoTracker = new InputInfoTracker(ssc)executorAllocationManager = ExecutorAllocationManager.createIfEnabled(ssc.sparkContext,receiverTracker,ssc.conf,ssc.graph.batchDuration.milliseconds,clock)executorAllocationManager.foreach(ssc.addStreamingListener)receiverTracker.start()jobGenerator.start()executorAllocationManager.foreach(_.start())logInfo("Started JobScheduler")

private def processEvent(event: JobSchedulerEvent) {try {event match {case JobStarted(job, startTime) => handleJobStart(job, startTime)case JobCompleted(job, completedTime) => handleJobCompletion(job, completedTime)case ErrorReported(m, e) => handleError(m, e)}} catch {case e: Throwable =>reportError("Error in job scheduler", e)}}

/** Start generation of jobs */

def start(): Unit = synchronized {

if (eventLoop != null) return // generator has already been started // Call checkpointWriter here to initialize it before eventLoop uses it to avoid a deadlock.

// See SPARK-10125

checkpointWriter eventLoop = new EventLoop[JobGeneratorEvent]("JobGenerator") {

override protected def onReceive(event: JobGeneratorEvent): Unit = processEvent(event) override protected def onError(e: Throwable): Unit = {

jobScheduler.reportError("Error in job generator", e)

}

}

eventLoop.start() if (ssc.isCheckpointPresent) {

restart()

} else {

startFirstTime()

}

}

/** Generate jobs and perform checkpoint for the given `time`. */private def generateJobs(time: Time) {// Checkpoint all RDDs marked for checkpointing to ensure their lineages are// truncated periodically. Otherwise, we may run into stack overflows (SPARK-6847).ssc.sparkContext.setLocalProperty(RDD.CHECKPOINT_ALL_MARKED_ANCESTORS, "true")Try {jobScheduler.receiverTracker.allocateBlocksToBatch(time) // allocate received blocks to batchgraph.generateJobs(time) // generate jobs using allocated block} match {case Success(jobs) =>val streamIdToInputInfos = jobScheduler.inputInfoTracker.getInfo(time)jobScheduler.submitJobSet(JobSet(time, jobs, streamIdToInputInfos))case Failure(e) =>jobScheduler.reportError("Error generating jobs for time " + time, e)}eventLoop.post(DoCheckpoint(time, clearCheckpointDataLater = false))}

def generateJobs(time: Time): Seq[Job] = {logDebug("Generating jobs for time " + time)val jobs = this.synchronized {outputStreams.flatMap { outputStream =>val jobOption = outputStream.generateJob(time)jobOption.foreach(_.setCallSite(outputStream.creationSite))jobOption}}logDebug("Generated " + jobs.length + " jobs for time " + time)jobs}

def submitJobSet(jobSet: JobSet) {if (jobSet.jobs.isEmpty) {logInfo("No jobs added for time " + jobSet.time)} else {listenerBus.post(StreamingListenerBatchSubmitted(jobSet.toBatchInfo))jobSets.put(jobSet.time, jobSet)jobSet.jobs.foreach(job => jobExecutor.execute(new JobHandler(job)))logInfo("Added jobs for time " + jobSet.time)}}

Attachment List

6.Spark streaming技术内幕 : Job动态生成原理与源码解析的更多相关文章

- Spark streaming技术内幕6 : Job动态生成原理与源码解析

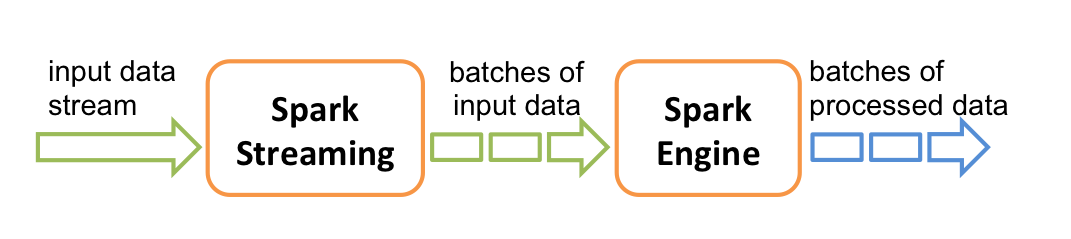

原创文章,转载请注明:转载自 周岳飞博客(http://www.cnblogs.com/zhouyf/) Spark streaming 程序的运行过程是将DStream的操作转化成RDD的操作,S ...

- Spark技术内幕: Task向Executor提交的源码解析

在上文<Spark技术内幕:Stage划分及提交源码分析>中,我们分析了Stage的生成和提交.但是Stage的提交,只是DAGScheduler完成了对DAG的划分,生成了一个计算拓扑, ...

- 7.spark Streaming 技术内幕 : 从DSteam到RDD全过程解析

原创文章,转载请注明:转载自 听风居士博客(http://www.cnblogs.com/zhouyf/) 上篇博客讨论了Spark Streaming 程序动态生成Job的过程,并留下一个疑问: ...

- Spark技术内幕:Stage划分及提交源码分析

http://blog.csdn.net/anzhsoft/article/details/39859463 当触发一个RDD的action后,以count为例,调用关系如下: org.apache. ...

- 9. Spark Streaming技术内幕 : Receiver在Driver的精妙实现全生命周期彻底研究和思考

原创文章,转载请注明:转载自 听风居士博客(http://www.cnblogs.com/zhouyf/) Spark streaming 程序需要不断接收新数据,然后进行业务逻辑 ...

- JDK1.8 动态代理机制及源码解析

动态代理 a) jdk 动态代理 Proxy, 核心思想:通过实现被代理类的所有接口,生成一个字节码文件后构造一个代理对象,通过持有反射构造被代理类的一个实例,再通过invoke反射调用被代理类实例的 ...

- Spark集群任务提交流程----2.1.0源码解析

Spark的应用程序是通过spark-submit提交到Spark集群上运行的,那么spark-submit到底提交了什么,集群是怎样调度运行的,下面一一详解. 0. spark-submit提交任务 ...

- 贯通Spark Streaming JobScheduler内幕实现和深入思考

本节主要内容: 一.SparkStreaming Job生成深度思考 二.SparkStreaming Job生成源码解析 JobScheduler的地位非常的重要,所有的关键都在JobSchedul ...

- Spark Streaming运行流程及源码解析(一)

本系列主要描述Spark Streaming的运行流程,然后对每个流程的源码分别进行解析 之前总听同事说Spark源码有多么棒,咱也不知道,就是疯狂点头.今天也来撸一下Spark源码. 对Spark的 ...

随机推荐

- WPF DataGrid、ListView 简单绑定

DataGrid运行效果: xaml 代码: DataGridName= dtgData ItemsSource= {Binding} AutoGenerateColumns= False DataG ...

- POJ 3255 Roadblocks (次短路模板)

Roadblocks http://poj.org/problem?id=3255 Time Limit: 2000MS Memory Limit: 65536K Descriptio ...

- 51Nod 1049最大子段和 | 模板

Input示例 6 -2 11 -4 13 -5 -2 Output示例 20 1.最大子段和模板 #include "bits/stdc++.h" using namespace ...

- asyncio 实现 aiohttp

#asyncio 没有提供http协议的接口 aiohttp import asyncio import socket from urllib.parse import urlparse async ...

- centos7.2编译安装zabbix-3.0.4

安装zabbix-3.0.4 #安装必备的包 yum -y install gcc* make php php-gd php-mysql php-bcmath php-mbstring php-xml ...

- 知问前端——Ajax提交表单

本文,运用两大表单插件,完成数据表新增的工作. 一.创建数据库 创建一个数据库,名称为:zhiwen,表——user表,字段依次为:id.name.pass.email.sex.birthday.da ...

- el-date-picker 日期格式化 yyyy-MM-dd

<el-date-picker format="yyyy-MM-dd" v-model="dateValue" type="date" ...

- hdu 1102 Constructing Roads (最小生成树)

题目链接:http://acm.hdu.edu.cn/showproblem.php?pid=1102 Constructing Roads Time Limit: 2000/1000 MS (Jav ...

- ARM linux的启动部分源代码简略分析【转】

转自:http://www.cnblogs.com/armlinux/archive/2011/11/07/2396784.html ARM linux的启动部分源代码简略分析 以友善之臂的mini2 ...

- centos 引导盘

# grub.conf generated by anaconda## Note that you do not have to rerun grub after making changes to ...