7. Spark Streaming Internals: From DStream to RDD, a Full Walkthrough

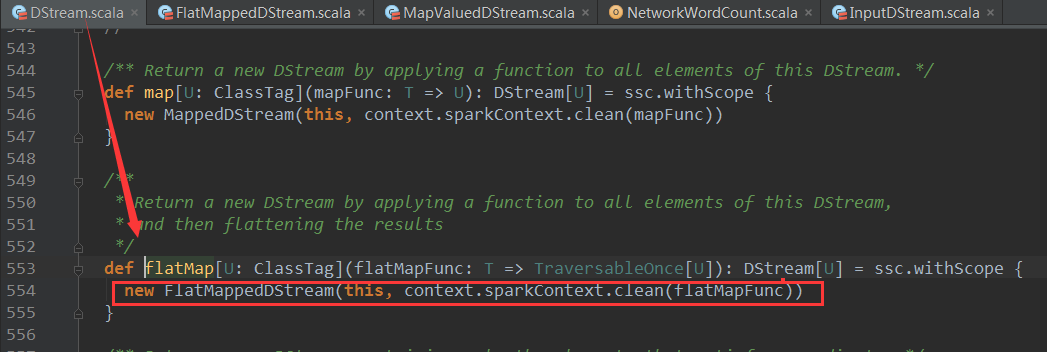

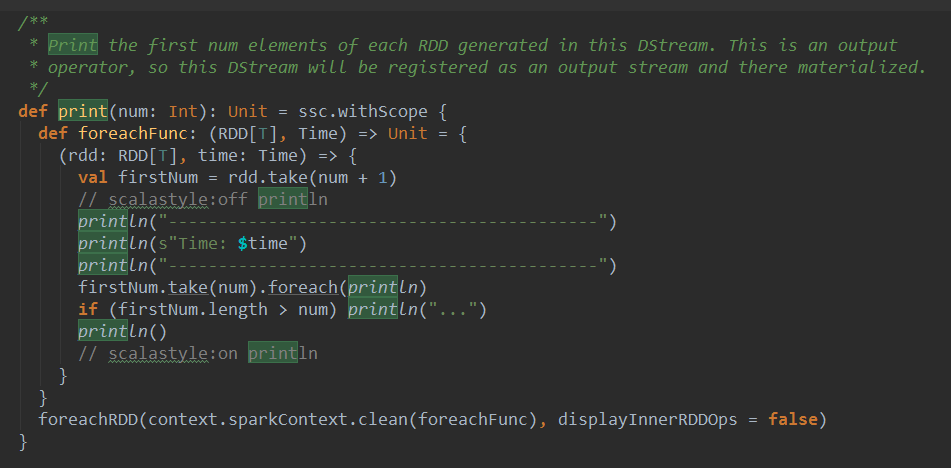

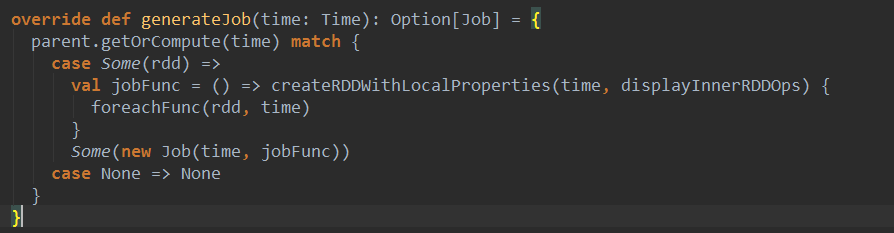

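The previous post discussed how a Spark Streaming program dynamically generates Jobs, and it left one question open: JobScheduler submits each dynamically generated Job and then calls the Job object's run method, but how does that call to run end up triggering an RDD action, which is what actually starts the job's execution? This post answers that question in detail, using the following WordCount program as the running example.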

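    import org.apache.spark.SparkConf
    import org.apache.spark.streaming.{Seconds, StreamingContext}

    object WordCount {
      def main(args: Array[String]): Unit = {
        val sparkConf = new SparkConf().setMaster("local[4]").setAppName("WordCount")
        // One batch per second.
        val ssc = new StreamingContext(sparkConf, Seconds(1))
        val lines = ssc.socketTextStream("localhost", 9999)
        val words = lines.flatMap(_.split(" "))
        val wordCounts = words.map(x => (x, 1)).reduceByKey(_ + _)
        // Output operation: the only step that leads to an RDD action.
        wordCounts.print()
        ssc.start()
        ssc.awaitTermination()
      }
    }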
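
To make the question concrete, here is a minimal plain-Scala sketch of the idea, written without any Spark dependency. The names `MiniRDD`, `MiniJob`, `MiniForEachDStream` and `printLike` are hypothetical and only loosely modeled on the roles that the RDD, the streaming Job, and the output DStream play; this is not the actual Spark source. The point it illustrates is that a job only wraps a closure, and nothing is computed until `run()` invokes that closure, which in turn calls an action.

```scala
import scala.collection.mutable.ArrayBuffer
import scala.util.Try

// Hypothetical stand-in for an RDD: map is lazy, take is an action.
final class MiniRDD[T](compute: () => Seq[T]) {
  def map[U](f: T => U): MiniRDD[U] = new MiniRDD[U](() => compute().map(f))
  def take(n: Int): Seq[T] = compute().take(n) // forces the computation
}

// Hypothetical stand-in for a streaming job: it only holds a closure.
final class MiniJob(val time: Long, func: () => Unit) {
  def run(): Try[Unit] = Try(func()) // the closure executes here
}

// Hypothetical stand-in for an output DStream: for each batch time it
// packages "apply foreachFunc to this batch's RDD" into a job.
final class MiniForEachDStream[T](
    getRDD: Long => Option[MiniRDD[T]],
    foreachFunc: (MiniRDD[T], Long) => Unit) {
  def generateJob(time: Long): Option[MiniJob] =
    getRDD(time).map { rdd =>
      new MiniJob(time, () => foreachFunc(rdd, time))
    }
}

object JobRunSketch {
  var computeCalls = 0                       // counts real computations
  val output = ArrayBuffer.empty[String]     // collects what would be printed

  // A print()-like output operation: its function calls the take action.
  def printLike(words: Seq[String]): MiniForEachDStream[(String, Int)] = {
    val getRDD: Long => Option[MiniRDD[(String, Int)]] = _ => {
      val source = new MiniRDD[String](() => { computeCalls += 1; words })
      Some(source.map(w => (w, 1)))          // lazy, nothing runs yet
    }
    new MiniForEachDStream[(String, Int)](getRDD, (rdd, time) => {
      val firstTen = rdd.take(10)            // the action
      output += s"Time: $time ms -> ${firstTen.mkString(", ")}"
    })
  }

  def main(args: Array[String]): Unit = {
    val job = printLike(Seq("a", "b")).generateJob(1000L).get
    println(s"computations before run(): $computeCalls")
    job.run()
    println(s"computations after run(): $computeCalls")
    output.foreach(println)
  }
}
```

In this model, generating the job builds the lazy chain but computes nothing; the counter only increases once `run()` is called, because that is the first point at which `take` is reached.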

| Output Operation | Meaning |
|---|---|
| print() | Prints the first ten elements of every batch of data in a DStream on the driver node running the streaming application. This is useful for development and debugging. In the Python API this is called pprint(). |
| saveAsTextFiles(prefix, [suffix]) | Save this DStream's contents as text files. The file name at each batch interval is generated based on prefix and suffix: "prefix-TIME_IN_MS[.suffix]". |
| saveAsObjectFiles(prefix, [suffix]) | Save this DStream's contents as SequenceFiles of serialized Java objects. The file name at each batch interval is generated based on prefix and suffix: "prefix-TIME_IN_MS[.suffix]". Not available in the Python API. |
| saveAsHadoopFiles(prefix, [suffix]) | Save this DStream's contents as Hadoop files. The file name at each batch interval is generated based on prefix and suffix: "prefix-TIME_IN_MS[.suffix]". Not available in the Python API. |
| foreachRDD(func) | The most generic output operator that applies a function, func, to each RDD generated from the stream. This function should push the data in each RDD to an external system, such as saving the RDD to files, or writing it over the network to a database. Note that the function func is executed in the driver process running the streaming application, and will usually have RDD actions in it that will force the computation of the streaming RDDs. |
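
As an illustration of the last row, the following sketch uses `foreachRDD` directly and places an explicit RDD action (`take`) inside the function. It assumes Spark Streaming is on the classpath; the queue-backed input stream, the object name `ForeachRDDSketch`, the five-second timeout, and the sample lines are choices made here for a self-contained local run and do not come from the original program, which reads from a socket.

```scala
import org.apache.spark.SparkConf
import org.apache.spark.rdd.RDD
import org.apache.spark.streaming.{Seconds, StreamingContext, Time}
import scala.collection.mutable

object ForeachRDDSketch {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setMaster("local[2]").setAppName("ForeachRDDSketch")
    val ssc = new StreamingContext(conf, Seconds(1))

    // Assumption: a queue of pre-built RDDs stands in for the socket source.
    val queue = mutable.Queue[RDD[String]](
      ssc.sparkContext.parallelize(Seq("a b a", "b c")))
    val lines = ssc.queueStream(queue)

    // Transformations only describe the computation; nothing runs yet.
    val wordCounts = lines.flatMap(_.split(" ")).map(w => (w, 1)).reduceByKey(_ + _)

    // The function body runs on the driver once per batch.
    wordCounts.foreachRDD { (rdd: RDD[(String, Int)], time: Time) =>
      val firstTen = rdd.take(10) // RDD action: forces this batch's computation
      println(s"Batch at $time: ${firstTen.mkString(", ")}")
    }

    ssc.start()
    ssc.awaitTerminationOrTimeout(5000) // let a few batches run, then stop
    ssc.stop(stopSparkContext = true, stopGracefully = false)
  }
}
```

If the `take` call were removed from the function body, the function would still be invoked for every batch, but no Spark job would be launched for the word counts, since no action would ever be reached.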
