Wu Yuxiong--Tiansheng Ziran Python Google Deep Learning Framework: Classic Convolutional Neural Network Models

# LeNet5_infernece.py: forward pass of a LeNet-5 style network for MNIST (TensorFlow 1.x API)
import tensorflow as tf

# Input/output sizes
INPUT_NODE = 784
OUTPUT_NODE = 10

IMAGE_SIZE = 28
NUM_CHANNELS = 1
NUM_LABELS = 10

# First convolutional layer: depth (number of filters) and filter size
CONV1_DEEP = 32
CONV1_SIZE = 5
# Second convolutional layer: depth and filter size
CONV2_DEEP = 64
CONV2_SIZE = 5
# Number of nodes in the fully connected layer
FC_SIZE = 512


def inference(input_tensor, train, regularizer):
    # Layer 1: 5x5 convolution, 32 filters, stride 1, SAME padding (28x28x1 -> 28x28x32)
    with tf.variable_scope('layer1-conv1'):
        conv1_weights = tf.get_variable(
            "weight", [CONV1_SIZE, CONV1_SIZE, NUM_CHANNELS, CONV1_DEEP],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv1_biases = tf.get_variable("bias", [CONV1_DEEP], initializer=tf.constant_initializer(0.0))
        conv1 = tf.nn.conv2d(input_tensor, conv1_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_biases))

    # Layer 2: 2x2 max pooling, stride 2 (28x28x32 -> 14x14x32)
    with tf.name_scope("layer2-pool1"):
        pool1 = tf.nn.max_pool(relu1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

    # Layer 3: 5x5 convolution, 64 filters, stride 1, SAME padding (14x14x32 -> 14x14x64)
    with tf.variable_scope("layer3-conv2"):
        conv2_weights = tf.get_variable(
            "weight", [CONV2_SIZE, CONV2_SIZE, CONV1_DEEP, CONV2_DEEP],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv2_biases = tf.get_variable("bias", [CONV2_DEEP], initializer=tf.constant_initializer(0.0))
        conv2 = tf.nn.conv2d(pool1, conv2_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_biases))

    # Layer 4: 2x2 max pooling, stride 2 (14x14x64 -> 7x7x64)
    with tf.name_scope("layer4-pool2"):
        pool2 = tf.nn.max_pool(relu2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

    # Flatten each 7x7x64 feature map into a vector of length nodes.
    # pool_shape[0] is the batch size, so the input must have a fixed batch dimension.
    pool_shape = pool2.get_shape().as_list()
    nodes = pool_shape[1] * pool_shape[2] * pool_shape[3]
    reshaped = tf.reshape(pool2, [pool_shape[0], nodes])

    # Layer 5: fully connected layer with FC_SIZE nodes
    with tf.variable_scope('layer5-fc1'):
        fc1_weights = tf.get_variable("weight", [nodes, FC_SIZE],
                                      initializer=tf.truncated_normal_initializer(stddev=0.1))
        # Only the fully connected weights are added to the L2 regularization loss
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc1_weights))
        fc1_biases = tf.get_variable("bias", [FC_SIZE], initializer=tf.constant_initializer(0.1))
        fc1 = tf.nn.relu(tf.matmul(reshaped, fc1_weights) + fc1_biases)
        # Dropout with keep probability 0.5, applied only when train is True
        if train:
            fc1 = tf.nn.dropout(fc1, 0.5)

    # Layer 6: output layer producing NUM_LABELS logits (softmax is applied in the loss)
    with tf.variable_scope('layer6-fc2'):
        fc2_weights = tf.get_variable("weight", [FC_SIZE, NUM_LABELS],
                                      initializer=tf.truncated_normal_initializer(stddev=0.1))
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc2_weights))
        fc2_biases = tf.get_variable("bias", [NUM_LABELS], initializer=tf.constant_initializer(0.1))
        logit = tf.matmul(fc1, fc2_weights) + fc2_biases

    return logit
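To see where `nodes` comes from, the following pure-Python sketch (no TensorFlow needed) traces the per-example tensor shape through each layer, assuming the rule that TensorFlow's `'SAME'` padding yields an output size of ceil(input / stride). It also counts the trainable parameters (weights plus biases) per layer; the pooling layers have none. The helper names `same_out` and `lenet5_shapes` are hypothetical, and their default arguments simply mirror the constants defined above.

```python
import math


def same_out(size, stride):
    # 'SAME' padding in TensorFlow: output size = ceil(input size / stride)
    return math.ceil(size / stride)


def lenet5_shapes(image_size=28, channels=1, conv1_deep=32, conv2_deep=64,
                  conv_size=5, fc_size=512, num_labels=10):
    """Trace per-example output shapes and count weights + biases per layer."""
    shapes = {}
    h = same_out(image_size, 1)          # layer1-conv1, stride 1
    shapes["layer1-conv1"] = (h, h, conv1_deep)
    h = same_out(h, 2)                   # layer2-pool1, stride 2
    shapes["layer2-pool1"] = (h, h, conv1_deep)
    h = same_out(h, 1)                   # layer3-conv2, stride 1
    shapes["layer3-conv2"] = (h, h, conv2_deep)
    h = same_out(h, 2)                   # layer4-pool2, stride 2
    shapes["layer4-pool2"] = (h, h, conv2_deep)
    nodes = h * h * conv2_deep           # length of the flattened vector
    shapes["nodes"] = nodes

    # Pooling layers have no trainable parameters, so only four layers appear here
    params = {
        "layer1-conv1": conv_size * conv_size * channels * conv1_deep + conv1_deep,
        "layer3-conv2": conv_size * conv_size * conv1_deep * conv2_deep + conv2_deep,
        "layer5-fc1": nodes * fc_size + fc_size,
        "layer6-fc2": fc_size * num_labels + num_labels,
    }
    return shapes, params


shapes, params = lenet5_shapes()
for name, shape in shapes.items():
    print(name, shape)
print("total parameters:", sum(params.values()))
```

With the default sizes, layer4-pool2 outputs 7x7x64, so `nodes` is 3136, and 1,606,144 of the 1,663,370 parameters sit in layer5-fc1.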

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import LeNet5_infernece

import os

import numpy as np BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.01

LEARNING_RATE_DECAY = 0.99

REGULARIZATION_RATE = 0.0001

TRAINING_STEPS = 6000

MOVING_AVERAGE_DECAY = 0.99 def train(mnist):

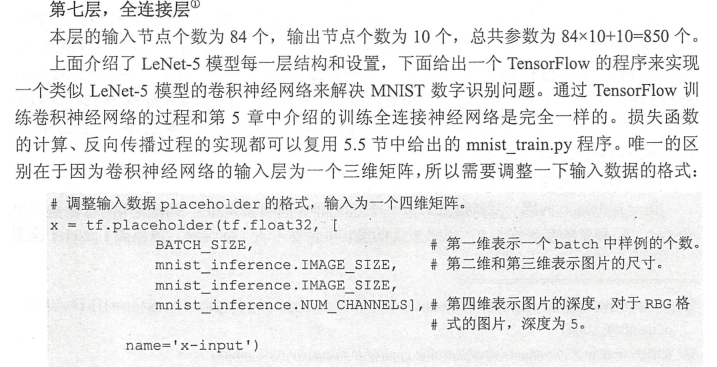

# 定义输出为4维矩阵的placeholder

x = tf.placeholder(tf.float32, [

BATCH_SIZE,

LeNet5_infernece.IMAGE_SIZE,

LeNet5_infernece.IMAGE_SIZE,

LeNet5_infernece.NUM_CHANNELS],

name='x-input')

y_ = tf.placeholder(tf.float32, [None, LeNet5_infernece.OUTPUT_NODE], name='y-input') regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)

y = LeNet5_infernece.inference(x,False,regularizer)

global_step = tf.Variable(0, trainable=False) # 定义损失函数、学习率、滑动平均操作以及训练过程。

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,

staircase=True) train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train') # 初始化TensorFlow持久化类。

saver = tf.train.Saver()

with tf.Session() as sess:

tf.global_variables_initializer().run()

for i in range(TRAINING_STEPS):

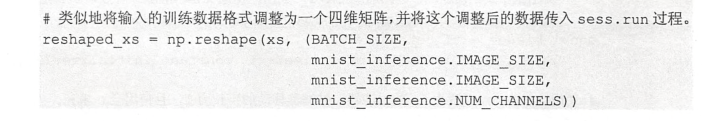

xs, ys = mnist.train.next_batch(BATCH_SIZE) reshaped_xs = np.reshape(xs, (

BATCH_SIZE,

LeNet5_infernece.IMAGE_SIZE,

LeNet5_infernece.IMAGE_SIZE,

LeNet5_infernece.NUM_CHANNELS))

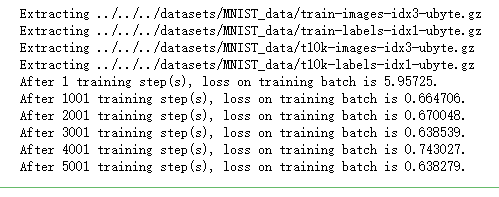

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: reshaped_xs, y_: ys}) if i % 1000 == 0:

print("After %d training step(s), loss on training batch is %g." % (step, loss_value)) def main(argv=None):

mnist = input_data.read_data_sets("../../../datasets/MNIST_data", one_hot=True)

train(mnist) if __name__ == '__main__':

main()
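The two schedules in `train()` can be traced by hand. The pure-Python sketch below reproduces the formulas documented for `tf.train.exponential_decay` (with `staircase=True`) and for `tf.train.ExponentialMovingAverage` when `num_updates` (here `global_step`) is passed. It assumes the default `input_data` split of 55,000 training images, so `mnist.train.num_examples / BATCH_SIZE` is 550 steps; the function names are hypothetical.

```python
import math


def decayed_learning_rate(step, base=0.01, decay=0.99, decay_steps=550, staircase=True):
    # exponential_decay: base * decay ** (step / decay_steps);
    # staircase=True floors the exponent, so the rate drops once every decay_steps steps
    exponent = step / decay_steps
    if staircase:
        exponent = math.floor(exponent)
    return base * decay ** exponent


def ema_decay(num_updates, decay=0.99):
    # With num_updates supplied, the effective decay is
    # min(decay, (1 + num_updates) / (10 + num_updates))
    return min(decay, (1 + num_updates) / (10 + num_updates))


def ema_update(shadow, value, decay):
    # shadow_variable = decay * shadow_variable + (1 - decay) * variable
    return decay * shadow + (1 - decay) * value


for step in (0, 549, 550, 5999):
    print(step, decayed_learning_rate(step), ema_decay(step))
```

The learning rate stays at 0.01 for the first 550 steps and then shrinks by a factor of 0.99 per 550 steps. The moving-average decay starts at 0.1 (step 0), so the shadow variables follow the weights closely early on, and the 0.99 cap takes over from step 890 onward.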
