Optimization Algorithms

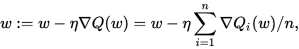

1. Stochastic Gradient Descent

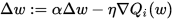

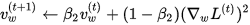

2. SGD With Momentum

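Stochastic gradient descent with momentum remembers the update $\Delta w$ at each iteration, and determines the next update as a linear combination of the gradient and the previous update:

$$\Delta w := \alpha\,\Delta w - \eta\,\nabla Q_i(w), \qquad w := w + \Delta w$$

Here $\eta$ is the learning rate, $\alpha$ is the momentum (decay) factor that weights the previous update, and $\nabla Q_i(w)$ is the gradient of the loss on the $i$-th sample (or mini-batch).

Unlike classical stochastic gradient descent, the momentum update tends to keep traveling in the same direction, which damps oscillations.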
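The momentum update described above can be sketched in a few lines of plain Python. This is only a minimal illustration, not a reference implementation: the function name `sgd_momentum`, the toy quadratic loss, and the hyperparameter values are assumptions made for the example, and a deterministic gradient stands in for the per-sample (stochastic) gradient.

```python
from typing import Callable, List

Vector = List[float]


def sgd_momentum(
    grad: Callable[[Vector], Vector],
    w0: Vector,
    lr: float = 0.05,
    momentum: float = 0.9,
    steps: int = 300,
) -> Vector:
    """Minimize a function from its gradient using momentum updates."""
    w = list(w0)
    delta = [0.0] * len(w)  # the remembered update, initially zero
    for _ in range(steps):
        g = grad(w)
        # Next update: linear combination of the previous update and the gradient.
        delta = [momentum * d - lr * gi for d, gi in zip(delta, g)]
        w = [wi + d for wi, d in zip(w, delta)]
    return w


def quadratic_grad(w: Vector) -> Vector:
    # Hypothetical toy loss f(w) = 0.5 * (w[0]**2 + 10 * w[1]**2);
    # a deterministic gradient is used here in place of a sampled one.
    return [w[0], 10.0 * w[1]]


w_momentum = sgd_momentum(quadratic_grad, [1.0, 1.0])
print(w_momentum)  # both coordinates end up close to 0
```

Setting `momentum=0.0` reduces the loop to plain gradient descent, which makes it easy to compare the two behaviors on the same toy problem.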

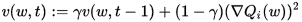

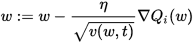

3. RMSProp

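RMSProp (Root Mean Square Propagation) is a method in which the learning rate is adapted for each parameter. The idea is to divide the learning rate for a weight by a running average of the magnitudes of recent gradients for that weight. First, the running average is computed in terms of the mean square:

$$v(w, t) := \gamma\, v(w, t-1) + (1 - \gamma)\,\bigl(\nabla Q_i(w)\bigr)^2$$

where $\gamma$ is the forgetting factor and $\nabla Q_i(w)$ is the gradient of the loss on the $i$-th sample (or mini-batch).

The parameters are then updated as:

$$w := w - \frac{\eta}{\sqrt{v(w, t)}}\,\nabla Q_i(w)$$

where $\eta$ is the learning rate.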

RMSProp has shown good adaptation of the learning rate across different applications. It can be seen as a generalization of Rprop, and it is capable of working with mini-batches as well, as opposed to only full batches.
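As a rough sketch of the idea of dividing the learning rate by a running average of recent gradient magnitudes, the following plain-Python loop keeps one running mean square per parameter. The function name `rmsprop`, the toy loss, and the hyperparameter values are assumptions for illustration; the small constant `eps` in the denominator is also an addition of this sketch, included only to avoid division by zero.

```python
import math
from typing import Callable, List

Vector = List[float]


def rmsprop(
    grad: Callable[[Vector], Vector],
    w0: Vector,
    lr: float = 0.01,
    gamma: float = 0.9,   # forgetting factor of the running average
    eps: float = 1e-8,    # assumption of this sketch: guards against division by zero
    steps: int = 1000,
) -> Vector:
    """Minimize a function from its gradient with per-parameter step scaling."""
    w = list(w0)
    v = [0.0] * len(w)  # running average of squared gradients, one per parameter
    for _ in range(steps):
        g = grad(w)
        v = [gamma * vi + (1.0 - gamma) * gi * gi for vi, gi in zip(v, g)]
        # Each parameter's step is divided by the root of its own running mean square.
        w = [wi - lr * gi / (math.sqrt(vi) + eps) for wi, vi, gi in zip(w, v, g)]
    return w


def quadratic_grad(w: Vector) -> Vector:
    # Hypothetical toy loss f(w) = 0.5 * (w[0]**2 + 10 * w[1]**2).
    return [w[0], 10.0 * w[1]]


w_rms = rmsprop(quadratic_grad, [1.0, 1.0])
print(w_rms)  # both coordinates settle within a few multiples of lr around 0
```

Because each gradient is normalized by its own recent magnitude, the steep and the shallow coordinate move at a similar pace here, even though their raw gradients differ by a factor of ten. With a constant learning rate the iterates hover near the minimum rather than converging exactly.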

4. The Adam Algorithm

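Adam (short for Adaptive Moment Estimation) is an update to the RMSProp optimizer. In this optimization algorithm, running averages of both the gradients and the second moments of the gradients are used. Given parameters $w^{(t)}$ and a loss function $L^{(t)}$, where $t$ indexes the current training iteration (starting at 0), Adam's parameter update is given by:

$$m_w^{(t+1)} := \beta_1\, m_w^{(t)} + (1 - \beta_1)\,\nabla_w L^{(t)}$$

$$v_w^{(t+1)} := \beta_2\, v_w^{(t)} + (1 - \beta_2)\,\bigl(\nabla_w L^{(t)}\bigr)^2$$

$$\hat{m}_w = \frac{m_w^{(t+1)}}{1 - \beta_1^{\,t+1}}, \qquad \hat{v}_w = \frac{v_w^{(t+1)}}{1 - \beta_2^{\,t+1}}$$

$$w^{(t+1)} := w^{(t)} - \eta\,\frac{\hat{m}_w}{\sqrt{\hat{v}_w} + \epsilon}$$

where $\epsilon$ is a small scalar (e.g. $10^{-8}$) used to prevent division by zero, $\eta$ is the learning rate, and $\beta_1$ (e.g. 0.9) and $\beta_2$ (e.g. 0.999) are the forgetting factors for the gradients and the second moments of the gradients, respectively. Squaring and square-rooting are done element-wise.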
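To make the use of running averages of both the gradients and their squares concrete, here is a minimal plain-Python sketch of an Adam-style loop with bias correction. The function name `adam`, the toy loss, and the learning rate of 0.05 are assumptions chosen for this example; the values 0.9, 0.999, and 1e-8 are commonly used defaults for the two forgetting factors and the stabilizing constant.

```python
import math
from typing import Callable, List

Vector = List[float]


def adam(
    grad: Callable[[Vector], Vector],
    w0: Vector,
    lr: float = 0.05,      # illustrative value for this toy problem
    beta1: float = 0.9,    # forgetting factor for the gradient average
    beta2: float = 0.999,  # forgetting factor for the squared-gradient average
    eps: float = 1e-8,     # prevents division by zero
    steps: int = 1000,
) -> Vector:
    """Minimize a function from its gradient with an Adam-style update."""
    w = list(w0)
    m = [0.0] * len(w)  # running average of gradients
    v = [0.0] * len(w)  # running average of squared gradients
    for t in range(1, steps + 1):  # t counts completed updates, starting at 1
        g = grad(w)
        m = [beta1 * mi + (1.0 - beta1) * gi for mi, gi in zip(m, g)]
        v = [beta2 * vi + (1.0 - beta2) * gi * gi for vi, gi in zip(v, g)]
        # Bias correction compensates for the zero initialization of m and v.
        m_hat = [mi / (1.0 - beta1 ** t) for mi in m]
        v_hat = [vi / (1.0 - beta2 ** t) for vi in v]
        w = [wi - lr * mh / (math.sqrt(vh) + eps)
             for wi, mh, vh in zip(w, m_hat, v_hat)]
    return w


def quadratic_grad(w: Vector) -> Vector:
    # Hypothetical toy loss f(w) = 0.5 * (w[0]**2 + 10 * w[1]**2).
    return [w[0], 10.0 * w[1]]


w_adam = adam(quadratic_grad, [1.0, 1.0])
print(w_adam)  # both coordinates end up close to 0
```

On the very first iteration the bias-corrected ratio is roughly the sign of the gradient, so the initial step has magnitude close to `lr` regardless of the gradient's scale.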

Reference: Wikipedia.
