谣言检测(PLAN)——《Interpretable Rumor Detection in Microblogs by Attending to User Interactions》

论文信息

论文标题:Interpretable Rumor Detection in Microblogs by Attending to User Interactions

论文作者:Ling Min Serena Khoo, Hai Leong Chieu, Zhong Qian, Jing Jiang

论文来源:2020,

论文地址:download

论文代码:download

Background

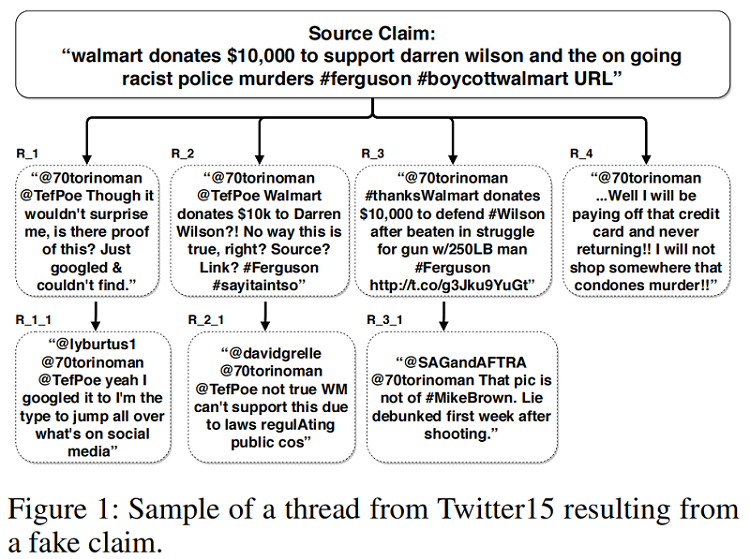

基于群体智能的谣言检测:Figure 1

本文观点:基于树结构的谣言检测模型,往往忽略了 Branch 之间的交互。

1 Introduction

Motivation:a user posting a reply might be replying to the entire thread rather than to a specific user.

Mehtod:We propose a post-level attention model (PLAN) to model long distance interactions between tweets with the multi-head attention mechanism in a transformer network.

We investigated variants of this model:

- a structure aware self-attention model (StA-PLAN) that incorporates tree structure information in the transformer network;

- a hierarchical token and post-level attention model (StA-HiTPLAN) that learns a sentence representation with token-level self-attention.

- We utilize the attention weights from our model to provide both token-level and post-level explanations behind the model’s prediction. To the best of our knowledge, we are the first paper that has done this.

- We compare against previous works on two data sets - PHEME 5 events and Twitter15 and Twitter16 . Previous works only evaluated on one of the two data sets.

- Our proposed models could outperform current state-ofthe-art models for both data sets.

目前谣言检测的类型:

(i) the content of the claim.

(ii) the bias and social network of the source of the claim.

(iii) fact checking with trustworthy sources.

(iv) community response to the claims.

2 Approaches

2.1 Recursive Neural Networks

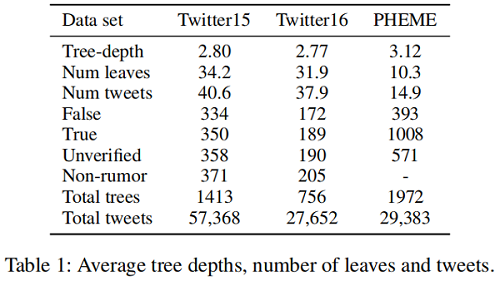

观点:谣言传播树通常是浅层的,一个用户通常只回复一次 source post ,而后进行早期对话。

|

Dataset |

Twitter15 |

Twitter16 |

PHEME |

|

Tree-depth |

2.80 |

2.77 |

3.12 |

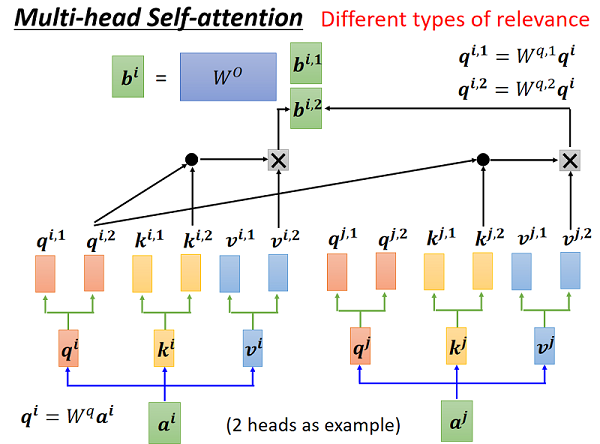

2.2 Transformer Networks

Transformer 中的注意机制使有效的远程依赖关系建模成为可能。

Transformer 中的注意力机制:

$\alpha_{i j}=\operatorname{Compatibility}\left(q_{i}, k_{j}\right)=\operatorname{softmax}\left(\frac{q_{i} k_{j}^{T}}{\sqrt{d_{k}}}\right)\quad\quad\quad(1)$

$z_{i}=\sum_{j=1}^{n} \alpha_{i j} v_{j}\quad\quad\quad(2)$

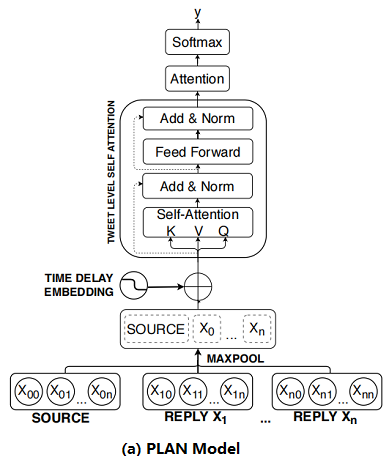

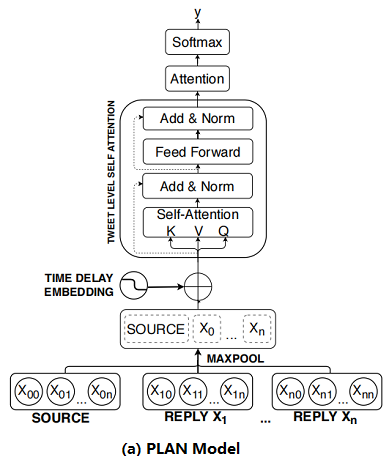

2.3 Post-Level Attention Network (PLAN)

框架如下:

首先:将 Post 按时间顺序排列;

其次:对每个 Post 使用 Max pool 得到 sentence embedding ;

然后:将 sentence embedding $X^{\prime}=\left(x_{1}^{\prime}, x_{2}^{\prime}, \ldots, x_{n}^{\prime}\right)$ 通过 $s$ 个多头注意力模块 MHA 得到 $U=\left(u_{1}, u_{2}, \ldots, u_{n}\right)$;

最后:通过 attention 机制聚合这些输出并使用全连接层进行预测 :

$\begin{array}{l}\alpha_{k}=\operatorname{softmax}\left(\gamma^{T} u_{k}\right) &\quad\quad\quad(3)\\v=\sum\limits _{k=0}^{m} \alpha_{k} u_{k} &\quad\quad\quad(4)\\p=\operatorname{softmax}\left(W_{p}^{T} v+b_{p}\right) &\quad\quad\quad(5)\end{array}$

where $\gamma \in \mathbb{R}^{d_{\text {model }}}, \alpha_{k} \in \mathbb{R}$,$W_{p} \in \mathbb{R}^{d_{\text {model }}, K}$,$b \in \mathbb{R}^{d_{\text {model }}}$,$u_{k}$ is the output after passing through $s$ number of MHA layers,$v$ and $p$ are the representation vector and prediction vector for $X$

回顾:

2.4 Structure Aware Post-Level Attention Network (StA-PLAN)

上述模型的问题:线性结构组织的推文容易失去结构信息。

为了结合显示树结构的优势和自注意力机制,本文扩展了 PLAN 模型,来包含结构信息。

$\begin{array}{l}\alpha_{i j}=\operatorname{softmax}\left(\frac{q_{i} k_{j}^{T}+a_{i j}^{K}}{\sqrt{d_{k}}}\right)\\z_{i}=\sum\limits _{j=1}^{n} \alpha_{i j}\left(v_{j}+a_{i j}^{V}\right)\end{array}$

其中, $a_{i j}^{V}$ 和 $a_{i j}^{K}$ 是代表上述五种结构关系(i.e. parent, child, before, after and self) 的向量。

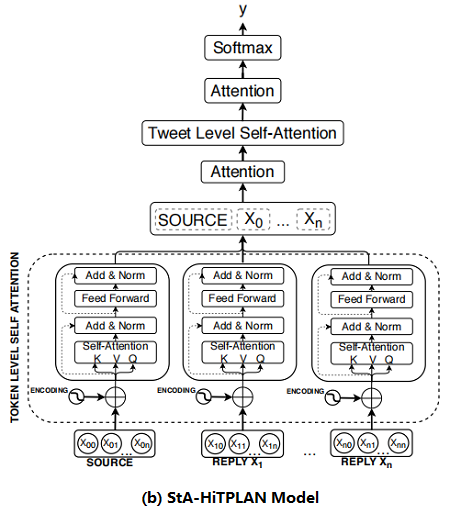

2.5 Structure Aware Hierarchical Token and Post-Level Attention Network (StA-HiTPLAN)

本文的PLAN 模型使用 max-pooling 来得到每条推文的句子表示,然而比较理想的方法是允许模型学习单词向量的重要性。因此,本文提出了一个层次注意模型—— attention at a token-level then at a post-level。层次结构模型的概述如 Figure 2b 所示。

2.6 Time Delay Embedding

source post 创建的时候,reply 一般是抱持怀疑的状态,而当 source post 发布了一段时间后,reply 有着较高的趋势显示 post 是虚假的。因此,本文研究了 time delay information 对上述三种模型的影响。

To include time delay information for each tweet, we bin the tweets based on their latency from the time the source tweet was created. We set the total number of time bins to be 100 and each bin represents a 10 minutes interval. Tweets with latency of more than 1,000 minutes would fall into the last time bin. We used the positional encoding formula introduced in the transformer network to encode each time bin. The time delay embedding would be added to the sentence embedding of tweet. The time delay embedding, TDE, for each tweet is:

$\begin{array}{l}\mathrm{TDE}_{\text {pos }, 2 i} &=&\sin \frac{\text { pos }}{10000^{2 i / d_{\text {model }}}} \\\mathrm{TDE}_{\text {pos }, 2 i+1} &=&\cos \frac{\text { pos }}{10000^{2 i / d_{\text {model }}}}\end{array}$

where pos represents the time bin each tweet fall into and $p o s \in[0,100)$, $i$ refers to the dimension and $d_{\text {model }}$ refers to the total number of dimensions of the model.

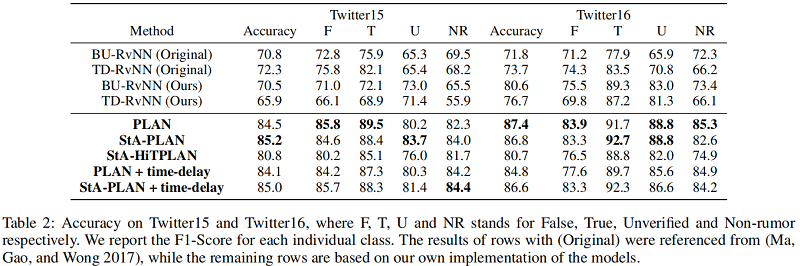

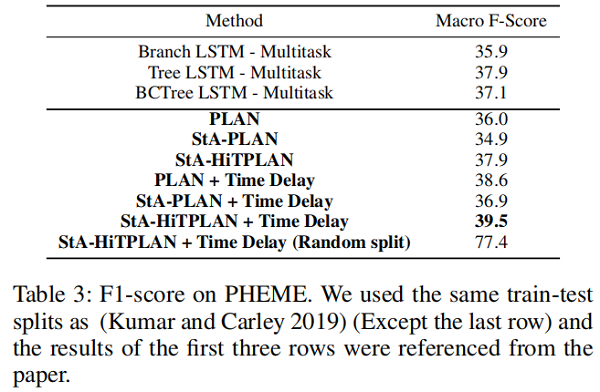

3 Experiments and Results

dataset

Result

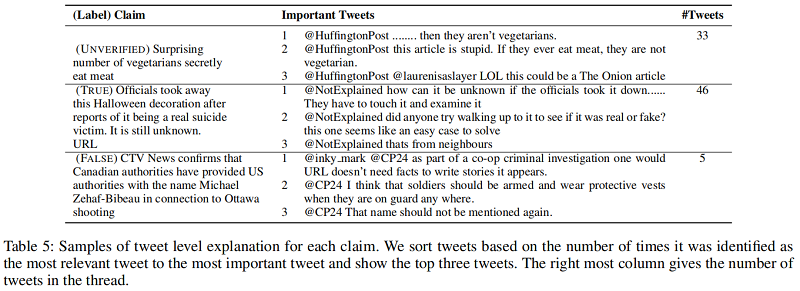

Post-Level Explanations

首先通过最后的 attention 层获得最重要的推文 $tweet_{impt}$ ,然后从第 $i$ 个MHA层获得该层的与 $tweet_{impt}$ 最相关的推文 $tweet _{rel,i}$ ,每篇推文可能被识别成最相关的推文多次,最后按照 被识别的次数排序,取前三名作为源推文的解释。举例如下:

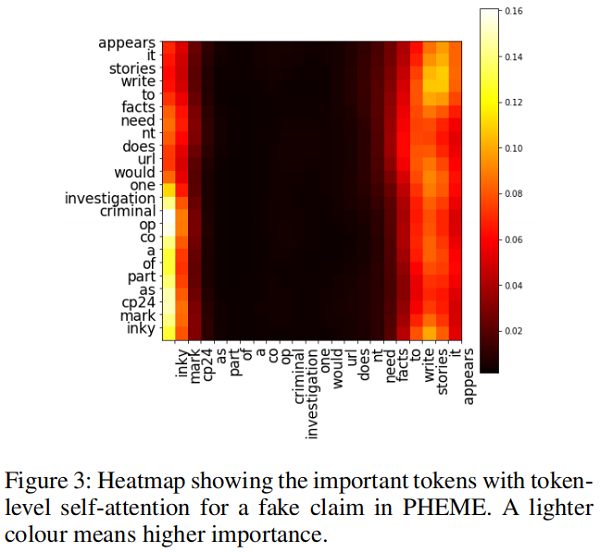

Token-Level Explanation

可以使用 token-level self-attention 的自注意力权重来进行 token-level 的解释。比如评论 “@inky mark @CP24 as part of a co-op criminal investigation one would URL doesn’t need facts to write stories it appears.”中短语“facts to write stories it appears”表达了对源推文的质疑,下图的自注意力权重图可以看出大量权重集中在这一部分,这说明这个短语就可以作为一个解释:

谣言检测(PLAN)——《Interpretable Rumor Detection in Microblogs by Attending to User Interactions》的更多相关文章

- 谣言检测——(PSA)《Probing Spurious Correlations in Popular Event-Based Rumor Detection Benchmarks》

论文信息 论文标题:Probing Spurious Correlations in Popular Event-Based Rumor Detection Benchmarks论文作者:Jiayin ...

- 谣言检测——《MFAN: Multi-modal Feature-enhanced Attention Networks for Rumor Detection》

论文信息 论文标题:MFAN: Multi-modal Feature-enhanced Attention Networks for Rumor Detection论文作者:Jiaqi Zheng, ...

- 谣言检测(GACL)《Rumor Detection on Social Media with Graph Adversarial Contrastive Learning》

论文信息 论文标题:Rumor Detection on Social Media with Graph AdversarialContrastive Learning论文作者:Tiening Sun ...

- 谣言检测(ClaHi-GAT)《Rumor Detection on Twitter with Claim-Guided Hierarchical Graph Attention Networks》

论文信息 论文标题:Rumor Detection on Twitter with Claim-Guided Hierarchical Graph Attention Networks论文作者:Erx ...

- 谣言检测(RDEA)《Rumor Detection on Social Media with Event Augmentations》

论文信息 论文标题:Rumor Detection on Social Media with Event Augmentations论文作者:Zhenyu He, Ce Li, Fan Zhou, Y ...

- 谣言检测()《Data Fusion Oriented Graph Convolution Network Model for Rumor Detection》

论文信息 论文标题:Data Fusion Oriented Graph Convolution Network Model for Rumor Detection论文作者:Erxue Min, Yu ...

- 谣言检测()《Rumor Detection with Self-supervised Learning on Texts and Social Graph》

论文信息 论文标题:Rumor Detection with Self-supervised Learning on Texts and Social Graph论文作者:Yuan Gao, Xian ...

- 谣言检测(PSIN)——《Divide-and-Conquer: Post-User Interaction Network for Fake News Detection on Social Media》

论文信息 论文标题:Divide-and-Conquer: Post-User Interaction Network for Fake News Detection on Social Media论 ...

- 论文解读(RvNN)《Rumor Detection on Twitter with Tree-structured Recursive Neural Networks》

论文信息 论文标题:Rumor Detection on Twitter with Tree-structured Recursive Neural Networks论文作者:Jing Ma, Wei ...

随机推荐

- JUC源码学习笔记3——AQS等待队列和CyclicBarrier,BlockingQueue

一丶Condition 1.概述 任何一个java对象都拥有一组定义在Object中的监视器方法--wait(),wait(long timeout),notify(),和notifyAll()方法, ...

- for_in循环

for-in循环也可以简单称为for循环 in表达从(字符串,序列等)中依次取值,又称为遍历(全部都要取到) for-in遍历的对象必须是可迭代对象 目前可以简单认为只有字符串和序列是可迭代对象 它是 ...

- 基于二进制安装Cloudera Manager集群

一.环境准备 参考链接:https://www.cnblogs.com/zhangzhide/p/11108472.html 二.安装jdk(三台主机都要做) 下载jdk安装包并解压:tar xvf ...

- 1.2 Hadoop快速入门

1.2 Hadoop快速入门 1.Hadoop简介 Hadoop是一个开源的分布式计算平台. 提供功能:利用服务器集群,根据用户定义的业务逻辑,对海量数据的存储(HDFS)和分析计算(MapReduc ...

- Luogu1880 [NOI1995]石子合并 (区间DP)

一个1A主席树的男人,沦落到褪水DP举步维艰 #include <iostream> #include <cstdio> #include <cstring> #i ...

- HCNP Routing&Switching之端口安全

前文我们了解了二层MAC安全相关话题和配置,回顾请参考https://www.cnblogs.com/qiuhom-1874/p/16618201.html:今天我们来聊一聊mac安全的综合解决方案端 ...

- 给ShardingSphere提了个PR,不知道是不是嫌弃我

说来惭愧,干了 10 来年程序员,还没有给开源做过任何贡献,以前只知道嘎嘎写,出了问题嘎嘎改,从来没想过提个 PR 去修复他,最近碰到个问题,发现挺简单的,就随手提了个 PR 过去. 问题 问题挺简单 ...

- Rsync数据备份工具

Rsync数据备份工具 1.Rsync基本概述 rsync是一款开源的备份工具,可以在不同主机之间进行同步(windows和Linux之间 Mac和 Linux Linux和Linux),可实现全量备 ...

- 如何在 C# 程序中注入恶意 DLL?

一:背景 前段时间在训练营上课的时候就有朋友提到一个问题,为什么 Windbg 附加到 C# 程序后,程序就处于中断状态了?它到底是如何实现的? 其实简而言之就是线程的远程注入,这一篇就展开说一下. ...

- [双重 for 循环]打印一个倒三角形

[双重 for 循环]打印一个倒三角形 核心算法 里层循环:j = i; j <= 10; j++ 当i=1时,j=1 , j<=10,j++,打印10个星星 当i=2时,j=2 , j& ...