Kafka Revisited (5): Kafka's Consumer Programming Model

Kafka offers two consumption models:

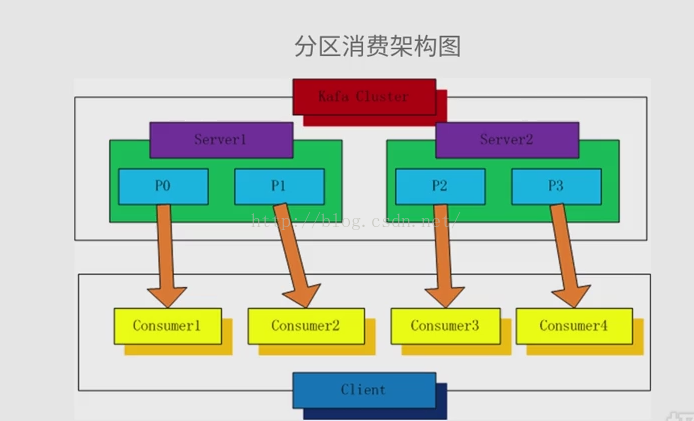

1. Partition consumption model

2. Group consumption model

I. Partition consumption model
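As a rough illustration of the partition consumption model, the sketch below uses plain Java collections rather than any Kafka API: a consumer is bound to a single partition and keeps track of its own offset, so it can resume from wherever it last stopped. The class `PartitionReader` and its methods are hypothetical names made up for this example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical in-memory model; not part of any Kafka client library.
class PartitionReader {
    private final List<String> partitionLog; // messages of ONE partition, in offset order
    private long nextOffset;                 // offset tracked by the consumer itself

    PartitionReader(List<String> partitionLog, long startOffset) {
        this.partitionLog = partitionLog;
        this.nextOffset = startOffset;
    }

    // Returns up to maxMessages messages starting at the tracked offset and advances it.
    List<String> poll(int maxMessages) {
        List<String> batch = new ArrayList<>();
        while (batch.size() < maxMessages && nextOffset < partitionLog.size()) {
            batch.add(partitionLog.get((int) nextOffset));
            nextOffset++;
        }
        return batch;
    }

    // Offset of the next message this reader would return.
    long position() {
        return nextOffset;
    }

    public static void main(String[] args) {
        List<String> partition0 = Arrays.asList("m0", "m1", "m2", "m3", "m4");
        PartitionReader reader = new PartitionReader(partition0, 2); // resume from offset 2
        System.out.println(reader.poll(2));    // [m2, m3]
        System.out.println(reader.position()); // 4
    }
}
```

The point of the sketch is that the caller, not a coordinator, decides which partition to read and where to start; persisting `position()` somewhere durable would be the caller's job as well.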

II. Group consumption model
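In the group consumption model, consumers that share a `group.id` divide the topic's partitions among themselves, so that each partition is read by only one consumer in the group. The sketch below shows one simple way such a split could be computed, using contiguous ranges. It is a simplified illustration in plain Java, not Kafka's actual assignment code; `GroupAssignment` and `assign` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper; the real assignment logic lives inside the Kafka client.
class GroupAssignment {
    // Splits partitions 0..numPartitions-1 into contiguous ranges, one per consumer.
    // The first (numPartitions % consumers.size()) consumers receive one extra partition.
    static Map<String, List<Integer>> assign(List<String> consumers, int numPartitions) {
        if (consumers.isEmpty()) {
            throw new IllegalArgumentException("at least one consumer is required");
        }
        Map<String, List<Integer>> result = new LinkedHashMap<>();
        int base = numPartitions / consumers.size();
        int extra = numPartitions % consumers.size();
        int next = 0;
        for (int i = 0; i < consumers.size(); i++) {
            int count = base + (i < extra ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int j = 0; j < count; j++) {
                owned.add(next++);
            }
            result.put(consumers.get(i), owned);
        }
        return result;
    }

    public static void main(String[] args) {
        // Two consumers in the same group sharing a five-partition topic
        System.out.println(assign(Arrays.asList("c1", "c2"), 5)); // {c1=[0, 1, 2], c2=[3, 4]}
    }
}
```

Note that when there are more consumers than partitions, some consumers in this sketch end up with an empty list and would sit idle.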

Producer:

    package cn.outofmemory.kafka;

    import java.util.Properties;

    import kafka.javaapi.producer.Producer;
    import kafka.producer.KeyedMessage;
    import kafka.producer.ProducerConfig;

    /**
     * Sends keyed string messages to TEST-TOPIC.
     */
    public class KafkaProducer {

        public final static String TOPIC = "TEST-TOPIC";

        private final Producer<String, String> producer;

        private KafkaProducer() {
            Properties props = new Properties();
            // Kafka broker address list (host:port)
            props.put("metadata.broker.list", "192.168.193.148:9092");
            // Serializer class for message values
            props.put("serializer.class", "kafka.serializer.StringEncoder");
            // Serializer class for message keys
            props.put("key.serializer.class", "kafka.serializer.StringEncoder");
            // request.required.acks:
            //  0: the producer never waits for an acknowledgement from the broker
            //     (the same behavior as 0.7). This option provides the lowest latency
            //     but the weakest durability guarantees (some data will be lost when
            //     a server fails).
            //  1: the producer gets an acknowledgement after the leader replica has
            //     received the data. This option provides better durability, as the
            //     client waits until the server acknowledges the request as successful
            //     (only messages that were written to the now-dead leader but not yet
            //     replicated will be lost).
            // -1: the producer gets an acknowledgement after all in-sync replicas have
            //     received the data. This option provides the best durability: no
            //     messages will be lost as long as at least one in-sync replica remains.
            props.put("request.required.acks", "-1");
            producer = new Producer<String, String>(new ProducerConfig(props));
        }

        void produce() {
            int messageNo = 1000;
            final int COUNT = 10000;
            while (messageNo < COUNT) {
                String key = String.valueOf(messageNo);
                String data = "hello kafka message " + key;
                producer.send(new KeyedMessage<String, String>(TOPIC, key, data));
                System.out.println(data);
                messageNo++;
            }
            // Release the producer's connections once everything has been sent
            producer.close();
        }

        public static void main(String[] args) {
            new KafkaProducer().produce();
        }
    }
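To make the three `request.required.acks` values described in the producer comments a little more concrete, here is a minimal sketch in plain Java of when a send would count as acknowledged under each setting. It models only the rule stated in those comments; `AckPolicy`, `acknowledged`, and the parameters are hypothetical names, and real brokers handle timeouts and failures that are ignored here.

```java
// Hypothetical model of the acknowledgement rule; not part of any Kafka library.
class AckPolicy {
    // acks          : 0, 1, or -1, as in request.required.acks
    // leaderHasData : whether the leader replica has received the message
    // isrWithData   : number of in-sync replicas (leader included) that have the message
    // isrSize       : current number of in-sync replicas (leader included)
    static boolean acknowledged(int acks, boolean leaderHasData, int isrWithData, int isrSize) {
        switch (acks) {
            case 0:
                return true;                   // the producer never waits
            case 1:
                return leaderHasData;          // the leader alone is enough
            case -1:
                return isrWithData == isrSize; // every in-sync replica must have it
            default:
                throw new IllegalArgumentException("unsupported acks value: " + acks);
        }
    }

    public static void main(String[] args) {
        // The leader has the message, but only 1 of 3 in-sync replicas has it so far
        System.out.println(acknowledged(0, true, 1, 3));  // true
        System.out.println(acknowledged(1, true, 1, 3));  // true
        System.out.println(acknowledged(-1, true, 1, 3)); // false
    }
}
```

The trade-off is the one the comments spell out: the later the acknowledgement arrives, the higher the latency and the stronger the durability guarantee.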

Consumer:

    package cn.outofmemory.kafka;

    import java.util.HashMap;
    import java.util.List;
    import java.util.Map;
    import java.util.Properties;

    import kafka.consumer.ConsumerConfig;
    import kafka.consumer.ConsumerIterator;
    import kafka.consumer.KafkaStream;
    import kafka.javaapi.consumer.ConsumerConnector;
    import kafka.serializer.StringDecoder;
    import kafka.utils.VerifiableProperties;

    public class KafkaConsumer {

        private final ConsumerConnector consumer;

        private KafkaConsumer() {
            Properties props = new Properties();
            // ZooKeeper connection string
            props.put("zookeeper.connect", "192.168.193.148:2181");
            // group.id names the consumer group this consumer belongs to
            props.put("group.id", "jd-group");
            // ZooKeeper session timeout
            props.put("zookeeper.session.timeout.ms", "4000");
            props.put("zookeeper.sync.time.ms", "200");
            props.put("auto.commit.interval.ms", "1000");
            props.put("auto.offset.reset", "smallest");
            // Serializer class (not read by the consumer itself; decoding is done by
            // the StringDecoder instances passed to createMessageStreams below)
            props.put("serializer.class", "kafka.serializer.StringEncoder");

            ConsumerConfig config = new ConsumerConfig(props);
            consumer = kafka.consumer.Consumer.createJavaConsumerConnector(config);
        }

        void consume() {
            // Request one stream (one consuming thread) for the topic
            Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
            topicCountMap.put(KafkaProducer.TOPIC, 1);

            StringDecoder keyDecoder = new StringDecoder(new VerifiableProperties());
            StringDecoder valueDecoder = new StringDecoder(new VerifiableProperties());

            // Message streams obtained for each topic
            Map<String, List<KafkaStream<String, String>>> consumerMap =
                    consumer.createMessageStreams(topicCountMap, keyDecoder, valueDecoder);
            KafkaStream<String, String> stream = consumerMap.get(KafkaProducer.TOPIC).get(0);
            ConsumerIterator<String, String> it = stream.iterator();

            // Print each received message
            while (it.hasNext()) {
                System.out.println(it.next().message());
            }
        }

        public static void main(String[] args) {
            new KafkaConsumer().consume();
        }
    }
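The consumer above sets `auto.offset.reset` to `smallest`. As a hedged sketch of what that setting governs, the plain-Java helper below picks a starting offset: a valid committed offset wins, and otherwise the policy decides between the oldest and the newest end of the log. `OffsetReset` and `startOffset` are hypothetical names, and the logic is a simplification for illustration rather than the client's real code.

```java
// Hypothetical helper illustrating the auto.offset.reset decision; not Kafka code.
class OffsetReset {
    // committed : last committed offset for the group, or null if none exists
    // smallest  : oldest offset still available in the partition
    // largest   : offset that the next newly written message will receive
    // policy    : "smallest" or "largest", as in auto.offset.reset
    static long startOffset(Long committed, long smallest, long largest, String policy) {
        boolean usable = committed != null && committed >= smallest && committed <= largest;
        if (usable) {
            return committed;   // resume where the group left off
        }
        if ("smallest".equals(policy)) {
            return smallest;    // replay from the oldest available message
        }
        if ("largest".equals(policy)) {
            return largest;     // skip history and read only new messages
        }
        throw new IllegalStateException("no usable offset and no valid reset policy: " + policy);
    }

    public static void main(String[] args) {
        // A brand-new group has no committed offset, so the policy applies
        System.out.println(startOffset(null, 100L, 500L, "smallest")); // 100
        System.out.println(startOffset(null, 100L, 500L, "largest"));  // 500
        // An existing group resumes from its committed offset
        System.out.println(startOffset(250L, 100L, 500L, "smallest")); // 250
    }
}
```

With `smallest`, a new group such as `jd-group` would start from the oldest messages still retained for the topic instead of waiting for new ones.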

That wraps up the Kafka review for now; next up is a review of Spring.
