Model Representation and Cost Function

Model Representation

To establish notation for future use, we’ll use $x^{(i)}$ to denote the “input” variables (living area in this example), also called input features, and $y^{(i)}$ to denote the “output” or target variable that we are trying to predict (price). A pair $(x^{(i)}, y^{(i)})$ is called a training example, and the dataset that we’ll be using to learn, a list of $m$ training examples $(x^{(i)}, y^{(i)})$, $i = 1, \dots, m$, is called a training set. Note that the superscript “(i)” in the notation is simply an index into the training set, and has nothing to do with exponentiation. We will also use X to denote the space of input values, and Y to denote the space of output values. In this example, X = Y = ℝ.

To describe the supervised learning problem slightly more formally, our goal is, given a training set, to learn a function h : X → Y so that h(x) is a “good” predictor for the corresponding value of y. For historical reasons, this function h is called a hypothesis. Seen pictorially, the process is therefore like this:

When the target variable that we’re trying to predict is continuous, as in our housing example, we call the learning problem a regression problem. When y can take on only a small number of discrete values (for example, if we wanted to predict from the living area whether a dwelling is a house or an apartment), we call it a classification problem.
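The notation above can be made concrete with a small sketch. The training set values below are hypothetical, and the linear form h(x) = θ0 + θ1·x is an assumption here (it is the straight-line hypothesis used later in this section); the parameter values are picked by hand, not learned.

```python
# Hypothetical training set: (living area, price) pairs.
# The numbers are illustrative only and do not come from the text.
training_set = [(2104.0, 400.0), (1600.0, 330.0), (2400.0, 369.0), (1416.0, 232.0)]

m = len(training_set)  # number of training examples


def make_hypothesis(theta0, theta1):
    """Return h(x) = theta0 + theta1 * x, a function from inputs X to outputs Y."""
    def h(x):
        return theta0 + theta1 * x
    return h


# Arbitrary parameters chosen by hand (an assumption, not a fitted model).
h = make_hypothesis(50.0, 0.1)

# The index i runs over the training set; it is not an exponent.
for i, (x_i, y_i) in enumerate(training_set, start=1):
    print(f"i={i}: x={x_i}, y={y_i}, h(x)={h(x_i):.1f}")
```

Because the prices here are continuous, this is a regression setting; learning would mean choosing the parameters so that h(x) is close to y on the training set.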

Cost Function

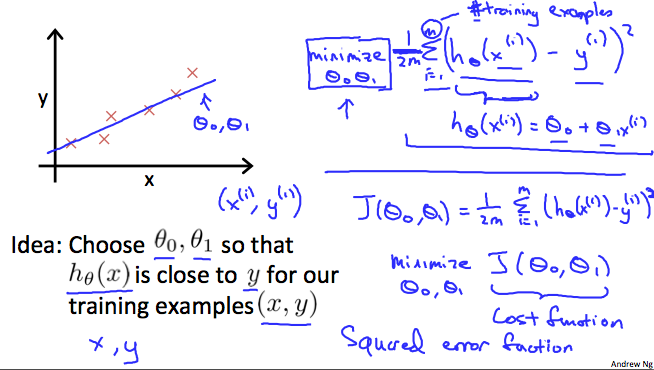

We can measure the accuracy of our hypothesis function by using a cost function. This takes an average (actually, half the average) of the squared differences between the hypothesis's predictions on the inputs x and the actual outputs y.

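$$J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( \hat{y}^{(i)} - y^{(i)} \right)^2 = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$$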

To break it apart, it is $\frac{1}{2}\bar{x}$, where $\bar{x}$ is the mean of the squares of $h_\theta(x^{(i)}) - y^{(i)}$, the difference between the predicted value and the actual value.
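As a minimal sketch of this breakdown, the function below computes the mean of the squared errors and then halves it. It assumes the linear hypothesis h(x) = θ0 + θ1·x; the function name `cost` and the toy data are hypothetical and not part of the original text.

```python
def cost(theta0, theta1, xs, ys):
    """Squared error cost: half the mean of (h(x) - y)^2 over the training set."""
    m = len(xs)
    # Squared difference between prediction and actual value for each example.
    squared_errors = [(theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)]
    mean_squared_error = sum(squared_errors) / m
    return 0.5 * mean_squared_error


# Hypothetical toy data, chosen only to make the arithmetic easy to follow.
xs = [1.0, 2.0, 4.0]
ys = [2.0, 3.0, 7.0]

# With theta0 = 0 and theta1 = 1 the errors are -1, -1, -3,
# so the squared errors sum to 11 and J = 11 / (2 * 3).
print(cost(0.0, 1.0, xs, ys))
```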

This function is otherwise called the "squared error function" or "mean squared error". The mean is halved ($\frac{1}{2}$) as a convenience for computing gradient descent: differentiating the squared term produces a factor of 2, which cancels the $\frac{1}{2}$. The following image summarizes what the cost function does:

Cost Function - Intuition I

If we try to think of it in visual terms, our training data set is scattered on the x-y plane. We are trying to make a straight line (defined by hθ(x)) which passes through these scattered data points.

Our objective is to get the best possible line. The best possible line is the one for which the average squared vertical distance of the scattered points from the line is smallest. Ideally, the line should pass through all the points of our training data set. In such a case, the value of J(θ0,θ1) will be 0. The following example shows the ideal situation where we have a cost function of 0.

When θ1=1, we get a slope of 1, and the line goes through every single data point in our training set. Conversely, when θ1=0.5, the vertical distances from our fit to the data points increase.

This increases our cost function to 0.58. Plotting the cost for several other values of θ1 yields the following graph:

Thus, our goal is to minimize the cost function. In this case, θ1=1 is our global minimum.
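The numbers in this example can be reproduced with a short sketch. The training set is not listed in the text, so the three points (1, 1), (2, 2), (3, 3) below are an assumption; they are consistent with a perfect fit at θ1=1 and a cost of about 0.58 at θ1=0.5. The intercept θ0 is fixed at 0, and the name `cost_theta1` is hypothetical.

```python
# Assumed training set (not given in the text): points lying exactly on y = x.
xs = [1.0, 2.0, 3.0]
ys = [1.0, 2.0, 3.0]


def cost_theta1(theta1):
    """Cost J(theta1) for h(x) = theta1 * x, with theta0 fixed at 0."""
    m = len(xs)
    return sum((theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


# Evaluate the cost at a few candidate slopes.
candidates = [0.0, 0.5, 1.0, 1.5, 2.0]
costs = {theta1: cost_theta1(theta1) for theta1 in candidates}

for theta1 in candidates:
    print(f"theta1={theta1:.1f}  J={costs[theta1]:.2f}")

# The candidate with the smallest cost.
best_theta1 = min(costs, key=costs.get)
print("best theta1:", best_theta1)
```

On this assumed data the cost is 0 at θ1=1 and rises symmetrically on either side, which is the bowl shape the plotted points trace out.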

Cost Function - Intuition II

A contour plot is a graph that contains many contour lines. A contour line of a two-variable function is a curve along which the function has the same value at every point. An example of such a graph is the one to the right below.

Taking any color and going along the 'circle', one would expect to get the same value of the cost function. For example, the three green points found on the green line above have the same value of J(θ0,θ1), which is why they lie on the same contour line. The circled x displays the value of the cost function for the graph on the left when θ0 = 800 and θ1 = -0.15. Taking another h(x) and plotting its contour plot, one gets the following graphs:

When θ0 = 360 and θ1 = 0, the point in the contour plot moves closer to the center, which reduces the cost. Now giving our hypothesis function a slightly positive slope results in a better fit of the data.

The graph above minimizes the cost function as much as possible; the resulting values of θ1 and θ0 are around 0.12 and 250, respectively. Plotting those values on our graph to the right puts our point in the center of the innermost 'circle'.
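The idea behind the contour plot can be sketched without any plotting: evaluate J(θ0,θ1) on a grid, find the lowest point, and check that two different parameter pairs can share the same cost. The data below are hypothetical (generated from y = 2 + 0.5x so that the minimum is known in advance) and are unrelated to the housing figures described above.

```python
# Hypothetical data lying exactly on y = 2 + 0.5 * x, so the minimum is known.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 + 0.5 * x for x in xs]


def cost(theta0, theta1):
    """Squared error cost J(theta0, theta1) for h(x) = theta0 + theta1 * x."""
    m = len(xs)
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


# A coarse grid of parameter values, as a contour plot would sample them.
theta0_values = [i * 0.5 for i in range(9)]    # 0.0, 0.5, ..., 4.0
theta1_values = [j * 0.25 for j in range(5)]   # 0.0, 0.25, ..., 1.0

grid = {(t0, t1): cost(t0, t1) for t0 in theta0_values for t1 in theta1_values}

# The grid point with the lowest cost sits at the center of the innermost contour.
best = min(grid, key=grid.get)
print("lowest cost at (theta0, theta1) =", best)

# Two different parameter pairs with equal cost lie on the same contour line.
a = cost(3.0, 0.25)
b = cost(1.0, 0.75)
print("J(3.0, 0.25) =", a, " J(1.0, 0.75) =", b)
```

A grid search like this is only for building intuition; it becomes impractical as the number of parameters grows.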
