Kafka producer custom partitioner and multithreaded consumer

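To balance load and preserve message ordering, a Kafka producer can route each message to a specific partition according to a distribution strategy. The Kafka Java client ships with a default partitioner that spreads records across the partitions of the target topic: a record with a key is placed by hashing the key, and a record without a key is distributed across the available partitions. There are two ways to control the strategy yourself. The first is to specify the partition when creating the ProducerRecord, which applies to a single message. The second is to write your own algorithm based on the key: implement the Partitioner interface and its partition method, then register the class with props.put("partitioner.class", "com.example.demo.MyPartitioner"). Example code follows: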

The custom partitioner

    package com.example.demo;

    import java.util.Map;

    import org.apache.kafka.clients.producer.Partitioner;
    import org.apache.kafka.common.Cluster;

    public class MyPartitioner implements Partitioner {

        @Override
        public void configure(Map<String, ?> configs) {
            // no configuration needed
        }

        @Override
        public int partition(String topic, Object key, byte[] keyBytes,
                             Object value, byte[] valueBytes, Cluster cluster) {
            // Assumes a numeric string key and a topic with exactly 3 partitions.
            int remainder = Integer.parseInt((String) key) % 3;
            if (remainder == 1) {
                return 0;
            } else if (remainder == 2) {
                return 1;
            } else {
                return 2;
            }
        }

        @Override
        public void close() {
            // nothing to release
        }
    }
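The partitioner above throws if the key is null or not a number, and it hard-codes three partitions. A minimal sketch of a more tolerant mapping is shown here as plain Java so it can be run without a broker. The class name KeyPartitionSketch, the method partitionFor, and the choice to send null keys to partition 0 are all assumptions made for illustration, and the mapping is not identical to the one above (key 1 goes to partition 1 here, not partition 0).

```java
import java.util.Arrays;

/** Hypothetical helper for illustration; not part of the Kafka API. */
final class KeyPartitionSketch {

    /**
     * Maps a key to a partition in [0, numPartitions).
     * Numeric keys use their value; other keys fall back to hashCode().
     */
    static int partitionFor(String key, int numPartitions) {
        if (numPartitions <= 0) {
            throw new IllegalArgumentException("numPartitions must be positive");
        }
        if (key == null) {
            return 0; // assumption: keyless records go to partition 0
        }
        int base;
        try {
            base = Integer.parseInt(key);
        } catch (NumberFormatException e) {
            base = key.hashCode();
        }
        // floorMod never returns a negative result, unlike the % operator
        return Math.floorMod(base, numPartitions);
    }

    public static void main(String[] args) {
        for (String key : Arrays.asList("0", "1", "2", "-1", "order-42")) {
            System.out.println(key + " -> " + partitionFor(key, 3));
        }
    }
}
```

Inside a real Partitioner, the partition count would normally come from the Cluster argument, for example cluster.partitionsForTopic(topic).size(), rather than from a constant.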

Setting partitioner.class in the producer class

    package com.example.demo;

    import java.util.Properties;

    import org.apache.kafka.clients.producer.KafkaProducer;
    import org.apache.kafka.clients.producer.Producer;
    import org.apache.kafka.clients.producer.ProducerRecord;

    public class MyProducer {

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.1.124:9092");
            props.put("acks", "all");
            props.put("retries", 0);
            props.put("batch.size", 16384);
            props.put("linger.ms", 1);
            // register the custom partitioner
            props.put("partitioner.class", "com.example.demo.MyPartitioner");
            props.put("buffer.memory", 33554432);
            props.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
            props.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");

            Producer<String, String> producer = new KafkaProducer<>(props);
            for (int i = 0; i < 100; i++) {
                // key and value are both the loop index as a string
                producer.send(new ProducerRecord<>("powerTopic", Integer.toString(i), Integer.toString(i)));
            }
            // close() waits for buffered records to be sent before returning
            producer.close();
        }
    }

The test consumer

    package com.example.demo;

    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class MyAutoCommitConsumer {

        public static void main(String[] args) {
            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.1.124:9092");
            props.put("group.id", "test");
            // offsets are committed automatically once per second
            props.put("enable.auto.commit", "true");
            props.put("auto.commit.interval.ms", "1000");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            // the consumer is never closed because the loop below runs forever
            @SuppressWarnings("resource")
            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            consumer.subscribe(Arrays.asList("powerTopic"));

            while (true) {
                // poll(long) is deprecated since kafka-clients 2.0;
                // newer clients use poll(Duration.ofMillis(100)) instead
                ConsumerRecords<String, String> records = consumer.poll(100);
                for (ConsumerRecord<String, String> record : records) {
                    System.out.printf("partition = %d,offset = %d, key = %s, value = %s%n",
                            record.partition(), record.offset(), record.key(), record.value());
                }
            }
        }
    }

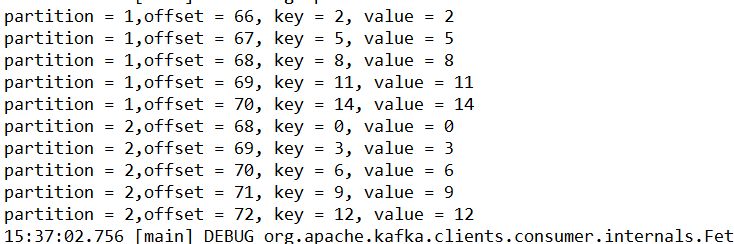

启动zookeeper和kafka,使用命令行新建一个 3个partition的topic:powerTopic,为了方便查看结果,将producer的循环次数设置为15,运行consumer和producer代码,效果如下:
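The distribution of those 15 messages can be predicted without a broker. The sketch below copies the rule from MyPartitioner into a plain static method and groups keys 0 to 14 by target partition. The class and method names (ExpectedDistribution, partitionOf, distribute) are made up for this illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper that replays the MyPartitioner rule offline. */
final class ExpectedDistribution {

    /** Same rule as MyPartitioner.partition, without the Kafka types. */
    static int partitionOf(String key) {
        int remainder = Integer.parseInt(key) % 3;
        if (remainder == 1) {
            return 0;
        }
        if (remainder == 2) {
            return 1;
        }
        return 2;
    }

    /** Groups keys "0".."count-1" by the partition they would be sent to. */
    static Map<Integer, List<String>> distribute(int count) {
        Map<Integer, List<String>> byPartition = new TreeMap<>();
        for (int i = 0; i < count; i++) {
            String key = Integer.toString(i);
            byPartition.computeIfAbsent(partitionOf(key), p -> new ArrayList<>()).add(key);
        }
        return byPartition;
    }

    public static void main(String[] args) {
        distribute(15).forEach((partition, keys) ->
                System.out.println("partition " + partition + " <- " + keys));
    }
}
```

With 15 keys, partition 0 receives 1, 4, 7, 10, 13; partition 1 receives 2, 5, 8, 11, 14; and partition 2 receives 0, 3, 6, 9, 12. Within each partition the consumer should see those keys in that order, while the interleaving between partitions is not guaranteed.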

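Although the topic has three partitions, the consumer group contains only one consumer, so that single consumer is assigned all three partitions and reads every message on one thread. Next we build a consumer group with several consumers, each running on its own thread. Example code follows: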
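To see why the number of consumers in a group matters, here is a simplified model of how the partitions of one topic might be split among group members. It is only a sketch loosely modeled on range-style assignment: in a real cluster the assignment is negotiated through the group coordinator and depends on the configured assignor. RangeAssignmentSketch and assign are hypothetical names.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical, simplified model of splitting one topic's partitions across a group. */
final class RangeAssignmentSketch {

    /**
     * Splits partitions 0..numPartitions-1 into contiguous ranges, one per consumer.
     * The first (numPartitions % numConsumers) consumers receive one extra partition.
     */
    static List<List<Integer>> assign(int numPartitions, int numConsumers) {
        if (numConsumers <= 0) {
            throw new IllegalArgumentException("numConsumers must be positive");
        }
        int perConsumer = numPartitions / numConsumers;
        int withExtra = numPartitions % numConsumers;
        List<List<Integer>> assignment = new ArrayList<>();
        int next = 0;
        for (int c = 0; c < numConsumers; c++) {
            int size = perConsumer + (c < withExtra ? 1 : 0);
            List<Integer> owned = new ArrayList<>();
            for (int i = 0; i < size; i++) {
                owned.add(next++);
            }
            assignment.add(owned);
        }
        return assignment;
    }

    public static void main(String[] args) {
        System.out.println("1 consumer:  " + assign(3, 1));
        System.out.println("3 consumers: " + assign(3, 3));
        System.out.println("4 consumers: " + assign(3, 4));
    }
}
```

With one consumer the model hands it all three partitions, with three consumers each gets one, and a fourth consumer would sit idle. This is why it rarely helps to run more consumers in a group than the topic has partitions.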

The ConsumerThread class

    package com.example.demo;

    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class ConsumerThread implements Runnable {

        // KafkaConsumer is not thread-safe, so each thread owns its own instance
        private final KafkaConsumer<String, String> kafkaConsumer;
        private final String topic;

        public ConsumerThread(String brokers, String groupId, String topic) {
            Properties properties = buildKafkaProperty(brokers, groupId);
            this.topic = topic;
            this.kafkaConsumer = new KafkaConsumer<>(properties);
            this.kafkaConsumer.subscribe(Arrays.asList(this.topic));
        }

        private static Properties buildKafkaProperty(String brokers, String groupId) {
            Properties properties = new Properties();
            properties.put("bootstrap.servers", brokers);
            properties.put("group.id", groupId);
            properties.put("enable.auto.commit", "true");
            properties.put("auto.commit.interval.ms", "1000");
            properties.put("session.timeout.ms", "30000");
            // start from the oldest record when the group has no committed offset
            properties.put("auto.offset.reset", "earliest");
            properties.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            return properties;
        }

        @Override
        public void run() {
            while (true) {
                // poll(long) is deprecated since kafka-clients 2.0;
                // newer clients use poll(Duration.ofMillis(100)) instead
                ConsumerRecords<String, String> consumerRecords = kafkaConsumer.poll(100);
                for (ConsumerRecord<String, String> item : consumerRecords) {
                    // print the thread name to show which thread handled the record
                    System.out.println(Thread.currentThread().getName());
                    System.out.printf("partition = %d,offset = %d, key = %s, value = %s%n",
                            item.partition(), item.offset(), item.key(), item.value());
                }
            }
        }
    }

The ConsumerGroup class

    package com.example.demo;

    import java.util.ArrayList;
    import java.util.List;

    public class ConsumerGroup {

        private final List<ConsumerThread> consumerThreadList = new ArrayList<>();

        public ConsumerGroup(String brokers, String groupId, String topic, int consumerNumber) {
            // every ConsumerThread shares the same group.id, so together they form one group
            for (int i = 0; i < consumerNumber; i++) {
                ConsumerThread consumerThread = new ConsumerThread(brokers, groupId, topic);
                consumerThreadList.add(consumerThread);
            }
        }

        public void start() {
            // run each consumer on its own thread
            for (ConsumerThread item : consumerThreadList) {
                Thread thread = new Thread(item);
                thread.start();
            }
        }
    }

ConsumerGroupMain, the launcher class for the consumer group

    package com.example.demo;

    public class ConsumerGroupMain {

        public static void main(String[] args) {
            String brokers = "192.168.1.124:9092";
            String groupId = "group01";
            String topic = "powerTopic";
            int consumerNumber = 3;

            ConsumerGroup consumerGroup = new ConsumerGroup(brokers, groupId, topic, consumerNumber);
            consumerGroup.start();
        }
    }

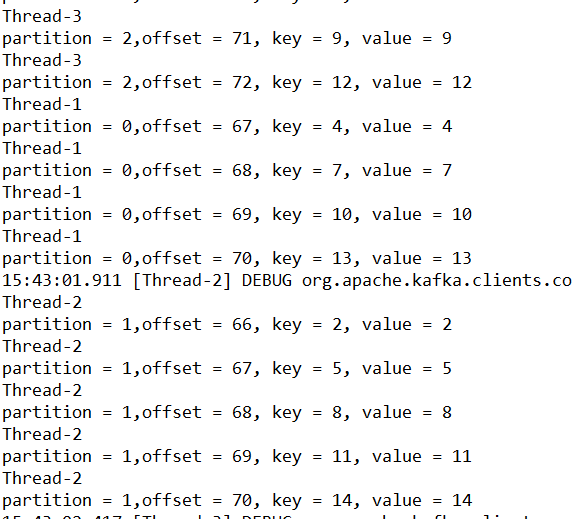

启动消费者和生产者,可以看到不同的分区是不同的线程去执行的效果如下:
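The consumer threads above loop forever and are never stopped. One common way to make such workers stoppable is a shared flag plus an ExecutorService. The sketch below shows only that pattern with a stand-in worker, so it runs without Kafka; StoppableWorkers, Worker and runAndStop are hypothetical names, and the sleep stands in for the poll-and-process step. With a real KafkaConsumer, calling wakeup() from another thread makes a blocked poll throw WakeupException, which is the usual way to break out of the loop before calling close().

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicBoolean;

/** Hypothetical sketch of stoppable worker threads; no Kafka types involved. */
final class StoppableWorkers {

    /** Stand-in for ConsumerThread: loops until asked to stop. */
    static final class Worker implements Runnable {
        private final AtomicBoolean running = new AtomicBoolean(true);

        @Override
        public void run() {
            while (running.get()) {
                try {
                    Thread.sleep(10); // placeholder for poll-and-process
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    break;
                }
            }
            // a real consumer would be closed here
        }

        void stop() {
            running.set(false);
        }
    }

    /** Starts the workers, lets them run briefly, stops them; true if all finished. */
    static boolean runAndStop(int workerCount, long runMillis) {
        ExecutorService pool = Executors.newFixedThreadPool(workerCount);
        List<Worker> workers = new ArrayList<>();
        for (int i = 0; i < workerCount; i++) {
            Worker worker = new Worker();
            workers.add(worker);
            pool.submit(worker);
        }
        try {
            Thread.sleep(runMillis);
            for (Worker worker : workers) {
                worker.stop();
            }
            pool.shutdown();
            return pool.awaitTermination(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            pool.shutdownNow();
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("all workers stopped: " + runAndStop(3, 100));
    }
}
```

In the ConsumerGroup class this would roughly correspond to adding a stop method that signals every ConsumerThread, for example from a JVM shutdown hook.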
