HiBench Growth Notes (3): Testing Spark with HiBench

Much of the setup was covered in earlier posts and is not repeated here. For details, see the previous post in this series: https://www.cnblogs.com/ratels/p/10970905.html

Create the configuration file conf/spark.conf from the template, then edit it:

cp conf/spark.conf.template conf/spark.conf

Following https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-sparkbench.md , set the properties to the values below:

    # Spark home
    hibench.spark.home      /opt/cloudera/parcels/CDH--.cdh5./lib/spark

    # Spark master
    #   standalone mode: spark://xxx:7077
    #   YARN mode: yarn-client
    hibench.spark.master    yarn-client
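Before running a workload it can help to confirm that both properties were actually picked up. The following is a minimal sketch, assuming the file uses the whitespace-separated `key value` layout with `#` comments shown above. The function names, the sample text, and the `/path/to/spark` value are hypothetical and are not part of HiBench.

```python
from typing import Dict, List, Sequence


def parse_hibench_conf(text: str) -> Dict[str, str]:
    """Parse 'key <whitespace> value' lines, skipping blanks and '#' comments."""
    props: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        parts = line.split(None, 1)  # split on the first run of whitespace
        value = parts[1].strip() if len(parts) > 1 else ""
        props[parts[0]] = value
    return props


def missing_keys(
    props: Dict[str, str],
    required: Sequence[str] = ("hibench.spark.home", "hibench.spark.master"),
) -> List[str]:
    """Return the required keys that are absent or have an empty value."""
    return [key for key in required if not props.get(key)]


# Hypothetical sample; replace the path with the real Spark home.
SAMPLE = """\
# Spark home
hibench.spark.home      /path/to/spark

# Spark master
hibench.spark.master    yarn-client
"""

if __name__ == "__main__":
    parsed = parse_hibench_conf(SAMPLE)
    print(parsed)
    print("missing:", missing_keys(parsed))
```

In practice the text would come from reading conf/spark.conf; an empty result from `missing_keys` means both settings are present.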

Run the prepare script to generate the input data:

bin/workloads/micro/wordcount/prepare/prepare.sh

Output:

    [root@node1 prepare]# ./prepare.sh
    patching args=
    Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf
    probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar
    start HadoopPrepareWordcount bench
    hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Input
    Deleted hdfs://node1:8020/HiBench/Wordcount/Input
    Submit MapReduce Job: /opt/cloudera/parcels/CDH--.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /opt/cloudera/parcels/CDH--.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-examples--cdh5. -D mapreduce.randomtextwriter.bytespermap= -D mapreduce.job.maps= -D mapreduce.job.reduces= hdfs://node1:8020/HiBench/Wordcount/Input
    The job took seconds.
    finish HadoopPrepareWordcount bench

Run the Spark workload script:

bin/workloads/micro/wordcount/spark/run.sh

Output:

    [root@node1 spark]# ./run.sh
    patching args=
    Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf
    Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf
    probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar
    start ScalaSparkWordcount bench
    hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Output
    Deleted hdfs://node1:8020/HiBench/Wordcount/Output
    hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Wordcount/Input
    Export env: SPARKBENCH_PROPERTIES_FILES=/home/cf/app/HiBench-master/report/wordcount/spark/conf/sparkbench/sparkbench.conf
    Export env: HADOOP_CONF_DIR=/etc/hadoop/conf.cloudera.yarn
    Submit Spark job: /opt/cloudera/parcels/CDH--.cdh5./lib/spark/bin/spark-submit --properties- --executor-cores --executor-memory 4g /home/cf/app/HiBench-master/sparkbench/assembly/target/sparkbench-assembly-7.1-SNAPSHOT-dist.jar hdfs://node1:8020/HiBench/Wordcount/Input hdfs://node1:8020/HiBench/Wordcount/Output
    // :: INFO remote.RemoteActorRefProvider$RemotingTerminator: Shutting down remote daemon.
    finish ScalaSparkWordcount bench
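The two steps above (prepare, then run) always go in that order, so they can be chained in a small driver. This is a rough sketch, not part of HiBench: the helper names are hypothetical, and the directory layout `bin/workloads/<category>/<workload>/...` is inferred from the two script paths used in this post.

```python
import subprocess
from pathlib import Path
from typing import List


def workload_scripts(hibench_home: str, category: str, workload: str,
                     framework: str) -> List[Path]:
    """Return the prepare script followed by the framework's run script."""
    base = Path(hibench_home) / "bin" / "workloads" / category / workload
    return [base / "prepare" / "prepare.sh", base / framework / "run.sh"]


def run_workload(hibench_home: str, category: str, workload: str,
                 framework: str) -> None:
    """Run prepare.sh and then run.sh, stopping at the first failure."""
    for script in workload_scripts(hibench_home, category, workload, framework):
        # check=True raises CalledProcessError on a non-zero exit code,
        # so run.sh never starts if data preparation failed.
        subprocess.run([str(script)], cwd=hibench_home, check=True)


if __name__ == "__main__":
    # Only prints the paths; call run_workload(...) on a real installation.
    for path in workload_scripts("/home/cf/app/HiBench-master",
                                 "micro", "wordcount", "spark"):
        print(path)
```

Calling `run_workload("/home/cf/app/HiBench-master", "micro", "wordcount", "spark")` on the machine used in this post would execute the same two scripts shown above.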

View report/hibench.report:

    Type                 Date  Time  Input_data_size  Duration(s)  Throughput(bytes/s)  Throughput/node
    HadoopWordcount      --    ::
    ScalaSparkWordcount  --    ::
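The report is a whitespace-separated table, so comparing runs programmatically is straightforward. The sketch below assumes the column names shown in the header above. The function names are hypothetical, and every number in the sample is invented purely for illustration; none of them are measurements from this test.

```python
from typing import Dict, List


def parse_report(text: str) -> List[Dict[str, str]]:
    """Turn the report into one dict per row, keyed by the header names."""
    rows = [line.split() for line in text.splitlines() if line.strip()]
    if not rows:
        return []
    header, body = rows[0], rows[1:]
    # Rows with a different column count (e.g. truncated lines) are skipped.
    return [dict(zip(header, row)) for row in body if len(row) == len(header)]


def speedup(records: List[Dict[str, str]], baseline: str, candidate: str) -> float:
    """Ratio of baseline duration to candidate duration (last run of each type)."""
    durations = {r["Type"]: float(r["Duration(s)"]) for r in records}
    return durations[baseline] / durations[candidate]


# Invented values, for illustration only.
SAMPLE_REPORT = """\
Type                 Date       Time     Input_data_size  Duration(s)  Throughput(bytes/s)  Throughput/node
HadoopWordcount      2000-01-01 00:00:00 1000             40.0         25                   25
ScalaSparkWordcount  2000-01-01 00:10:00 1000             20.0         50                   50
"""

if __name__ == "__main__":
    records = parse_report(SAMPLE_REPORT)
    for record in records:
        print(record["Type"], record["Duration(s)"])
    print("speedup:", speedup(records, "HadoopWordcount", "ScalaSparkWordcount"))
```

On a real installation the text would be read from report/hibench.report instead of the sample string.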

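The report/wordcount/spark directory contains several files: monitor.log is the raw log; bench.log holds the scheduler.DAGScheduler and scheduler.TaskSetManager messages; monitor.html visualizes system performance metrics; and conf/wordcount.conf, conf/sparkbench/spark.conf and conf/sparkbench/sparkbench.conf record the settings used for this run.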

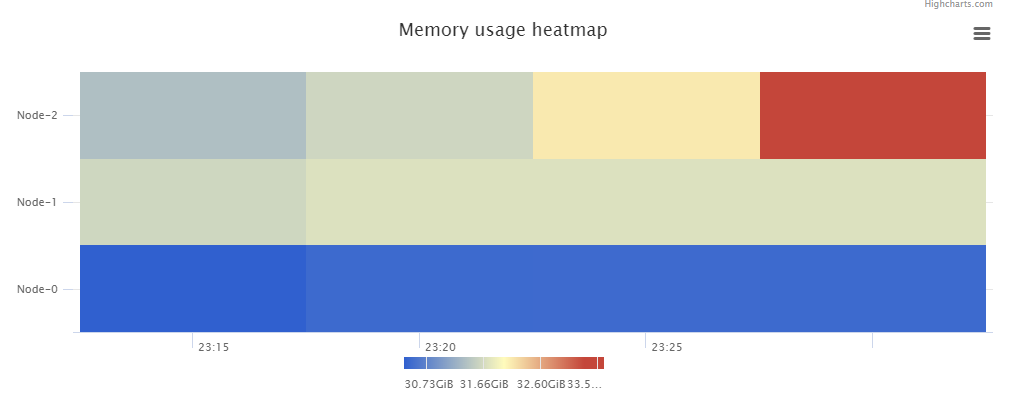

monitor.html includes charts such as the Memory usage heatmap.

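According to the official documentation at https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-sparkbench.md , several other properties can be tuned: hibench.scale.profile sets the data scale of the test; hibench.default.map.parallelism and hibench.default.shuffle.parallelism set the parallelism; and hibench.yarn.executor.num, hibench.yarn.executor.cores, spark.executor.memory and spark.driver.memory control the number, cores and memory of the Spark executors and the memory of the driver.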
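To make the executor settings concrete, here is a minimal sketch that renders a set of these properties in the `key value` layout and adds up the cores and executor memory they request. The property names are the ones listed above. The helper names and all values are illustrative assumptions rather than recommendations, and the totals ignore any per-container overhead that YARN adds.

```python
from typing import Dict


def parse_memory_gb(value: str) -> float:
    """Convert a size such as '4g' or '512m' to gigabytes."""
    unit, number = value[-1].lower(), float(value[:-1])
    if unit == "g":
        return number
    if unit == "m":
        return number / 1024
    raise ValueError(f"unsupported memory unit in {value!r}")


def executor_footprint(props: Dict[str, str]) -> Dict[str, float]:
    """Total cores and executor memory requested (driver not included)."""
    count = int(props["hibench.yarn.executor.num"])
    cores = int(props["hibench.yarn.executor.cores"])
    memory = parse_memory_gb(props["spark.executor.memory"])
    return {"total_cores": count * cores,
            "total_executor_memory_gb": count * memory}


def render_conf(props: Dict[str, str]) -> str:
    """Render properties as aligned 'key  value' lines."""
    width = max(len(key) for key in props)
    return "\n".join(f"{key.ljust(width)}  {value}"
                     for key, value in props.items()) + "\n"


# Illustrative values only; choose them to fit the actual cluster.
EXAMPLE = {
    "hibench.scale.profile": "tiny",
    "hibench.default.map.parallelism": "8",
    "hibench.default.shuffle.parallelism": "8",
    "hibench.yarn.executor.num": "2",
    "hibench.yarn.executor.cores": "4",
    "spark.executor.memory": "4g",
    "spark.driver.memory": "4g",
}

if __name__ == "__main__":
    print(render_conf(EXAMPLE), end="")
    print(executor_footprint(EXAMPLE))
```

With these example values, two executors with four cores and 4g each request eight cores and 8 GB of executor memory in total, which is a quick way to check a configuration against what the cluster can actually allocate.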
