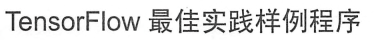

吴裕雄--天生自然python Google深度学习框架:MNIST数字识别问题

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 # 输入节点

OUTPUT_NODE = 10 # 输出节点

LAYER1_NODE = 500 # 隐藏层数 BATCH_SIZE = 100 # 每次batch打包的样本个数 # 模型相关的参数

LEARNING_RATE_BASE = 0.8

LEARNING_RATE_DECAY = 0.99

REGULARAZTION_RATE = 0.0001

TRAINING_STEPS = 5000

MOVING_AVERAGE_DECAY = 0.99 def inference(input_tensor, avg_class, weights1, biases1, weights2, biases2):

# 不使用滑动平均类

if avg_class == None:

layer1 = tf.nn.relu(tf.matmul(input_tensor, weights1) + biases1)

return tf.matmul(layer1, weights2) + biases2 else:

# 使用滑动平均类

layer1 = tf.nn.relu(tf.matmul(input_tensor, avg_class.average(weights1)) + avg_class.average(biases1))

return tf.matmul(layer1, avg_class.average(weights2)) + avg_class.average(biases2) def train(mnist):

x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

# 生成隐藏层的参数。

weights1 = tf.Variable(tf.truncated_normal([INPUT_NODE, LAYER1_NODE], stddev=0.1))

biases1 = tf.Variable(tf.constant(0.1, shape=[LAYER1_NODE]))

# 生成输出层的参数。

weights2 = tf.Variable(tf.truncated_normal([LAYER1_NODE, OUTPUT_NODE], stddev=0.1))

biases2 = tf.Variable(tf.constant(0.1, shape=[OUTPUT_NODE])) # 计算不含滑动平均类的前向传播结果

y = inference(x, None, weights1, biases1, weights2, biases2) # 定义训练轮数及相关的滑动平均类

global_step = tf.Variable(0, trainable=False)

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

average_y = inference(x, variable_averages, weights1, biases1, weights2, biases2) # 计算交叉熵及其平均值

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy) # 损失函数的计算

regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)

regularaztion = regularizer(weights1) + regularizer(weights2)

loss = cross_entropy_mean + regularaztion # 设置指数衰减的学习率。

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples / BATCH_SIZE,

LEARNING_RATE_DECAY,

staircase=True) # 优化损失函数

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step) # 反向传播更新参数和更新每一个参数的滑动平均值

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train') # 计算正确率

correct_prediction = tf.equal(tf.argmax(average_y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) # 初始化会话,并开始训练过程。

with tf.Session() as sess:

tf.global_variables_initializer().run()

validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels}

test_feed = {x: mnist.test.images, y_: mnist.test.labels} # 循环的训练神经网络。

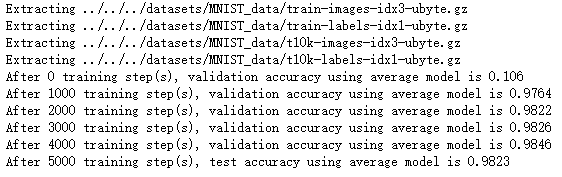

for i in range(TRAINING_STEPS):

if i % 1000 == 0:

validate_acc = sess.run(accuracy, feed_dict=validate_feed)

print("After %d training step(s), validation accuracy using average model is %g " % (i, validate_acc)) xs,ys=mnist.train.next_batch(BATCH_SIZE)

sess.run(train_op,feed_dict={x:xs,y_:ys}) test_acc=sess.run(accuracy,feed_dict=test_feed)

print(("After %d training step(s), test accuracy using average model is %g" %(TRAINING_STEPS, test_acc))) def main(argv=None):

mnist = input_data.read_data_sets("../../../datasets/MNIST_data", one_hot=True)

train(mnist) if __name__=='__main__':

main()

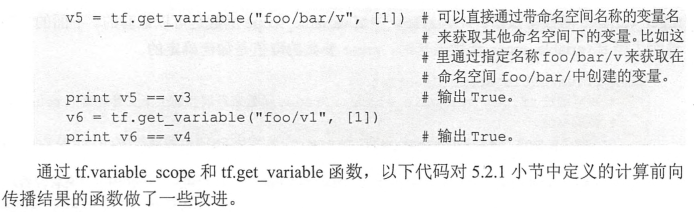

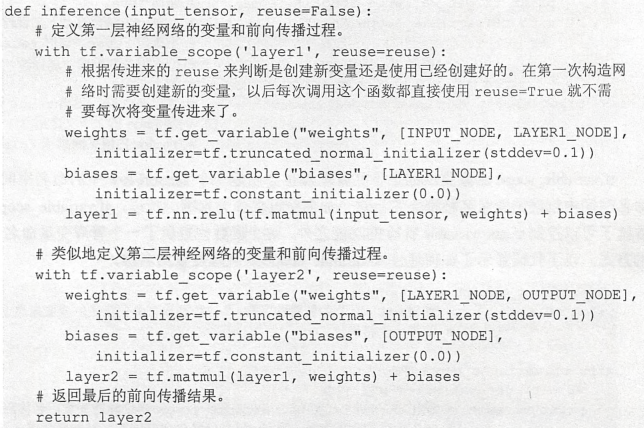

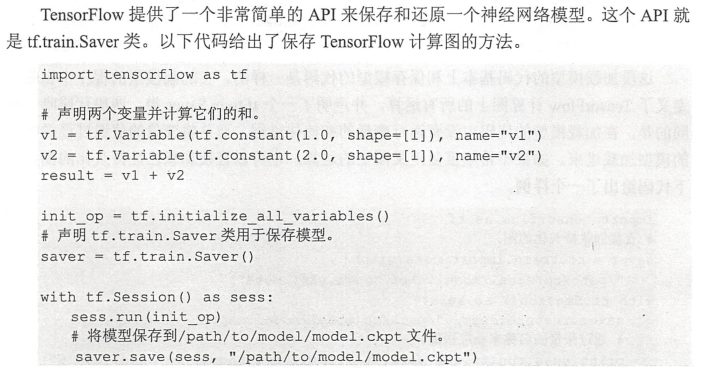

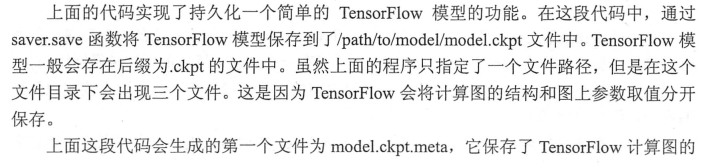

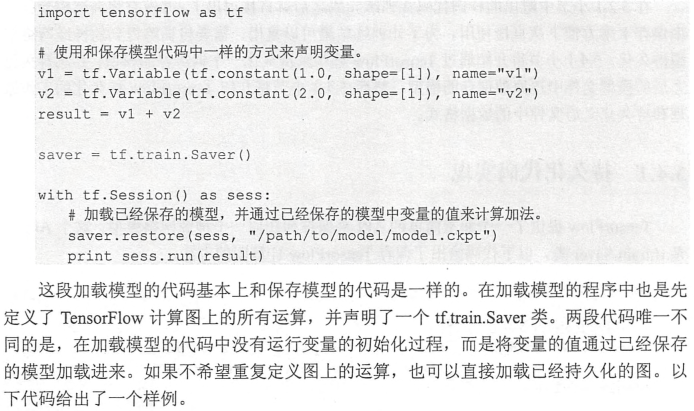

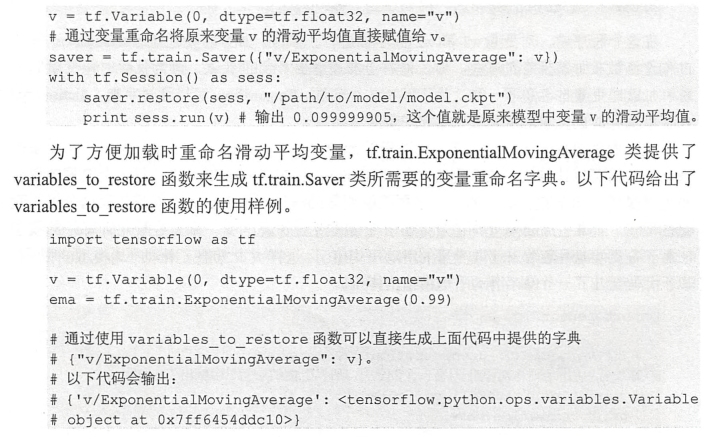

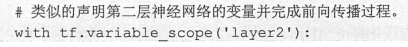

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import mnist_inference

import os BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.8

LEARNING_RATE_DECAY = 0.99

REGULARIZATION_RATE = 0.0001

TRAINING_STEPS = 30000

MOVING_AVERAGE_DECAY = 0.99

MODEL_SAVE_PATH = "MNIST_model/"

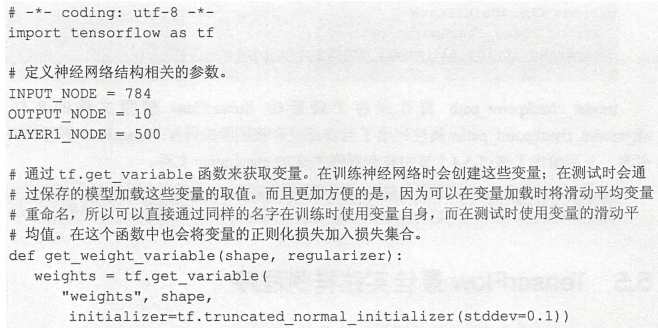

MODEL_NAME = "mnist_model" def train(mnist):

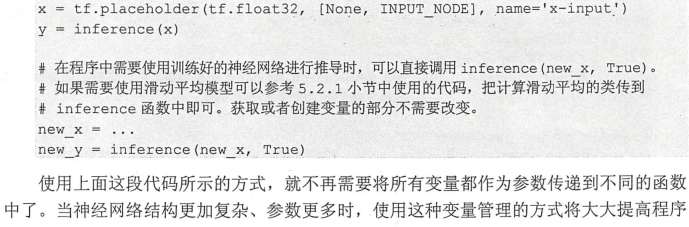

# 定义输入输出placeholder。

x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')

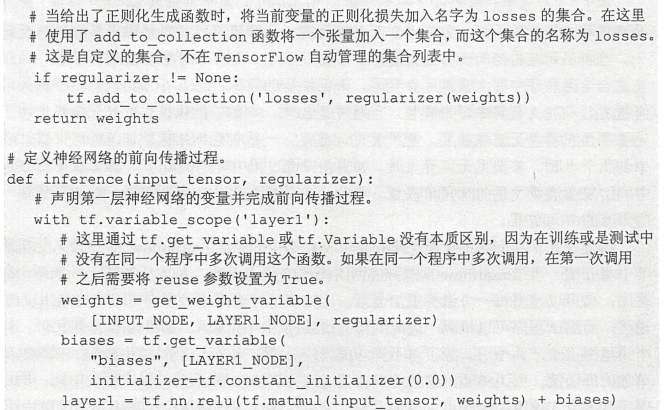

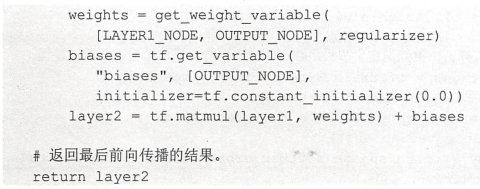

y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-input') regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)

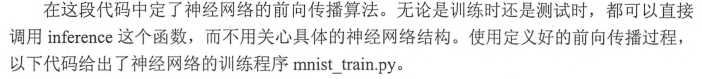

y = mnist_inference.inference(x, regularizer)

global_step = tf.Variable(0, trainable=False) # 定义损失函数、学习率、滑动平均操作以及训练过程。

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,

staircase=True)

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train') # 初始化TensorFlow持久化类。

saver = tf.train.Saver()

with tf.Session() as sess:

tf.global_variables_initializer().run() for i in range(TRAINING_STEPS):

xs, ys = mnist.train.next_batch(BATCH_SIZE)

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})

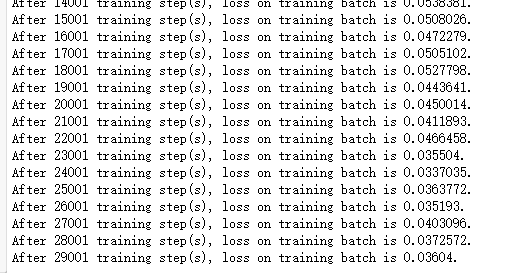

if i % 1000 == 0:

print("After %d training step(s), loss on training batch is %g." % (step, loss_value))

saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_NAME), global_step=global_step) def main(argv=None):

mnist = input_data.read_data_sets("../../../datasets/MNIST_data", one_hot=True)

train(mnist) if __name__ == '__main__':

main()

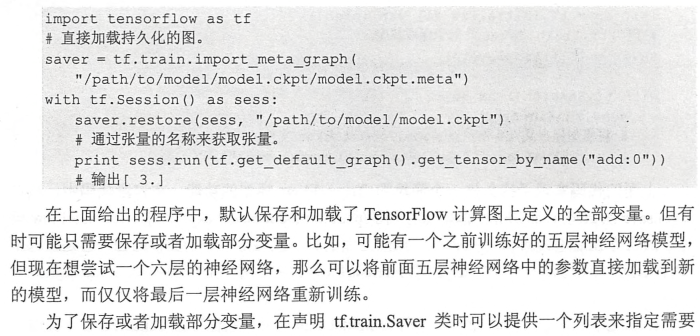

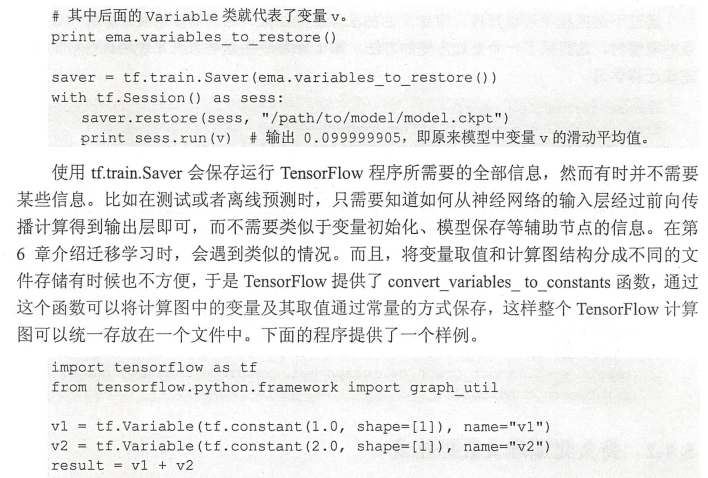

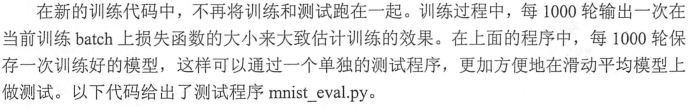

import time

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data

import mnist_inference

import mnist_train # 加载的时间间隔。

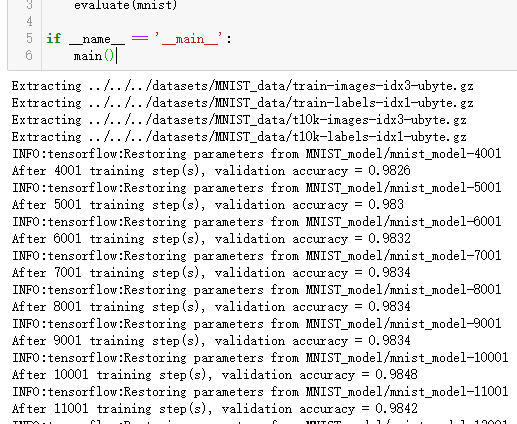

EVAL_INTERVAL_SECS = 10 def evaluate(mnist):

with tf.Graph().as_default() as g:

x = tf.placeholder(tf.float32, [None, mnist_inference.INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, mnist_inference.OUTPUT_NODE], name='y-input')

validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels} y = mnist_inference.inference(x, None)

correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) variable_averages = tf.train.ExponentialMovingAverage(mnist_train.MOVING_AVERAGE_DECAY)

variables_to_restore = variable_averages.variables_to_restore()

saver = tf.train.Saver(variables_to_restore) while True:

with tf.Session() as sess:

ckpt = tf.train.get_checkpoint_state(mnist_train.MODEL_SAVE_PATH)

if ckpt and ckpt.model_checkpoint_path:

saver.restore(sess, ckpt.model_checkpoint_path)

global_step = ckpt.model_checkpoint_path.split('/')[-1].split('-')[-1]

accuracy_score = sess.run(accuracy, feed_dict=validate_feed)

print("After %s training step(s), validation accuracy = %g" % (global_step, accuracy_score))

else:

print('No checkpoint file found')

return

time.sleep(EVAL_INTERVAL_SECS) def main(argv=None):

mnist = input_data.read_data_sets("../../../datasets/MNIST_data", one_hot=True)

evaluate(mnist) if __name__ == '__main__':

main()

吴裕雄--天生自然python Google深度学习框架:MNIST数字识别问题的更多相关文章

- 吴裕雄--天生自然python Google深度学习框架:Tensorflow实现迁移学习

import glob import os.path import numpy as np import tensorflow as tf from tensorflow.python.platfor ...

- 吴裕雄--天生自然python Google深度学习框架:经典卷积神经网络模型

import tensorflow as tf INPUT_NODE = 784 OUTPUT_NODE = 10 IMAGE_SIZE = 28 NUM_CHANNELS = 1 NUM_LABEL ...

- 吴裕雄--天生自然python Google深度学习框架:图像识别与卷积神经网络

- 吴裕雄--天生自然python Google深度学习框架:深度学习与深层神经网络

- 吴裕雄--天生自然python Google深度学习框架:TensorFlow实现神经网络

http://playground.tensorflow.org/

- 吴裕雄--天生自然python Google深度学习框架:Tensorflow基础应用

import tensorflow as tf a = tf.constant([1.0, 2.0], name="a") b = tf.constant([2.0, 3.0], ...

- 吴裕雄--天生自然python Google深度学习框架:人工智能、深度学习与机器学习相互关系介绍

- 吴裕雄--天生自然神经网络与深度学习实战Python+Keras+TensorFlow:Bellman函数、贪心算法与增强性学习网络开发实践

!pip install gym import random import numpy as np import matplotlib.pyplot as plt from keras.layers ...

- 吴裕雄--天生自然神经网络与深度学习实战Python+Keras+TensorFlow:使用TensorFlow和Keras开发高级自然语言处理系统——LSTM网络原理以及使用LSTM实现人机问答系统

!mkdir '/content/gdrive/My Drive/conversation' ''' 将文本句子分解成单词,并构建词库 ''' path = '/content/gdrive/My D ...

随机推荐

- POJ 3083:Children of the Candy Corn

Children of the Candy Corn Time Limit: 1000MS Memory Limit: 65536K Total Submissions: 11015 Acce ...

- SpringCloud学习之Ribbon使用(四)

1.关于 Ribbon Spring Cloud Ribbon 是基于 Netflix Ribbon 实现的一套客户端负载均衡的工具.Ribbon 是 Netflix 发布的开源项目,主要功能是提供客 ...

- {转}Java 字符串分割三种方法

http://www.chenwg.com/java/java-%E5%AD%97%E7%AC%A6%E4%B8%B2%E5%88%86%E5%89%B2%E4%B8%89%E7%A7%8D%E6%9 ...

- 怎么调出原生态launcher

adb shell am start -n com.android.launcher3/.Launcher

- PAT Advanced 1086 Tree Traversals Again (25) [树的遍历]

题目 An inorder binary tree traversal can be implemented in a non-recursive way with a stack. For exam ...

- vim里设置tab及自动换行

今天在使用vim编辑器时发现默认的tab键是8个字符,于是就想到把它设为四个空格,经过百度,得到了以下方法: 首先进入~/.vimrc 然后在文档末尾加上以下代码: set tabstop=4 ...

- Django2.0——中间件

Django中间件middleware本质是一个类,在请求到返回的中间,类中不同的方法会在指定的时机中被触发.setting.py的变量MIDDLEWARE_CLASSES中的每一个元素都是中间件,且 ...

- iOS 通过有alpha值的图片创建蒙版

@interface ViewController () @property (nonatomic, weak) IBOutlet UIImageView *imageView; @end @impl ...

- 图解:平衡二叉树,AVL树

学习过了二叉查找树,想必大家有遇到一个问题.例如,将一个数组{1,2,3,4}依次插入树的时候,形成了图1的情况.有建立树与没建立树对于数据的增删查改已经没有了任何帮助,反而增添了维护的成本.而只有建 ...

- 第 10 章 gdb

一.参考网址 1.linux c编程一站式学习 二.命令列表 1.图1: 2.图2: 3.图3: 三.重点摘抄 1.断点与观测点的区别 我们知道断点是当程序执行到某一代码行时中断,而观察点是当程序访问 ...