Reducing and Profiling GPU Memory Usage in Keras with TensorFlow Backend

keras 自适应分配显存 & 清理不用的变量释放 GPU 显存

Intro

Are you running out of GPU memory when using keras or tensorflow deep learning models, but only some of the time?

Are you curious about exactly how much GPU memory your tensorflow model uses during training?

Are you wondering if you can run two or more keras models on your GPU at the same time?

Background

By default, tensorflow pre-allocates nearly all of the available GPU memory, which is bad for a variety of use cases, especially production and memory profiling.

When keras uses tensorflow for its back-end, it inherits this behavior.

Setting tensorflow GPU memory options

For new models

Thankfully, tensorflow allows you to change how it allocates GPU memory, and to set a limit on how much GPU memory it is allowed to allocate.

Let’s set GPU options on keras‘s example Sequence classification with LSTM network

## keras example imports

from keras.models import Sequential

from keras.layers import Dense, Dropout

from keras.layers import Embedding

from keras.layers import LSTM ## extra imports to set GPU options

import tensorflow as tf

from keras import backend as k ###################################

# TensorFlow wizardry

config = tf.ConfigProto() # Don't pre-allocate memory; allocate as-needed

config.gpu_options.allow_growth = True # Only allow a total of half the GPU memory to be allocated

#config.gpu_options.per_process_gpu_memory_fraction = 0.5 # Create a session with the above options specified.

k.tensorflow_backend.set_session(tf.Session(config=config))

################################### model = Sequential()

model.add(Embedding(max_features, output_dim=256))

model.add(LSTM(128))

model.add(Dropout(0.5))

model.add(Dense(1, activation='sigmoid')) model.compile(loss='binary_crossentropy',

optimizer='rmsprop',

metrics=['accuracy']) model.fit(x_train, y_train, batch_size=16, epochs=10)

score = model.evaluate(x_test, y_test, batch_size=16)

After the above, when we create the sequence classification model, it won’t use half the GPU memory automatically, but rather will allocate GPU memory as-needed during the calls to model.fit() and model.evaluate().

Additionally, with the per_process_gpu_memory_fraction = 0.5, tensorflow will only allocate a total of half the available GPU memory.

If it tries to allocate more than half of the total GPU memory, tensorflow will throw a ResourceExhaustedError, and you’ll get a lengthy stack trace.

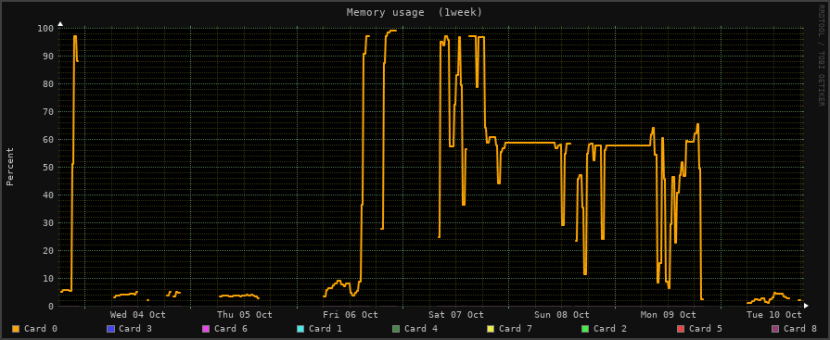

If you have a Linux machine and an nvidia card, you can watch nvidia-smi to see how much GPU memory is in use, or can configure a monitoring tool like monitorix to generate graphs for you.

GPU memory usage, as shown in Monitorix for Linux

For a model that you’re loading

We can even set GPU memory management options for a model that’s already created and trained, and that we’re loading from disk for deployment or for further training.

For that, let’s tweak keras‘s load_model example:

# keras example imports

from keras.models import load_model ## extra imports to set GPU options

import tensorflow as tf

from keras import backend as k ###################################

# TensorFlow wizardry

config = tf.ConfigProto() # Don't pre-allocate memory; allocate as-needed

config.gpu_options.allow_growth = True # Only allow a total of half the GPU memory to be allocated

config.gpu_options.per_process_gpu_memory_fraction = 0.5 # Create a session with the above options specified.

k.tensorflow_backend.set_session(tf.Session(config=config))

################################### # returns a compiled model

# identical to the previous one

model = load_model('my_model.h5') # TODO: classify all the things

Now, with your loaded model, you can open your favorite GPU monitoring tool and watch how the GPU memory usage changes under different loads.

Conclusion

Good news everyone! That sweet deep learning model you just made doesn’t actually need all that memory it usually claims!

And, now that you can tell tensorflow not to pre-allocate memory, you can get a much better idea of what kind of rig(s) you need in order to deploy your model into production.

Is this how you’re handling GPU memory management issues with tensorflow or keras?

Did I miss a better, cleaner way of handling GPU memory allocation with tensorflow and keras?

Let me know in the comments!

How to remove stale models from GPU memory

import gc

m = Model(.....)

m.save(tmp_model_name)

del m

K.clear_session()

gc.collect()

m = load_model(tmp_model_name)

Reducing and Profiling GPU Memory Usage in Keras with TensorFlow Backend的更多相关文章

- GPU Memory Usage占满而GPU-Util却为0的调试

最近使用github上的一个开源项目训练基于CNN的翻译模型,使用THEANO_FLAGS='floatX=float32,device=gpu2,lib.cnmem=1' python run_nn ...

- Allowing GPU memory growth

By default, TensorFlow maps nearly all of the GPU memory of all GPUs (subject to CUDA_VISIBLE_DEVICE ...

- Redis: Reducing Memory Usage

High Level Tips for Redis Most of Stream-Framework's users start out with Redis and eventually move ...

- Android 性能优化(21)*性能工具之「GPU呈现模式分析」Profiling GPU Rendering Walkthrough:分析View显示是否超标

Profiling GPU Rendering Walkthrough 1.In this document Prerequisites Profile GPU Rendering $adb shel ...

- Memory usage of a Java process java Xms Xmx Xmn

http://www.oracle.com/technetwork/java/javase/memleaks-137499.html 3.1 Meaning of OutOfMemoryError O ...

- Shell script for logging cpu and memory usage of a Linux process

Shell script for logging cpu and memory usage of a Linux process http://www.unix.com/shell-programmi ...

- 5 commands to check memory usage on Linux

Memory Usage On linux, there are commands for almost everything, because the gui might not be always ...

- SHELL:Find Memory Usage In Linux (统计每个程序内存使用情况)

转载一个shell统计linux系统中每个程序的内存使用情况,因为内存结构非常复杂,不一定100%精确,此shell可以在Ghub上下载. [root@db231 ~]# ./memstat.sh P ...

- Why does the memory usage increase when I redeploy a web application?

That is because your web application has a memory leak. A common issue are "PermGen" memor ...

随机推荐

- (转)MySQL字段类型详解

MySQL字段类型详解 原文:http://www.cnblogs.com/100thMountain/p/4692842.html MySQL支持大量的列类型,它可以被分为3类:数字类型.日期和时间 ...

- JVM-垃圾收集算法、垃圾收集器、内存分配和收集策略

对象已死么? 判断一个对象是否存活一般有两种方式: 1.引用计数算法:每个对象都有一个引用计数属性,新增一个引用时计数加1,引用释放时计数减1.计数为0时可以回收. 2.可达性分析算法(Reachab ...

- Zookeeper在Centos7上搭建单节点应用

(默认机器上已经安装并配置好了jdk) 1.下载zookeeper并解压 $ tar -zxvf zookeeper-3.4.6.tar.gz 2.将解压后的文件夹移动到 /usr/local/ 目录 ...

- android 使用lint + studio ,排查客户端无用string,drawable,layout资源

在项目中点击右键(或者菜单中的Analyze),在出现的右键菜单中有“Analyze” --> “run inspaction by Name ...”.在弹出的搜索窗口中输入想执行的检查类型, ...

- NiftyDialogEffects-多种弹出效果的对话框

感觉系统自带的对话框弹出太生硬?那就试试NiftyDialogEffects吧,类似于(Nifty Modal Window Effects),效果是模仿里面实现的 ScreenShot . . ...

- OpenGL10-骨骼动画原理篇(2)

接上一篇的内容,上一篇,简单的介绍了,骨骼动画的原理,给出来一个 简单的例程,这一例程将给展示一个最初级的人物动画,具备多细节内容 以人走路为例子,当人走路的从一个站立开始,到迈出一步,这个过程是 一 ...

- crontab的用法

转载于:点击打开链接 cron是一个linux下的定时执行工具,可以在无需人工干预的情况下运行作业. 由于Cron 是Linux的内置服务,但它不自动起来,可以用以下的方法启动.关闭这个服务: / ...

- 《Head First 设计模式》读后总结:基础,原则,模式

基础 抽象 封装 多态 继承 原则 封装变化 多用组合,少用继承 针对接口编程,不针对实现编程 为交互对象之间的松耦合设计而努力 类应该对扩展开放,对修改关闭 依赖抽象,不要依赖具体类 只和朋友交谈 ...

- 消除ADB错误“more than one device and emulator”的方法(转)

当我连着手机充电的时候,启动模拟器调试,执行ADB指令时,报错.C:\Users\gaojs>adb shellerror: more than one device and emulatorC ...

- Angular2 获取当前点击的元素

<a (click)="onClick($event)"></a> onClick($event){ console.log($event.target); ...