A from-scratch guide to getting the Hadoop 2.4 source code and attaching it in Eclipse

http://www.aboutyun.com/thread-8211-1-1.html

(Source: about云开发)

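Guiding questions:

1. How do you obtain the complete Hadoop code from the src package on the official site?

2. Which steps let you view the implementation of a Hadoop function or class?

3. What is Maven's role?

If you want to do development, studying the source code helps a great deal. Without understanding the principles, the system is a black box, and when a problem comes up you have no idea where to start. So this article covers:

I. How to get the source code

II. How to attach the source code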

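I. How to get the source code

1. Download the Hadoop Maven source package

(1) Download from the official site

First, download the Maven source package hadoop-2.4.0-src.tar.gz from the official site.

Official download address

If you don't know how to download from the official site, see: "Beginner's guide: an introduction to the Hadoop website, how to download each Hadoop (2.4) release, and how to browse the Hadoop API"

(2) Download from a network drive

The package can also be downloaded from a network drive: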

http://pan.baidu.com/s/1kToPuGB

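2. Get the source through Maven

There are two ways to get the source: from the command line, or through Eclipse. This article mainly covers the command line.

Getting the source from the command line: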

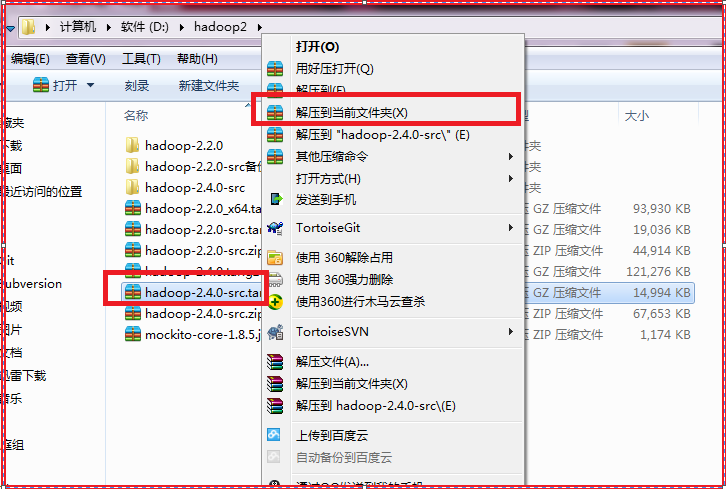

1. Extract the package

Extracting the package produced the errors below. They can be ignored: every failing entry is a compiled .class file under a target directory, not a source file, so we carry on.

1 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-applicationhistoryservice\target\classes\org\apache\hadoop\yarn\server\applicationhistoryservice\ApplicationHistoryClientService$ApplicationHSClientProtocolHandler.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

2 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-applicationhistoryservice\target\classes\org\apache\hadoop\yarn\server\applicationhistoryservice\timeline\LeveldbTimelineStore$LockMap$CountingReentrantLock.class: The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

3 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-applicationhistoryservice\target\test-classes\org\apache\hadoop\yarn\server\applicationhistoryservice\webapp\TestAHSWebApp$MockApplicationHistoryManagerImpl.class: The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

4 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\monitor\capacity\TestProportionalCapacityPreemptionPolicy$IsPreemptionRequestFor.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

5 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\recovery\TestFSRMStateStore$TestFSRMStateStoreTester$TestFileSystemRMStore.class: The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

6 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\recovery\TestZKRMStateStore$TestZKRMStateStoreTester$TestZKRMStateStoreInternal.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

7 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\recovery\TestZKRMStateStoreZKClientConnections$TestZKClient$TestForwardingWatcher.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

8 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\recovery\TestZKRMStateStoreZKClientConnections$TestZKClient$TestZKRMStateStore.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip

9 : Cannot create file: D:\hadoop2\hadoop-2.4.0-src\hadoop-yarn-project\hadoop-yarn\hadoop-yarn-server\hadoop-yarn-server-resourcemanager\target\test-classes\org\apache\hadoop\yarn\server\resourcemanager\rmapp\attempt\TestRMAppAttemptTransitions$TestApplicationAttemptEventDispatcher.class:

The total length of the path and file name must not exceed 260 characters

The system cannot find the path specified. D:\hadoop2\hadoop-2.4.0-src.zip
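The extraction errors come from the Windows path-length limit quoted in the messages. As a rough sketch (not part of the original tutorial), the Python helper below predicts which archive entries would hit that limit for a given destination folder. The function name `too_long_entries` and the constant are illustrative assumptions; the entry names could come from `zipfile.ZipFile(...).namelist()`.

```python
import ntpath

# Limit quoted in the error messages above. On Windows the classic MAX_PATH
# value counts the terminating NUL, so a 260-character path is already too long.
WINDOWS_MAX_PATH = 260


def too_long_entries(entry_names, dest_dir, limit=WINDOWS_MAX_PATH):
    """Return the full extracted paths that would reach or exceed the limit."""
    flagged = []
    for name in entry_names:
        # Archive entries use '/', Windows paths use '\'.
        full_path = ntpath.join(dest_dir, name.replace("/", "\\"))
        if len(full_path) >= limit:
            flagged.append(full_path)
    return flagged
```

Extracting to a shorter destination such as a drive root lowers the chance of hitting the limit, although very deep entries may still fail.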

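2. Get the source through Maven

Note that before using Maven you need to install the JDK and protoc. If they are not installed yet, see "How to install Maven on Win7" and "Installing protoc".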
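Before running Maven it can help to confirm that the prerequisites are reachable from the command line. The following is a minimal sketch, assuming the tools are exposed on PATH as `javac`, `mvn` and `protoc`; the helper name `missing_tools` is hypothetical.

```python
import shutil


def missing_tools(tools=("javac", "mvn", "protoc"), which=shutil.which):
    """Return the tools from `tools` that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]
```

An empty list means all three commands were found; it does not check their versions.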

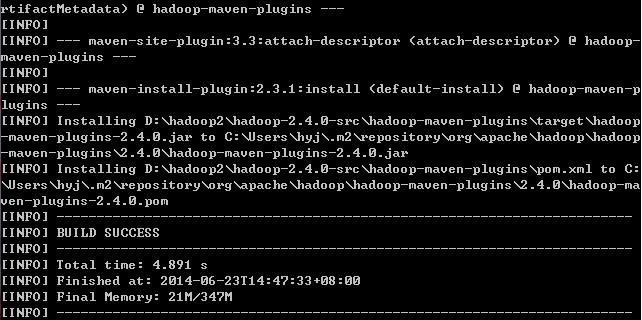

(1) Go into hadoop-2.4.0-src\hadoop-maven-plugins and run mvn install

- D:\hadoop2\hadoop-2.4.0-src\hadoop-maven-plugins>mvn install

The following output appears:

- [INFO] Scanning for projects...

- [WARNING]

- [WARNING] Some problems were encountered while building the effective model for org.apache.hadoop:hadoop-maven-plugins:maven-plugin:2.4.0

- [WARNING] 'build.plugins.plugin.(groupId:artifactId)' must be unique but found duplicate declaration of plugin org.apache.maven.plugins:maven-enforcer-plugin @ org.apache.hadoop:hadoop-project:2.4.0, D:\hadoop2\hadoop-2.4.0-src\hadoop-project\pom.xml, line 1015, column 15

- [WARNING]

- [WARNING] It is highly recommended to fix these problems because they threaten the stability of your build.

- [WARNING]

- [WARNING] For this reason, future Maven versions might no longer support building such malformed projects.

- [WARNING]

- [INFO]

- [INFO] Using the builder org.apache.maven.lifecycle.internal.builder.singlethreaded.SingleThreadedBuilder with a thread count of 1

- [INFO]

- [INFO] ------------------------------------------------------------------------

- [INFO] Building Apache Hadoop Maven Plugins 2.4.0

- [INFO] ------------------------------------------------------------------------

- [INFO]

- [INFO] --- maven-antrun-plugin:1.7:run (create-testdirs) @ hadoop-maven-plugins ---

- [INFO] Executing tasks

- main:

- [INFO] Executed tasks

- [INFO]

- [INFO] --- maven-plugin-plugin:3.0:descriptor (default-descriptor) @ hadoop-maven-plugins ---

- [INFO] Using 'UTF-8' encoding to read mojo metadata.

- [INFO] Applying mojo extractor for language: java-annotations

- [INFO] Mojo extractor for language: java-annotations found 2 mojo descriptors.

- [INFO] Applying mojo extractor for language: java

- [INFO] Mojo extractor for language: java found 0 mojo descriptors.

- [INFO] Applying mojo extractor for language: bsh

- [INFO] Mojo extractor for language: bsh found 0 mojo descriptors.

- [INFO]

- [INFO] --- maven-resources-plugin:2.2:resources (default-resources) @ hadoop-maven-plugins ---

- [INFO] Using default encoding to copy filtered resources.

- [INFO]

- [INFO] --- maven-compiler-plugin:2.5.1:compile (default-compile) @ hadoop-maven-plugins ---

- [INFO] Nothing to compile - all classes are up to date

- [INFO]

- [INFO] --- maven-plugin-plugin:3.0:descriptor (mojo-descriptor) @ hadoop-maven-plugins ---

- [INFO] Using 'UTF-8' encoding to read mojo metadata.

- [INFO] Applying mojo extractor for language: java-annotations

- [INFO] Mojo extractor for language: java-annotations found 2 mojo descriptors.

- [INFO] Applying mojo extractor for language: java

- [INFO] Mojo extractor for language: java found 0 mojo descriptors.

- [INFO] Applying mojo extractor for language: bsh

- [INFO] Mojo extractor for language: bsh found 0 mojo descriptors.

- [INFO]

- [INFO] --- maven-resources-plugin:2.2:testResources (default-testResources) @ hadoop-maven-plugins ---

- [INFO] Using default encoding to copy filtered resources.

- [INFO]

- [INFO] --- maven-compiler-plugin:2.5.1:testCompile (default-testCompile) @ hadoop-maven-plugins ---

- [INFO] No sources to compile

- [INFO]

- [INFO] --- maven-surefire-plugin:2.16:test (default-test) @ hadoop-maven-plugins ---

- [INFO] No tests to run.

- [INFO]

- [INFO] --- maven-jar-plugin:2.3.1:jar (default-jar) @ hadoop-maven-plugins ---

- [INFO] Building jar: D:\hadoop2\hadoop-2.4.0-src\hadoop-maven-plugins\target\hadoop-maven-plugins-2.4.0.jar

- [INFO]

- [INFO] --- maven-plugin-plugin:3.0:addPluginArtifactMetadata (default-addPluginArtifactMetadata) @ hadoop-maven-plugins ---

- [INFO]

- [INFO] --- maven-site-plugin:3.3:attach-descriptor (attach-descriptor) @ hadoop-maven-plugins ---

- [INFO]

- [INFO] --- maven-install-plugin:2.3.1:install (default-install) @ hadoop-maven-plugins ---

- [INFO] Installing D:\hadoop2\hadoop-2.4.0-src\hadoop-maven-plugins\target\hadoop-maven-plugins-2.4.0.jar to C:\Users\hyj\.m2\repository\org\apache\hadoop\hadoop-maven-plugins\2.4.0\hadoop-maven-plugins-2.4.0.jar

- [INFO] Installing D:\hadoop2\hadoop-2.4.0-src\hadoop-maven-plugins\pom.xml to C:\Users\hyj\.m2\repository\org\apache\hadoop\hadoop-maven-plugins\2.4.0\hadoop-maven-plugins-2.4.0.pom

- [INFO] ------------------------------------------------------------------------

- [INFO] BUILD SUCCESS

- [INFO] ------------------------------------------------------------------------

- [INFO] Total time: 4.891 s

- [INFO] Finished at: 2014-06-23T14:47:33+08:00

- [INFO] Final Memory: 21M/347M

- [INFO] ------------------------------------------------------------------------

(2) Run

- mvn eclipse:eclipse -DskipTests

Note that this command is run from hadoop_home, which on my machine is D:\hadoop2\hadoop-2.4.0-src
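The two Maven invocations and their working directories can be captured in a small script. This is only a sketch of the sequence described here, not an official build script: the function names are made up for illustration, and it assumes `mvn` is on PATH.

```python
import os
import shutil
import subprocess


def maven_steps(src_root):
    """Return (working directory, mvn arguments) pairs in the order used above."""
    return [
        (os.path.join(src_root, "hadoop-maven-plugins"), ["install"]),
        (src_root, ["eclipse:eclipse", "-DskipTests"]),
    ]


def run_maven_steps(src_root):
    """Run each step in turn and stop at the first failure."""
    mvn = shutil.which("mvn")  # also resolves mvn.cmd / mvn.bat on Windows
    if mvn is None:
        raise FileNotFoundError("mvn was not found on PATH")
    for cwd, args in maven_steps(src_root):
        subprocess.run([mvn, *args], cwd=cwd, check=True)
```

Calling `run_maven_steps(r"D:\hadoop2\hadoop-2.4.0-src")` would mirror the manual steps for the directory used in this article.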

Part of the output:

- [INFO]

- [INFO] ------------------------------------------------------------------------

- [INFO] Reactor Summary:

- [INFO]

- [INFO] Apache Hadoop Main ................................ SUCCESS [ 0.684 s]

- [INFO] Apache Hadoop Project POM ......................... SUCCESS [ 0.720 s]

- [INFO] Apache Hadoop Annotations ......................... SUCCESS [ 0.276 s]

- [INFO] Apache Hadoop Project Dist POM .................... SUCCESS [ 0.179 s]

- [INFO] Apache Hadoop Assemblies .......................... SUCCESS [ 0.121 s]

- [INFO] Apache Hadoop Maven Plugins ....................... SUCCESS [ 1.680 s]

- [INFO] Apache Hadoop MiniKDC ............................. SUCCESS [ 1.802 s]

- [INFO] Apache Hadoop Auth ................................ SUCCESS [ 1.024 s]

- [INFO] Apache Hadoop Auth Examples ....................... SUCCESS [ 0.160 s]

- [INFO] Apache Hadoop Common .............................. SUCCESS [ 1.061 s]

- [INFO] Apache Hadoop NFS ................................. SUCCESS [ 0.489 s]

- [INFO] Apache Hadoop Common Project ...................... SUCCESS [ 0.056 s]

- [INFO] Apache Hadoop HDFS ................................ SUCCESS [ 2.770 s]

- [INFO] Apache Hadoop HttpFS .............................. SUCCESS [ 0.965 s]

- [INFO] Apache Hadoop HDFS BookKeeper Journal ............. SUCCESS [ 0.629 s]

- [INFO] Apache Hadoop HDFS-NFS ............................ SUCCESS [ 0.284 s]

- [INFO] Apache Hadoop HDFS Project ........................ SUCCESS [ 0.061 s]

- [INFO] hadoop-yarn ....................................... SUCCESS [ 0.052 s]

- [INFO] hadoop-yarn-api ................................... SUCCESS [ 0.842 s]

- [INFO] hadoop-yarn-common ................................ SUCCESS [ 0.322 s]

- [INFO] hadoop-yarn-server ................................ SUCCESS [ 0.065 s]

- [INFO] hadoop-yarn-server-common ......................... SUCCESS [ 0.972 s]

- [INFO] hadoop-yarn-server-nodemanager .................... SUCCESS [ 0.580 s]

- [INFO] hadoop-yarn-server-web-proxy ...................... SUCCESS [ 0.379 s]

- [INFO] hadoop-yarn-server-applicationhistoryservice ...... SUCCESS [ 0.281 s]

- [INFO] hadoop-yarn-server-resourcemanager ................ SUCCESS [ 0.378 s]

- [INFO] hadoop-yarn-server-tests .......................... SUCCESS [ 0.534 s]

- [INFO] hadoop-yarn-client ................................ SUCCESS [ 0.307 s]

- [INFO] hadoop-yarn-applications .......................... SUCCESS [ 0.050 s]

- [INFO] hadoop-yarn-applications-distributedshell ......... SUCCESS [ 0.202 s]

- [INFO] hadoop-yarn-applications-unmanaged-am-launcher .... SUCCESS [ 0.194 s]

- [INFO] hadoop-yarn-site .................................. SUCCESS [ 0.057 s]

- [INFO] hadoop-yarn-project ............................... SUCCESS [ 0.066 s]

- [INFO] hadoop-mapreduce-client ........................... SUCCESS [ 0.091 s]

- [INFO] hadoop-mapreduce-client-core ...................... SUCCESS [ 1.321 s]

- [INFO] hadoop-mapreduce-client-common .................... SUCCESS [ 0.786 s]

- [INFO] hadoop-mapreduce-client-shuffle ................... SUCCESS [ 0.456 s]

- [INFO] hadoop-mapreduce-client-app ....................... SUCCESS [ 0.508 s]

- [INFO] hadoop-mapreduce-client-hs ........................ SUCCESS [ 0.834 s]

- [INFO] hadoop-mapreduce-client-jobclient ................. SUCCESS [ 0.541 s]

- [INFO] hadoop-mapreduce-client-hs-plugins ................ SUCCESS [ 0.284 s]

- [INFO] Apache Hadoop MapReduce Examples .................. SUCCESS [ 0.851 s]

- [INFO] hadoop-mapreduce .................................. SUCCESS [ 0.099 s]

- [INFO] Apache Hadoop MapReduce Streaming ................. SUCCESS [ 0.742 s]

- [INFO] Apache Hadoop Distributed Copy .................... SUCCESS [ 0.335 s]

- [INFO] Apache Hadoop Archives ............................ SUCCESS [ 0.397 s]

- [INFO] Apache Hadoop Rumen ............................... SUCCESS [ 0.371 s]

- [INFO] Apache Hadoop Gridmix ............................. SUCCESS [ 0.230 s]

- [INFO] Apache Hadoop Data Join ........................... SUCCESS [ 0.184 s]

- [INFO] Apache Hadoop Extras .............................. SUCCESS [ 0.217 s]

- [INFO] Apache Hadoop Pipes ............................... SUCCESS [ 0.048 s]

- [INFO] Apache Hadoop OpenStack support ................... SUCCESS [ 0.244 s]

- [INFO] Apache Hadoop Client .............................. SUCCESS [ 0.590 s]

- [INFO] Apache Hadoop Mini-Cluster ........................ SUCCESS [ 0.230 s]

- [INFO] Apache Hadoop Scheduler Load Simulator ............ SUCCESS [ 0.650 s]

- [INFO] Apache Hadoop Tools Dist .......................... SUCCESS [ 0.334 s]

- [INFO] Apache Hadoop Tools ............................... SUCCESS [ 0.042 s]

- [INFO] Apache Hadoop Distribution ........................ SUCCESS [ 0.144 s]

- [INFO] ------------------------------------------------------------------------

- [INFO] BUILD SUCCESS

- [INFO] ------------------------------------------------------------------------

- [INFO] Total time: 31.234 s

- [INFO] Finished at: 2014-06-23T14:55:08+08:00

- [INFO] Final Memory: 84M/759M

- [INFO] ------------------------------------------------------------------------


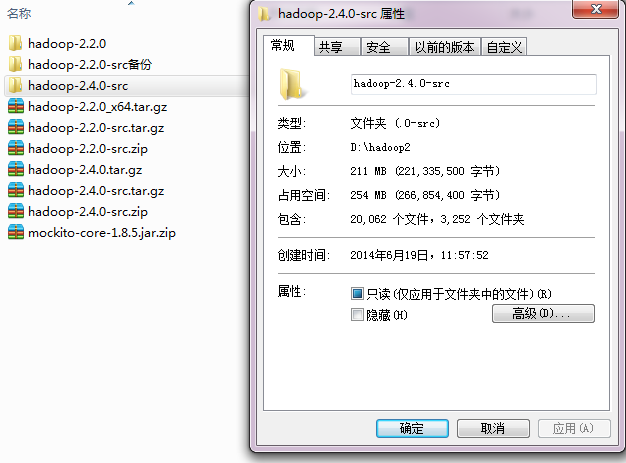

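At this point the source is in place: Maven has generated the Eclipse project files for each module, and the source directory is noticeably larger than before.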
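With this many modules it is easy to miss a failed one in the Reactor Summary. The sketch below scans captured Maven output for summary lines whose status is not SUCCESS. The regular expression is an assumption based on the line layout shown in this article's log, and the function name is hypothetical.

```python
import re

# Matches e.g. "[INFO] Apache Hadoop Main ........ SUCCESS [ 0.684 s]"
SUMMARY_LINE = re.compile(
    r"^\[INFO\]\s+(?P<module>.+?)\s+\.{2,}\s+(?P<status>[A-Z]+)\b"
)


def non_successful_modules(log_text):
    """Return (module, status) pairs for reactor modules that did not succeed."""
    failures = []
    for raw_line in log_text.splitlines():
        # Drop the "- " list prefix used in this article's log listing.
        line = raw_line.strip().lstrip("- ")
        match = SUMMARY_LINE.match(line)
        if match and match.group("status") != "SUCCESS":
            failures.append((match.group("module"), match.group("status")))
    return failures
```

For the log shown here the function would return an empty list, since every module reports SUCCESS.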


3. Attach the source in Eclipse

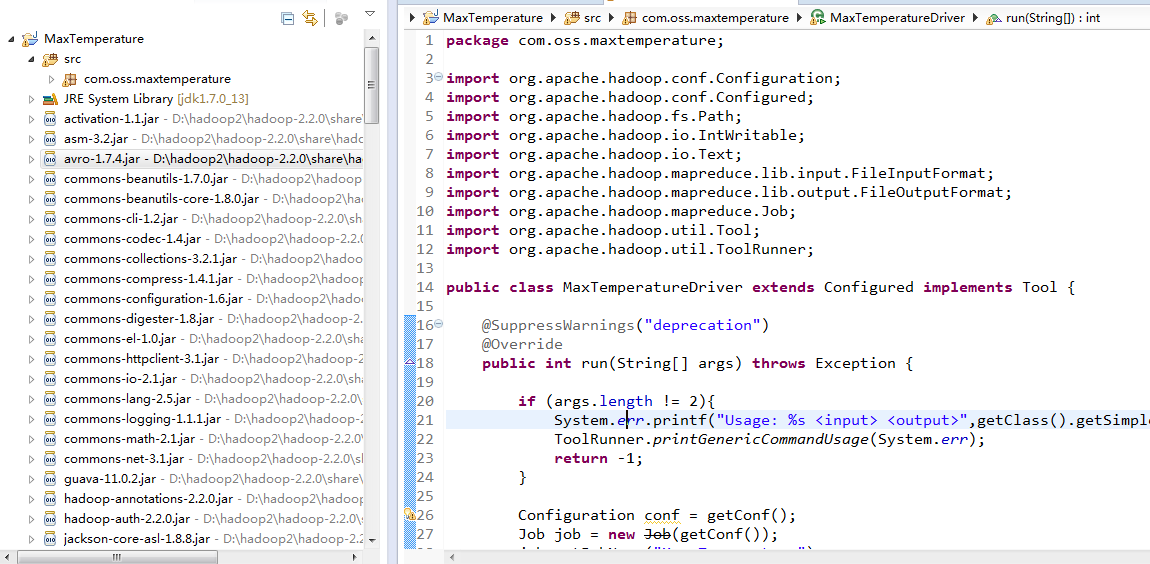

Suppose we have the following program:

hadoop2.2mapreduce例子.rar (1.14 MB)

As shown below, the files are packaged together:

Of the two files, MaxTemperature.zip is the MapReduce example and mockito-core-1.8.5.jar is the library that the example references.

(Note that the MapReduce example targets 2.2, but this does not affect attaching the source; it only serves to show how the source is attached.)

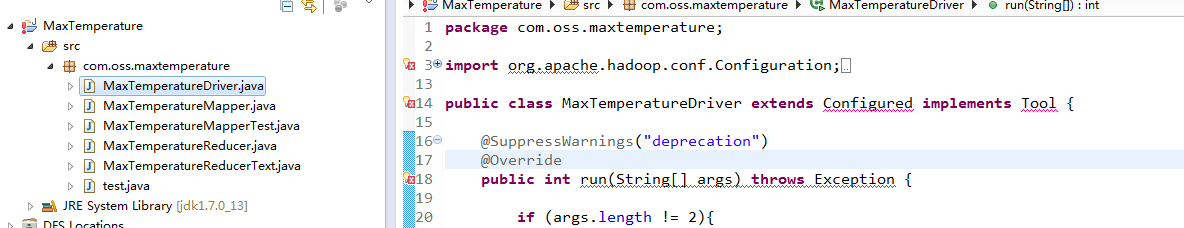

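After extracting the archive, import the project into Eclipse.

(If you are not familiar with importing projects, see "A zero-basics guide to importing an Eclipse project".)

After the import we see many red underlines. They are caused by missing library references, so next we resolve these errors.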

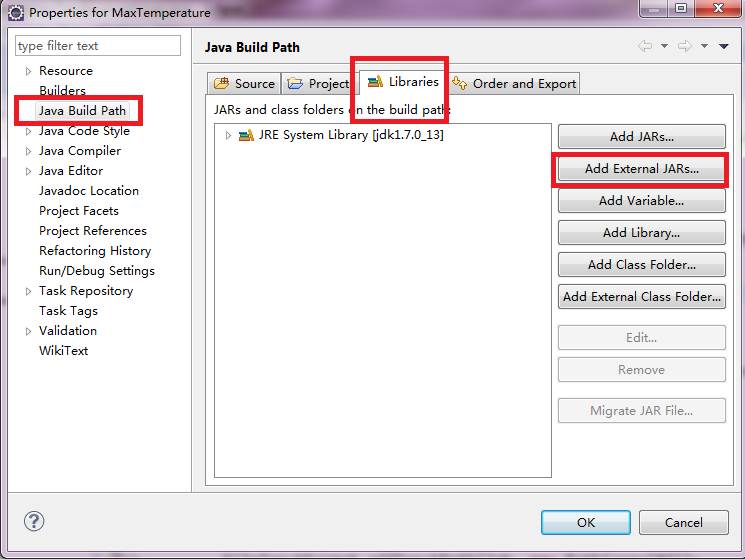

I. Import the required JARs

(1) Add mockito-core-1.8.5.jar

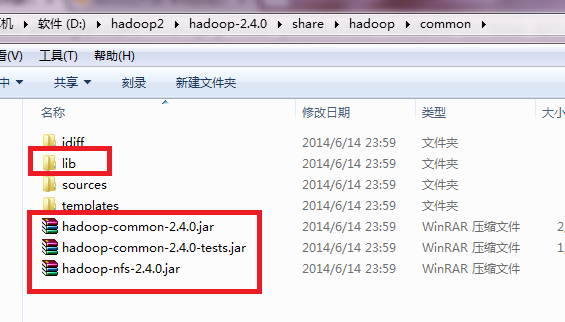

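(2) Add the JAR files from the compiled Hadoop 2.4 distribution, which are located as follows:

Under the share\hadoop folder in hadoop_home; on my machine that is D:\hadoop2\hadoop-2.4.0\share\hadoop

Find the JARs inside it. For example, add the JARs in each lib folder, as well as the JARs in the folders alongside them, to the build path.

If you don't know how to add these JARs as references, see "hadoop开发方式总结及操作指导" (a summary of Hadoop development approaches with step-by-step instructions).

(Note that what we add here comes from the compiled distribution. The compiled package to download is hadoop--642.4.0.tar.gz

Link: http://pan.baidu.com/s/1c0vPjG0 Password: xj6l)

For more downloads, see "Collected downloads of JARs and installers for the Hadoop family, Storm, Spark, Linux, Flume and more".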
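To see which JARs the build path needs, the share\hadoop folder can be walked recursively. This is a small sketch, assuming the folder layout described above; `collect_jars` is a hypothetical helper name.

```python
from pathlib import Path


def collect_jars(share_hadoop_dir):
    """Return every .jar under the given folder, including the lib subfolders."""
    root = Path(share_hadoop_dir)
    return sorted(path for path in root.rglob("*.jar") if path.is_file())
```

For example, `collect_jars(r"D:\hadoop2\hadoop-2.4.0\share\hadoop")` would list the candidates to add in Eclipse for the installation used in this article.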

II. Attach the source

1. Once the JARs are imported, the errors disappear, as shown below

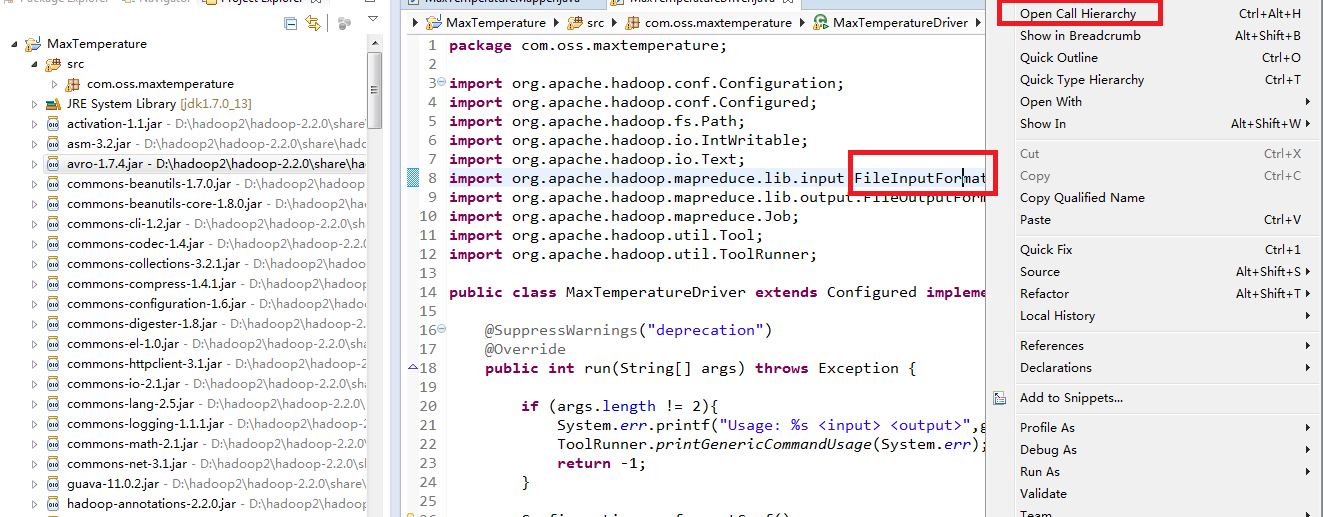

2. Source not found

When we want to see how a class or function is implemented and use Open Call Hierarchy, the source file cannot be found.

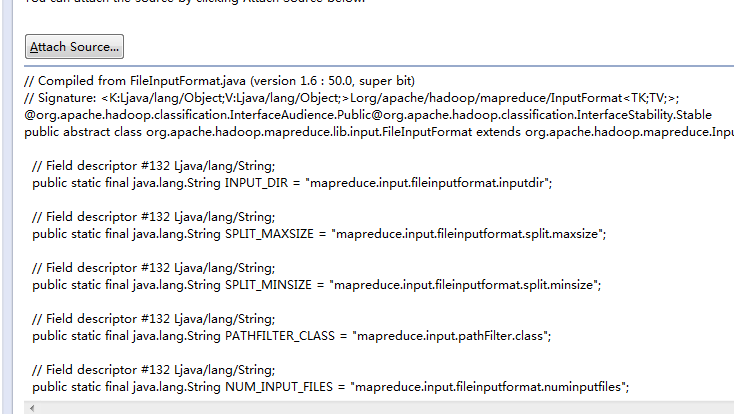

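3. Attach Source

Fill in the three places marked in the figure above, in order. After selecting the archive, click OK, and the work is done.

Note: hadoop-2.2.0-src.zip is the source obtained through Maven above, packed into an archive. Remember that it has to be supplied as a zip archive.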
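Packing the source tree into a zip can be done with any archiver. As one possible sketch, Python's standard `shutil.make_archive` does it in a single call; the wrapper name `zip_source_tree` is an assumption for illustration.

```python
import shutil


def zip_source_tree(src_dir, archive_base):
    """Create <archive_base>.zip from the contents of src_dir and return its path."""
    return shutil.make_archive(archive_base, "zip", root_dir=src_dir)
```

Keep `archive_base` outside `src_dir` so the archive does not end up packing itself.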

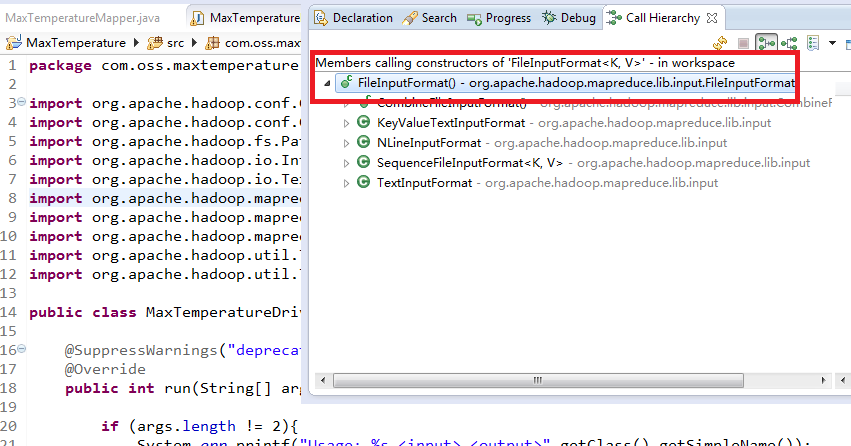

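4. Verify that the source is visible after attaching

Repeat the operation above with Open Call Hierarchy

and we see the following.

Then double-click the main class in the figure above (the part in red), and we see the following:

Question:

Attentive readers may notice a problem here: what we are viewing is a .class file, not a .java file. Could it differ from the .java file we would otherwise see?

It is in fact the same. Interested readers can verify this for themselves.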
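One way to start that check is to confirm that the attached archive really contains the .java file for the class being viewed. The sketch below maps a fully qualified class name to the path it should have inside the zip; the function name is hypothetical, and matching on the path suffix is an assumption about how the archive is laid out.

```python
import zipfile


def source_entries_for(zip_path, class_name):
    """List .java entries in the archive that match a fully qualified class name."""
    # Nested classes (Outer$Inner) live in the outer class's .java file.
    outer_class = class_name.split("$", 1)[0]
    suffix = outer_class.replace(".", "/") + ".java"
    with zipfile.ZipFile(zip_path) as archive:
        return [
            name
            for name in archive.namelist()
            if name == suffix or name.endswith("/" + suffix)
        ]
```

The matching entry can then be compared with what Eclipse displays for the .class file.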

Next article:

How to view and read the Hadoop 2.4 source code in Eclipse
