NEURAL NETWORKS, PART 1: BACKGROUND

NEURAL NETWORKS, PART 1: BACKGROUND

Artificial neural networks (NN for short) are practical, elegant, and mathematically fascinating models for machine learning. They are inspired by the central nervous systems of humans and animals – smaller processing units (neurons) are connected together to form a complex network that is capable of learning and adapting.

The idea of such neural networks is not new. McCulloch-Pitts (1943) described binary threshold neurons already back in 1940’s. Rosenblatt (1958) popularised the use of perceptrons, a specific type of neurons, as very flexible tools for performing a variety of tasks. The rise of neural networks was halted after Minsky and Papert (1969) published a book about the capabilities of perceptrons, and mathematically proved that they can’t really do very much. This result was quickly generalised to all neural networks, whereas it actually applied only to a specific type of perceptrons, leading to neural networks being disregarded as a viable machine learning method.

In recent years, however, the neural network has made an impressive comeback. Research in the area has become much more active, and neural networks have been found to be more than capable learners, breaking state-of-the-art results on a wide variety of tasks. This has been substantially helped by developments in computing hardware, allowing us to train very large complex networks in reasonable time. In order to learn more about neural networks, we must first understand the concept of vector space, and this is where we’ll start.

A vector space is a space where we can represent the position of a specific point or object as a vector (a sequence of numbers). You’re probably familiar with 2 or 3-dimensional coordinate systems, but we can easily extend this to much higher dimensions (think hundreds or thousands). However, it’s quite difficult to imagine a 1000-dimensional space, so we’ll stick to 2-dimensional illustations.

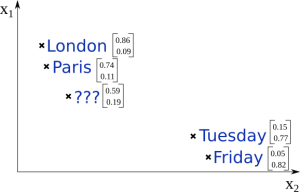

In the graph below, we have placed 4 objects in a 2-dimensional space, and each of them has a 2-dimensional vector that represents their position in this space. For machine learning and classification we make the assumption that similar objects have similar coordinates and are therefore positioned close to each other. This is true in our example, as cities are positioned in the upper left corner, and days-of-the-week are positioned a bit further in the lower right corner.

Let’s say we now get a new object (see image below) and all we know are its coordinates. What do you think, is this object a city or a day-of-the-week? It’s probably a city, because it is positioned much closer to other existing cities we already know about.

This is the kind of reasoning that machine learning tries to perform. Our example was very simple, but this problem gets more difficult when dealing with thousands of dimensions and millions of noisy datapoints.

In a traditional machine learning context these vectors are given as input to the classifier, both at training and testing time. However, there exist methods of representation learning where these vectors are learned automatically, together with the model.

Now that we know about vector spaces, it’s time to look at how the neuron works.

References

- McCulloch, Warren S., and Walter Pitts. “A logical calculus of the ideas immanent in nervous activity.” The Bulletin of Mathematical Biophysics 5.4 (1943): 115-133.

- Minsky, Marvin, and Papert Seymour. “Perceptrons.” (1969).

- Rosenblatt, Frank. “The perceptron: a probabilistic model for information storage and organization in the brain.” Psychological review 65.6 (1958): 386.

NEURAL NETWORKS, PART 1: BACKGROUND的更多相关文章

- 【转】Artificial Neurons and Single-Layer Neural Networks

原文:written by Sebastian Raschka on March 14, 2015 中文版译文:伯乐在线 - atmanic 翻译,toolate 校稿 This article of ...

- A Beginner's Guide To Understanding Convolutional Neural Networks(转)

A Beginner's Guide To Understanding Convolutional Neural Networks Introduction Convolutional neural ...

- Hacker's guide to Neural Networks

Hacker's guide to Neural Networks Hi there, I'm a CS PhD student at Stanford. I've worked on Deep Le ...

- 提高神经网络的学习方式Improving the way neural networks learn

When a golf player is first learning to play golf, they usually spend most of their time developing ...

- (转)A Beginner's Guide To Understanding Convolutional Neural Networks

Adit Deshpande CS Undergrad at UCLA ('19) Blog About A Beginner's Guide To Understanding Convolution ...

- 论文笔记之:Learning Multi-Domain Convolutional Neural Networks for Visual Tracking

Learning Multi-Domain Convolutional Neural Networks for Visual Tracking CVPR 2016 本文提出了一种新的CNN 框架来处理 ...

- 神经网络指南Hacker's guide to Neural Networks

Hi there, I'm a CS PhD student at Stanford. I've worked on Deep Learning for a few years as part of ...

- NEURAL NETWORKS, PART 2: THE NEURON

NEURAL NETWORKS, PART 2: THE NEURON A neuron is a very basic classifier. It takes a number of input ...

- [CVPR2015] Is object localization for free? – Weakly-supervised learning with convolutional neural networks论文笔记

p.p1 { margin: 0.0px 0.0px 0.0px 0.0px; font: 13.0px "Helvetica Neue"; color: #323333 } p. ...

随机推荐

- [D3] 12. Basic Transitions with D3

<!DOCTYPE html> <html> <head lang="en"> <meta charset="UTF-8&quo ...

- 如何判断Android系统的版本

随着Android版本的增多,在不同的版本中使用不同的设计是必须的,根据程序运行的版本来提供不同的功能.这涉及到如何在程序中判断Android系统的版本. 在Android api中的android. ...

- android 66 sharedperference的使用

package com.itheima.qqlogin; import java.io.BufferedReader; import java.io.File; import java.io.File ...

- CGI初识

---恢复内容开始--- 转自http://www.moon-soft.com/program/bbs/readelite887957.htm 用 C/C++ 写 CGI 程序 小传(zhcharle ...

- RedHat7配置IdM server

IdM服务器是一个集成身份验证服务器. Figure 1.1. The IdM Server: Unifying Services Authentication: Kerberos KDC Kerbe ...

- 项目打包 tomcat部署

IDE: IDEA 1.项目maven管理先执行 clean,再执行 compile 2.如果编译compile不成功,则将 C:\Users\Administrator\.m2\repository ...

- 两种隐藏元素方式【display: none】和【visibility: hidden】的区别

此随笔的灵感来源于上周的一个面试,在谈到隐藏元素的时候,面试官突然问我[display: none]和[visibility: hidden]的区别,我当时一愣,这俩有区别吗,好像有,但是忘记了啊,因 ...

- 自己做的demo---宣告可以在java世界开始自由了

package $interface; public interface ILeaveHome { public abstract int a(); public abstract int b(); ...

- myeclipse10 中修改html,servlet,jsp等的生成模板

1.进入myeclipse的安装目录 2.用减压软件,(如winrar)打开common\plugins\com.genuitec.eclipse.wizards_9.0.0.me2011080913 ...

- SQL使用存儲過程訪問不同服務器

用openrowset连接远程SQL或插入数据 --如果只是临时访问,可以直接用openrowset --查询示例 select * from openrowset('SQLOLEDB', 'sql服 ...