flink 安装及wordcount

1、下载

http://mirror.bit.edu.cn/apache/flink/

2、安装

确保已经安装java8以上 解压flink

tar zxvf flink-1.8.0-bin-scala_2.11.tgz 启动本地模式

$ ./bin/start-cluster.sh # Start Flink

[hadoop@bigdata-senior01 flink-1.8.0]$ ./bin/start-cluster.sh

Starting cluster.

Starting standalonesession daemon on host bigdata-senior01.home.com.

Starting taskexecutor daemon on host bigdata-senior01.home.com.

[hadoop@bigdata-senior01 flink-1.8.0]$ jps

1995 StandaloneSessionClusterEntrypoint

2443 TaskManagerRunner

2526 Jps

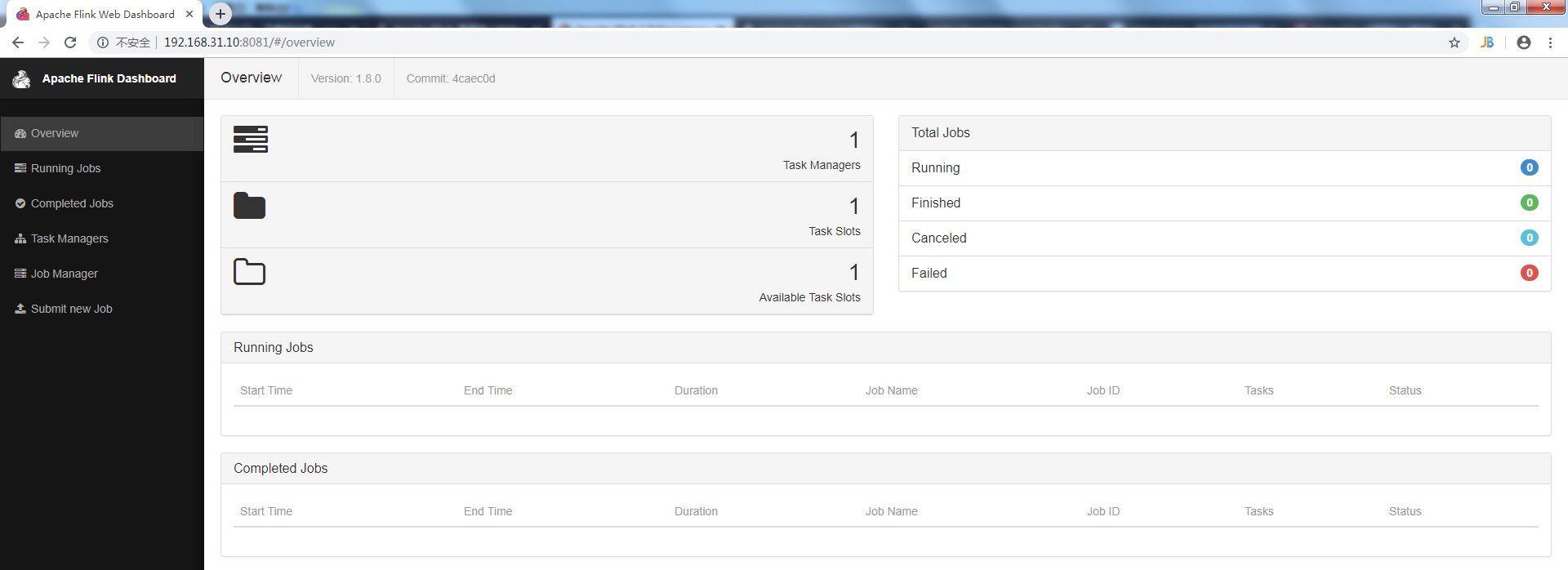

3、访问flink

http://localhost:8081

4、第一个程序wordcount,从一个socket流中读出字符串,计算10秒内的词频

4.1 引入依赖

<dependencies>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-clients_2.12</artifactId>

<version>1.8.0</version>

</dependency> <dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.12</artifactId>

<version>1.8.0</version>

<scope>provided</scope>

</dependency> </dependencies>

4.2 代码

public class SocketWindowWordCount {

public static void main(String args[]) throws Exception {

// the host and the port to connect to

final String hostname;

final int port;

try {

final ParameterTool params = ParameterTool.fromArgs(args);

hostname = params.has("hostname") ? params.get("hostname") : "localhost";

port = params.getInt("port");

} catch (Exception e) {

e.printStackTrace();

System.err.println(e.getMessage());

System.err.println("No port specified. Please run 'SocketWindowWordCount " +

"--hostname <hostname> --port <port>', where hostname (localhost by default) " +

"and port is the address of the text server");

System.err.println("To start a simple text server, run 'netcat -l <port>' and " +

"type the input text into the command line");

return;

}

// get the execution environment

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// get input data by connecting to the socket

DataStream<String> text = env.socketTextStream(hostname, port, "\n");

// parse the data, group it, window it, and aggregate the counts

DataStream<WordWithCount> windowCounts = text

.flatMap(new FlatMapFunction<String, WordWithCount>() {

@Override

public void flatMap(String value, Collector<WordWithCount> out) throws Exception {

for (String word : value.split("\\s")) {

out.collect(new WordWithCount(word,1L));

}

}

})

.keyBy("word")

.timeWindow(Time.seconds(10))

.reduce(new ReduceFunction<WordWithCount>() {

@Override

public WordWithCount reduce(WordWithCount value1, WordWithCount value2) throws Exception {

return new WordWithCount(value1.word,value1.count+value2.count);

}

});

// print the results with a single thread, rather than in parallel

windowCounts.print().setParallelism(1);

env.execute("Socket Window WordCount");

}

/**

* Data type for words with count.

*/

public static class WordWithCount {

public String word;

public long count;

public WordWithCount() {

}

public WordWithCount(String word, long count) {

this.word = word;

this.count = count;

}

@Override

public String toString() {

return word + " : " + count;

}

}

}

4.4 编译成jar包上传

先用nc启动侦听并接受连接 nc -lk 9000 启动SocketWindowWordCount

[hadoop@bigdata-senior01 bin]$ ./flink run /home/hadoop/SocketWindowWordCount.jar --port 9000 查看输出

[root@bigdata-senior01 log]# tail -f flink-hadoop-taskexecutor-0-bigdata-senior01.home.com.out

在nc端输入字符串,在日志监控端10秒为一个周期就可以看到输出合计。

flink 安装及wordcount的更多相关文章

- Flink单机版安装与wordCount

Flink为大数据处理工具,类似hadoop,spark.但它能够在大规模分布式系统中快速处理,与spark相似也是基于内存运算,并以低延迟性和高容错性主城,其核心特性是实时的处理流数据.从此大数据生 ...

- 第02讲:Flink 入门程序 WordCount 和 SQL 实现

我们右键运行时相当于在本地启动了一个单机版本.生产中都是集群环境,并且是高可用的,生产上提交任务需要用到flink run 命令,指定必要的参数. 本课时我们主要介绍 Flink 的入门程序以及 SQ ...

- Eclipse的下载、安装和WordCount的初步使用(本地模式和集群模式)

包括: Eclipse的下载 Eclipse的安装 Eclipse的使用 本地模式或集群模式 Scala IDE for Eclipse的下载.安装和WordCount的初步使用(本地模式和集群 ...

- IntelliJ IDEA的下载、安装和WordCount的初步使用(本地模式和集群模式)

包括: IntelliJ IDEA的下载 IntelliJ IDEA的安装 IntelliJ IDEA中的scala插件安装 用SBT方式来创建工程 或 选择Scala方式来创建工程 本地模式或集群 ...

- Hadoop-2.4.0安装和wordcount执行验证

Hadoop-2.4.0安装和wordcount执行验证 下面描写叙述了64位centos6.5机器下,安装32位hadoop-2.4.0,并通过执行 系统自带的WordCount样例来验证服务正确性 ...

- IntelliJ IDEA(Ultimate版本)的下载、安装和WordCount的初步使用(本地模式和集群模式)

不多说,直接上干货! IntelliJ IDEA号称当前Java开发效率最高的IDE工具.IntelliJ IDEA有两个版本:社区版(Community)和旗舰版(Ultimate).社区版时免费的 ...

- IntelliJ IDEA(Community版本)的下载、安装和WordCount的初步使用(本地模式和集群模式)

不多说,直接上干货! 对于初学者来说,建议你先玩玩这个免费的社区版,但是,一段时间,还是去玩专业版吧,这个很简单哈,学聪明点,去搞到途径激活!可以看我的博客. 包括: IntelliJ IDEA(Co ...

- 从flink-example分析flink组件(3)WordCount 流式实战及源码分析

前面介绍了批量处理的WorkCount是如何执行的 <从flink-example分析flink组件(1)WordCount batch实战及源码分析> <从flink-exampl ...

- 2、flink入门程序Wordcount和sql实现

一.DataStream Wordcount 代码地址:https://gitee.com/nltxwz_xxd/abc_bigdata 基于scala实现 maven依赖如下: <depend ...

随机推荐

- grep命令用法

linux中grep命令的用法 作为linux中最为常用的三大文本(awk,sed,grep)处理工具之一,掌握好其用法是很有必要的. 首先谈一下grep命令的常用格式为:grep [选项] ”模 ...

- 在 Oracle 中使用正则表达式

Oracle使用正则表达式离不开这4个函数: 1.regexp_like 2.regexp_substr 3.regexp_instr 4.regexp_replace 看函数名称大概就能猜到有什么用 ...

- Docker安装(二)

一.前提条件 目前,CentOS 仅发行版本中的内核支持 Docker. Docker 运行在 CentOS 7 上,要求系统为64位.系统内核版本为 3.10 以上. Docker 运行在 Cent ...

- RGB颜色查询

RGB颜色速查表 #FFFFFF #FFFFF0 #FFFFE0 #FFFF00 #FFFAFA #FFFAF0 #FFFACD #FFF8DC #FFF68F ...

- 11/5 <backtracking> 伪BFS+回溯

78. Subsets While iterating through all numbers, for each new number, we can either pick it or not p ...

- raid,磁盘配额,DNS综合测试题

DNS解析综合学习案例1.用户需把/dev/myvg/mylv逻辑卷以支持磁盘配额的方式挂载到网页目录下2.在网页目录下创建测试文件index.html,内容为用户名称,通过浏览器访问测试3.创建用户 ...

- 【CF1097F】Alex and a TV Show

[CF1097F]Alex and a TV Show 题面 洛谷 题解 我们对于某个集合中的每个\(i\),令\(f(i)\)表示\(i\)作为约数出现次数的奇偶性. 因为只要因为奇偶性只有\(0, ...

- [LeetCode] 905. Sort Array By Parity 按奇偶排序数组

Given an array A of non-negative integers, return an array consisting of all the even elements of A, ...

- oracle--错误笔记(二)--ORA-16014

ORA-16014错误解决办法 01.问题以及解决过程 SQL> select status from v$instance; STATUS ------------ MOUNTED SQL&g ...

- oracle --工具 ODU

一,什么是ODU ODU全称为Oracle Data ba se Unloader ,是用于Oracle 数据库紧急恢复的软件,在各种原因 造成的数据库不能打开或数据删除后没有备份时,使用ODU抢救数 ...