Natural Language 20_The corpora with NLTK

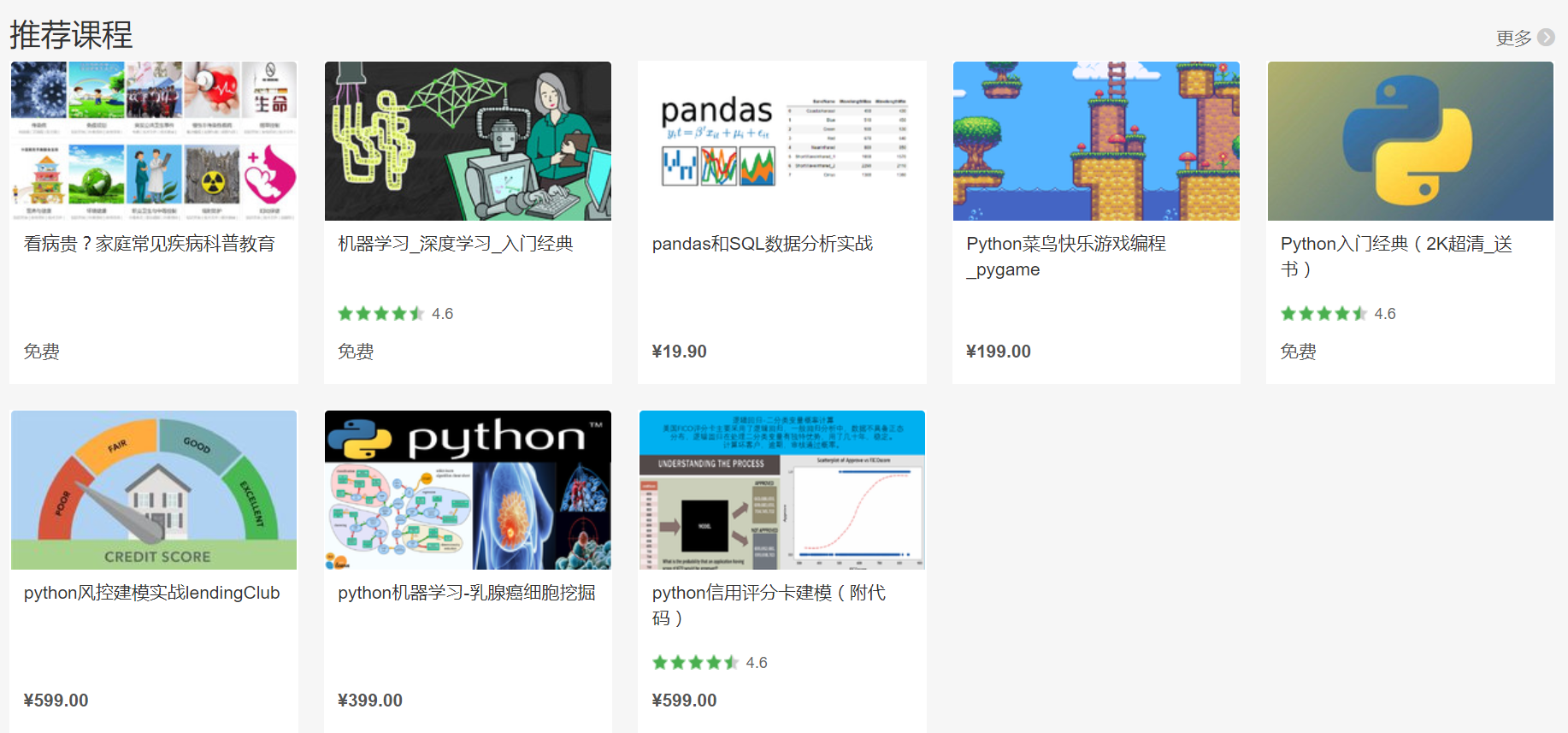


Machine learning and statistics project collaboration, QQ: 231469242

The corpora with NLTK

Code for finding the file paths

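```python
# -*- coding: utf-8 -*-
"""
Print where the NLTK package is installed and add the common
nltk_data locations (the same ones listed in nltk/data.py) to
NLTK's data search path.
"""
import os
import sys

import nltk

# Location of the NLTK package's __init__.py
print(nltk.__file__)

if sys.platform.startswith('win'):
    # Common locations on Windows:
    extra_paths = [
        r'C:\nltk_data', r'D:\nltk_data', r'E:\nltk_data',
        os.path.join(sys.prefix, 'nltk_data'),
        os.path.join(sys.prefix, 'lib', 'nltk_data'),
        os.path.join(os.environ.get('APPDATA', 'C:\\'), 'nltk_data'),
    ]
else:
    # Common locations on UNIX & OS X:
    extra_paths = [
        '/usr/share/nltk_data',
        '/usr/local/share/nltk_data',
        '/usr/lib/nltk_data',
        '/usr/local/lib/nltk_data',
    ]

# NLTK searches nltk.data.path for corpora; sys.path only controls where
# Python looks for modules, so extending sys.path has no effect on NLTK data.
nltk.data.path += [p for p in extra_paths if p not in nltk.data.path]
print(nltk.data.path)
```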
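Adding directories to the search path only helps if NLTK can then find the data there. The check below is a minimal sketch, assuming you want a True/False answer instead of an exception: it wraps `nltk.data.find`, which raises `LookupError` when a resource is missing from every directory in `nltk.data.path`. The helper name `has_resource` is hypothetical, not part of NLTK.

```python
def has_resource(resource_name):
    """Return True if NLTK can locate resource_name (e.g. 'corpora/gutenberg').

    has_resource is a hypothetical helper, not part of NLTK.
    """
    try:
        import nltk  # imported lazily so the helper also works without NLTK
    except ImportError:
        return False
    try:
        # nltk.data.find searches every directory in nltk.data.path
        # (it also tries zipped copies) and raises LookupError on failure.
        nltk.data.find(resource_name)
    except LookupError:
        return False
    return True


if __name__ == "__main__":
    for name in ("corpora/gutenberg", "corpora/wordnet"):
        print(name, has_resource(name))
```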

NLTK's corpus collection is a data set covering all kinds of language. Most corpora are stored as TXT text files; a few are XML or other formats.

In this part of the tutorial, I want us to take a moment to peek into the corpora we all downloaded! The NLTK corpus is a massive dump of all kinds of natural language data sets that are definitely worth taking a look at.

Almost all of the files in the NLTK corpus follow the same rules for accessing them by using the NLTK module, but nothing is magical about them. These files are plain text files for the most part, some are XML and some are other formats, but they are all accessible by you manually, or via the module and Python. Let's talk about viewing them manually.

Depending on your installation, your nltk_data directory might be hiding in a multitude of locations. To figure out where it is, head to your Python directory, where the NLTK module is. If you do not know where that is, use the following code:

```python
import nltk

print(nltk.__file__)
```

Run that, and the output will be the location of the NLTK module's __init__.py. Head into the NLTK directory, and then look for the data.py file.
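As an alternative to printing `nltk.__file__`, `importlib.util.find_spec` reports where a module lives and can point straight at `data.py`. This is a sketch; the helper name `locate_module_file` is hypothetical.

```python
import importlib.util


def locate_module_file(module_name):
    """Return the source file path of module_name, or None if it is not installed.

    The module itself is not executed, but for a dotted name such as
    'nltk.data' the parent package ('nltk') does get imported.
    """
    spec = importlib.util.find_spec(module_name)
    return spec.origin if spec is not None else None


if __name__ == "__main__":
    # If NLTK is installed this prints .../nltk/__init__.py and .../nltk/data.py;
    # otherwise it prints None and skips the dotted lookup.
    nltk_init = locate_module_file("nltk")
    print(nltk_init)
    if nltk_init is not None:
        print(locate_module_file("nltk.data"))
```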

The important snippet of code is:

```python
if sys.platform.startswith('win'):
    # Common locations on Windows:
    path += [
        str(r'C:\nltk_data'), str(r'D:\nltk_data'), str(r'E:\nltk_data'),
        os.path.join(sys.prefix, str('nltk_data')),
        os.path.join(sys.prefix, str('lib'), str('nltk_data')),
        os.path.join(os.environ.get(str('APPDATA'), str('C:\\')), str('nltk_data'))
    ]
else:
    # Common locations on UNIX & OS X:
    path += [
        str('/usr/share/nltk_data'),
        str('/usr/local/share/nltk_data'),
        str('/usr/lib/nltk_data'),
        str('/usr/local/lib/nltk_data')
    ]
```
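Only some of the directories in that list will exist on a given machine. A minimal sketch of checking which ones actually hold a `corpora` folder: `existing_corpora_dirs` is a hypothetical helper, and passing it `nltk.data.path` (NLTK's own search list, which includes the locations above plus entries such as `~/nltk_data` and the `NLTK_DATA` environment variable) assumes NLTK is installed.

```python
import os


def existing_corpora_dirs(base_dirs):
    """Return the '<base>/corpora' directories that actually exist, in search order.

    Hypothetical helper; pass nltk.data.path or any list of nltk_data candidates.
    """
    found = []
    for base in base_dirs:
        corpora = os.path.join(base, "corpora")
        if os.path.isdir(corpora):
            found.append(corpora)
    return found


if __name__ == "__main__":
    try:
        import nltk
    except ImportError:
        nltk = None
    if nltk is not None:
        print(existing_corpora_dirs(nltk.data.path))
    else:
        print("NLTK is not installed")
```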

In that data.py excerpt, you can see the various possible directories for nltk_data. If you're on Windows, chances are it is in your AppData directory. To get there, open your file browser, click in the address bar at the top, and type in %appdata%

This takes you straight to the Roaming folder (AppData\Roaming); find the nltk_data directory there. Inside it is your corpora folder. The full path is something like:

C:\Users\yourname\AppData\Roaming\nltk_data\corpora
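The %appdata% shortcut is simply the `APPDATA` environment variable, so the same folder can be computed in code. A minimal sketch; `windows_corpora_path` is a hypothetical helper, and it uses `ntpath` so the Windows-style path is joined with backslashes even when the snippet runs on another OS.

```python
import ntpath
import os


def windows_corpora_path(environ=None):
    """Return %APPDATA%\\nltk_data\\corpora, or None if APPDATA is not set.

    Hypothetical helper: it only builds the path, it does not check that it exists.
    """
    environ = os.environ if environ is None else environ
    appdata = environ.get("APPDATA")  # e.g. C:\Users\yourname\AppData\Roaming
    if not appdata:
        return None
    # ntpath always joins with backslashes, matching Windows paths
    return ntpath.join(appdata, "nltk_data", "corpora")


if __name__ == "__main__":
    path = windows_corpora_path()
    print(path, "(exists)" if path and os.path.isdir(path) else "(not found)")
```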


Within the corpora directory, you have all of the available corpora, including things like books, chat logs, movie reviews, and a whole lot more.
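The corpora folder can be surveyed with the standard library alone, which also shows how much of it is plain text. This sketch assumes the usual layout left by `nltk.download()`, where a corpus typically appears as `NAME.zip` and often also as an unzipped `NAME/` folder; the helper names and the `~/nltk_data/corpora` path in the usage block are assumptions.

```python
import os
from collections import Counter


def list_corpora(corpora_dir):
    """Return the sorted corpus names in corpora_dir.

    A corpus may be stored as NAME.zip, as an unzipped NAME/ folder, or both,
    so both forms are merged into one name.
    """
    names = set()
    for entry in os.listdir(corpora_dir):
        full = os.path.join(corpora_dir, entry)
        if os.path.isdir(full):
            names.add(entry)
        elif entry.endswith(".zip"):
            names.add(entry[: -len(".zip")])
    return sorted(names)


def count_extensions(corpora_dir):
    """Count files by extension (e.g. '.txt', '.xml', '.zip') below corpora_dir."""
    counts = Counter()
    for _root, _dirs, files in os.walk(corpora_dir):
        for name in files:
            ext = os.path.splitext(name)[1].lower() or "<none>"
            counts[ext] += 1
    return counts


if __name__ == "__main__":
    # Assumed location: replace with your own corpora directory
    corpora = os.path.expanduser("~/nltk_data/corpora")
    if os.path.isdir(corpora):
        print(list_corpora(corpora))
        print(count_extensions(corpora).most_common(5))
```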

Now, we're going to talk about accessing these documents via NLTK.

As you can see, these are mostly text documents, so you could just use normal Python code to open and read them. That said, the NLTK module has a few nice methods for handling the corpus, so you may find it useful to use its methodology. Here's an example of opening the King James Bible (bible-kjv.txt) from NLTK's Gutenberg corpus and printing its first few sentences:

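The Gutenberg Bible, also called the 42-line Bible, is a printing of the Latin Vulgate translation of the Bible, produced by Johannes Gutenberg in Mainz, Germany, between 1454 and 1455 using movable type. It is the most famous incunabulum, and its appearance marks the beginning of mass book production in the West. Note that NLTK's gutenberg corpus takes its name from Project Gutenberg, the free e-book archive, and bible-kjv.txt is the English King James Version, not the Gutenberg Bible itself.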

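```python
# -*- coding: utf-8 -*-
"""
Print the first five sentences of the King James Bible from NLTK's
Gutenberg corpus. Needs the data from nltk.download('gutenberg') and
nltk.download('punkt') ('punkt_tab' in newer NLTK releases).
"""
from nltk.tokenize import sent_tokenize
from nltk.corpus import gutenberg  # sample text

sample = gutenberg.raw("bible-kjv.txt")  # the whole file as one string
tok = sent_tokenize(sample)              # split it into a list of sentences

for x in range(5):
    print(tok[x])
```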
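For comparison, the "normal Python" route mentioned above needs nothing but `open()`. It returns lines rather than sentences, because sentence splitting is exactly what `sent_tokenize` adds. The helper name `read_first_lines` and the `~/nltk_data` location in the usage block are assumptions; point it at your own copy of bible-kjv.txt.

```python
import os
from itertools import islice


def read_first_lines(path, n=5, encoding="latin-1"):
    """Return the first n non-empty lines of a text file, read with plain Python.

    latin-1 maps every byte to a character, so decoding never fails;
    use the file's real encoding if you know it.
    """
    with open(path, encoding=encoding) as f:
        lines = (line.rstrip("\n") for line in f)
        return list(islice((line for line in lines if line.strip()), n))


if __name__ == "__main__":
    # Assumed location: adjust to wherever your nltk_data directory is
    path = os.path.join(os.path.expanduser("~"), "nltk_data", "corpora",
                        "gutenberg", "bible-kjv.txt")
    if os.path.exists(path):
        for line in read_first_lines(path):
            print(line)
```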

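What is AppData?

It holds files that programs need while they run, so deleting it is not recommended!

AppData has three subfolders: Local, LocalLow, and Roaming. When you extract an archive without specifying a destination, the system extracts it into Local\Temp, so that folder holds extracted files. Installers pull their data from here, and for some software, especially graphics programs, these files are very large and take up a lot of space. LocalLow stores shared data. Files in these two folders can be cleaned with a tool such as 优化大师 (Windows Optimization Master), which can generally remove files that are no longer needed.

The Roaming folder also stores data files generated by the programs you use, such as data cached from listening to music in Qzone (空间) or from the accounts you have logged in with; 优化大师 cannot clean these. You can open the Roaming folder, select all of its files, and delete them, choosing Skip for any that cannot be deleted, but once you use those programs again the folder starts growing again as they cache data. Deleting everything in Roaming would also delete the nltk_data folder described above.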


One of the more advanced data sets in here is "wordnet." WordNet is a collection of words, definitions, examples of their use, synonyms, antonyms, and more. We'll dive into using WordNet next.
