(Original) Understanding the TensorFlow code of Faster R-CNN

Please credit the source when reposting:

https://www.cnblogs.com/darkknightzh/p/10043864.html

References:

Paper: https://arxiv.org/abs/1506.01497

Third-party TensorFlow implementation of Faster R-CNN: https://github.com/endernewton/tf-faster-rcnn

IoU: https://www.cnblogs.com/darkknightzh/p/9043395.html

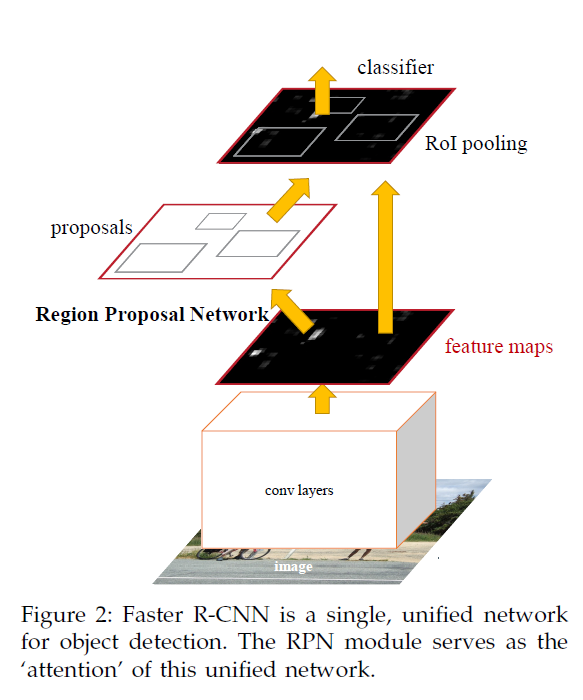

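Faster R-CNN consists of two main parts: the RPN network and the RCNN network. The RPN keeps the anchors that lie inside the image and labels each of them as a positive sample, a negative sample, or "don't care". After NMS it keeps at most 2000 anchors during training and 300 anchors at test time. In addition, the RPN hands 256 anchors to the RCNN network, which classifies them and regresses their box coordinates.

Network is the base class and vgg16 is a derived class that overrides _image_to_head and _head_to_tail of Network.

Only vgg16 is analyzed below.

The overall structure of the Faster R-CNN network is shown in the figure.

1. Training stage:

SolverWrapper uses construct_graph to create the network, train_op, and so on.

construct_graph creates the network through Network's create_architecture.

1.1 create_architecture

create_architecture uses _build_network to build the network model, the losses, and the other related ops, and obtains rois, cls_prob, and bbox_pred. It is defined as follows: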

def create_architecture(self, mode, num_classes, tag=None, anchor_scales=(8, 16, 32), anchor_ratios=(0.5, 1, 2)):
    self._image = tf.placeholder(tf.float32, shape=[1, None, None, 3])  # image height and width vary, so the 2nd and 3rd dimensions are None
    self._im_info = tf.placeholder(tf.float32, shape=[3])  # image info: height, width, and the scale factor (the smaller of the ratios that bring the shorter side to 600 or the longer side to 1000)
    self._gt_boxes = tf.placeholder(tf.float32, shape=[None, 5])  # ground-truth boxes: the first four values are the position, the last is the class of the box (see roi_data_layer/minibatch.py/get_minibatch)

    self._tag = tag
    self._num_classes = num_classes
    self._mode = mode
    self._anchor_scales = anchor_scales
    self._num_scales = len(anchor_scales)
    self._anchor_ratios = anchor_ratios
    self._num_ratios = len(anchor_ratios)
    self._num_anchors = self._num_scales * self._num_ratios  # self._num_anchors=9

    training = mode == 'TRAIN'
    testing = mode == 'TEST'

    weights_regularizer = tf.contrib.layers.l2_regularizer(cfg.TRAIN.WEIGHT_DECAY)  # handle most of the regularizers here
    if cfg.TRAIN.BIAS_DECAY:
        biases_regularizer = weights_regularizer
    else:
        biases_regularizer = tf.no_regularizer

    # list as many types of layers as possible, even if they are not used now
    with arg_scope([slim.conv2d, slim.conv2d_in_plane, slim.conv2d_transpose, slim.separable_conv2d, slim.fully_connected],
                   weights_regularizer=weights_regularizer, biases_regularizer=biases_regularizer, biases_initializer=tf.constant_initializer(0.0)):
        # rois: the sampled RoIs (256 in training); column 0 is the batch index (all 0), columns 1-4 are the box
        # cls_prob: per-class probabilities of the 256 anchors
        # bbox_pred: predicted position offsets
        rois, cls_prob, bbox_pred = self._build_network(training)

    layers_to_output = {'rois': rois}

    for var in tf.trainable_variables():
        self._train_summaries.append(var)

    if testing:
        stds = np.tile(np.array(cfg.TRAIN.BBOX_NORMALIZE_STDS), (self._num_classes))
        means = np.tile(np.array(cfg.TRAIN.BBOX_NORMALIZE_MEANS), (self._num_classes))
        self._predictions["bbox_pred"] *= stds  # in training the offsets handled in _region_proposal have the mean subtracted and are divided by the std, so testing must undo this
        self._predictions["bbox_pred"] += means
    else:
        self._add_losses()
        layers_to_output.update(self._losses)

        val_summaries = []
        with tf.device("/cpu:0"):
            val_summaries.append(self._add_gt_image_summary())
            for key, var in self._event_summaries.items():
                val_summaries.append(tf.summary.scalar(key, var))
            for key, var in self._score_summaries.items():
                self._add_score_summary(key, var)
            for var in self._act_summaries:
                self._add_act_summary(var)
            for var in self._train_summaries:
                self._add_train_summary(var)

        self._summary_op = tf.summary.merge_all()
        self._summary_op_val = tf.summary.merge(val_summaries)

    layers_to_output.update(self._predictions)

    return layers_to_output
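
To make the test-time branch of create_architecture concrete, here is a minimal NumPy sketch of the normalize / de-normalize round trip. The means (0, 0, 0, 0) and stds (0.1, 0.1, 0.2, 0.2) are assumed values for cfg.TRAIN.BBOX_NORMALIZE_MEANS and cfg.TRAIN.BBOX_NORMALIZE_STDS, and num_classes = 3 and the raw targets are made up for illustration; check your own config before relying on them.

```python
import numpy as np

# Assumed config values (not taken from the post); num_classes is hypothetical.
BBOX_NORMALIZE_MEANS = (0.0, 0.0, 0.0, 0.0)
BBOX_NORMALIZE_STDS = (0.1, 0.1, 0.2, 0.2)
num_classes = 3

# One row of raw regression targets: (dx, dy, dw, dh) repeated for every class.
raw = np.array([[0.05, -0.02, 0.10, 0.30] * num_classes], dtype=np.float32)

# Same tiling as in create_architecture: one (mean, std) quadruple per class.
means = np.tile(np.array(BBOX_NORMALIZE_MEANS), num_classes)
stds = np.tile(np.array(BBOX_NORMALIZE_STDS), num_classes)

normalized = (raw - means) / stds       # what the network learns to predict
recovered = normalized * stds + means   # what the TEST branch applies to bbox_pred
```

Because the statistics are tiled once per class, the same four means and stds apply to every class's (dx, dy, dw, dh) slot of the 4*num_classes wide prediction.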

1.2 _build_network

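_build_network builds the network.

_build_network = _image_to_head + // extract the features of the input image

_anchor_component + // coordinates in the original image of all possible anchors (may extend beyond the image boundary) and the number of anchors

_region_proposal + // process the input features, ending with 2000 anchors (training) or 300 anchors (testing)

_crop_pool_layer + // crop the 256 anchors out of the feature map and resize them to a fixed 7*7 size

_head_to_tail + // add fc and dropout on top of the features of the 256 anchors, giving 4096-d features

_region_classification // add two parallel fc layers for RCNN classification and box regression

Overall flow: the network runs the image through VGG conv1 to conv5 to get the feature map net_conv, which is fed to the RPN to produce candidate anchors. Anchors that cross the image boundary are removed and 2000 anchors are selected for training the RPN (300 at test time). From these, 256 anchors are further selected (for RCNN classification). The features of these 256 anchors are cropped and resized according to rois and then pooled, giving pool5 features of identical size 7*7. pool5 goes through two fc layers to give the 4096-d feature fc7, and fc7 is fed to _region_classification (two parallel fc layers), which outputs the 21-d cls_score and the 21*4-d bbox_pred.

_build_network is defined as follows: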

def _build_network(self, is_training=True):
    if cfg.TRAIN.TRUNCATED:  # select initializers
        initializer = tf.truncated_normal_initializer(mean=0.0, stddev=0.01)
        initializer_bbox = tf.truncated_normal_initializer(mean=0.0, stddev=0.001)
    else:
        initializer = tf.random_normal_initializer(mean=0.0, stddev=0.01)
        initializer_bbox = tf.random_normal_initializer(mean=0.0, stddev=0.001)

    net_conv = self._image_to_head(is_training)  # conv5_3 of vgg16
    with tf.variable_scope(self._scope, self._scope):
        self._anchor_component()  # from the feature map and its stride relative to the original image (_feat_stride), get the start and end coordinates of all anchors
        rois = self._region_proposal(net_conv, is_training, initializer)  # run the RPN; returns the sampled RoIs (256 in training): column 0 is the batch index (all 0), the last four columns are the position
        pool5 = self._crop_pool_layer(net_conv, rois, "pool5")  # crop the candidate regions given by rois from the feature map, resize them to a fixed 14*14, then pool to 7*7

    fc7 = self._head_to_tail(pool5, is_training)  # add fc and dropout on the fixed-size rois, giving 4096-d features for classification and regression
    with tf.variable_scope(self._scope, self._scope):
        cls_prob, bbox_pred = self._region_classification(fc7, is_training, initializer, initializer_bbox)  # classify the rois (the detection itself) and regress the predicted coordinates

    self._score_summaries.update(self._predictions)

    # rois: the sampled RoIs (256 in training); column 0 is the batch index (all 0), columns 1-4 are the box
    # cls_prob: per-class probabilities of the 256 anchors
    # bbox_pred: predicted position offsets
    return rois, cls_prob, bbox_pred

1.3 _image_to_head

_image_to_head extracts the features of the input image.

The function lives in vgg16.py and is defined as follows:

def _image_to_head(self, is_training, reuse=None):
    with tf.variable_scope(self._scope, self._scope, reuse=reuse):
        net = slim.repeat(self._image, 2, slim.conv2d, 64, [3, 3], trainable=False, scope='conv1')
        net = slim.max_pool2d(net, [2, 2], padding='SAME', scope='pool1')
        net = slim.repeat(net, 2, slim.conv2d, 128, [3, 3], trainable=False, scope='conv2')
        net = slim.max_pool2d(net, [2, 2], padding='SAME', scope='pool2')
        net = slim.repeat(net, 3, slim.conv2d, 256, [3, 3], trainable=is_training, scope='conv3')
        net = slim.max_pool2d(net, [2, 2], padding='SAME', scope='pool3')
        net = slim.repeat(net, 3, slim.conv2d, 512, [3, 3], trainable=is_training, scope='conv4')
        net = slim.max_pool2d(net, [2, 2], padding='SAME', scope='pool4')
        net = slim.repeat(net, 3, slim.conv2d, 512, [3, 3], trainable=is_training, scope='conv5')

    self._act_summaries.append(net)
    self._layers['head'] = net

    return net

1.4 _anchor_component

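_anchor_component computes the coordinates in the original image of all possible anchors (they may extend beyond the image boundary) and the number of anchors (feature map width * feature map height * 9). The function uses self._im_info, a 3-element vector: [0] is the image height, [1] is the image width (thanks to carrot359 for pointing out that width and height were swapped in an earlier version), and [2] is the image scale factor (the smaller of the ratios that bring the shorter side to 600 or the longer side to 1000, giving sizes such as 600*900 or 850*1000). It calls generate_anchors_pre_tf, which in turn calls generate_anchors, to obtain the coordinates of all possible anchors in the original image and their count (images differ in size, so the final number of anchors differs too).

The steps of generate_anchors_pre_tf are:

1. _ratio_enum starts from the (0, 0, 15, 15) base window and first generates anchors for ratios=[0.5,1,2]. In the code the ratio is the height-to-width ratio of the anchor (hs = ws * ratios), while the area (width*height) is kept approximately equal to the 256 pixels of the base window; it is not a scaling of the width or the height alone. This gives the following three anchors (each given by its top-left and bottom-right corners): (-3.5, 2, 18.5, 13), (0, 0, 15, 15), and (2.5, -3, 12.5, 18).

2. scales=(8, 16, 32) is applied next. Each of the three anchors above is enlarged by each scale factor about its center, giving 9 anchors in total (each given by its top-left and bottom-right corners). Since this is just an enlargement of the three anchors above, no figure is given here.

tf.add(anchor_constant, shifts) then yields, for every point of the downsampled feature map, the rectangles of its 9 anchors in the original image. anchor_constant has shape 1*9*4 and shifts has shape N*1*4, where N is the number of pixels in the feature map. Reshaping the N*9*4 result to (N*9)*4 gives the anchors in the original image for every feature-map point.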
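
The two enumeration steps and the broadcast add can be reproduced in a few lines of NumPy. This is a compact sketch of the same arithmetic, not the repository's code; the helper names make_anchors, base_anchors, and all_anchors and the 2*3 feature map are made up for illustration.

```python
import numpy as np

def make_anchors(ws, hs, x_ctr, y_ctr):
    """Boxes (x1, y1, x2, y2) with the given widths and heights around one center."""
    ws, hs = np.asarray(ws, dtype=float), np.asarray(hs, dtype=float)
    return np.stack([x_ctr - 0.5 * (ws - 1), y_ctr - 0.5 * (hs - 1),
                     x_ctr + 0.5 * (ws - 1), y_ctr + 0.5 * (hs - 1)], axis=1)

def base_anchors(base_size=16, ratios=(0.5, 1, 2), scales=(8, 16, 32)):
    ratios, scales = np.asarray(ratios, dtype=float), np.asarray(scales, dtype=float)
    ctr = 0.5 * (base_size - 1)                       # center of the (0, 0, 15, 15) window
    ws = np.round(np.sqrt(base_size ** 2 / ratios))   # step 1: one width per ratio
    hs = np.round(ws * ratios)                        # heights chosen so that h / w ~= ratio
    ratio_anchors = make_anchors(ws, hs, ctr, ctr)
    # step 2: enlarge every ratio anchor by every scale about the same center
    scaled = [make_anchors(w * scales, h * scales, ctr, ctr) for w, h in zip(ws, hs)]
    return ratio_anchors, np.vstack(scaled)

def all_anchors(height, width, feat_stride=16):
    _, anchors = base_anchors()
    sx, sy = np.meshgrid(np.arange(width) * feat_stride, np.arange(height) * feat_stride)
    shifts = np.stack([sx.ravel(), sy.ravel(), sx.ravel(), sy.ravel()], axis=1)
    # (1, 9, 4) + (K, 1, 4) broadcasts to (K, 9, 4); flatten to (K*9, 4)
    return (anchors[np.newaxis] + shifts[:, np.newaxis]).reshape(-1, 4)

ratio_anchors, anchors9 = base_anchors()
grid = all_anchors(height=2, width=3)   # 2*3 feature map -> 6*9 = 54 anchors
print(ratio_anchors)
print(anchors9[0], grid.shape)
```

For a 2*3 feature map this produces 54 anchors; the rows belonging to the second feature-map position are the 9 base anchors shifted by one stride (16 pixels) along x.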

_anchor_component is as follows:

def _anchor_component(self):
    with tf.variable_scope('ANCHOR_' + self._tag) as scope:
        height = tf.to_int32(tf.ceil(self._im_info[0] / np.float32(self._feat_stride[0])))  # height and width of the feature map produced by vgg16
        width = tf.to_int32(tf.ceil(self._im_info[1] / np.float32(self._feat_stride[0])))
        if cfg.USE_E2E_TF:
            # from the feature map size, _feat_stride (the downsampling factor of the feature map relative to the original image),
            # _anchor_scales and _anchor_ratios, get all possible anchors on the original image
            # (coordinates may extend beyond the image boundary) and the number of anchors
            anchors, anchor_length = generate_anchors_pre_tf(height, width, self._feat_stride, self._anchor_scales, self._anchor_ratios)
        else:
            anchors, anchor_length = tf.py_func(generate_anchors_pre,
                                                [height, width, self._feat_stride, self._anchor_scales, self._anchor_ratios], [tf.float32, tf.int32], name="generate_anchors")
        anchors.set_shape([None, 4])  # start and end coordinates, 4 values in total
        anchor_length.set_shape([])
        self._anchors = anchors
        self._anchor_length = anchor_length

def generate_anchors_pre_tf(height, width, feat_stride=16, anchor_scales=(8, 16, 32), anchor_ratios=(0.5, 1, 2)):
    shift_x = tf.range(width) * feat_stride  # start x of all anchors in the original image: (0, feat_stride, 2*feat_stride, ...)
    shift_y = tf.range(height) * feat_stride  # start y of all anchors in the original image: (0, feat_stride, 2*feat_stride, ...)
    shift_x, shift_y = tf.meshgrid(shift_x, shift_y)  # shift_x: height rows of (0, feat_stride, 2*feat_stride, ...); shift_y: width columns of (0, feat_stride, 2*feat_stride, ...)
    sx = tf.reshape(shift_x, shape=(-1,))  # 0, feat_stride, 2*feat_stride, ..., 0, feat_stride, 2*feat_stride, ...
    sy = tf.reshape(shift_y, shape=(-1,))  # 0, 0, 0, ..., feat_stride, feat_stride, feat_stride, ..., 2*feat_stride, ...
    shifts = tf.transpose(tf.stack([sx, sy, sx, sy]))  # (width*height) rows of 4 values
    K = tf.multiply(width, height)  # total number of feature-map pixels
    shifts = tf.transpose(tf.reshape(shifts, shape=[1, K, 4]), perm=(1, 0, 2))  # add a dimension to get 1*(width*height)*4, then permute to (width*height)*1*4

    anchors = generate_anchors(ratios=np.array(anchor_ratios), scales=np.array(anchor_scales))  # four coordinates of the 9 base anchors in the original image (base_size defaults to 16)
    A = anchors.shape[0]  # A=9
    anchor_constant = tf.constant(anchors.reshape((1, A, 4)), dtype=tf.int32)  # reshape anchors to 1*9*4

    length = K * A  # total number of anchors (A=9 anchors at each of the K=height*width points)
    # broadcast-add the 1*9*4 base anchors and the (width*height)*1*4 shifts to get (width*height)*9*4,
    # then reshape to (width*height*9)*4: the four coordinates of every anchor
    anchors_tf = tf.reshape(tf.add(anchor_constant, shifts), shape=(length, 4))

    return tf.cast(anchors_tf, dtype=tf.float32), length

def generate_anchors(base_size=16, ratios=[0.5, 1, 2], scales=2 ** np.arange(3, 6)):
    """Generate anchor (reference) windows by enumerating aspect ratios X scales wrt a reference (0, 0, 15, 15) window."""
    base_anchor = np.array([1, 1, base_size, base_size]) - 1  # four coordinates of the base anchor
    ratio_anchors = _ratio_enum(base_anchor, ratios)  # coordinates of the 3 ratio anchors (3*4 matrix)
    anchors = np.vstack([_scale_enum(ratio_anchors[i, :], scales) for i in range(ratio_anchors.shape[0])])  # 3*4 matrix becomes 9*4: the coordinates of the 9 anchors
    return anchors

def _whctrs(anchor):
    """ Return width, height, x center, and y center for an anchor (window). """
    w = anchor[2] - anchor[0] + 1  # width
    h = anchor[3] - anchor[1] + 1  # height
    x_ctr = anchor[0] + 0.5 * (w - 1)  # center x
    y_ctr = anchor[1] + 0.5 * (h - 1)  # center y
    return w, h, x_ctr, y_ctr

def _mkanchors(ws, hs, x_ctr, y_ctr):
    """ Given a vector of widths (ws) and heights (hs) around a center (x_ctr, y_ctr), output a set of anchors (windows)."""
    ws = ws[:, np.newaxis]  # 3-vector becomes a 3*1 matrix
    hs = hs[:, np.newaxis]  # 3-vector becomes a 3*1 matrix
    anchors = np.hstack((x_ctr - 0.5 * (ws - 1), y_ctr - 0.5 * (hs - 1), x_ctr + 0.5 * (ws - 1), y_ctr + 0.5 * (hs - 1)))  # 3*4 matrix
    return anchors

def _ratio_enum(anchor, ratios):  # ratio is the height/width ratio; the area stays roughly that of the base window
    """ Enumerate a set of anchors for each aspect ratio wrt an anchor. """
    w, h, x_ctr, y_ctr = _whctrs(anchor)  # center position, width and height
    size = w * h  # total number of pixels
    size_ratios = size / ratios  # area divided by each ratio (the squared target width)
    ws = np.round(np.sqrt(size_ratios))  # new widths, 3-vector (decreasing)
    hs = np.round(ws * ratios)  # new heights, element-wise product of two 3-vectors (increasing)
    anchors = _mkanchors(ws, hs, x_ctr, y_ctr)  # four coordinates of the 3 anchors from the center, widths and heights
    return anchors

def _scale_enum(anchor, scales):
    """ Enumerate a set of anchors for each scale wrt an anchor. """
    w, h, x_ctr, y_ctr = _whctrs(anchor)  # center position, width and height
    ws = w * scales  # scaled widths
    hs = h * scales  # scaled heights
    anchors = _mkanchors(ws, hs, x_ctr, y_ctr)  # four coordinates of the 3 anchors from the center, widths and heights
    return anchors

1.5 _region_proposal

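_region_proposal passes the conv5 features of vgg16 through a 3*3 sliding window (a 3*3 convolution) to get the RPN features, which then go down two parallel branches, the cls network and the reg network. The cls network uses a 1*1 convolution to decide whether each anchor is a positive or a negative sample (because there are so many anchors, some are marked "don't care"; only the positive and negative ones are used), giving the binary classification scores rpn_cls_score. The reg network uses a 1*1 convolution to regress the coordinate offsets of the anchors, rpn_bbox_pred. Both branches share the 3*3 conv (rpn). Since every position has k anchors, every position has 2k scores and 4k coordinates.

cls (maps the 512-d input to 2k dimensions): 3*3 conv + 1*1 conv (2k scores, where k is the number of anchors per position, e.g. 9)

The first call to _reshape_layer receives bottom with shape 1*?*?*2k. It is first transposed to Caffe's data order (TF uses batchsize*height*width*channels, Caffe uses batchsize*channels*height*width), giving to_caffe with shape 1*2k*?*?. This is reshaped to 1*2*(k*?)*?, and finally transposed back to TF order, giving to_tf with shape 1*(k*?)*?*2, which is rpn_cls_score_reshape. _softmax_layer then computes the softmax over the last dimension only (the 2), giving the probabilities rpn_cls_prob_reshape (the index of the larger of the two scores is the prediction rpn_cls_pred). A second _reshape_layer restores the 1*?*?*2k shape, giving rpn_cls_prob.

reg (maps the 512-d input to 4k dimensions): 3*3 conv + 1*1 conv (4k coordinates, where k is the number of anchors per position, e.g. 9).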
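
The channel regrouping done by _reshape_layer is easier to follow on a small array. The sketch below is a NumPy analogue for a single image; the sizes H=3, W=4 are arbitrary, and the function is an illustration of the transposes and reshape, not the TensorFlow code itself.

```python
import numpy as np

def reshape_layer(bottom, num_dim):
    """NumPy analogue of _reshape_layer for a single image (batch size 1)."""
    width = bottom.shape[2]
    to_caffe = bottom.transpose(0, 3, 1, 2)              # NHWC -> NCHW
    reshaped = to_caffe.reshape(1, num_dim, -1, width)   # regroup the channel axis
    return reshaped.transpose(0, 2, 3, 1)                # NCHW -> NHWC

H, W, k = 3, 4, 9                                        # hypothetical small sizes
scores = np.arange(H * W * 2 * k, dtype=np.float32).reshape(1, H, W, 2 * k)

pairs = reshape_layer(scores, 2)          # 1 x (k*H) x W x 2
restored = reshape_layer(pairs, 2 * k)    # back to 1 x H x W x 2k
print(pairs.shape, restored.shape)
```

After the first call, row a*H + h and column w of pairs hold channels a and a + k of position (h, w), so the softmax over the last axis compares channel a with channel a + k. This is consistent with proposal_layer_tf reading the last k channels of rpn_cls_prob as the foreground probabilities.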

_region_proposal is defined as follows:

def _region_proposal(self, net_conv, is_training, initializer):  # process the input feature map
    rpn = slim.conv2d(net_conv, cfg.RPN_CHANNELS, [3, 3], trainable=is_training, weights_initializer=initializer, scope="rpn_conv/3x3")  # 3*3 conv that serves as the RPN
    self._act_summaries.append(rpn)
    rpn_cls_score = slim.conv2d(rpn, self._num_anchors * 2, [1, 1], trainable=is_training, weights_initializer=initializer,  # _num_anchors is 9
                                padding='VALID', activation_fn=None, scope='rpn_cls_score')  # 1*1 conv: classification scores of the 9 anchors at each position, 1*?*?*(9*2) (binary), deciding whether each anchor is positive or negative
    rpn_cls_score_reshape = self._reshape_layer(rpn_cls_score, 2, 'rpn_cls_score_reshape')  # 1*?*?*18==>1*(?*9)*?*2
    rpn_cls_prob_reshape = self._softmax_layer(rpn_cls_score_reshape, "rpn_cls_prob_reshape")  # softmax over the last dimension: probabilities with shape 1*(?*9)*?*2
    rpn_cls_pred = tf.argmax(tf.reshape(rpn_cls_score_reshape, [-1, 2]), axis=1, name="rpn_cls_pred")  # predicted class of the 9 anchors at each position, a vector of length 1*?*9*?
    rpn_cls_prob = self._reshape_layer(rpn_cls_prob_reshape, self._num_anchors * 2, "rpn_cls_prob")  # back to the original shape: 1*(?*9)*?*2==>1*?*?*(9*2)
    rpn_bbox_pred = slim.conv2d(rpn, self._num_anchors * 4, [1, 1], trainable=is_training, weights_initializer=initializer,
                                padding='VALID', activation_fn=None, scope='rpn_bbox_pred')  # 1*1 conv: regressed position offsets of the 9 anchors at each position, 1*?*?*(9*4)
    if is_training:
        # from the class probabilities and regression offsets of the 9 anchors at each position, get the positions of
        # post_nms_topN=2000 anchors (including the all-zero batch_inds) and their probability of being positive (label 1)
        rois, roi_scores = self._proposal_layer(rpn_cls_prob, rpn_bbox_pred, "rois")
        rpn_labels = self._anchor_target_layer(rpn_cls_score, "anchor")  # rpn_labels: whether each position of the feature map is positive, negative, or don't care
        with tf.control_dependencies([rpn_labels]):  # Try to have a deterministic order for the computing graph, for reproducibility
            rois, _ = self._proposal_target_layer(rois, roi_scores, "rpn_rois")  # from the post_nms_topN anchors and their foreground probabilities, sample 256 rois (column 0 stays the all-zero batch index) and the matching targets
    else:
        if cfg.TEST.MODE == 'nms':
            # same as above, but with post_nms_topN=300 anchors
            rois, _ = self._proposal_layer(rpn_cls_prob, rpn_bbox_pred, "rois")
        elif cfg.TEST.MODE == 'top':
            rois, _ = self._proposal_top_layer(rpn_cls_prob, rpn_bbox_pred, "rois")
        else:
            raise NotImplementedError

    self._predictions["rpn_cls_score"] = rpn_cls_score  # positive/negative scores of the 9 anchors at each position
    self._predictions["rpn_cls_score_reshape"] = rpn_cls_score_reshape  # positive/negative scores of every anchor
    self._predictions["rpn_cls_prob"] = rpn_cls_prob  # positive and negative probabilities of the 9 anchors at each position
    self._predictions["rpn_cls_pred"] = rpn_cls_pred  # predicted class of the 9 anchors at each position, a vector of length 1*?*9*?
    self._predictions["rpn_bbox_pred"] = rpn_bbox_pred  # regressed position offsets of the 9 anchors at each position
    self._predictions["rois"] = rois  # sampled RoIs (256 in training): batch index (column 0) and position (last four columns)

    return rois  # column 0 is the batch index (all 0 in both training and testing), the last four columns are the position

def _reshape_layer(self, bottom, num_dim, name):
    input_shape = tf.shape(bottom)
    with tf.variable_scope(name) as scope:
        to_caffe = tf.transpose(bottom, [0, 3, 1, 2])  # NHWC (TF layout) to NCHW (Caffe layout)
        reshaped = tf.reshape(to_caffe, tf.concat(axis=0, values=[[1, num_dim, -1], [input_shape[2]]]))  # 1*(num_dim*9)*?*?==>1*num_dim*(9*?)*? or 1*num_dim*(9*?)*?==>1*(num_dim*9)*?*?
        to_tf = tf.transpose(reshaped, [0, 2, 3, 1])
        return to_tf

def _softmax_layer(self, bottom, name):
    if name.startswith('rpn_cls_prob_reshape'):  # bottom: 1*(?*9)*?*2
        input_shape = tf.shape(bottom)
        bottom_reshaped = tf.reshape(bottom, [-1, input_shape[-1]])  # keep only the last dimension for the softmax and merge the rest: 1*(?*9)*?*2==>(1*?*9*?)*2
        reshaped_score = tf.nn.softmax(bottom_reshaped, name=name)  # probabilities of all entries
        return tf.reshape(reshaped_score, input_shape)  # (1*?*9*?)*2==>1*(?*9)*?*2
    return tf.nn.softmax(bottom, name=name)

1.6 _proposal_layer

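_proposal_layer calls proposal_layer_tf. Starting from the (N*9)*4 anchors, it computes the predicted box coordinates (bbox_transform_inv_tf), clips them to the image (clip_boxes_tf), and applies non-maximum suppression (tf.image.non_max_suppression, which returns the indices of the boxes to keep). The outputs are the kept anchors, rois, and their probabilities of being positive samples, rpn_scores. rois is m*5 and rpn_scores is m*1 (see rpn_scores.set_shape([None, 1]) in the code), where m is the number of candidate regions left after NMS (up to 2000 in training and 300 at test time). The first column of rois is batch_inds, which is all zeros; the last four columns are the coordinates (top-left + bottom-right).

_proposal_layer is as follows: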

def _proposal_layer(self, rpn_cls_prob, rpn_bbox_pred, name):  # from the class probabilities and regression offsets of the 9 anchors at each position, get the positions of post_nms_topN anchors and their probability of being positive
    with tf.variable_scope(name) as scope:
        if cfg.USE_E2E_TF:  # rois: post_nms_topN*5 (column 0 is the all-zero batch_inds, the last 4 columns are coordinates); rpn_scores: the post_nms_topN*1 matching foreground probabilities
            rois, rpn_scores = proposal_layer_tf(rpn_cls_prob, rpn_bbox_pred, self._im_info, self._mode, self._feat_stride, self._anchors, self._num_anchors)
        else:
            rois, rpn_scores = tf.py_func(proposal_layer, [rpn_cls_prob, rpn_bbox_pred, self._im_info, self._mode,
                                          self._feat_stride, self._anchors, self._num_anchors], [tf.float32, tf.float32], name="proposal")

        rois.set_shape([None, 5])
        rpn_scores.set_shape([None, 1])

    return rois, rpn_scores

def proposal_layer_tf(rpn_cls_prob, rpn_bbox_pred, im_info, cfg_key, _feat_stride, anchors, num_anchors):  # class probabilities and regression offsets of the 9 anchors at each position
    if type(cfg_key) == bytes:
        cfg_key = cfg_key.decode('utf-8')
    pre_nms_topN = cfg[cfg_key].RPN_PRE_NMS_TOP_N
    post_nms_topN = cfg[cfg_key].RPN_POST_NMS_TOP_N  # 2000 in training, 300 in testing
    nms_thresh = cfg[cfg_key].RPN_NMS_THRESH  # NMS threshold, 0.7

    scores = rpn_cls_prob[:, :, :, num_anchors:]  # take the last 9 of 1*?*?*(9*2): 1*?*?*9. Presumably the first 9 channels are the background probabilities of the 9 anchors and the last 9 the foreground probabilities (binary: background vs foreground)
    scores = tf.reshape(scores, shape=(-1,))  # foreground probability of every anchor
    rpn_bbox_pred = tf.reshape(rpn_bbox_pred, shape=(-1, 4))  # four offsets of every anchor

    proposals = bbox_transform_inv_tf(anchors, rpn_bbox_pred)  # predicted coordinates from anchors and offsets
    proposals = clip_boxes_tf(proposals, im_info[:2])  # clip the predicted coordinates to the original image

    indices = tf.image.non_max_suppression(proposals, scores, max_output_size=post_nms_topN, iou_threshold=nms_thresh)  # NMS: indices of up to post_nms_topN highest-scoring boxes

    boxes = tf.gather(proposals, indices)  # the post_nms_topN matching coordinates
    boxes = tf.to_float(boxes)
    scores = tf.gather(scores, indices)  # the post_nms_topN matching foreground probabilities
    scores = tf.reshape(scores, shape=(-1, 1))

    batch_inds = tf.zeros((tf.shape(indices)[0], 1), dtype=tf.float32)  # Only support single image as input
    blob = tf.concat([batch_inds, boxes], 1)  # concat post_nms_topN*1 batch_inds with post_nms_topN*4 coordinates into the post_nms_topN*5 blob

    return blob, scores

def bbox_transform_inv_tf(boxes, deltas):  # predicted coordinates from anchors and offsets
    boxes = tf.cast(boxes, deltas.dtype)
    widths = tf.subtract(boxes[:, 2], boxes[:, 0]) + 1.0  # width
    heights = tf.subtract(boxes[:, 3], boxes[:, 1]) + 1.0  # height
    ctr_x = tf.add(boxes[:, 0], widths * 0.5)  # center x
    ctr_y = tf.add(boxes[:, 1], heights * 0.5)  # center y

    dx = deltas[:, 0]  # predicted dx
    dy = deltas[:, 1]  # predicted dy
    dw = deltas[:, 2]  # predicted dw
    dh = deltas[:, 3]  # predicted dh

    pred_ctr_x = tf.add(tf.multiply(dx, widths), ctr_x)  # invert Eq. 2: given xa, wa, tx, recover the predicted center x
    pred_ctr_y = tf.add(tf.multiply(dy, heights), ctr_y)  # invert Eq. 2: given ya, ha, ty, recover the predicted center y
    pred_w = tf.multiply(tf.exp(dw), widths)  # invert Eq. 2: given wa, tw, recover the predicted w
    pred_h = tf.multiply(tf.exp(dh), heights)  # invert Eq. 2: given ha, th, recover the predicted h

    pred_boxes0 = tf.subtract(pred_ctr_x, pred_w * 0.5)  # start and end coordinates of the predicted box
    pred_boxes1 = tf.subtract(pred_ctr_y, pred_h * 0.5)
    pred_boxes2 = tf.add(pred_ctr_x, pred_w * 0.5)
    pred_boxes3 = tf.add(pred_ctr_y, pred_h * 0.5)

    return tf.stack([pred_boxes0, pred_boxes1, pred_boxes2, pred_boxes3], axis=1)

def clip_boxes_tf(boxes, im_info):  # clip the predicted coordinates to the original image
    b0 = tf.maximum(tf.minimum(boxes[:, 0], im_info[1] - 1), 0)
    b1 = tf.maximum(tf.minimum(boxes[:, 1], im_info[0] - 1), 0)
    b2 = tf.maximum(tf.minimum(boxes[:, 2], im_info[1] - 1), 0)
    b3 = tf.maximum(tf.minimum(boxes[:, 3], im_info[0] - 1), 0)
    return tf.stack([b0, b1, b2, b3], axis=1)
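
proposal_layer_tf treats tf.image.non_max_suppression as a black box. The following is a plain NumPy sketch of greedy NMS that shows the idea behind that call. It is not TensorFlow's implementation: the function name greedy_nms, the (x1, y1, x2, y2) coordinate order, and the handling of ties are assumptions made for this illustration.

```python
import numpy as np

def greedy_nms(boxes, scores, max_output_size, iou_threshold):
    """Return indices of the kept boxes, best score first. boxes: (n, 4) as x1, y1, x2, y2."""
    x1, y1, x2, y2 = boxes[:, 0], boxes[:, 1], boxes[:, 2], boxes[:, 3]
    areas = (x2 - x1) * (y2 - y1)
    order = np.argsort(-scores)                 # candidate indices, best first
    keep = []
    while order.size > 0 and len(keep) < max_output_size:
        best, rest = order[0], order[1:]
        keep.append(int(best))
        # intersection of the best box with every remaining candidate
        iw = np.maximum(0.0, np.minimum(x2[best], x2[rest]) - np.maximum(x1[best], x1[rest]))
        ih = np.maximum(0.0, np.minimum(y2[best], y2[rest]) - np.maximum(y1[best], y1[rest]))
        inter = iw * ih
        iou = inter / (areas[best] + areas[rest] - inter)
        order = rest[iou <= iou_threshold]      # drop candidates that overlap the best box too much
    return keep

boxes = np.array([[0, 0, 10, 10], [1, 1, 11, 11], [20, 20, 30, 30]], dtype=np.float32)
scores = np.array([0.9, 0.8, 0.7], dtype=np.float32)
kept_loose = greedy_nms(boxes, scores, max_output_size=300, iou_threshold=0.7)
kept_strict = greedy_nms(boxes, scores, max_output_size=300, iou_threshold=0.5)
print(kept_loose, kept_strict)
```

The first two boxes have an IoU of 81/119 (about 0.68), so the 0.7 threshold used by the RPN keeps both of them, while a stricter 0.5 threshold suppresses the lower-scoring one.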

1.7 _anchor_target_layer

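_anchor_target_layer first discards the anchors whose boundaries extend outside the image. It then uses bbox_overlaps to compute the overlap (IoU) matrix overlaps (N*M) between the anchors (N*4) and gt_boxes (M*4). From this matrix it takes, for every anchor, its largest overlap with any ground-truth box, max_overlaps (1*N); for every ground-truth box, its largest overlap with any anchor, gt_max_overlaps (1*M); and the positions of the anchors that reach that maximum, gt_argmax_overlaps. Next, _compute_targets computes the regression targets bbox_targets between each anchor and the gt_box it overlaps most (see the last four expressions of Eq. 2). Finally, _unmap maps the results back to the size of the original anchor set, giving rpn_labels (whether each anchor is positive, negative, or don't care), rpn_bbox_targets, rpn_bbox_inside_weights, and rpn_bbox_outside_weights.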
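
The labeling rule is easiest to see on a tiny overlap matrix. The sketch below applies the same three assignments as anchor_target_layer in the non-clobbering order; the function name assign_labels, the thresholds 0.3 and 0.7 (assumed values for cfg.TRAIN.RPN_NEGATIVE_OVERLAP and cfg.TRAIN.RPN_POSITIVE_OVERLAP), and the overlap values are made up for illustration.

```python
import numpy as np

def assign_labels(overlaps, negative_overlap=0.3, positive_overlap=0.7):
    """overlaps: (n_anchors, n_gt) IoU matrix. Returns 1 (fg), 0 (bg) or -1 (don't care) per anchor."""
    labels = np.full(overlaps.shape[0], -1, dtype=np.float32)
    max_overlaps = overlaps.max(axis=1)             # best IoU of each anchor
    gt_max_overlaps = overlaps.max(axis=0)          # best IoU of each ground-truth box
    gt_argmax = np.where(overlaps == gt_max_overlaps)[0]
    labels[max_overlaps < negative_overlap] = 0     # clearly background
    labels[gt_argmax] = 1                           # the best anchor(s) of every gt box
    labels[max_overlaps >= positive_overlap] = 1    # high IoU with some gt box
    return labels

overlaps = np.array([[0.8, 0.1],    # anchor 0: IoU 0.8 with gt 0
                     [0.2, 0.5],    # anchor 1: best match of gt 1, although below 0.7
                     [0.4, 0.1],    # anchor 2: between the thresholds
                     [0.1, 0.0]])   # anchor 3: background
labels = assign_labels(overlaps)
print(labels)
```

Anchor 1 becomes positive only because it is the best match of the second ground-truth box, which guarantees that every ground-truth box gets at least one positive anchor. Anchor 2 falls between the two thresholds and is ignored.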

_anchor_target_layer is defined as follows:

def _anchor_target_layer(self, rpn_cls_score, name):  # rpn_cls_score: classification scores of the 9 anchors at each position, 1*?*?*(9*2)
    with tf.variable_scope(name) as scope:
        # rpn_labels: whether each position of the feature map is positive, negative, or don't care (anchors crossing the image boundary are excluded)
        # rpn_bbox_targets: coordinate offsets between each position and its matched ground-truth box (many are 0)
        # rpn_bbox_inside_weights: 1 for positive samples (0 for negative and don't-care samples)
        # rpn_bbox_outside_weights: normalized weights of positive and negative samples (don't-care samples excluded)
        rpn_labels, rpn_bbox_targets, rpn_bbox_inside_weights, rpn_bbox_outside_weights = tf.py_func(
            anchor_target_layer, [rpn_cls_score, self._gt_boxes, self._im_info, self._feat_stride, self._anchors, self._num_anchors],
            [tf.float32, tf.float32, tf.float32, tf.float32], name="anchor_target")

        rpn_labels.set_shape([1, 1, None, None])
        rpn_bbox_targets.set_shape([1, None, None, self._num_anchors * 4])
        rpn_bbox_inside_weights.set_shape([1, None, None, self._num_anchors * 4])
        rpn_bbox_outside_weights.set_shape([1, None, None, self._num_anchors * 4])

        rpn_labels = tf.to_int32(rpn_labels, name="to_int32")
        self._anchor_targets['rpn_labels'] = rpn_labels  # positive, negative, or don't care for each position
        self._anchor_targets['rpn_bbox_targets'] = rpn_bbox_targets  # coordinate offsets to the matched ground-truth box (many are 0)
        self._anchor_targets['rpn_bbox_inside_weights'] = rpn_bbox_inside_weights  # 1 for positive samples, 0 otherwise
        self._anchor_targets['rpn_bbox_outside_weights'] = rpn_bbox_outside_weights  # normalized weights of positive and negative samples

        self._score_summaries.update(self._anchor_targets)

    return rpn_labels

def anchor_target_layer(rpn_cls_score, gt_boxes, im_info, _feat_stride, all_anchors, num_anchors):  # 1*?*?*(9*2); ?*5; 3; [16], ?*4; [9]
    """Same as the anchor target layer in original Fast/er RCNN """
    A = num_anchors  # [9]
    total_anchors = all_anchors.shape[0]  # number of all anchors: 9 * feature map width * feature map height
    K = total_anchors / num_anchors

    _allowed_border = 0  # allow boxes to sit over the edge by a small amount
    height, width = rpn_cls_score.shape[1:3]  # height and width of the RPN feature map

    inds_inside = np.where(  # anchors may cross the image boundary; keep the indices of those inside the image
        (all_anchors[:, 0] >= -_allowed_border) & (all_anchors[:, 1] >= -_allowed_border) &
        (all_anchors[:, 2] < im_info[1] + _allowed_border) &  # width
        (all_anchors[:, 3] < im_info[0] + _allowed_border)  # height
    )[0]
    anchors = all_anchors[inds_inside, :]  # coordinates of the anchors inside the image

    labels = np.empty((len(inds_inside),), dtype=np.float32)  # label: 1 positive, 0 negative, -1 don't care
    labels.fill(-1)

    # n*m matrix of overlap ratios between the anchors (n*4) and the ground-truth boxes gt_boxes (m*4)
    overlaps = bbox_overlaps(np.ascontiguousarray(anchors, dtype=np.float), np.ascontiguousarray(gt_boxes, dtype=np.float))
    argmax_overlaps = overlaps.argmax(axis=1)  # position of the maximum in each row, i.e. the ground-truth box matched to each anchor; n-vector
    max_overlaps = overlaps[np.arange(len(inds_inside)), argmax_overlaps]  # overlap of each anchor with its matched ground-truth box; n-vector
    gt_argmax_overlaps = overlaps.argmax(axis=0)  # position of the maximum in each column, i.e. the anchor matched to each ground-truth box; m-vector
    gt_max_overlaps = overlaps[gt_argmax_overlaps, np.arange(overlaps.shape[1])]  # overlap of each ground-truth box with its matched anchor; m-vector
    gt_argmax_overlaps = np.where(overlaps == gt_max_overlaps)[0]  # row indices of all anchors reaching a column maximum, in ascending order

    if not cfg.TRAIN.RPN_CLOBBER_POSITIVES:  # assign bg labels first so that positive labels can clobber them first set the negatives
        labels[max_overlaps < cfg.TRAIN.RPN_NEGATIVE_OVERLAP] = 0  # anchors whose best overlap is below the threshold are set to 0

    labels[gt_argmax_overlaps] = 1  # fg label: for each gt, anchor with highest overlap
    labels[max_overlaps >= cfg.TRAIN.RPN_POSITIVE_OVERLAP] = 1  # fg label: above threshold IOU

    if cfg.TRAIN.RPN_CLOBBER_POSITIVES:  # assign bg labels last so that negative labels can clobber positives
        labels[max_overlaps < cfg.TRAIN.RPN_NEGATIVE_OVERLAP] = 0

    # if there are too many positives, randomly keep only num_fg=0.5*256=128 of them
    num_fg = int(cfg.TRAIN.RPN_FG_FRACTION * cfg.TRAIN.RPN_BATCHSIZE)  # subsample positive labels if we have too many
    fg_inds = np.where(labels == 1)[0]
    if len(fg_inds) > num_fg:
        disable_inds = npr.choice(fg_inds, size=(len(fg_inds) - num_fg), replace=False)
        labels[disable_inds] = -1  # mark the surplus positives as don't care

    # if there are too many negatives, randomly keep only num_bg = 256 - (number of positives) of them
    num_bg = cfg.TRAIN.RPN_BATCHSIZE - np.sum(labels == 1)  # subsample negative labels if we have too many
    bg_inds = np.where(labels == 0)[0]
    if len(bg_inds) > num_bg:
        disable_inds = npr.choice(bg_inds, size=(len(bg_inds) - num_bg), replace=False)
        labels[disable_inds] = -1  # mark the surplus negatives as don't care

    bbox_targets = np.zeros((len(inds_inside), 4), dtype=np.float32)
    bbox_targets = _compute_targets(anchors, gt_boxes[argmax_overlaps, :])  # coordinate offsets between each anchor and its matched ground-truth box

    bbox_inside_weights = np.zeros((len(inds_inside), 4), dtype=np.float32)
    bbox_inside_weights[labels == 1, :] = np.array(cfg.TRAIN.RPN_BBOX_INSIDE_WEIGHTS)  # the four coordinate weights of positive samples are all set to 1

    bbox_outside_weights = np.zeros((len(inds_inside), 4), dtype=np.float32)
    if cfg.TRAIN.RPN_POSITIVE_WEIGHT < 0:  # uniform weighting of examples (given non-uniform sampling)
        num_examples = np.sum(labels >= 0)  # total number of positive and negative samples (don't-care samples excluded)
        positive_weights = np.ones((1, 4)) * 1.0 / num_examples  # normalized weight
        negative_weights = np.ones((1, 4)) * 1.0 / num_examples  # normalized weight
    else:
        assert ((cfg.TRAIN.RPN_POSITIVE_WEIGHT > 0) & (cfg.TRAIN.RPN_POSITIVE_WEIGHT < 1))
        positive_weights = (cfg.TRAIN.RPN_POSITIVE_WEIGHT / np.sum(labels == 1))
        negative_weights = ((1.0 - cfg.TRAIN.RPN_POSITIVE_WEIGHT) / np.sum(labels == 0))
    bbox_outside_weights[labels == 1, :] = positive_weights  # normalized weight
    bbox_outside_weights[labels == 0, :] = negative_weights  # normalized weight

    # only inds_inside was used above, so map labels, bbox_targets, bbox_inside_weights and bbox_outside_weights back
    # onto the full anchor set (including the anchors that cross the image boundary), filling the unused entries with fill
    labels = _unmap(labels, total_anchors, inds_inside, fill=-1)
    bbox_targets = _unmap(bbox_targets, total_anchors, inds_inside, fill=0)
    bbox_inside_weights = _unmap(bbox_inside_weights, total_anchors, inds_inside, fill=0)  # over all anchors: 1 for the four coordinates of positive samples, 0 otherwise
    bbox_outside_weights = _unmap(bbox_outside_weights, total_anchors, inds_inside, fill=0)

    labels = labels.reshape((1, height, width, A)).transpose(0, 3, 1, 2)  # (1*?*?)*9==>1*?*?*9==>1*9*?*?
    labels = labels.reshape((1, 1, A * height, width))  # 1*9*?*?==>1*1*(9*?)*?
    rpn_labels = labels  # positive, negative, or don't care for each position (anchors crossing the image boundary are don't care)

    bbox_targets = bbox_targets.reshape((1, height, width, A * 4))  # 1*(9*?)*?*4==>1*?*?*(9*4)
    rpn_bbox_targets = bbox_targets  # coordinate offsets to the matched ground-truth box (many are 0)
    bbox_inside_weights = bbox_inside_weights.reshape((1, height, width, A * 4))  # 1*(9*?)*?*4==>1*?*?*(9*4)
    rpn_bbox_inside_weights = bbox_inside_weights
    bbox_outside_weights = bbox_outside_weights.reshape((1, height, width, A * 4))  # 1*(9*?)*?*4==>1*?*?*(9*4)
    rpn_bbox_outside_weights = bbox_outside_weights  # normalized weights
    return rpn_labels, rpn_bbox_targets, rpn_bbox_inside_weights, rpn_bbox_outside_weights

def _unmap(data, count, inds, fill=0):
    """ Unmap a subset of item (data) back to the original set of items (of size count) """
    if len(data.shape) == 1:
        ret = np.empty((count,), dtype=np.float32)  # 1-D array
        ret.fill(fill)  # fill with the default value
        ret[inds] = data  # write the actual data at the valid positions
    else:
        ret = np.empty((count,) + data.shape[1:], dtype=np.float32)  # array with the matching number of dimensions
        ret.fill(fill)  # fill with the default value
        ret[inds, :] = data  # write the actual data at the valid positions
    return ret

def _compute_targets(ex_rois, gt_rois):
    """Compute bounding-box regression targets for an image."""
    assert ex_rois.shape[0] == gt_rois.shape[0]
    assert ex_rois.shape[1] == 4
    assert gt_rois.shape[1] == 5

    # use the last four expressions of Eq. 2 to compute the offsets between each anchor and its matched ground-truth box
    return bbox_transform(ex_rois, gt_rois[:, :4]).astype(np.float32, copy=False)  # gt_rois has 5 columns; drop the last one (the class label)

def bbox_transform(ex_rois, gt_rois):
    ex_widths = ex_rois[:, 2] - ex_rois[:, 0] + 1.0  # anchor width
    ex_heights = ex_rois[:, 3] - ex_rois[:, 1] + 1.0  # anchor height
    ex_ctr_x = ex_rois[:, 0] + 0.5 * ex_widths  # anchor center x
    ex_ctr_y = ex_rois[:, 1] + 0.5 * ex_heights  # anchor center y

    gt_widths = gt_rois[:, 2] - gt_rois[:, 0] + 1.0  # ground-truth w
    gt_heights = gt_rois[:, 3] - gt_rois[:, 1] + 1.0  # ground-truth h
    gt_ctr_x = gt_rois[:, 0] + 0.5 * gt_widths  # ground-truth center x
    gt_ctr_y = gt_rois[:, 1] + 0.5 * gt_heights  # ground-truth center y

    targets_dx = (gt_ctr_x - ex_ctr_x) / ex_widths  # dx from x*, xa, wa (last four expressions of Eq. 2)
    targets_dy = (gt_ctr_y - ex_ctr_y) / ex_heights  # dy from y*, ya, ha
    targets_dw = np.log(gt_widths / ex_widths)  # dw from w*, wa
    targets_dh = np.log(gt_heights / ex_heights)  # dh from h*, ha

    targets = np.vstack((targets_dx, targets_dy, targets_dw, targets_dh)).transpose()
    return targets
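
bbox_transform (encoding) and bbox_transform_inv_tf (decoding) are inverse operations up to a one-pixel convention. The NumPy sketch below restates both formulas as they appear in this post and runs one anchor and one ground-truth box through them; the names encode and decode and the box values are chosen only for illustration.

```python
import numpy as np

def _size_and_center(boxes):
    """Width, height and center using the +1 width convention of the listings."""
    w = boxes[:, 2] - boxes[:, 0] + 1.0
    h = boxes[:, 3] - boxes[:, 1] + 1.0
    return w, h, boxes[:, 0] + 0.5 * w, boxes[:, 1] + 0.5 * h

def encode(anchors, gt):
    """Targets (dx, dy, dw, dh), following bbox_transform."""
    aw, ah, ax, ay = _size_and_center(anchors)
    gw, gh, gx, gy = _size_and_center(gt)
    return np.stack([(gx - ax) / aw, (gy - ay) / ah, np.log(gw / aw), np.log(gh / ah)], axis=1)

def decode(anchors, deltas):
    """Boxes from anchors and deltas, following bbox_transform_inv_tf."""
    aw, ah, ax, ay = _size_and_center(anchors)
    px = deltas[:, 0] * aw + ax
    py = deltas[:, 1] * ah + ay
    pw = np.exp(deltas[:, 2]) * aw
    ph = np.exp(deltas[:, 3]) * ah
    return np.stack([px - 0.5 * pw, py - 0.5 * ph, px + 0.5 * pw, py + 0.5 * ph], axis=1)

anchors = np.array([[0.0, 0.0, 15.0, 15.0]])   # the 16*16 base window
gt = np.array([[4.0, 2.0, 27.0, 17.0]])        # a 24*16 ground-truth box
deltas = encode(anchors, gt)
decoded = decode(anchors, deltas)
print(deltas, decoded)
```

With these formulas the decoded top-left corner matches the ground-truth box exactly, while the bottom-right corner comes out one pixel larger: the encoder measures widths as x2 - x1 + 1, but the decoder adds half of that width to the center without subtracting the extra pixel.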

1.8 bbox_overlaps

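bbox_overlaps computes the IoU (intersection area divided by union area) between the anchors and the ground-truth boxes. For details see the reference https://www.cnblogs.com/darkknightzh/p/9043395.html. The code used in the program is: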

def bbox_overlaps(
        np.ndarray[DTYPE_t, ndim=2] boxes,
        np.ndarray[DTYPE_t, ndim=2] query_boxes):
    """
    Parameters
    ----------
    boxes: (N, 4) ndarray of float
    query_boxes: (K, 4) ndarray of float
    Returns
    -------
    overlaps: (N, K) ndarray of overlap between boxes and query_boxes
    """
    cdef unsigned int N = boxes.shape[0]
    cdef unsigned int K = query_boxes.shape[0]
    cdef np.ndarray[DTYPE_t, ndim=2] overlaps = np.zeros((N, K), dtype=DTYPE)
    cdef DTYPE_t iw, ih, box_area
    cdef DTYPE_t ua
    cdef unsigned int k, n
    for k in range(K):
        box_area = (
            (query_boxes[k, 2] - query_boxes[k, 0] + 1) *
            (query_boxes[k, 3] - query_boxes[k, 1] + 1)
        )
        for n in range(N):
            iw = (
                min(boxes[n, 2], query_boxes[k, 2]) -
                max(boxes[n, 0], query_boxes[k, 0]) + 1
            )
            if iw > 0:
                ih = (
                    min(boxes[n, 3], query_boxes[k, 3]) -
                    max(boxes[n, 1], query_boxes[k, 1]) + 1
                )
                if ih > 0:
                    ua = float(
                        (boxes[n, 2] - boxes[n, 0] + 1) *
                        (boxes[n, 3] - boxes[n, 1] + 1) +
                        box_area - iw * ih
                    )
                    overlaps[n, k] = iw * ih / ua
    return overlaps
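
For readers without a Cython toolchain, the same computation can be written with NumPy broadcasting. This is a sketch with the same inclusive-pixel (+1) convention as the listing above, not the repository's code; the name bbox_overlaps_np is made up for illustration.

```python
import numpy as np

def bbox_overlaps_np(boxes, query_boxes):
    """IoU matrix of shape (N, K); extra columns (e.g. a class label) are ignored."""
    b = np.asarray(boxes, dtype=np.float64)[:, np.newaxis, :]         # (N, 1, C)
    q = np.asarray(query_boxes, dtype=np.float64)[np.newaxis, :, :]   # (1, K, C)
    iw = np.minimum(b[..., 2], q[..., 2]) - np.maximum(b[..., 0], q[..., 0]) + 1
    ih = np.minimum(b[..., 3], q[..., 3]) - np.maximum(b[..., 1], q[..., 1]) + 1
    inter = np.clip(iw, 0, None) * np.clip(ih, 0, None)   # zero when the boxes do not intersect
    area_b = (b[..., 2] - b[..., 0] + 1) * (b[..., 3] - b[..., 1] + 1)
    area_q = (q[..., 2] - q[..., 0] + 1) * (q[..., 3] - q[..., 1] + 1)
    return inter / (area_b + area_q - inter)

boxes = np.array([[0, 0, 9, 9]])
query = np.array([[0, 0, 9, 9], [5, 5, 14, 14], [20, 20, 29, 29]])
iou = bbox_overlaps_np(boxes, query)
print(iou)
```

The three query boxes give an identical box (IoU 1), a partial overlap (a 5*5 intersection over a union of 175 pixels), and a disjoint box (IoU 0).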

1.9 _proposal_target_layer

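_proposal_target_layer calls proposal_target_layer, which in turn calls _sample_rois to pick 256 anchors out of the 2000 anchors selected earlier by _proposal_layer. _sample_rois caps the number of positive samples at 64 (when there are fewer, the rest is filled with negative samples), normalizes the coordinates according to Eq. 2, and obtains bbox_targets through _get_bbox_regression_labels. The results are used for RCNN classification and regression. This layer is only used in training; at test time the 300 anchors are used directly, so the layer is not needed.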
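
The 64 / 256 split can be sketched as follows. This is only an illustration of the sampling idea and not the repository's _sample_rois: the function name sample_roi_indices, the single foreground threshold of 0.5, and the use of a NumPy Generator are all assumptions, and the real function may use a separate overlap range for background RoIs.

```python
import numpy as np

def sample_roi_indices(max_overlaps, rng, batch_size=256, fg_fraction=0.25, fg_thresh=0.5):
    """Pick batch_size RoI indices: at most fg_fraction of them foreground, the rest background."""
    fg_inds = np.where(max_overlaps >= fg_thresh)[0]
    bg_inds = np.where(max_overlaps < fg_thresh)[0]
    n_fg = min(int(round(fg_fraction * batch_size)), fg_inds.size)   # capped at 64 by default
    n_bg = batch_size - n_fg                                         # negatives fill the remainder
    fg = rng.choice(fg_inds, size=n_fg, replace=False)
    # sample background with replacement only when there are too few candidates
    bg = rng.choice(bg_inds, size=n_bg, replace=bg_inds.size < n_bg)
    return fg, bg

# Hypothetical input: 100 proposals overlapping a gt box well, 1900 that do not.
max_overlaps = np.concatenate([np.full(100, 0.8), np.full(1900, 0.1)])
fg, bg = sample_roi_indices(max_overlaps, np.random.default_rng(0))
print(fg.size, bg.size)
```

With 100 foreground candidates the split is 64 foreground and 192 background RoIs; with only 10 foreground candidates it would be 10 and 246.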

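=============================================================

Update 190901:

Note: thanks to @pytf for the explanation (see comments #19 and #20). The annotation here is wrong. The comment on line 146:

# rois: 256 anchors selected from the post_nms_topN anchors (the all-zero first column is updated to the class of each anchor)

misdescribes the first column of rois. Since only one image is fed in at a time, the first column of rois is all zeros. The code does not update the first column of rois to the class of each anchor.

Also, it is line 139 that updates the first column of bbox_target_data to the class of each anchor. The explanation of that line is not very clear.

End of update 190901

=============================================================

_proposal_target_layer is defined as follows: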

def _proposal_target_layer(self, rois, roi_scores, name): # post_nms_topN个archors的位置及为1(正样本)的概率

# 只在训练时使用该层,从post_nms_topN个archors中选择256个archors

with tf.variable_scope(name) as scope:

# labels:正样本和负样本对应的真实的类别

# rois:从post_nms_topN个archors中选择256个archors(第一列的全0更新为每个archors对应的类别)

# roi_scores:256个archors对应的为正样本的概率

# bbox_targets:256*(4*21)的矩阵,只有为正样本时,对应类别的坐标才不为0,其他类别的坐标全为0

# bbox_inside_weights:256*(4*21)的矩阵,正样本时,对应类别四个坐标的权重为1,其他全为0

# bbox_outside_weights:256*(4*21)的矩阵,正样本时,对应类别四个坐标的权重为1,其他全为0

rois, roi_scores, labels, bbox_targets, bbox_inside_weights, bbox_outside_weights = tf.py_func(

proposal_target_layer, [rois, roi_scores, self._gt_boxes, self._num_classes],

[tf.float32, tf.float32, tf.float32, tf.float32, tf.float32, tf.float32], name="proposal_target") rois.set_shape([cfg.TRAIN.BATCH_SIZE, 5])

roi_scores.set_shape([cfg.TRAIN.BATCH_SIZE])

labels.set_shape([cfg.TRAIN.BATCH_SIZE, 1])

bbox_targets.set_shape([cfg.TRAIN.BATCH_SIZE, self._num_classes * 4])

bbox_inside_weights.set_shape([cfg.TRAIN.BATCH_SIZE, self._num_classes * 4])

bbox_outside_weights.set_shape([cfg.TRAIN.BATCH_SIZE, self._num_classes * 4]) self._proposal_targets['rois'] = rois

self._proposal_targets['labels'] = tf.to_int32(labels, name="to_int32")

self._proposal_targets['bbox_targets'] = bbox_targets

self._proposal_targets['bbox_inside_weights'] = bbox_inside_weights

        self._proposal_targets['bbox_outside_weights'] = bbox_outside_weights

        self._score_summaries.update(self._proposal_targets)

        return rois, roi_scores

def proposal_target_layer(rpn_rois, rpn_scores, gt_boxes, _num_classes):
    """Assign object detection proposals to ground-truth targets. Produces proposal classification labels and bounding-box regression targets."""
    # Proposal ROIs (0, x1, y1, x2, y2) coming from RPN (i.e., rpn.proposal_layer.ProposalLayer), or any other source
    all_rois = rpn_rois  # rpn_rois is a post_nms_topN*5 matrix
    all_scores = rpn_scores  # rpn_scores has post_nms_topN entries: the probability that each anchor is a positive sample

    if cfg.TRAIN.USE_GT:  # Include ground-truth boxes in the set of candidate rois; USE_GT=False, so this branch is not used
        zeros = np.zeros((gt_boxes.shape[0], 1), dtype=gt_boxes.dtype)
        all_rois = np.vstack((all_rois, np.hstack((zeros, gt_boxes[:, :-1]))))
        all_scores = np.vstack((all_scores, zeros))  # not sure if it a wise appending, but anyway i am not using it

    num_images = 1  # this code can only process one image at a time
    rois_per_image = cfg.TRAIN.BATCH_SIZE / num_images  # number of rois finally selected per image
    fg_rois_per_image = np.round(cfg.TRAIN.FG_FRACTION * rois_per_image)  # number of positive samples: 0.25*rois_per_image

    # Sample rois with classification labels and bounding box regression targets
    # labels: ground-truth class of each selected positive and negative sample
    # rois: the 256 anchors selected from the post_nms_topN anchors (first column stays all 0)
    # roi_scores: probability of being a positive sample for each of the 256 anchors
    # bbox_targets: 256*(4*21) matrix; only for positive samples are the 4 entries of the matching class non-zero
    # bbox_inside_weights: 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0
    labels, rois, roi_scores, bbox_targets, bbox_inside_weights = _sample_rois(all_rois, all_scores, gt_boxes, fg_rois_per_image, rois_per_image, _num_classes)  # select 256 anchors

    rois = rois.reshape(-1, 5)
    roi_scores = roi_scores.reshape(-1)
    labels = labels.reshape(-1, 1)
    bbox_targets = bbox_targets.reshape(-1, _num_classes * 4)
    bbox_inside_weights = bbox_inside_weights.reshape(-1, _num_classes * 4)

    bbox_outside_weights = np.array(bbox_inside_weights > 0).astype(np.float32)  # 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0

    return rois, roi_scores, labels, bbox_targets, bbox_inside_weights, bbox_outside_weights

def _get_bbox_regression_labels(bbox_target_data, num_classes):
    """Bounding-box regression targets (bbox_target_data) are stored in a compact form N x (class, tx, ty, tw, th)

    This function expands those targets into the 4-of-4*K representation used by the network (i.e. only one class has non-zero targets).

    Returns:
        bbox_target (ndarray): N x 4K blob of regression targets
        bbox_inside_weights (ndarray): N x 4K blob of loss weights
    """
    clss = bbox_target_data[:, 0]  # first column: the class
    bbox_targets = np.zeros((clss.size, 4 * num_classes), dtype=np.float32)  # 256*(4*21) matrix
    bbox_inside_weights = np.zeros(bbox_targets.shape, dtype=np.float32)
    inds = np.where(clss > 0)[0]  # indices of the positive samples
    for ind in inds:
        cls = clss[ind]  # class of this positive sample
        start = int(4 * cls)  # first column belonging to that class
        end = start + 4  # end column (each box has 4 coordinates)
        bbox_targets[ind, start:end] = bbox_target_data[ind, 1:]  # write the coordinate offsets into the columns of that class
        bbox_inside_weights[ind, start:end] = cfg.TRAIN.BBOX_INSIDE_WEIGHTS  # write the weights (1.0, 1.0, 1.0, 1.0) into the columns of that class
    # bbox_targets: 256*(4*21) matrix; only for positive samples are the 4 entries of the matching class non-zero
    # bbox_inside_weights: 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0

    return bbox_targets, bbox_inside_weights

def _compute_targets(ex_rois, gt_rois, labels):
    """Compute bounding-box regression targets for an image."""
    assert ex_rois.shape[0] == gt_rois.shape[0]
    assert ex_rois.shape[1] == 4
    assert gt_rois.shape[1] == 4

    targets = bbox_transform(ex_rois, gt_rois)  # compute the coordinate offsets between the 256 anchors and their matched gt boxes, using the last four expressions of Eq. (2) in the paper
    if cfg.TRAIN.BBOX_NORMALIZE_TARGETS_PRECOMPUTED:  # Optionally normalize targets by a precomputed mean and stdev
        targets = ((targets - np.array(cfg.TRAIN.BBOX_NORMALIZE_MEANS)) / np.array(cfg.TRAIN.BBOX_NORMALIZE_STDS))  # normalize: subtract the mean and divide by the standard deviation

    return np.hstack((labels[:, np.newaxis], targets)).astype(np.float32, copy=False)  # the rois had an all-zero first column; in this result the first column is the class

def _sample_rois(all_rois, all_scores, gt_boxes, fg_rois_per_image, rois_per_image, num_classes):  # all_rois: first column all 0, last 4 columns are coordinates; gt_boxes: first 4 columns are coordinates, last column is the class
    """Generate a random sample of RoIs comprising foreground and background examples."""
    # compute the overlap ratio (IoU) between the anchors and gt_boxes
    # note: the np.float alias was removed in NumPy 1.24; on newer NumPy versions use np.float64 here
    overlaps = bbox_overlaps(np.ascontiguousarray(all_rois[:, 1:5], dtype=np.float), np.ascontiguousarray(gt_boxes[:, :4], dtype=np.float))  # overlaps: (rois x gt_boxes)
    gt_assignment = overlaps.argmax(axis=1)  # index of the best-matching gt box for each anchor
    max_overlaps = overlaps.max(axis=1)  # overlap of each anchor with its best-matching gt box
    labels = gt_boxes[gt_assignment, 4]  # class of the best-matching gt box for each anchor

    # anchors whose best overlap reaches the threshold are positive samples; get their indices
    fg_inds = np.where(max_overlaps >= cfg.TRAIN.FG_THRESH)[0]  # Select foreground RoIs as those with >= FG_THRESH overlap
    # Guard against the case when an image has fewer than fg_rois_per_image. Select background RoIs as those within [BG_THRESH_LO, BG_THRESH_HI)
    # anchors whose best overlap lies in the given interval are negative samples; get their indices
    bg_inds = np.where((max_overlaps < cfg.TRAIN.BG_THRESH_HI) & (max_overlaps >= cfg.TRAIN.BG_THRESH_LO))[0]

    # Small modification to the original version where we ensure a fixed number of regions are sampled
    # 256 anchors are selected in the end
    if fg_inds.size > 0 and bg_inds.size > 0:  # both kinds exist: take at most fg_rois_per_image positives and fill the rest with negatives
        fg_rois_per_image = min(fg_rois_per_image, fg_inds.size)
        fg_inds = npr.choice(fg_inds, size=int(fg_rois_per_image), replace=False)
        bg_rois_per_image = rois_per_image - fg_rois_per_image
        to_replace = bg_inds.size < bg_rois_per_image
        bg_inds = npr.choice(bg_inds, size=int(bg_rois_per_image), replace=to_replace)
    elif fg_inds.size > 0:  # only positives: select rois_per_image positives
        to_replace = fg_inds.size < rois_per_image
        fg_inds = npr.choice(fg_inds, size=int(rois_per_image), replace=to_replace)
        fg_rois_per_image = rois_per_image
    elif bg_inds.size > 0:  # only negatives: select rois_per_image negatives
        to_replace = bg_inds.size < rois_per_image
        bg_inds = npr.choice(bg_inds, size=int(rois_per_image), replace=to_replace)
        fg_rois_per_image = 0
    else:
        import pdb
        pdb.set_trace()

    keep_inds = np.append(fg_inds, bg_inds)  # indices of the selected positives and negatives
    labels = labels[keep_inds]  # ground-truth class of each selected sample
    labels[int(fg_rois_per_image):] = 0  # set the class of the negatives to 0
    rois = all_rois[keep_inds]  # the 256 anchors selected from the post_nms_topN anchors
    roi_scores = all_scores[keep_inds]  # probability of being a positive sample for each of the 256 anchors

    # compute the coordinate offsets from the 256 anchors, their matched gt boxes and their true classes
    # (the first column of bbox_target_data holds each anchor's class; the first column of rois is not changed)
    bbox_target_data = _compute_targets(rois[:, 1:5], gt_boxes[gt_assignment[keep_inds], :4], labels)

    # bbox_targets: 256*(4*21) matrix; only for positive samples are the 4 entries of the matching class non-zero
    # bbox_inside_weights: 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0
    bbox_targets, bbox_inside_weights = _get_bbox_regression_labels(bbox_target_data, num_classes)

    # labels: ground-truth class of each selected positive and negative sample
    # rois: the 256 anchors selected from the post_nms_topN anchors (first column stays all 0)
    # roi_scores: probability of being a positive sample for each of the 256 anchors
    # bbox_targets: 256*(4*21) matrix; only for positive samples are the 4 entries of the matching class non-zero
    # bbox_inside_weights: 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0
    return labels, rois, roi_scores, bbox_targets, bbox_inside_weights
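The expansion from the compact N x (class, tx, ty, tw, th) form into the N x 4K form can be tried on a tiny input. The following is a minimal NumPy sketch, not the repository's code: expand_targets is a hypothetical name, and the inside weights (1.0, 1.0, 1.0, 1.0) and the 3-class setup are assumptions chosen for illustration.

```python
import numpy as np

def expand_targets(compact, num_classes, inside_weights=(1.0, 1.0, 1.0, 1.0)):
    """Expand N x (class, tx, ty, tw, th) into N x 4K targets and weights."""
    n = compact.shape[0]
    targets = np.zeros((n, 4 * num_classes), dtype=np.float32)
    weights = np.zeros_like(targets)
    for i in np.where(compact[:, 0] > 0)[0]:      # positive samples only
        start = 4 * int(compact[i, 0])            # first column of that class
        targets[i, start:start + 4] = compact[i, 1:]
        weights[i, start:start + 4] = inside_weights
    return targets, weights

compact = np.array([[2, 0.1, 0.2, 0.3, 0.4],     # positive sample of class 2
                    [0, 0.5, 0.5, 0.5, 0.5]],    # background: stays all zero
                   dtype=np.float32)
targets, weights = expand_targets(compact, num_classes=3)
outside = (weights > 0).astype(np.float32)       # same rule as bbox_outside_weights
```

Only columns 8 to 11 of the first row are filled, which is why the later regression loss ignores every class except the ground-truth one.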

1.10 _crop_pool_layer

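_crop_pool_layer crops the 256 anchors out of the feature map, resizes each crop to 14*14, and then max-pools it to a fixed size of 7*7. The resulting features are what the rcnn network uses for classification and coordinate regression.

The function first computes the width and height of the original image that the feature map corresponds to, and normalizes the rois (given in original-image coordinates) by them. It then uses tf.image.crop_and_resize, which requires normalized coordinates, to resize each crop to [cfg.POOLING_SIZE * 2, cfg.POOLING_SIZE * 2], and finally pools with slim.max_pool2d, so the output always has the same fixed size (256*7*7*512).

tf.slice(rois, [0, 0], [-1, 1]) slices its input. The second argument is the starting coordinate and the third argument is the size of the slice. Note that for a 2-D input both arguments are in (y, x) order, and for a 3-D input both are in (z, y, x) order. A size of -1 means that everything remaining along that dimension is taken. The call above therefore starts at (0, 0) and extracts the first column of rois: the y size of -1 selects all rows and the x size of 1 selects one column.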
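The begin/size semantics described above can be reproduced in NumPy. This is only an illustrative sketch: slice_like_tf is a hypothetical helper, not a TensorFlow function, and the two rois are made-up values.

```python
import numpy as np

def slice_like_tf(arr, begin, size):
    """NumPy emulation of tf.slice(arr, begin, size); a size of -1 means 'to the end'."""
    index = tuple(
        slice(b, None if s == -1 else b + s)
        for b, s in zip(begin, size)
    )
    return arr[index]

rois = np.array([[0, 10, 20, 30, 40],
                 [0, 15, 25, 35, 45]], dtype=np.float32)
batch_ids = slice_like_tf(rois, [0, 0], [-1, 1])   # first column, shape (2, 1)
x1 = slice_like_tf(rois, [0, 1], [-1, 1])          # second column, shape (2, 1)
```

The result keeps both dimensions, which is why the original code follows the slice with tf.squeeze(..., [1]) to get a 1-D vector.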

_crop_pool_layer is defined as follows:

def _crop_pool_layer(self, bottom, rois, name):
    with tf.variable_scope(name) as scope:
        batch_ids = tf.squeeze(tf.slice(rois, [0, 0], [-1, 1], name="batch_id"), [1])  # first column: the index of the image in the batch (all 0, since only one image is fed in), not the class
        bottom_shape = tf.shape(bottom)  # Get the normalized coordinates of bounding boxes
        height = (tf.to_float(bottom_shape[1]) - 1.) * np.float32(self._feat_stride[0])
        width = (tf.to_float(bottom_shape[2]) - 1.) * np.float32(self._feat_stride[0])
        x1 = tf.slice(rois, [0, 1], [-1, 1], name="x1") / width  # crop_and_resize expects boxes in the range 0-1, so normalize the coordinates
        y1 = tf.slice(rois, [0, 2], [-1, 1], name="y1") / height
        x2 = tf.slice(rois, [0, 3], [-1, 1], name="x2") / width
        y2 = tf.slice(rois, [0, 4], [-1, 1], name="y2") / height
        bboxes = tf.stop_gradient(tf.concat([y1, x1, y2, x2], axis=1))  # Won't be back-propagated to rois anyway, but to save time
        pre_pool_size = cfg.POOLING_SIZE * 2
        # crop 256 features according to bboxes and resize them to 14*14 (same number of channels as bottom); the batch size becomes 256
        crops = tf.image.crop_and_resize(bottom, bboxes, tf.to_int32(batch_ids), [pre_pool_size, pre_pool_size], name="crops")

    return slim.max_pool2d(crops, [2, 2], padding='SAME')  # after max pooling the features are 7*7
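The coordinate normalization performed before crop_and_resize can be checked with plain NumPy. This is a minimal sketch under stated assumptions: normalize_rois is a hypothetical name, feat_stride=16 is assumed (the stride of the VGG16 head), and the 24*38 feature map and the rois are made-up values.

```python
import numpy as np

def normalize_rois(rois, feat_h, feat_w, feat_stride=16):
    """Map rois (batch_id, x1, y1, x2, y2) in image pixels to [y1, x1, y2, x2] in 0-1."""
    height = (feat_h - 1.0) * feat_stride   # image height covered by the feature map
    width = (feat_w - 1.0) * feat_stride    # image width covered by the feature map
    x1, y1, x2, y2 = (rois[:, i] for i in range(1, 5))
    return np.stack([y1 / height, x1 / width, y2 / height, x2 / width], axis=1)

rois = np.array([[0, 0, 0, 592, 368],
                 [0, 148, 92, 296, 184]], dtype=np.float32)
boxes = normalize_rois(rois, feat_h=24, feat_w=38)   # height = 368, width = 592
```

Note the column order changes from (x1, y1, x2, y2) to (y1, x1, y2, x2), matching the tf.concat call in the code above.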

1.11 _head_to_tail

_head_to_tail takes the features of the 256 anchors obtained above and applies two fc layers (with ReLU) and two dropout layers (present in training, absent in testing), reducing the features to 4096 dimensions for the classification and regression in _region_classification.

_head_to_tail is located in vgg16.py and is defined as follows:

def _head_to_tail(self, pool5, is_training, reuse=None):
    with tf.variable_scope(self._scope, self._scope, reuse=reuse):
        pool5_flat = slim.flatten(pool5, scope='flatten')
        fc6 = slim.fully_connected(pool5_flat, 4096, scope='fc6')
        if is_training:
            fc6 = slim.dropout(fc6, keep_prob=0.5, is_training=True, scope='dropout6')
        fc7 = slim.fully_connected(fc6, 4096, scope='fc7')
        if is_training:
            fc7 = slim.dropout(fc7, keep_prob=0.5, is_training=True, scope='dropout7')

    return fc7

1.12 _region_classification

fc7 is classified and regressed by _region_classification. First, fc7 goes through an fc layer (no ReLU) that reduces it to 21 dimensions (the number of classes), giving cls_score, from which the probabilities cls_prob and the predicted class cls_pred are obtained (used for the rcnn classification). Second, fc7 goes through another fc layer (no ReLU) that reduces it to 21*4 dimensions, giving bbox_pred (used for the rcnn regression).

_region_classification is defined as follows:

def _region_classification(self, fc7, is_training, initializer, initializer_bbox):
    # add an fc layer whose output size is the total number of classes, for classification
    cls_score = slim.fully_connected(fc7, self._num_classes, weights_initializer=initializer, trainable=is_training, activation_fn=None, scope='cls_score')
    cls_prob = self._softmax_layer(cls_score, "cls_prob")  # probability of each class
    cls_pred = tf.argmax(cls_score, axis=1, name="cls_pred")  # predicted class
    # add an fc layer that predicts the position offsets
    bbox_pred = slim.fully_connected(fc7, self._num_classes * 4, weights_initializer=initializer_bbox, trainable=is_training, activation_fn=None, scope='bbox_pred')

    self._predictions["cls_score"] = cls_score  # rcnn classification scores of the 256 anchors
    self._predictions["cls_pred"] = cls_pred
    self._predictions["cls_prob"] = cls_prob
    self._predictions["bbox_pred"] = bbox_pred

    return cls_prob, bbox_pred

With the steps above, the network has been built: rois, cls_prob, bbox_pred = self._build_network(training).

rois: 256*5

cls_prob: 256*21 (number of classes)

bbox_pred: 256*84 (number of classes * 4)

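2. Loss function: _add_losses

faster rcnn has two losses: the loss of the rpn network plus the loss of the rcnn network. Each of them consists of a classification loss and a regression loss. The classification loss is cross entropy, and the regression loss is the smooth L1 loss.

The code adds the corresponding losses in _add_losses. rpn_cross_entropy and rpn_loss_box are the two losses of the RPN network; cross_entropy and loss_box, computed from cls_score and bbox_pred, are the two losses of the rcnn network. The first two belong to the binary decision of whether an anchor is a positive sample; the last two are computed over a batch of 256.

The indices where rpn_label (1,?,?,2) is not -1 are taken, the entries of rpn_cls_score (1,?,?,2) and rpn_label at those indices are gathered, and sparse_softmax_cross_entropy_with_logits is computed on them, giving rpn_cross_entropy.

_smooth_l1_loss of rpn_bbox_pred (1,?,?,36) and rpn_bbox_targets (1,?,?,36) gives rpn_loss_box.

sparse_softmax_cross_entropy_with_logits of cls_score (256*21) and label (256) gives cross_entropy.

_smooth_l1_loss of bbox_pred (256*84) and bbox_targets (256*84) gives loss_box.

Finally, the four losses above are added to get the total loss (regularization_loss still has to be added on top).

This completes the construction of the losses.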
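The RPN classification loss described above (drop the anchors labelled -1, then average the softmax cross entropy over the rest) can be sketched in NumPy. This is an illustration, not the TensorFlow code: rpn_cross_entropy here is a hypothetical function, and the three anchors with (background, foreground) logits are made-up values.

```python
import numpy as np

def rpn_cross_entropy(scores, labels):
    """Mean softmax cross entropy over the anchors whose label is not -1."""
    keep = np.where(labels != -1)[0]                      # anchors that are not "don't care"
    logits = scores[keep]
    target = labels[keep]
    logits = logits - logits.max(axis=1, keepdims=True)   # for numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -log_prob[np.arange(keep.size), target].mean()

scores = np.array([[2.0, 0.0],     # anchor 0: background logit is higher
                   [0.0, 2.0],     # anchor 1: foreground logit is higher
                   [5.0, -5.0]])   # anchor 2: ignored below
labels = np.array([0, 1, -1])
loss = rpn_cross_entropy(scores, labels)
```

Because anchor 2 carries the label -1, its logits have no influence on the result.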

The code adds the losses in _add_losses:

def _add_losses(self, sigma_rpn=3.0):
    with tf.variable_scope('LOSS_' + self._tag) as scope:
        rpn_cls_score = tf.reshape(self._predictions['rpn_cls_score_reshape'], [-1, 2])  # positive/negative scores of every anchor
        rpn_label = tf.reshape(self._anchor_targets['rpn_labels'], [-1])  # whether each position of the feature map is a positive sample, a negative sample or "don't care" (anchors crossing the image border were removed)
        rpn_select = tf.where(tf.not_equal(rpn_label, -1))  # indices of the anchors that are not "don't care"
        rpn_cls_score = tf.reshape(tf.gather(rpn_cls_score, rpn_select), [-1, 2])  # drop the "don't care" anchors
        rpn_label = tf.reshape(tf.gather(rpn_label, rpn_select), [-1])  # drop the "don't care" labels
        rpn_cross_entropy = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(logits=rpn_cls_score, labels=rpn_label))  # binary classification loss of the rpn

        rpn_bbox_pred = self._predictions['rpn_bbox_pred']  # regressed position offsets of the 9 anchors at every position
        rpn_bbox_targets = self._anchor_targets['rpn_bbox_targets']  # coordinate offsets between every position of the feature map and its matched positive sample (many are 0)
        rpn_bbox_inside_weights = self._anchor_targets['rpn_bbox_inside_weights']  # 1 for positive samples (0 for negative and "don't care" samples)
        rpn_bbox_outside_weights = self._anchor_targets['rpn_bbox_outside_weights']  # normalization weights for positive and negative samples ("don't care" samples excluded)
        rpn_loss_box = self._smooth_l1_loss(rpn_bbox_pred, rpn_bbox_targets, rpn_bbox_inside_weights, rpn_bbox_outside_weights, sigma=sigma_rpn, dim=[1, 2, 3])

        cls_score = self._predictions["cls_score"]  # rcnn classification scores of the 256 anchors
        label = tf.reshape(self._proposal_targets["labels"], [-1])  # ground-truth class of each positive and negative sample
        cross_entropy = tf.reduce_mean(tf.nn.sparse_softmax_cross_entropy_with_logits(logits=cls_score, labels=label))  # classification loss of the rcnn

        bbox_pred = self._predictions['bbox_pred']  # RCNN, bbox loss
        bbox_targets = self._proposal_targets['bbox_targets']  # 256*(4*21) matrix; only for positive samples are the 4 entries of the matching class non-zero
        bbox_inside_weights = self._proposal_targets['bbox_inside_weights']  # 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0
        bbox_outside_weights = self._proposal_targets['bbox_outside_weights']  # 256*(4*21) matrix; for positive samples the 4 entries of the matching class are 1, all others 0
        loss_box = self._smooth_l1_loss(bbox_pred, bbox_targets, bbox_inside_weights, bbox_outside_weights)

        self._losses['cross_entropy'] = cross_entropy
        self._losses['loss_box'] = loss_box
        self._losses['rpn_cross_entropy'] = rpn_cross_entropy
        self._losses['rpn_loss_box'] = rpn_loss_box

        loss = cross_entropy + loss_box + rpn_cross_entropy + rpn_loss_box  # total loss
        regularization_loss = tf.add_n(tf.losses.get_regularization_losses(), 'regu')
        self._losses['total_loss'] = loss + regularization_loss

        self._event_summaries.update(self._losses)

    return loss

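The smooth L1 loss is defined as follows (see the fast rcnn paper):

$L_{loc}(t^{u}, v) = \sum\limits_{i \in \{x, y, w, h\}} \mathrm{smooth}_{L_1}(t_i^{u} - v_i) \text{ (2)}$

in which

$\mathrm{smooth}_{L_1}(x) = \begin{cases} 0.5x^{2} & \text{if } |x| < 1 \\ |x| - 0.5 & \text{otherwise} \end{cases} \text{ (3)}$

The code first computes box_diff, the difference between pred and target, then in_box_diff, the difference for the positive samples only (multiplying by the weights bbox_inside_weights sets the negative samples to 0), and its absolute value abs_in_box_diff. It then computes smoothL1_sign, the indicator of which case of Eq. (3) applies, obtains the smooth L1 loss in_loss_box, multiplies it by the weights bbox_outside_weights, and reduces the result to the final loss loss_box. The code generalizes Eq. (3) with a parameter sigma: the quadratic branch 0.5*sigma^2*x^2 is used when |x| < 1/sigma^2, and the linear branch |x| - 0.5/sigma^2 otherwise; sigma = 1 gives Eq. (3) itself.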

_smooth_l1_loss is defined as follows:

def _smooth_l1_loss(self, bbox_pred, bbox_targets, bbox_inside_weights, bbox_outside_weights, sigma=1.0, dim=[1]):
    sigma_2 = sigma ** 2
    box_diff = bbox_pred - bbox_targets  # prediction minus target
    in_box_diff = bbox_inside_weights * box_diff  # multiply by the weight 1 of the positive samples (rpn: removes negative and "don't care" samples; rcnn: removes negative samples)
    abs_in_box_diff = tf.abs(in_box_diff)  # absolute value
    smoothL1_sign = tf.stop_gradient(tf.to_float(tf.less(abs_in_box_diff, 1. / sigma_2)))  # flag: 1 where the absolute difference is below the threshold (quadratic branch)
    in_loss_box = tf.pow(in_box_diff, 2) * (sigma_2 / 2.) * smoothL1_sign + (abs_in_box_diff - (0.5 / sigma_2)) * (1. - smoothL1_sign)  # smooth l1 loss
    out_loss_box = bbox_outside_weights * in_loss_box  # rpn: divides by the number of valid samples ("don't care" samples excluded) to normalize; rcnn: weight 1 for the four coordinates of positive samples, 0 for negative samples
    loss_box = tf.reduce_mean(tf.reduce_sum(out_loss_box, axis=dim))
    return loss_box
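The same computation can be written in NumPy to check small cases by hand. This is a sketch that mirrors the steps of _smooth_l1_loss above, not a replacement for it: smooth_l1 is a hypothetical name and the inputs are made-up values.

```python
import numpy as np

def smooth_l1(pred, target, inside_w, outside_w, sigma=1.0, dim=(1,)):
    """Smooth L1 loss with the sigma parameterization used in the code above."""
    sigma_2 = sigma ** 2
    diff = inside_w * (pred - target)                    # zero out non-positive samples
    abs_diff = np.abs(diff)
    quad = (abs_diff < 1.0 / sigma_2).astype(np.float64)  # 1 on the quadratic branch
    per_elem = (0.5 * sigma_2 * diff ** 2 * quad
                + (abs_diff - 0.5 / sigma_2) * (1.0 - quad))
    return np.mean(np.sum(outside_w * per_elem, axis=dim))

pred = np.array([[0.5, 2.0],     # positive sample
                 [3.0, 3.0]])    # negative sample, masked out by the weights
target = np.zeros((2, 2))
weights = np.array([[1.0, 1.0],
                    [0.0, 0.0]])
loss = smooth_l1(pred, target, weights, weights)             # (0.125 + 1.5) / 2 = 0.8125
loss_rpn = smooth_l1(pred, target, weights, weights, sigma=3.0)
```

With sigma=3.0 (the value of sigma_rpn) the threshold drops to 1/9, so both entries of the first row fall on the linear branch.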

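3. Test stage:

At test time the predicted bbox_pred has to be multiplied by (0.1, 0.1, 0.2, 0.2) (and then have (0.0, 0.0, 0.0, 0.0) added). In create_architecture:

if testing:
    stds = np.tile(np.array(cfg.TRAIN.BBOX_NORMALIZE_STDS), (self._num_classes))
    means = np.tile(np.array(cfg.TRAIN.BBOX_NORMALIZE_MEANS), (self._num_classes))
    self._predictions["bbox_pred"] *= stds  # during training the position offsets in _region_proposal have the mean subtracted and are divided by the standard deviation, so testing has to reverse this
    self._predictions["bbox_pred"] += means

For details see the function demo in demo.py (which calls im_detect in test.py). When that function is called directly in python, the multiply-then-add step does not have to be done by hand. After freezing the model, however, the values obtained for self._predictions["bbox_pred"] were wrong; debugging showed that the results agree once the multiply-then-add step is applied.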
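For a frozen model, the multiply-then-add step can be applied to the fetched array in NumPy. This is a minimal sketch under stated assumptions: denormalize_bbox_pred is a hypothetical helper, the means and stds are the values quoted above, and the all-ones input with 3 classes is made up for illustration.

```python
import numpy as np

BBOX_NORMALIZE_MEANS = (0.0, 0.0, 0.0, 0.0)   # values quoted in the text above
BBOX_NORMALIZE_STDS = (0.1, 0.1, 0.2, 0.2)

def denormalize_bbox_pred(bbox_pred, num_classes):
    """Undo the target normalization: multiply by the stds, then add the means."""
    stds = np.tile(np.array(BBOX_NORMALIZE_STDS), num_classes)
    means = np.tile(np.array(BBOX_NORMALIZE_MEANS), num_classes)
    return bbox_pred * stds + means

raw = np.ones((2, 4 * 3))                      # pretend output for 2 rois, 3 classes
deltas = denormalize_bbox_pred(raw, num_classes=3)
```

np.tile repeats the 4 values once per class, so the stds and means line up with the (num_classes * 4) columns of bbox_pred.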

