Intro to Machine Learning

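This section is an introduction to machine learning. It covers two simple models, both used here for regression:

decision trees and random forests.

It does not go into how they work internally and only shows the basic API calls.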

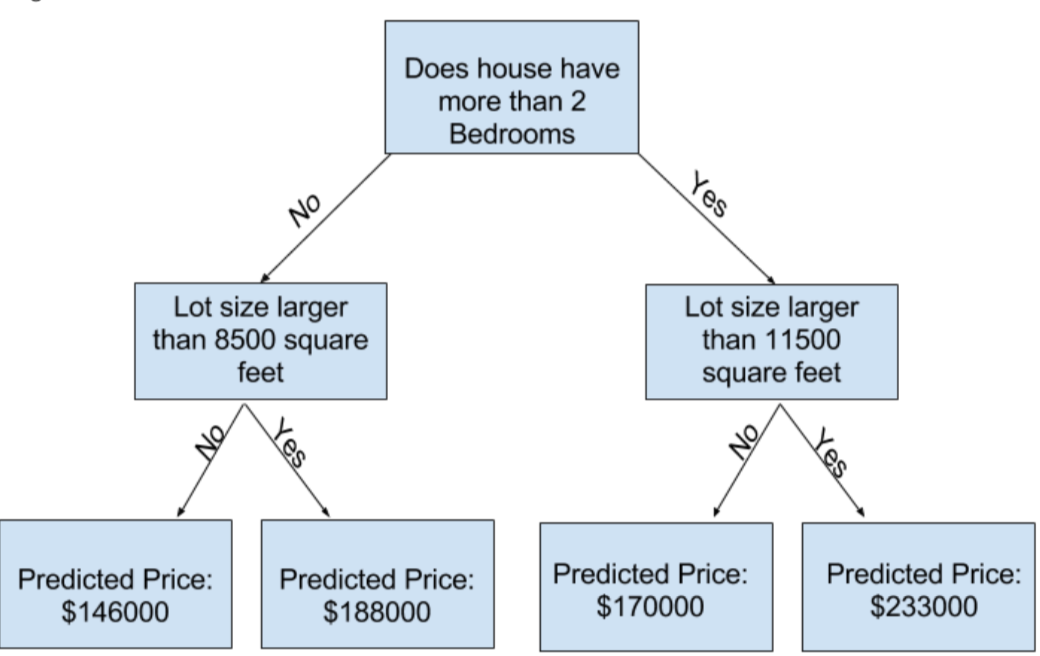

1. How Models Work

We use a simple decision tree as the example.

This step of capturing patterns from data is called fitting or training the model.

The data used to fit the model is called the training data.

After the model has been fit, you can apply it to new data to predict prices of additional homes.

2. Basic Data Exploration

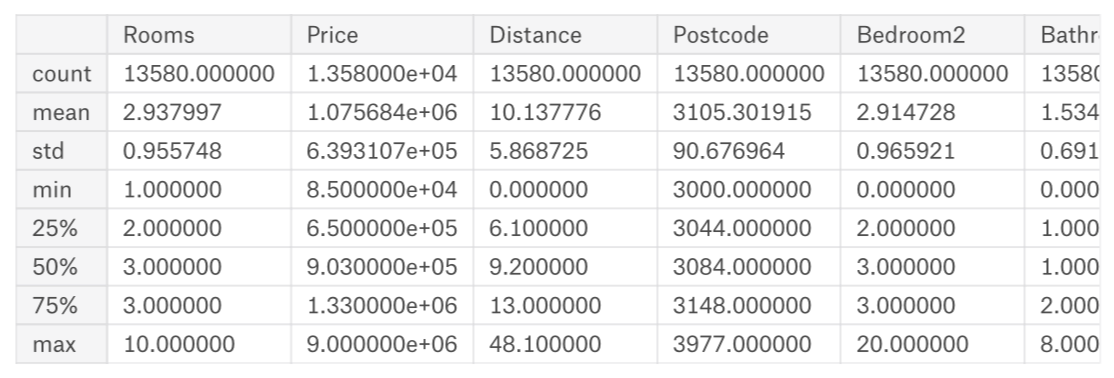

Use describe() from pandas to explore the data:

import pandas as pd

melbourne_file_path = '../input/melbourne-housing-snapshot/melb_data.csv'

melbourne_data = pd.read_csv(melbourne_file_path)

melbourne_data.describe()

output:

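Note: what each value means

count: the number of non-missing values

mean: the average

std: the standard deviation, which measures how spread out the values are

min, 25%, 50%, 75%, max: sort each column from lowest to highest. The smallest value is min. The value a quarter of the way through the sorted list, larger than 25% of the values and smaller than 75% of them, is the 25% value. The 50% and 75% values are defined the same way, and max is the largest value.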
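As a small illustration of those statistics, here is a sketch on a made-up four-row table. The column values are invented for this example and are not taken from the Melbourne data. It shows that count skips missing values and that the 50% row is the median.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data; one Price value is deliberately missing.
toy = pd.DataFrame({
    "Rooms": [1, 2, 3, 4],
    "Price": [100.0, 200.0, np.nan, 400.0],
})

summary = toy.describe()
print(summary)

# count ignores the missing Price, so the two columns report different counts.
rooms_count = summary.loc["count", "Rooms"]
price_count = summary.loc["count", "Price"]

# The 50% row is the median of the non-missing values in each column.
rooms_median = summary.loc["50%", "Rooms"]
price_median = summary.loc["50%", "Price"]
```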

3. Your First Machine Learning Model

- Selecting Data for Modeling

import pandas as pd

melbourne_file_path = '../input/melbourne-housing-snapshot/melb_data.csv'

melbourne_data = pd.read_csv(melbourne_file_path)

- Selecting The Prediction Target

Method: use dot notation to select the prediction target, which by convention is called y:

y = melbourne_data.Price

- Choosing "Features"

melbourne_features = ['Rooms', 'Bathroom', 'Landsize', 'Lattitude', 'Longtitude']

X = melbourne_data[melbourne_features]

Check that the data loaded correctly:

X.head()

Explore the basic statistics of the data:

X.describe()

- Building Your Model

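We use scikit-learn to build the model. Its user guide on supervised learning is linked below; look into the underlying theory yourself as needed.

https://scikit-learn.org/stable/supervised_learning.html#supervised-learning

Steps to build a model: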

- Define:

What type of model will it be? A decision tree? Some other type of model? Some other parameters of the model type are specified too.

- Fit:

Capture patterns from provided data. This is the heart of modeling

- Predict:

Just what it sounds like

- Evaluate:

Determine how accurate the model's predictions are

Implementation:

from sklearn.tree import DecisionTreeRegressor

melbourne_model = DecisionTreeRegressor(random_state=1)

melbourne_model.fit(X, y)

Print the first few rows:

print(X.head())

The predicted prices are:

print(melbourne_model.predict(X.head()))
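The define, fit and predict steps can also be tried without the Kaggle dataset. The sketch below uses a small synthetic table; the `toy_` names and the pricing rule are assumptions made up for this example. Because the tree is unrestricted and scored on rows it was trained on, its predictions reproduce the training targets, which is why the next section evaluates on held-out data instead.

```python
import numpy as np
import pandas as pd
from sklearn.tree import DecisionTreeRegressor

# Synthetic stand-in for the housing table (all values are made up).
rng = np.random.default_rng(0)
toy_X = pd.DataFrame({
    "Rooms": rng.integers(1, 6, size=50),
    "Landsize": rng.uniform(100, 1000, size=50),
})
toy_y = 100_000 * toy_X["Rooms"] + 50 * toy_X["Landsize"]

# Define: choose the model type and its parameters.
toy_model = DecisionTreeRegressor(random_state=1)

# Fit: capture patterns from the data.
toy_model.fit(toy_X, toy_y)

# Predict: here on the first rows of the data the model was trained on.
toy_preds = toy_model.predict(toy_X.head())
print(toy_preds)
print(toy_y.head().to_numpy())
```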

4. Model Validation

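The predicted prices will differ from the actual prices, so we need a way to measure how large the gap is.

We use Mean Absolute Error (MAE). For each house, the prediction error is:

error = actual - predicted

MAE takes the absolute value of each error and then averages those absolute errors.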
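A minimal sketch of MAE computed by hand on made-up numbers, checked against scikit-learn's mean_absolute_error:

```python
import numpy as np
from sklearn.metrics import mean_absolute_error

# Made-up prices, purely for illustration.
actual = np.array([100.0, 150.0, 200.0])
predicted = np.array([110.0, 140.0, 230.0])

# error = actual - predicted, one value per house.
errors = actual - predicted

# MAE: drop the sign of each error, then average.
manual_mae = np.mean(np.abs(errors))
sklearn_mae = mean_absolute_error(actual, predicted)

print(manual_mae, sklearn_mae)
```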

In practice, we split the data into two parts: one used for training, called the training data, and one used for validation, called the validation data.

from sklearn.metrics import mean_absolute_error

from sklearn.model_selection import train_test_split

train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=0)

melbourne_model = DecisionTreeRegressor()

melbourne_model.fit(train_X, train_y)

val_predictions = melbourne_model.predict(val_X)

print(mean_absolute_error(val_y, val_predictions))
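To see why the split matters, the following sketch compares the error on rows the model has seen with the error on held-out rows. It runs on synthetic data; the `demo_` names and the target formula are assumptions for illustration only.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

# Synthetic data: 200 rows, 3 features, noisy linear target (all made up).
rng = np.random.default_rng(0)
demo_X = rng.uniform(0, 10, size=(200, 3))
demo_y = demo_X @ np.array([3.0, -2.0, 1.0]) + rng.normal(0, 1, size=200)

# By default train_test_split holds out 25% of the rows for validation.
tr_X, va_X, tr_y, va_y = train_test_split(demo_X, demo_y, random_state=0)

demo_model = DecisionTreeRegressor(random_state=0)
demo_model.fit(tr_X, tr_y)

# In-sample error: scored on rows the model has already seen.
in_sample_mae = mean_absolute_error(tr_y, demo_model.predict(tr_X))
# Validation error: scored on rows held out from training.
validation_mae = mean_absolute_error(va_y, demo_model.predict(va_X))

print(in_sample_mae, validation_mae)
```

The in-sample score looks far better than the validation score because an unrestricted tree can memorize its training rows, so only the validation score says anything about new data.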

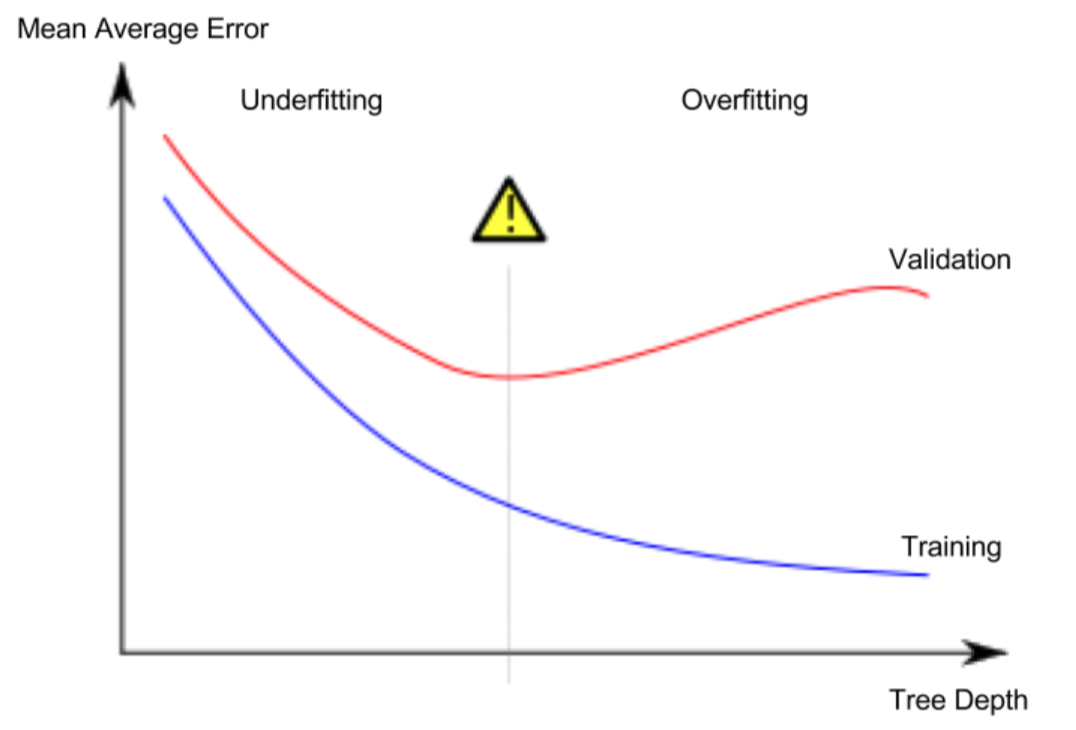

5. Underfitting and Overfitting

- Overfitting: a model matches the training data almost perfectly but does poorly on validation data and other new data.

- Underfitting: a model fails to capture important distinctions and patterns in the data, so it performs poorly even on the training data.

The more leaves we allow the model to make, the more we move from the underfitting area in the above graph to the overfitting area.

from sklearn.metrics import mean_absolute_error

from sklearn.tree import DecsionTreeRegressor

def get_ame(max_leaf_nodes, train_X, val_X, train_y, val_y):

model = DecisionTreeRegressor(max_leaf_nodes = max_leaf_nodes, random_state = 0)

model.fit(train_X, train_y)

preds_val = model.predict(val_X)

mae = mean_absolute_error(val_y, preds_val)

return(mae)

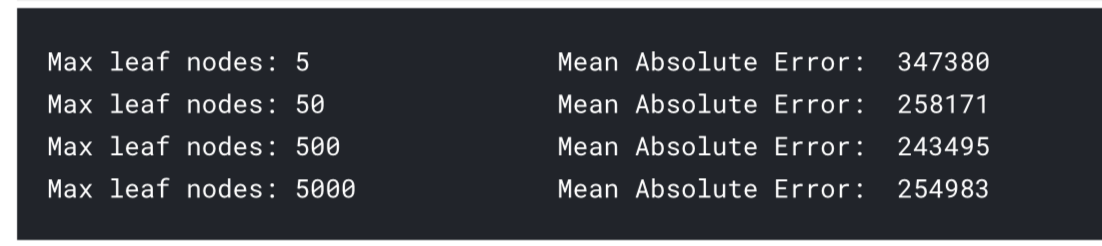

我可以使用循环比较选择最合适的max_leaf_nodes

for max_leaf_nodes in [5,50,500,5000]:

my_ame = get_ame(max_leaf_nodes, train_X, val_X, train_y, val_y)

print(max_leaf_nodes, my_ame)

In the end, the MAE is lowest when max_leaf_nodes is 500. Next, we switch to a different model.
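Rather than reading the best value off the printed output, the comparison can be collected in a dictionary and the minimum picked programmatically. This is a sketch on synthetic data; `score_tree_size`, the `demo_` names and the target formula are hypothetical, and the winning size on this toy data need not match the 500 found on the housing data.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeRegressor

def score_tree_size(max_leaf_nodes, tr_X, va_X, tr_y, va_y):
    """Return the validation MAE of a tree limited to max_leaf_nodes leaves."""
    model = DecisionTreeRegressor(max_leaf_nodes=max_leaf_nodes, random_state=0)
    model.fit(tr_X, tr_y)
    return mean_absolute_error(va_y, model.predict(va_X))

# Synthetic data standing in for the housing table (values are made up).
rng = np.random.default_rng(0)
demo_X = rng.uniform(0, 10, size=(400, 2))
demo_y = np.sin(demo_X[:, 0]) * 10 + demo_X[:, 1] + rng.normal(0, 1, size=400)
tr_X, va_X, tr_y, va_y = train_test_split(demo_X, demo_y, random_state=0)

candidates = [5, 50, 500, 5000]
scores = {n: score_tree_size(n, tr_X, va_X, tr_y, va_y) for n in candidates}

# Pick the candidate with the lowest validation MAE.
best_size = min(scores, key=scores.get)
print(scores, best_size)
```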

6. Random Forests

The random forest uses many trees, and it makes a prediction by averaging the predictions of each component tree. It generally has much better predictive accuracy than a single decision tree and it works well with default parameters.
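The averaging described above can be checked directly. In this sketch, on synthetic data with made-up `demo_` names, the fitted component trees are read from the forest's estimators_ attribute and their predictions are averaged by hand, then compared with the forest's own prediction.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Synthetic data (made up for illustration).
rng = np.random.default_rng(0)
demo_X = rng.uniform(0, 10, size=(200, 3))
demo_y = demo_X[:, 0] * 2 + demo_X[:, 1] ** 2 + rng.normal(0, 1, size=200)

# A small forest keeps the example fast; 20 trees is an arbitrary choice.
demo_forest = RandomForestRegressor(n_estimators=20, random_state=1)
demo_forest.fit(demo_X, demo_y)

new_rows = rng.uniform(0, 10, size=(5, 3))

# estimators_ holds the fitted component trees; collect one prediction row per tree.
per_tree = np.array([tree.predict(new_rows) for tree in demo_forest.estimators_])
averaged = per_tree.mean(axis=0)

forest_preds = demo_forest.predict(new_rows)
print(np.allclose(averaged, forest_preds))
```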

from sklearn.ensemble import RandomForestRegressor

from sklearn.metrics import mean_absolute_error

forest_model = RandomForestRegressor(random_state=1)

forest_model.fit(train_X,train_y)

melb_preds = forest_model.predict(val_X)

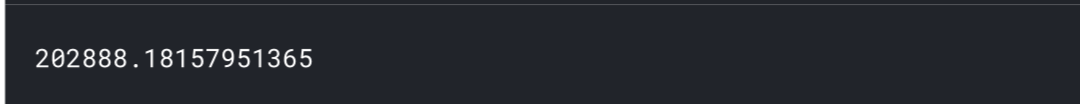

print(mean_absolute_error(val_y, melb_preds))

The resulting error is lower than that of the decision tree.

One of the best features of Random Forest models is that they generally work reasonably well even without the kind of tuning done above for max_leaf_nodes.

7. Machine Learning Competitions

- Build a Random Forest model with all of your data

- Read in the "test" data, which doesn't include values for the target. Predict home values in the test data with your Random Forest model.

- Submit those predictions to the competition and see your score.

- Optionally, come back to see if you can improve your model by adding features or changing your model. Then you can resubmit to see how that stacks up on the competition leaderboard.
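The first three steps above can be sketched as follows. The tables here are synthetic stand-ins for the competition files, and the feature list and the "Id" and "Price" column names are assumptions; a real competition specifies its own file paths and submission format.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

# Synthetic stand-ins for the competition files (all values made up).
rng = np.random.default_rng(0)
feature_names = ["Rooms", "Landsize"]  # hypothetical feature list
train_df = pd.DataFrame({
    "Rooms": rng.integers(1, 6, size=100),
    "Landsize": rng.uniform(100, 1000, size=100),
})
train_df["Price"] = 100_000 * train_df["Rooms"] + 50 * train_df["Landsize"]

# The test table has an Id and the features, but no target column.
test_df = pd.DataFrame({
    "Id": np.arange(1, 11),
    "Rooms": rng.integers(1, 6, size=10),
    "Landsize": rng.uniform(100, 1000, size=10),
})

# Step 1: fit on all of the labeled data (no validation split held back).
final_model = RandomForestRegressor(random_state=1)
final_model.fit(train_df[feature_names], train_df["Price"])

# Step 2: predict the target for the test rows.
test_preds = final_model.predict(test_df[feature_names])

# Step 3: assemble the table to submit; the column names are assumptions.
submission = pd.DataFrame({"Id": test_df["Id"], "Price": test_preds})
csv_text = submission.to_csv(index=False)
print(csv_text.splitlines()[0])
```

Calling to_csv without a path returns the CSV as a string; passing a file name instead would write the file to upload.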
