1.1 Introduction中 Apache Kafka™ is a distributed streaming platform. What exactly does that mean?(官网剖析)(博主推荐)

不多说,直接上干货!

一切来源于官网

http://kafka.apache.org/documentation/

Apache Kafka™ is a distributed streaming platform. What exactly does that mean?

Kafka是一个分布式流数据处理平台。这到底是什么意思呢?

We think of a streaming platform as having three key capabilities:

我们认为一个流数据处理平台必须具备三个关键功能:

- It lets you publish and subscribe to streams of records. In this respect it is similar to a message queue or enterprise messaging system.

- It lets you store streams of records in a fault-tolerant way.

- It lets you process streams of records as they occur.

1、它允许你发布订阅流数据,在这方面,他类似一个消息队列或企业级消息系统(消息中间件);

2、它让你存储的流式数据具有容错机制;

3、它让你能实时处理流式数据

What is Kafka good for?

It gets used for two broad classes of application:

Kafka有什么好处? 它被用于两大类别的程序:

- Building real-time streaming data pipelines that reliably get data between systems or applications

- Building real-time streaming applications that transform or react to the streams of data

1、在系统和应用之间构建实时、可靠获取数据的流数据管道

2、构建用于数据转换、数据重复处理的实时流数据应用

To understand how Kafka does these things, let's dive in and explore Kafka's capabilities from the bottom up.

要想明白kafka如何做这些事情,需要我们从上到下深入探索kafka的功能。

First a few concepts:

- Kafka is run as a cluster on one or more servers.

- The Kafka cluster stores streams of records in categories called topics.

- Each record consists of a key, a value, and a timestamp.

先熟悉几个概念:

1、kafka可以作为集群运行在一个或多个的服务器上;

2、kafka集群存储流数据类别的称为topic。

3、每条记录由一个key,value,timestamp组成的。

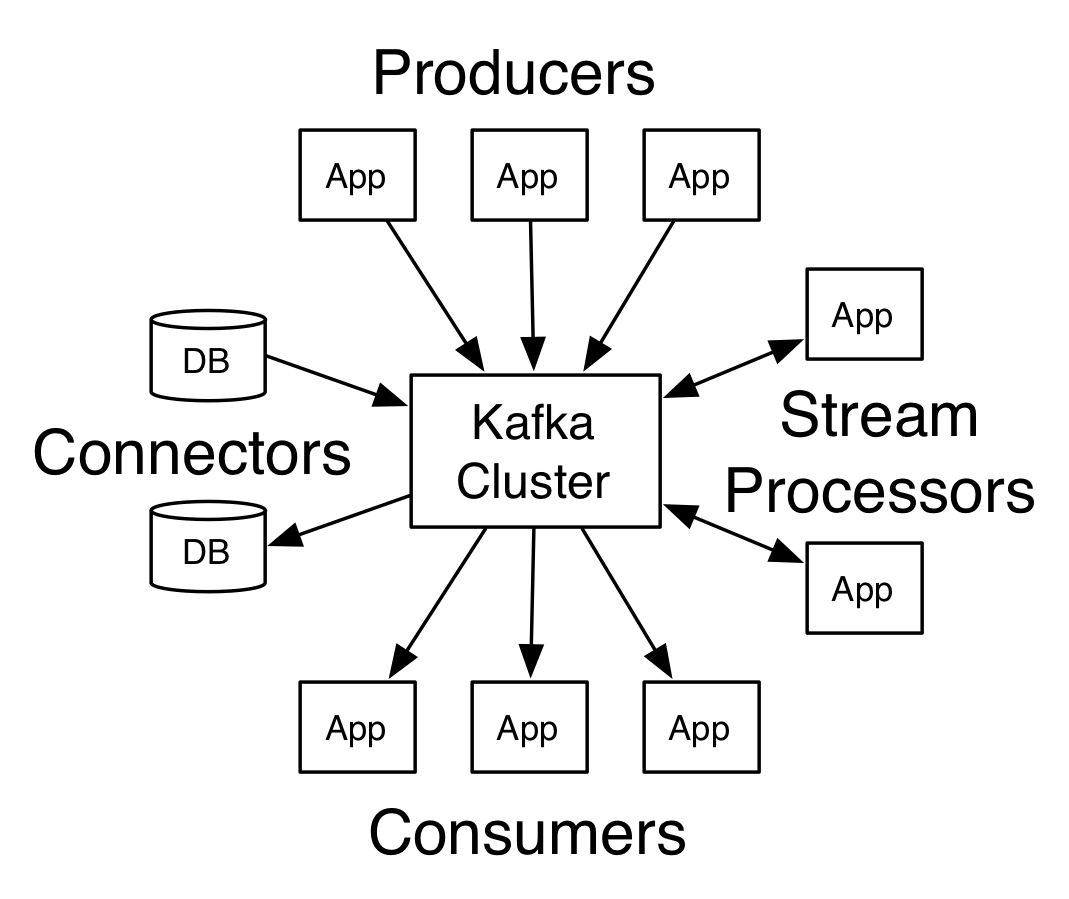

Kafka has four core APIs:

- The Producer API allows an application to publish a stream of records to one or more Kafka topics.

- The Consumer API allows an application to subscribe to one or more topics and process the stream of records produced to them.

- The Streams API allows an application to act as a stream processor, consuming an input stream from one or more topics and producing an output stream to one or more output topics, effectively transforming the input streams to output streams.

- The Connector API allows building and running reusable producers or consumers that connect Kafka topics to existing applications or data systems. For example, a connector to a relational database might capture every change to a table.

Kafka有4个核心的APIs:

Producer API:允许某个应用程序发布流数据到一个或多个kafka topic

Consumer API:允许某个应用程序订阅一个或多个kafka topic同时处理生产的流数据。

Streams API:允许某应用程序作为流处理器,消费一个或多个topic输入流和生产一个或多个topic输出流,高效地转换输入输出流。

Connector API:允许构建和运行可重用的生产者或消费者,连接kafka topic到现有的应用程序或数据系统、例如:一个连接到关系型数据库可能需要捕获一张表的每次改变。(可作为数据库日志同步功能)

In Kafka the communication between the clients and the servers is done with a simple, high-performance, language agnostic TCP protocol. This protocol is versioned and maintains backwards compatibility with older version. We provide a Java client for Kafka, but clients are available in many languages.

在kafka中,客户端和服务器之间的通信采用简单的,高性能,开发语言无关的TCP协议。这种协议是有版本的,并且保持向后兼容性。

Kafka提供了一个Java的客户端,同时也提供其他开发语言的客户端。

1.1 Introduction中 Apache Kafka™ is a distributed streaming platform. What exactly does that mean?(官网剖析)(博主推荐)的更多相关文章

- Apache Kafka® is a distributed streaming platform

Kafka Connect简介 我们知道过去对于Kafka的定义是分布式,分区化的,带备份机制的日志提交服务.也就是一个分布式的消息队列,这也是他最常见的用法.但是Kafka不止于此,打开最新的官网. ...

- Apache Kafka: Next Generation Distributed Messaging System---reference

Introduction Apache Kafka is a distributed publish-subscribe messaging system. It was originally dev ...

- 基于Windows7下snort+apache+php 7 + acid(或者base) + adodb + jpgraph的入侵检测系统的搭建(图文详解)(博主推荐)

为什么,要写这篇论文? 是因为,目前科研的我,正值研三,致力于网络安全.大数据.机器学习.人工智能.区域链研究领域! 论文方向的需要,同时不局限于真实物理环境机器实验室的攻防环境.也不局限于真实物理机 ...

- 1.1 Introduction中 Kafka for Stream Processing官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Kafka for Stream Processing kafka的流处理 It i ...

- 1.1 Introduction中 Kafka as a Storage System官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Kafka as a Storage System kafka作为一个存储系统 An ...

- 1.1 Introduction中 Kafka as a Messaging System官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Kafka as a Messaging System kafka作为一个消息系统 ...

- 1.3 Quick Start中 Step 8: Use Kafka Streams to process data官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Step 8: Use Kafka Streams to process data ...

- 1.3 Quick Start中 Step 7: Use Kafka Connect to import/export data官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Step 7: Use Kafka Connect to import/export ...

- 1.1 Introduction中 Guarantees官网剖析(博主推荐)

不多说,直接上干货! 一切来源于官网 http://kafka.apache.org/documentation/ Guarantees Kafka的保证(Guarantees) At a high- ...

随机推荐

- WebAssembly学习(三):AssemblyScript - TypeScript到WebAssembly的编译

虽然说只要高级语言能转换成 LLVM IR,就能被编译成 WebAssembly 字节码,官方也推荐c/c++的方式,但是让一个前端工程师去熟练使用c/c++显然是有点困难,那么TypeScript ...

- Django_高级扩展

- HDU 2883 kebab

kebab Time Limit: 1000ms Memory Limit: 32768KB This problem will be judged on HDU. Original ID: 2883 ...

- Activiti工作流框架学习(二)——使用Activiti提供的API完成流程操作

可以在项目中加入log4j,将logj4.properties文件拷入到src目录下,这样框架执行的sql就可以输出到到控制台,log4j提供的日志级别有以下几种: Fatal error war ...

- qt qlineedit只输入数字

lineEdit->setValidator(new QRegExpValidator(QRegExp("[0-9]+$")));

- 阿里云server改动MySQL初始password---Linux学习笔记

主要方法就是改动 MySQL依照文件以下的my.cnf文件 首先是找到my.cnf文件. # find / -name "my.cnf" # cd /etc 接下来最好是先备份my ...

- 如何分解json值设置到text文本框中

<td><input type="text" id="name11"></td> //4设置访问成功返回的操作 xhr.on ...

- Domino系统从UNIX平台到windows平台的迁移及备份

单位机房的一台服务机器到折旧期了,换成了新购IBM机器X3950,而且都预装了windows 2003 server 标准版,所以只有把以前在Unix平台下跑的OA系统迁移到新的windows 200 ...

- CSS Loading 特效

全页面遮罩效果loading css: .loading_shade { position: fixed; left:; top:; width: 100%; height: 100%; displa ...

- 【2017 Multi-University Training Contest - Team 3】Kanade's sum

[Link]:http://acm.hdu.edu.cn/showproblem.php?pid=6058 [Description] 给你n个数; 它们是由(1..n)组成的排列; 然后给你一个数字 ...