Bootstrap aggregating Bagging 合奏 Ensemble Neural Network

zh.wikipedia.org/wiki/Bagging算法

Bagging算法 (英语:Bootstrap aggregating,引导聚集算法),又称装袋算法,是机器学习领域的一种团体学习算法。最初由Leo Breiman于1994年提出。Bagging算法可与其他分类、回归算法结合,提高其准确率、稳定性的同时,通过降低结果的方差,避免过拟合的发生。

给定一个大小为

http://machine-learning.martinsewell.com/ensembles/bagging/

【bootstrap samples 放回抽样 random samples with replacement】

Bagging (Breiman, 1996), a name derived from “bootstrap aggregation”, was the first effective method of ensemble learning and is one of the simplest methods of arching [1]. The meta-algorithm, which is a special case of the model averaging, was originally designed for classification and is usually applied to decision tree models, but it can be used with any type of model for classification or regression. The method uses multiple versions of a training set by using the bootstrap, i.e. sampling with replacement. Each of these data sets is used to train a different model. The outputs of the models are combined by averaging (in case of regression) or voting (in case of classification) to create a single output. Bagging is only effective when using unstable (i.e. a small change in the training set can cause a significant change in the model) nonlinear models.

https://www.packtpub.com/mapt/book/big_data_and_business_intelligence/9781787128576/7/ch07lvl1sec46/bagging--building-an-ensemble-of-classifiers-from-bootstrap-samples

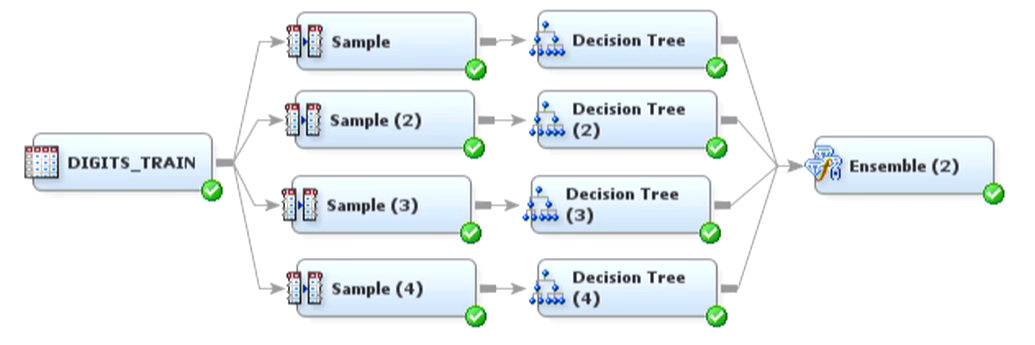

Bagging is an ensemble learning technique that is closely related to the MajorityVoteClassifier that we implemented in the previous section, as illustrated in the following diagram:

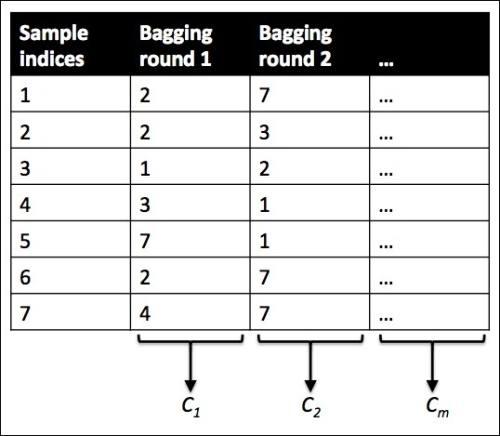

However, instead of using the same training set to fit the individual classifiers in the ensemble, we draw bootstrap samples (random samples with replacement) from the initial training set, which is why bagging is also known as bootstrap aggregating. To provide a more concrete example of how bootstrapping works, let's consider the example shown in the following figure. Here, we have seven different training instances (denoted as indices 1-7) that are sampled randomly with replacement in each round of bagging. Each bootstrap sample is then used to fit a classifier , which is most typically an unpruned decision tree:

, which is most typically an unpruned decision tree:

【LOWESS (locally weighted scatterplot smoothing) 局部散点加权平滑】

LOESS and LOWESS thus build on "classical" methods, such as linear and nonlinear least squares regression. They address situations in which the classical procedures do not perform well or cannot be effectively applied without undue labor. LOESS combines much of the simplicity of linear least squares regression with the flexibility of nonlinear regression. It does this by fitting simple models to localized subsets of the data to build up a function that describes the deterministic part of the variation in the data, point by point. In fact, one of the chief attractions of this method is that the data analyst is not required to specify a global function of any form to fit a model to the data, only to fit segments of the data.

【用局部数据去逐点拟合局部--不用全局函数拟合模型--局部问题局部解决】

http://www.richardafolabi.com/blog/non-technical-introduction-to-random-forest-and-gradient-boosting-in-machine-learning.html

【A collective wisdom of many is likely more accurate than any one. Wisdom of the crowd – Aristotle, 300BC-】

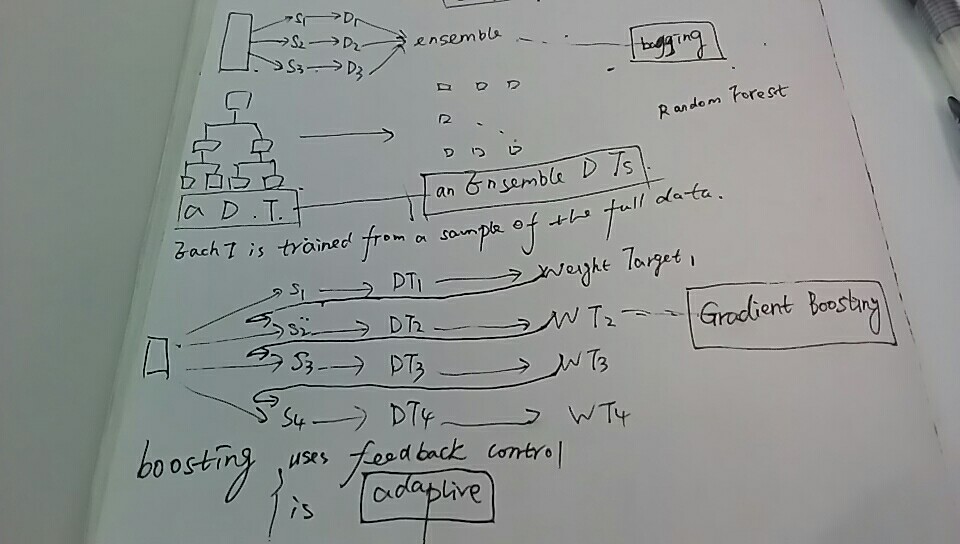

bagging

gradient boosting

- Ensemble model are great for producing robust, highly optimized and improved models.

- Random Forest and Gradient Boosting are Ensembled-Based algorithms

- Random Forest uses Bagging technique while Gradient Boosting uses Boosting technique.

- Bagging uses multiple random data sampling for modeling while Boosting uses iterative refinement for modeling.

- Ensemble models are not easy to interpret and they often work like a little back box.

- Multiple algorithms must be minimally used to that the prediction system can be reasonably tractable.

Bootstrap aggregating Bagging 合奏 Ensemble Neural Network的更多相关文章

- Ensemble Learning: Bootstrap aggregating (Bagging) & Boosting & Stacked generalization (Stacking)

Booststrap aggregating (有些地方译作:引导聚集),也就是通常为大家所熟知的bagging.在维基上被定义为一种提升机器学习算法稳定性和准确性的元算法,常用于统计分类和回归中. ...

- 读paper:Deep Convolutional Neural Network using Triplets of Faces, Deep Ensemble, andScore-level Fusion for Face Recognition

今天给大家带来一篇来自CVPR 2017关于人脸识别的文章. 文章题目:Deep Convolutional Neural Network using Triplets of Faces, Deep ...

- 【集成模型】Bootstrap Aggregating(Bagging)

0 - 思想 如下图所示,Bagging(Bootstrap Aggregating)的基本思想是,从训练数据集中有返回的抽象m次形成m个子数据集(bootstrapping),对于每一个子数据集训练 ...

- 转载:bootstrap, boosting, bagging 几种方法的联系

转:http://blog.csdn.net/jlei_apple/article/details/8168856 这两天在看关于boosting算法时,看到一篇不错的文章讲bootstrap, ja ...

- bootstrap, boosting, bagging 几种方法的联系

http://blog.csdn.net/jlei_apple/article/details/8168856 这两天在看关于boosting算法时,看到一篇不错的文章讲bootstrap, jack ...

- (转)关于bootstrap, boosting, bagging,Rand forest

转自:https://blog.csdn.net/jlei_apple/article/details/8168856 这两天在看关于boosting算法时,看到一篇不错的文章讲bootstrap, ...

- bootstrap, boosting, bagging

介绍boosting算法的资源: 视频讲义.介绍boosting算法,主要介绍AdaBoosing http://videolectures.net/mlss05us_schapire_b/ 在这个站 ...

- 【DKNN】Distilling the Knowledge in a Neural Network 第一次提出神经网络的知识蒸馏概念

原文链接 小样本学习与智能前沿 . 在这个公众号后台回复"DKNN",即可获得课件电子资源. 文章已经表明,对于将知识从整体模型或高度正则化的大型模型转换为较小的蒸馏模型,蒸馏非常 ...

- 【论文考古】知识蒸馏 Distilling the Knowledge in a Neural Network

论文内容 G. Hinton, O. Vinyals, and J. Dean, "Distilling the Knowledge in a Neural Network." 2 ...

随机推荐

- mysql5.6新补充

输入:cd C:\Program Files(x86)\MySQL\MySQL Server 5.6\bin 回车 然后输入:mysqld -install再回车 然后出现 安装成功后,再输入net ...

- 2016集训测试赛(二十)Problem B: 字典树

题目大意 你们自己感受一下原题的画风... 我怀疑出题人当年就是语文爆零的 下面复述一下出题人的意思: 操作1: 给你一个点集, 要你在trie上找到所有这样的点, 满足点集中存在某个点所表示的字符串 ...

- Maven错误“Failed to execute goal org.apache.maven.plugins:maven-archetype-plugin:2.4:create ”解决

用maven3新建一个项目时,输入的命令如下: mvn archetype:create 出现错误如下: [ERROR] Failed to execute goal org.apache.maven ...

- shell 实现自动备份nginx下的站点

shell 实现自动备份nginx下的站点 优点 实现自动备份ngnix下的所有运行的站点 自定义排除备份站点,支持三种排除 自动维护备份目录,防止备份目录无限扩大 备份压缩tar.gz格式 源码: ...

- Android Retrofit使用教程(二)

上一篇文章讲述了Retrofit的简单使用,这次我们学习一下Retrofit的各种HTTP请求. Retrofit基础 在Retrofit中使用注解的方式来区分请求类型.比如@GET("&q ...

- linux中文件描述符

:: # cat ping.txt PING baidu.com (() bytes of data. bytes from ttl= time=32.1 ms bytes from ttl= tim ...

- UVA - 10895 Matrix Transpose

UVA - 10895 Matrix Transpose Time Limit:3000MS Memory Limit:Unknown 64bit IO Format:%lld & % ...

- C 位域

C 位域 如果程序的结构中包含多个开关量,只有 TRUE/FALSE 变量,如下: struct { unsigned int widthValidated; unsigned int heightV ...

- 读刘未鹏老大《你应当怎样学习C++(以及编程)》

标签(空格分隔): 三省吾身 原文地址:你应当怎样学习C++(以及编程) 本人反思自己这些年在学校学得稀里糊涂半灌水. 看到这篇文章,感觉收获不少.仿佛有指明自己道路的感觉,当然真正困难的还是坚持学习 ...

- hibernate 数据缓存

http://www.cnblogs.com/wean/archive/2012/05/16/2502724.html,