吴裕雄 python 机器学习-DMT(1)

import numpy as np

import operator as op from math import log def createDataSet():

dataSet = [[1, 1, 'yes'],

[1, 1, 'yes'],

[1, 0, 'no'],

[0, 1, 'no'],

[0, 1, 'no']]

labels = ['no surfacing','flippers']

return dataSet, labels dataSet,labels = createDataSet()

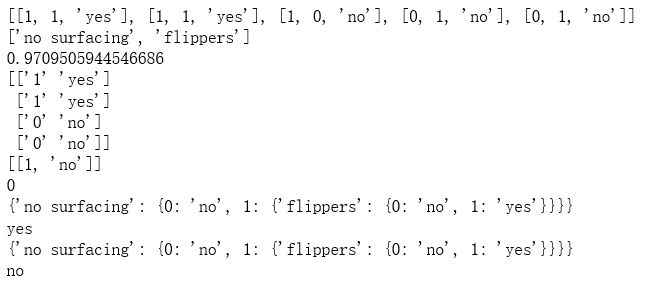

print(dataSet)

print(labels) def calcShannonEnt(dataSet):

labelCounts = {}

for featVec in dataSet:

currentLabel = featVec[-1]

if(currentLabel not in labelCounts.keys()):

labelCounts[currentLabel] = 0

labelCounts[currentLabel] += 1

shannonEnt = 0.0

rowNum = len(dataSet)

for key in labelCounts:

prob = float(labelCounts[key])/rowNum

shannonEnt -= prob * log(prob,2)

return shannonEnt shannonEnt = calcShannonEnt(dataSet)

print(shannonEnt) def splitDataSet(dataSet, axis, value):

retDataSet = []

for featVec in dataSet:

if(featVec[axis] == value):

reducedFeatVec = featVec[:axis]

reducedFeatVec.extend(featVec[axis+1:])

retDataSet.append(reducedFeatVec)

return retDataSet retDataSet = splitDataSet(dataSet,1,1)

print(np.array(retDataSet))

retDataSet = splitDataSet(dataSet,1,0)

print(retDataSet) def chooseBestFeatureToSplit(dataSet):

numFeatures = np.shape(dataSet)[1]-1

baseEntropy = calcShannonEnt(dataSet)

bestInfoGain = 0.0

bestFeature = -1

for i in range(numFeatures):

featList = [example[i] for example in dataSet]

uniqueVals = set(featList)

newEntropy = 0.0

for value in uniqueVals:

subDataSet = splitDataSet(dataSet, i, value)

prob = len(subDataSet)/float(len(dataSet))

newEntropy += prob * calcShannonEnt(subDataSet)

infoGain = baseEntropy - newEntropy

if (infoGain > bestInfoGain):

bestInfoGain = infoGain

bestFeature = i

return bestFeature bestFeature = chooseBestFeatureToSplit(dataSet)

print(bestFeature) def majorityCnt(classList):

classCount={}

for vote in classList:

if(vote not in classCount.keys()):

classCount[vote] = 0

classCount[vote] += 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def createTree(dataSet,labels):

classList = [example[-1] for example in dataSet]

if(classList.count(classList[0]) == len(classList)):

return classList[0]

if len(dataSet[0]) == 1:

return majorityCnt(classList)

bestFeat = chooseBestFeatureToSplit(dataSet)

bestFeatLabel = labels[bestFeat]

myTree = {bestFeatLabel:{}}

del(labels[bestFeat])

featValues = [example[bestFeat] for example in dataSet]

uniqueVals = set(featValues)

for value in uniqueVals:

subLabels = labels[:]

myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value),subLabels)

return myTree myTree = createTree(dataSet,labels)

print(myTree) def classify(inputTree,featLabels,testVec):

for i in inputTree.keys():

firstStr = i

break

secondDict = inputTree[firstStr]

featIndex = featLabels.index(firstStr)

key = testVec[featIndex]

valueOfFeat = secondDict[key]

if isinstance(valueOfFeat, dict):

classLabel = classify(valueOfFeat, featLabels, testVec)

else:

classLabel = valueOfFeat

return classLabel featLabels = ['no surfacing', 'flippers']

classLabel = classify(myTree,featLabels,[1,1])

print(classLabel) import pickle def storeTree(inputTree,filename):

fw = open(filename,'wb')

pickle.dump(inputTree,fw)

fw.close() def grabTree(filename):

fr = open(filename,'rb')

return pickle.load(fr) filename = "D:\\mytree.txt"

storeTree(myTree,filename)

mySecTree = grabTree(filename)

print(mySecTree) featLabels = ['no surfacing', 'flippers']

classLabel = classify(mySecTree,featLabels,[0,0])

print(classLabel)

吴裕雄 python 机器学习-DMT(1)的更多相关文章

- 吴裕雄 python 机器学习-DMT(2)

import matplotlib.pyplot as plt decisionNode = dict(boxstyle="sawtooth", fc="0.8" ...

- 吴裕雄 python 机器学习——分类决策树模型

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets from sklearn.model_s ...

- 吴裕雄 python 机器学习——回归决策树模型

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets from sklearn.model_s ...

- 吴裕雄 python 机器学习——线性判断分析LinearDiscriminantAnalysis

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——逻辑回归

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——ElasticNet回归

import numpy as np import matplotlib.pyplot as plt from matplotlib import cm from mpl_toolkits.mplot ...

- 吴裕雄 python 机器学习——Lasso回归

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets, linear_model from s ...

- 吴裕雄 python 机器学习——岭回归

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets, linear_model from s ...

- 吴裕雄 python 机器学习——线性回归模型

import numpy as np from sklearn import datasets,linear_model from sklearn.model_selection import tra ...

随机推荐

- 百度翻译API(C#)

百度翻译开放平台:点击打开链接 1. 定义类用于保存解析json得到的结果 public class Translation { public string Src { get; set; } pub ...

- JavaScript基础知识:数据类型,运算符,流程控制,语法,函数。

JavaScript概述 ECMAScript和JavaScript的关系 1996年11月,JavaScript的创造者--Netscape公司,决定将JavaScript提交给国际标准化组织ECM ...

- Java8之分组

数据库中根据多个条件进行分组 ) from tableA group by a, b 现在不使用sql,而直接使用java编写分组,则通过Java8根据多个条件进行分组代码如下: List<Us ...

- python学习之----正则表达式

- python入门-直方图

使用的是pygal函数库 所以需要先安装 1 安装库文件 pip install pygal=1.7 2 创建骰子类 from random import randint class Die(): # ...

- mybatis关系映射之一对多和多对一

本实例使用用户(User)和博客(Post)的例子做说明: 一个用户可以有多个博客, 一个博客只对应一个用户 一. 例子(本实体采用maven构建): 1. 代码结构图: 2. 数据库: t_user ...

- Jenkins 之邮件配置

Jenkins 之邮件配置其实还是有些麻烦的,坑比较多,一不小心就...我是走了很多弯路的. 这里记录下来,希望大家以后不要重蹈覆辙: 我测试过,这里的 Extended E-mail Notific ...

- C#用log4net记录日志

1.首先安装 log4net. 2.新建 log4net.config 文件,右键-属性 “复制到输出目录”设置为“始终复制” 3.设置 log4net.config 配置文件 <?xml ve ...

- Flex学习笔记-时间触发器

<?xml version="1.0" encoding="utf-8"?> <s:Application xmlns:fx="ht ...

- web 在线聊天的基本实现

参考:https://www.cnblogs.com/guoke-jsp/p/6047496.html