A Statistical View of Deep Learning (II): Auto-encoders and Free Energy

With the success of discriminative modelling using deep feedforward neural networks (or, through an alternative statistical lens, recursive generalised linear models) in numerous industrial applications, there is an increased drive to produce similar outcomes with unsupervised learning. In this post, I'd like to explore the connections between denoising auto-encoders, a leading approach for unsupervised learning in deep learning, and density estimation in statistics. The statistical view I'll explore casts learning in denoising auto-encoders as inference in latent factor (density) models. Such a connection has a number of useful benefits and implications for our machine learning practice.

Generalised Denoising Auto-encoders

Denoising auto-encoders are an important advancement in unsupervised deep learning, especially in moving towards scalable and robust representations of data. For every data point y, a denoising auto-encoder first creates a perturbed version y′ using a known corruption process C(y′|y). We then create a network that, given the perturbed data y′, reconstructs the original data y. The network is grouped into two parts, an encoder and a decoder, such that the output of the encoder z can be used as a representation (features) of the data. The objective function is [1]:

$$y' \sim C(y' \mid y), \qquad \mathcal{L}_{DAE} = \log p(y \mid z)$$

where z is the encoder's output for the perturbed input y′, log p(⋅) is an appropriate likelihood function for the data, and the objective function is averaged over all observations. Generalised denoising auto-encoders (GDAEs) recognise that this formulation may be limited by finite training data, and introduce an additional penalty term R(⋅) for added regularisation [2]:

$$\mathcal{L}_{GDAE} = \log p(y \mid z) - R(\cdot)$$

GDAEs exploit the insight that perturbations in the observation space give rise to robustness and insensitivity in the representation z. Two key questions that arise when we use GDAEs are: how to choose a realistic corruption process, and what are appropriate regularisation functions.
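
As a rough illustration of how the pieces of this objective fit together, here is a minimal NumPy sketch. Everything specific in it is an assumption made for the example rather than something prescribed by [1] or [2]: the corruption process is additive Gaussian noise, the encoder is a single tanh layer, the likelihood is a unit-variance Gaussian, the penalty is plain weight decay, and `penalty_weight` is a hypothetical knob for the strength of R(⋅).

```python
import numpy as np

def corrupt(y, rng, noise_std=0.3):
    """Assumed corruption process C(y'|y): additive Gaussian noise."""
    return y + noise_std * rng.standard_normal(y.shape)

def encode(y_perturbed, W_enc):
    """Encoder: maps the perturbed input y' to the representation z."""
    return np.tanh(y_perturbed @ W_enc)

def decode(z, W_dec):
    """Decoder: maps z to the mean of a Gaussian likelihood over y."""
    return z @ W_dec

def gaussian_log_lik(y, mean):
    """log p(y|z) for a unit-variance Gaussian, summed over data dimensions."""
    d = y.shape[-1]
    return -0.5 * np.sum((y - mean) ** 2, axis=-1) - 0.5 * d * np.log(2 * np.pi)

def gdae_objective(y, W_enc, W_dec, rng, penalty_weight=0.0):
    """Average log-likelihood of the clean data minus a penalty (to be maximised)."""
    z = encode(corrupt(y, rng), W_enc)
    log_lik = gaussian_log_lik(y, decode(z, W_dec))
    penalty = np.sum(W_enc ** 2) + np.sum(W_dec ** 2)  # assumed R: weight decay
    return np.mean(log_lik) - penalty_weight * penalty

# Toy data and small random weights, purely for illustration.
init = np.random.default_rng(0)
y = init.standard_normal((8, 5))
W_enc = 0.1 * init.standard_normal((5, 3))
W_dec = 0.1 * init.standard_normal((3, 5))

# Same corruption noise in both calls, so only the penalty differs.
plain = gdae_objective(y, W_enc, W_dec, np.random.default_rng(1))
regularised = gdae_objective(y, W_enc, W_dec, np.random.default_rng(1), penalty_weight=0.1)
print(plain, regularised)
```

With `penalty_weight=0.0` this reduces to the plain DAE objective. Note that both the corruption function and the penalty had to be chosen by hand here, which is exactly the pair of design questions raised above.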

Separating Model and Inference

The difficulty in reasoning statistically about auto-encoders is that they do not maintain or encourage a distinction between a model of the data (statistical assumptions about the properties and structure we expect) and the approach for inference/estimation in that model (the ways in which we link the observed data to our modelling assumptions). The auto-encoder framework provides a computational pipeline, but not a statistical explanation, since to explain the data (which must be an outcome of our model), you must know it beforehand and use it as an input. Not maintaining the distinction between model and inference impedes our ability to correctly evaluate and compare competing approaches for a problem, leaves us unaware of relevant approaches in related literatures that could provide useful insight, and makes it difficult for us to provide the guidance that allows our insights to be incorporated into our community's broader knowledge-base.

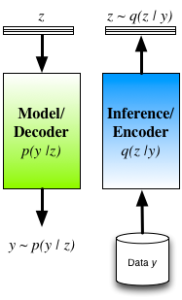

To ameliorate these concerns we typically re-interpret the auto-encoder by treating the decoder as the statistical model of interest (this is indeed how many interpret and use auto-encoders in practice). A probabilistic decoder provides a generative description of the data, and our task is inference/learning in this model. For a given model, there are many competing approaches for inference, such as maximum likelihood (ML) and maximum a posteriori (MAP) estimation, noise-contrastive estimation, Markov chain Monte Carlo (MCMC), variational inference, cavity methods, integrated nested Laplace approximations (INLA), etc. The role of the encoder is now clear: the encoder is one mechanism for inference in the model described by the decoder. Its structure is not tied to the model (decoder), and it is just one option from the smorgasbord of available approaches, with its own advantages and tradeoffs.
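
To make the separation concrete, the sketch below fixes one model and runs three interchangeable inference routines on it. The model is an assumption chosen because its posterior is available in closed form: a linear-Gaussian decoder p(y|z) = N(Wz, σ²I) with prior p(z) = N(0, I). The function names are hypothetical. In this special case a linear encoder happens to be exact, which will not hold for a nonlinear decoder.

```python
import numpy as np

rng = np.random.default_rng(0)
latent_dim, data_dim, sigma = 2, 6, 0.5

# The model (decoder): y = W z + noise, with z ~ N(0, I).
W = rng.standard_normal((data_dim, latent_dim))
z_true = rng.standard_normal(latent_dim)
y = W @ z_true + sigma * rng.standard_normal(data_dim)

# Posterior precision of z given y for this linear-Gaussian model.
precision = np.eye(latent_dim) + W.T @ W / sigma**2

def infer_closed_form(y):
    """Inference 1: exact posterior mean, solved directly."""
    return np.linalg.solve(precision, W.T @ y / sigma**2)

def infer_map_gradient_ascent(y, n_steps=2000):
    """Inference 2: MAP estimate by gradient ascent on log p(y|z) + log p(z)."""
    step = 1.0 / np.linalg.eigvalsh(precision).max()  # safe step size
    z = np.zeros(latent_dim)
    for _ in range(n_steps):
        grad = W.T @ (y - W @ z) / sigma**2 - z
        z = z + step * grad
    return z

# Inference 3: an "encoder", i.e. a fixed map applied in one forward pass.
encoder_matrix = np.linalg.solve(precision, W.T) / sigma**2

def infer_encoder(y):
    return encoder_matrix @ y

z_closed = infer_closed_form(y)
z_map = infer_map_gradient_ascent(y)
z_enc = infer_encoder(y)
print(z_closed, z_map, z_enc)
```

All three routines target the same decoder. Swapping one for another changes the cost and convenience of inference, not the model being fitted.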

Approximate Inference in Latent Variable Models

Encoder-decoder view of inference in latent variable models.

Another difficulty with DAEs is that robustness is obtained by considering perturbations in the data space, and such a corruption process will, in general, not be easy to design. Furthermore, by carefully reasoning about the induced probabilities, we can show [1] that the DAE objective function $\mathcal{L}_{DAE}$ corresponds to a lower bound obtained by applying the variational principle to the log-density of the corrupted data, log p(y′). This, though, is not a quantity we are interested in reasoning about.

A way forward would be to instead apply the variational principle to the quantity we are interested in, the log-marginal probability of the observed data log p(y) [3][4]. The objective function obtained by applying the variational principle to the generative model (probabilistic decoder) is known as the variational free energy:

$$\mathcal{L}_{VFE} = \mathbb{E}_{q(z \mid y)}[\log p(y \mid z)] - \mathrm{KL}[q(z \mid y) \,\|\, p(z)]$$

By inspection, we can see that this matches the form of the GDAE objective. There are notable differences though:

- Instead of considering perturbations in the observation space, we consider perturbations in the hidden space, obtained by using a prior p(z). The hidden variables are now random, latent variables. Auto-encoders are now generative models that are straightforward to sample from.

- The encoder q(z|y) is a mechanism for approximating the true posterior distribution of the latent/hidden variables p(z|y).

- We are now able to explain the introduction of the penalty function in the GDAE objective in a principled manner. Rather than designing the penalty by hand, we are able to derive the form this penalty should take, appearing as the KL divergence between the prior and the encoder distribution.
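
For readers who want to see where the KL penalty comes from, the standard derivation of the bound via Jensen's inequality runs as follows. It uses only the prior p(z), the decoder p(y|z) and the encoder q(z|y) named above; the symbol $\mathcal{L}_{VFE}$ for the resulting objective is chosen here to parallel $\mathcal{L}_{DAE}$.

$$
\begin{aligned}
\log p(y) &= \log \int p(y \mid z)\, p(z)\, dz \\
&= \log \int q(z \mid y)\, \frac{p(y \mid z)\, p(z)}{q(z \mid y)}\, dz \\
&\geq \int q(z \mid y) \log \frac{p(y \mid z)\, p(z)}{q(z \mid y)}\, dz \\
&= \mathbb{E}_{q(z \mid y)}[\log p(y \mid z)] - \mathrm{KL}[q(z \mid y) \,\|\, p(z)] = \mathcal{L}_{VFE}
\end{aligned}
$$

The gap in the inequality is $\mathrm{KL}[q(z \mid y) \,\|\, p(z \mid y)]$, so the bound is tight exactly when the encoder matches the true posterior.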

Auto-encoders reformulated in this way thus provide an efficient way of implementing approximate Bayesian inference. Using an encoder-decoder structure, we gain the ability to jointly optimise all parameters using a single computational graph, and we obtain an efficient way of doing inference at test time, since we only need a single forward pass through the encoder. The cost of taking this approach is a potentially harder optimisation problem, since we have coupled the inferences for the latent variables together through the parameters of the encoder. We also lose the ability to deal with arbitrary missingness patterns in the observed data, which approaches that do not implement the q-distribution as an encoder retain, since the encoder must be trained knowing the missingness pattern it will encounter. One way we explored these connections is in a model we called Deep Latent Gaussian Models (DLGM), with inference based on stochastic variational inference (and implemented using an encoder) [3]; this model is now the basis of a number of extensions [5][6].
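
The following sketch evaluates the variational free energy numerically and compares it with the exact log-marginal likelihood. It is not the DLGM implementation from [3]. It assumes a linear-Gaussian decoder so that log p(y) can be computed exactly, a diagonal Gaussian q(z|y), and a simple Monte Carlo estimate of the expected log-likelihood using samples written as mean plus scaled noise. All function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
latent_dim, data_dim, sigma = 2, 6, 0.5

# Assumed generative model: z ~ N(0, I), y | z ~ N(W z, sigma^2 I).
W = rng.standard_normal((data_dim, latent_dim))
y = W @ rng.standard_normal(latent_dim) + sigma * rng.standard_normal(data_dim)

def log_gaussian(x, mean, cov):
    """Log-density of a multivariate Gaussian N(mean, cov) at x."""
    diff = x - mean
    _, logdet = np.linalg.slogdet(cov)
    quad = diff @ np.linalg.solve(cov, diff)
    return -0.5 * (quad + logdet + len(x) * np.log(2 * np.pi))

def log_marginal(y):
    """Exact log p(y); available only because the model is linear-Gaussian."""
    cov = W @ W.T + sigma**2 * np.eye(data_dim)
    return log_gaussian(y, np.zeros(data_dim), cov)

def free_energy(y, q_mean, q_std, n_samples=5000):
    """Monte Carlo estimate of E_q[log p(y|z)] minus closed-form KL[q || p(z)]."""
    eps = rng.standard_normal((n_samples, latent_dim))
    z = q_mean + q_std * eps                    # samples from q(z|y)
    resid = y - z @ W.T                         # shape (n_samples, data_dim)
    log_lik = (-0.5 * np.sum(resid**2, axis=1) / sigma**2
               - 0.5 * data_dim * np.log(2 * np.pi * sigma**2))
    kl = 0.5 * np.sum(q_std**2 + q_mean**2 - 1.0 - 2.0 * np.log(q_std))
    return log_lik.mean() - kl

# A poor q (the prior itself) versus a q matched to the posterior marginals.
precision = np.eye(latent_dim) + W.T @ W / sigma**2
post_cov = np.linalg.inv(precision)
post_mean = post_cov @ W.T @ y / sigma**2

log_py = log_marginal(y)
bound_prior = free_energy(y, np.zeros(latent_dim), np.ones(latent_dim))
bound_post = free_energy(y, post_mean, np.sqrt(np.diag(post_cov)))
print(log_py, bound_prior, bound_post)
```

Using the prior as q makes the KL term vanish but leaves a large gap to log p(y), while a q close to the true posterior pays a KL cost and gives a much tighter bound. This is the trade-off the free energy balances.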

Summary

Auto-encoders address the problem of statistical inference and provide a powerful mechanism for inference that plays a central role in our search for more powerful unsupervised learning. A statistical view, and variational reformulation, of auto-encoders allows us to maintain a clear distinction between the assumed statistical model and our approach for inference, gives us one efficient way of implementing inference, gives us an easy-to-sample generative model, allows us to reason about the statistical quantity we are actually interested in, and gives us a principled loss function that includes the important regularisation terms. This is just one perspective that is becoming increasingly popular, and is worthwhile to reflect upon as we continue to explore the frontiers of unsupervised learning.

Some References

[1] Pascal Vincent, Hugo Larochelle, Yoshua Bengio, Pierre-Antoine Manzagol. Extracting and composing robust features with denoising autoencoders. Proceedings of the 25th International Conference on Machine Learning, 2008.

[2] Yoshua Bengio, Li Yao, Guillaume Alain, Pascal Vincent. Generalized denoising auto-encoders as generative models. Advances in Neural Information Processing Systems, 2013.

[3] Danilo Jimenez Rezende, Shakir Mohamed, Daan Wierstra. Stochastic Backpropagation and Approximate Inference in Deep Generative Models. Proceedings of the 31st International Conference on Machine Learning, 2014.

[4] Diederik P Kingma, Max Welling. Auto-encoding variational Bayes. arXiv preprint arXiv:1312.6114, 2014.

[5] Diederik P Kingma, Shakir Mohamed, Danilo Jimenez Rezende, Max Welling. Semi-supervised learning with deep generative models. Advances in Neural Information Processing Systems, 2014.

[6] Karol Gregor, Ivo Danihelka, Alex Graves, Daan Wierstra. DRAW: A Recurrent Neural Network For Image Generation. arXiv preprint arXiv:1502.04623, 2015.
