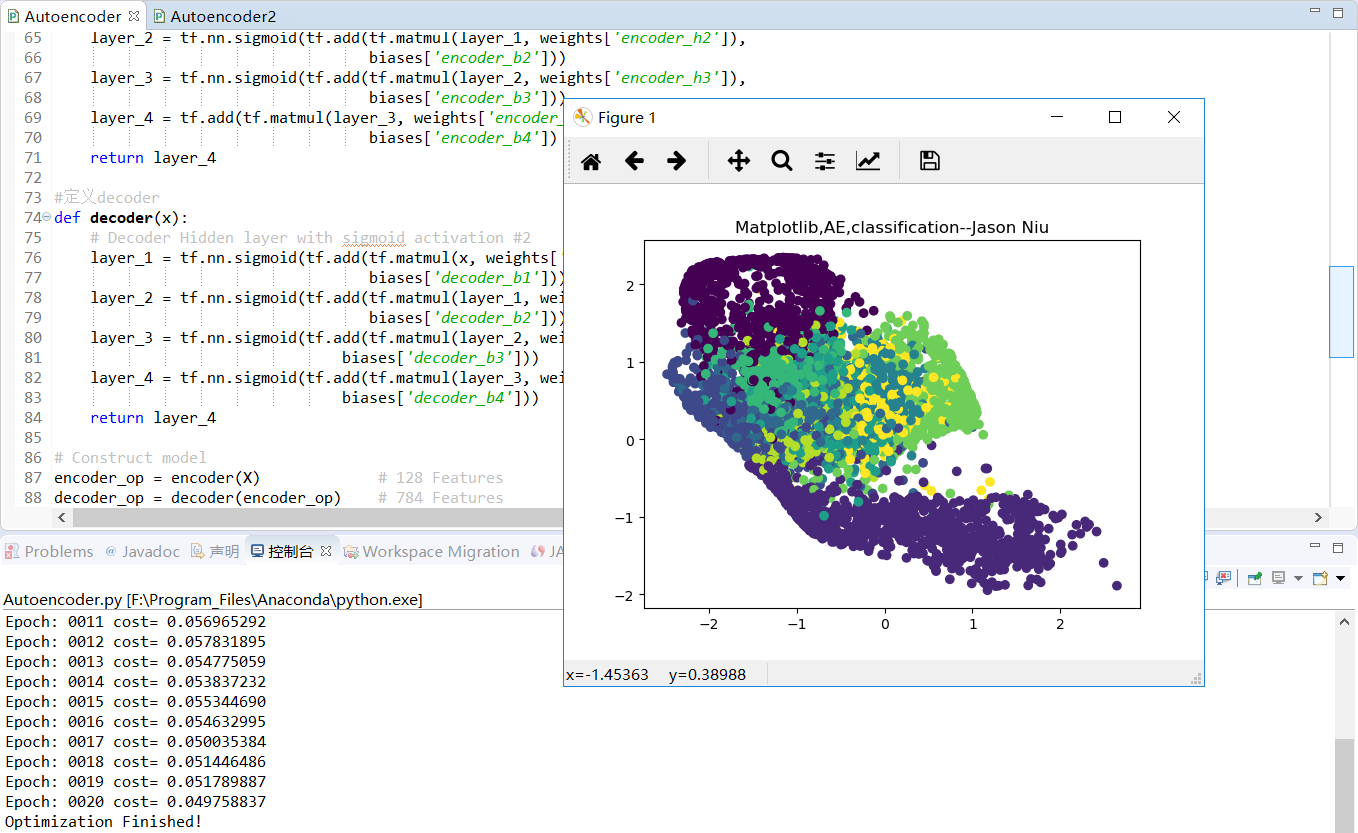

TF之AE:AE实现TF自带数据集AE的encoder之后decoder之前的非监督学习分类—Jason niu

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt #Import MNIST data

from tensorflow.examples.tutorials.mnist import input_data

mnist=input_data.read_data_sets("/niu/mnist_data/",one_hot=False) # Parameter

learning_rate = 0.001

training_epochs = 20

batch_size = 256

display_step = 1

examples_to_show = 10 # Network Parameters

n_input = 784 # MNIST data input (img shape: 28*28像素即784个特征值) #tf Graph input(only pictures)

X=tf.placeholder("float", [None,n_input]) # hidden layer settings

n_hidden_1 = 128

n_hidden_2 = 64

n_hidden_3 = 10

n_hidden_4 = 2 weights = {

'encoder_h1': tf.Variable(tf.random_normal([n_input,n_hidden_1])),

'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1,n_hidden_2])),

'encoder_h3': tf.Variable(tf.random_normal([n_hidden_2,n_hidden_3])),

'encoder_h4': tf.Variable(tf.random_normal([n_hidden_3,n_hidden_4])), 'decoder_h1': tf.Variable(tf.random_normal([n_hidden_4,n_hidden_3])),

'decoder_h2': tf.Variable(tf.random_normal([n_hidden_3,n_hidden_2])),

'decoder_h3': tf.Variable(tf.random_normal([n_hidden_2,n_hidden_1])),

'decoder_h4': tf.Variable(tf.random_normal([n_hidden_1, n_input])),

}

biases = {

'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),

'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),

'encoder_b3': tf.Variable(tf.random_normal([n_hidden_3])),

'encoder_b4': tf.Variable(tf.random_normal([n_hidden_4])), 'decoder_b1': tf.Variable(tf.random_normal([n_hidden_3])),

'decoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),

'decoder_b3': tf.Variable(tf.random_normal([n_hidden_1])),

'decoder_b4': tf.Variable(tf.random_normal([n_input])),

} def encoder(x):

# Encoder Hidden layer with sigmoid activation #1

layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),

biases['encoder_b1']))

layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),

biases['encoder_b2']))

layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']),

biases['encoder_b3']))

layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']),

biases['encoder_b4'])

return layer_4 #定义decoder

def decoder(x):

# Decoder Hidden layer with sigmoid activation #2

layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),

biases['decoder_b1']))

layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),

biases['decoder_b2']))

layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']),

biases['decoder_b3']))

layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']),

biases['decoder_b4']))

return layer_4 # Construct model

encoder_op = encoder(X) # 128 Features

decoder_op = decoder(encoder_op) # 784 Features # Prediction

y_pred = decoder_op #After

# Targets (Labels) are the input data.

y_true = X #Before cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))

optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost) # Launch the graph

with tf.Session() as sess: sess.run(tf.global_variables_initializer())

total_batch = int(mnist.train.num_examples/batch_size)

# Training cycle

for epoch in range(training_epochs):

# Loop over all batches

for i in range(total_batch):

batch_xs, batch_ys = mnist.train.next_batch(batch_size) # max(x) = 1, min(x) = 0

# Run optimization op (backprop) and cost op (to get loss value)

_, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})

# Display logs per epoch step

if epoch % display_step == 0:

print("Epoch:", '%04d' % (epoch+1),

"cost=", "{:.9f}".format(c)) print("Optimization Finished!") encode_result = sess.run(encoder_op,feed_dict={X:mnist.test.images})

plt.scatter(encode_result[:,0],encode_result[:,1],c=mnist.test.labels)

plt.title('Matplotlib,AE,classification--Jason Niu')

plt.show()

TF之AE:AE实现TF自带数据集AE的encoder之后decoder之前的非监督学习分类—Jason niu的更多相关文章

- TF之AE:AE实现TF自带数据集数字真实值对比AE先encoder后decoder预测数字的精确对比—Jason niu

import tensorflow as tf import numpy as np import matplotlib.pyplot as plt #Import MNIST data from t ...

- TF:利用TF的train.Saver载入曾经训练好的variables(W、b)以供预测新的数据—Jason niu

import tensorflow as tf import numpy as np W = tf.Variable(np.arange(6).reshape((2, 3)), dtype=tf.fl ...

- TF:利用sklearn自带数据集使用dropout解决学习中overfitting的问题+Tensorboard显示变化曲线—Jason niu

import tensorflow as tf from sklearn.datasets import load_digits #from sklearn.cross_validation impo ...

- 对抗生成网络-图像卷积-mnist数据生成(代码) 1.tf.layers.conv2d(卷积操作) 2.tf.layers.conv2d_transpose(反卷积操作) 3.tf.layers.batch_normalize(归一化操作) 4.tf.maximum(用于lrelu) 5.tf.train_variable(训练中所有参数) 6.np.random.uniform(生成正态数据

1. tf.layers.conv2d(input, filter, kernel_size, stride, padding) # 进行卷积操作 参数说明:input输入数据, filter特征图的 ...

- TF之RNN:实现利用scope.reuse_variables()告诉TF想重复利用RNN的参数的案例—Jason niu

import tensorflow as tf # 22 scope (name_scope/variable_scope) from __future__ import print_function ...

- TF之RNN:TF的RNN中的常用的两种定义scope的方式get_variable和Variable—Jason niu

# tensorflow中的两种定义scope(命名变量)的方式tf.get_variable和tf.Variable.Tensorflow当中有两种途径生成变量 variable import te ...

- TF之RNN:matplotlib动态演示之基于顺序的RNN回归案例实现高效学习逐步逼近余弦曲线—Jason niu

import tensorflow as tf import numpy as np import matplotlib.pyplot as plt BATCH_START = 0 TIME_STEP ...

- TF之RNN:TensorBoard可视化之基于顺序的RNN回归案例实现蓝色正弦虚线预测红色余弦实线—Jason niu

import tensorflow as tf import numpy as np import matplotlib.pyplot as plt BATCH_START = 0 TIME_STEP ...

- TF之RNN:基于顺序的RNN分类案例对手写数字图片mnist数据集实现高精度预测—Jason niu

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data mnist = input_dat ...

随机推荐

- Sybase·调用存储过程并返回结果

最近项目要用Sybase数据库实现分页,第一次使用Sybase数据库,也是第一次使用他的存储过程.2个多小时才调用成功,在此记录: 项目架构:SSM 1.Sybase本身不支持分页操作,需要写存储过程 ...

- deepin 桌面突然卡死

deepin桌面突然卡死 使用快捷键Ctrl+alt+F2 重启systemctl

- (一)python 数据模型

1.通过实现特殊方法,自定义类型可以表现的跟内置类型一样: 如下代码,实现len, getitem,可使自定义类型表现得如同列表一样. import collections from random i ...

- linux 下安装vscode

下载安装包 https://code.visualstudio.com/docs/?dv=linux64_deb (注意是deb包) sudo dpkg -i code_1.18.1-15108573 ...

- Linux基础实操三

实操一: 1) 将用户信息数据库文件和组信息数据库文件纵向合并为一个文件/1.txt(覆盖) cd /etc -->tar passwd * group * > 1.txt 2) 将用户信 ...

- java子类对象和成员变量的隐写&方法重写

1.子类继承的方法只能操作子类继承和隐藏的成员变量名字类新定义的方法可以操作子类继承和子类新生命的成员变量,但是无法操作子类隐藏的成员变量(需要适用super关键字操作子类隐藏的成员变量.) publ ...

- tensorflow(4):神经网络框架总结

#总结神经网络框架 #1,搭建网络设计结构(前向传播) 文件forward.py def forward(x,regularizer): # 输入x 和正则化权重 w= b= y= return y ...

- Centos + docker,Ubuntu + docker介绍安装及详细使用

docker笔记 常用命令 设置docker开机自启:sudo chkconfig docker on 查所有镜像: docker images 删除某个镜像:docker rmi CONTAINER ...

- IDEA的debug操作

- 首次使用idea步骤

修改代码提示快捷键 默认是ctrl+空格,这个会跟中英文切换的快捷键冲突. 我这里改成了alt+/ tomcat的配置