Regularization —— linear regression

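This section practices the principles behind using a regularization term. In some machine learning models, a model with many parameters and only a few training samples easily overfits. The model's parameters are therefore "penalized" in its loss function so that they do not become too large. Smaller parameters mean a simpler model, and a simpler model is less prone to overfitting.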

Regularized linear regression

From a plot of the data (y against x), it seems that fitting a straight line would be too simple an approximation. Instead, we will try fitting a higher-order polynomial to the data to capture more of the variation in the points.

Let's try a fifth-order polynomial. Our hypothesis will be

$$h_\theta(x) = \theta_0 + \theta_1 x + \theta_2 x^2 + \theta_3 x^3 + \theta_4 x^4 + \theta_5 x^5$$

This means that our hypothesis has six features, because $x^0, x^1, \ldots, x^5$ are now all features of our regression. Notice that even though we are producing a polynomial fit, we still have a linear regression problem because the hypothesis is linear in each feature.
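The following small MATLAB/Octave sketch makes "linear in each feature" concrete. It is an illustration, not part of the original exercise. It builds the six feature columns $x^0,\dots,x^5$ from a hypothetical 7-point input and checks that the polynomial hypothesis is just the matrix-vector product $X\theta$. The sample points and parameter values are made up. `x .^ (0:5)` relies on implicit expansion, which needs MATLAB R2016b or later, or Octave.

```matlab
% Hypothetical sample inputs (NOT the ex5Linx.dat data): 7 points, one feature
x = linspace(-1, 1, 7)';

% Each column is one feature x.^p for p = 0..5; column 1 (x.^0) is the intercept.
% Implicit expansion: a 7x1 column raised to a 1x6 row gives a 7x6 matrix.
X = x .^ (0:5);

% The hypothesis is linear in theta: it is a plain matrix-vector product
theta = [1; 0.5; -2; 0; 0.3; 0.1];   % arbitrary illustrative parameters, theta_0 first
h = X * theta;

% Same values as writing the fifth-order polynomial out term by term
h_explicit = theta(1) + theta(2)*x + theta(3)*x.^2 + theta(4)*x.^3 ...
           + theta(5)*x.^4 + theta(6)*x.^5;
disp(max(abs(h - h_explicit)))       % difference is floating-point round-off only
```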

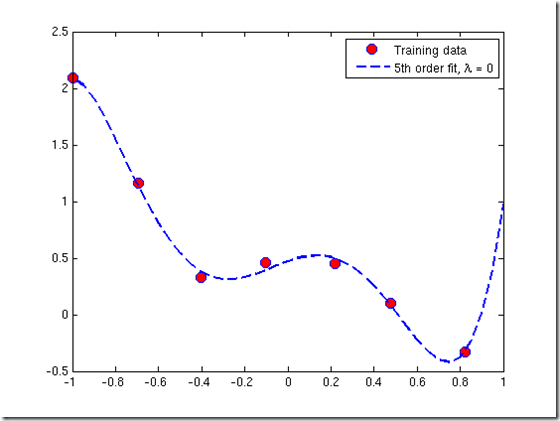

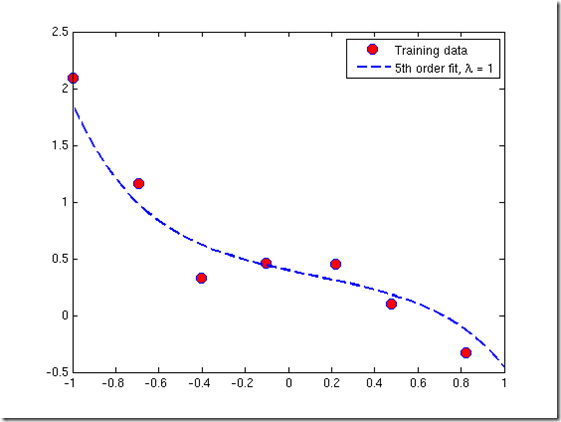

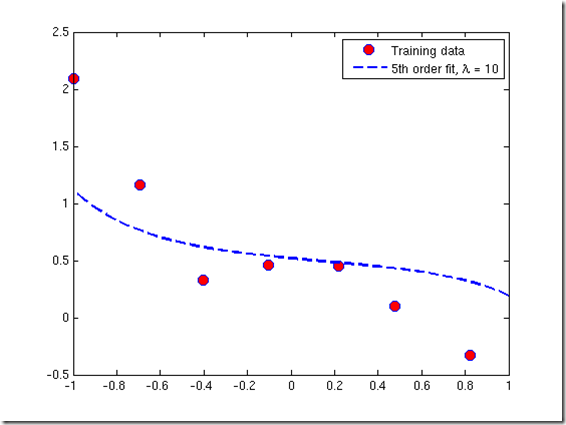

Since we are fitting a 5th-order polynomial to a data set of only 7 points, over-fitting is likely to occur. To guard against this, we will use regularization in our model.

Recall that in regularization problems, the goal is to minimize the following cost function with respect to $\theta$:

$$J(\theta) = \frac{1}{2m}\left[\sum_{i=1}^{m}\left(h_\theta(x^{(i)}) - y^{(i)}\right)^2 + \lambda\sum_{j=1}^{n}\theta_j^2\right]$$

The regularization parameter $\lambda$ is a control on your fitting parameters. As the magnitudes of the fitting parameters increase, there will be an increasing penalty on the cost function. This penalty depends on the squares of the parameters as well as on the magnitude of $\lambda$. Also, notice that the summation after $\lambda$ does not include $\theta_0^2$.

The larger $\lambda$ is, the simpler the trained model becomes, because the penalty contributed by the second term grows.
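Below is a minimal MATLAB/Octave sketch of the regularized cost function described above, written as an anonymous function. The toy data and the name `J` are assumptions made for illustration. `theta(1)` stands for $\theta_0$ and is left out of the penalty, as the formula requires. The toy parameters fit the data exactly, so any nonzero cost comes entirely from the $\lambda$ term.

```matlab
% Regularized least-squares cost J(theta).
% X: m-by-(n+1) design matrix whose first column is all ones; y: m-by-1 targets.
% theta(2:end) are theta_1..theta_n; theta(1) (= theta_0) is not penalized.
J = @(theta, X, y, lambda) ...
    (sum((X*theta - y).^2) + lambda * sum(theta(2:end).^2)) / (2*numel(y));

% Hypothetical toy data, just to show how the penalty grows with lambda
X = [ones(3, 1) [1; 2; 3]];
y = [2; 4; 6];
theta = [0; 2];          % fits the toy data exactly, so the squared error is 0
J(theta, X, y, 0)        % 0: no error and no penalty
J(theta, X, y, 10)       % (10 * 2^2) / (2*3) = 40/6, about 6.6667
```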

Normal equations

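Now we will find the best parameters of our model using the normal equations. Recall that the normal equations solution to regularized linear regression is

$$\theta = \left(X^T X + \lambda \begin{bmatrix} 0 & & & \\ & 1 & & \\ & & \ddots & \\ & & & 1 \end{bmatrix}\right)^{-1} X^T \vec{y}$$

The matrix following $\lambda$ is an $(n+1)\times(n+1)$ diagonal matrix with a zero in the upper left and ones down the other diagonal entries. (Remember that $n$ is the number of features, not counting the intercept term.) The vector $\vec{y}$ and the matrix $X$ have the same definitions they had for unregularized regression:

$$X = \begin{bmatrix} (x^{(1)})^T \\ \vdots \\ (x^{(m)})^T \end{bmatrix}, \qquad \vec{y} = \begin{bmatrix} y^{(1)} \\ \vdots \\ y^{(m)} \end{bmatrix}$$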
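The closed-form solution for regularized linear regression can be obtained by setting the gradient of $J(\theta)$ to zero. Below is a short derivation sketch. The symbol $L$ for the diagonal matrix that follows $\lambda$ is our own notation. It uses $\theta^T L \theta = \sum_{j=1}^{n}\theta_j^2$, because the zero in the upper left drops $\theta_0$.

```latex
\begin{aligned}
J(\theta) &= \frac{1}{2m}\left[(X\theta - \vec{y})^T (X\theta - \vec{y}) + \lambda\,\theta^T L\,\theta\right],
\qquad L = \operatorname{diag}(0, 1, \ldots, 1) \\
\nabla_\theta J(\theta) &= \frac{1}{m}\left[X^T (X\theta - \vec{y}) + \lambda L\theta\right] = 0 \\
\Longrightarrow\quad (X^T X + \lambda L)\,\theta &= X^T \vec{y} \\
\Longrightarrow\quad \theta &= (X^T X + \lambda L)^{-1} X^T \vec{y}
\end{aligned}
```

Suppose $\lambda > 0$ and the first column of $X$ is all ones. Then $X^T X + \lambda L$ is positive definite and therefore invertible, even when $X^T X$ alone is singular.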

Using this equation, find values for $\theta$ using the three regularization parameters below:

a. $\lambda = 0$ (this is the same case as non-regularized linear regression)

b. $\lambda = 1$

c. $\lambda = 10$
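This MATLAB/Octave sketch checks two claims from this section on made-up data. The 7 targets below are hypothetical, not the contents of ex5Liny.dat.

- With $\lambda = 0$, the regularized normal equations give the same $\theta$ as ordinary least squares.
- The size of the penalized parameters $\theta_1,\dots,\theta_5$ does not grow as $\lambda$ goes from 0 to 1 to 10.

The sketch uses the backslash operator rather than `inv`; it solves the same linear system with better numerical behavior.

```matlab
% Hypothetical synthetic data (NOT ex5Linx.dat / ex5Liny.dat): 7 made-up points
x = linspace(-1, 1, 7)';
y = [0.9; 0.4; 0.1; 0.0; 0.2; 0.5; 1.1];
X = x .^ (0:5);                    % columns x^0 ... x^5 (implicit expansion)
n = size(X, 2) - 1;                % number of features, not counting the intercept

L = diag([0; ones(n, 1)]);         % zero in the upper left: theta_0 is not penalized
lambdas = [0 1 10];
theta = zeros(n + 1, numel(lambdas));
for k = 1:numel(lambdas)
    % Solve (X'X + lambda*L) * theta = X'y with backslash instead of inv()
    theta(:, k) = (X' * X + lambdas(k) * L) \ (X' * y);
end

% Claim 1: lambda = 0 reproduces ordinary (unregularized) least squares
ls_gap = norm(theta(:, 1) - X \ y)

% Claim 2: the norm of theta_1..theta_n does not increase as lambda increases
penalized_norm = sqrt(sum(theta(2:end, :) .^ 2, 1))
```

Only $\theta_1,\dots,\theta_n$ are guaranteed to shrink. $\theta_0$ is not penalized, so it can move in either direction as $\lambda$ changes.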

Code

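```matlab
clc, clear

% Load the data
x = load('ex5Linx.dat');
y = load('ex5Liny.dat');

% Plot the raw data
plot(x, y, 'o', 'MarkerEdgeColor', 'b', 'MarkerFaceColor', 'r')

% Turn the single feature into the training-sample matrix [1 x x^2 x^3 x^4 x^5]
x = [ones(length(x), 1) x x.^2 x.^3 x.^4 x.^5];
[m, n] = size(x);
n = n - 1;                      % number of features, not counting the intercept

% Compute the parameters sida (theta) and plot the fitted curves
rm = diag([0; ones(n, 1)]);     % the matrix that follows lamda in the normal equations
lamda = [0 1 10]';
colortype = {'g', 'b', 'r'};
sida = zeros(n + 1, 3);         % initialize the parameters sida, one column per lamda
xrange = linspace(min(x(:, 2)), max(x(:, 2)))';
hold on;
for i = 1:3
    % Solve (x'*x + lamda*rm) * sida = x'*y; backslash avoids forming inv(...) explicitly
    sida(:, i) = (x' * x + lamda(i) * rm) \ (x' * y);
    norm_sida = norm(sida(:, i))    % 2-norm of this lamda's parameters (printed)
    yrange = [ones(size(xrange)) xrange xrange.^2 xrange.^3, ...
              xrange.^4 xrange.^5] * sida(:, i);
    plot(xrange', yrange, colortype{i})
end
% '\lambda' is rendered as the Greek letter by the default TeX interpreter
legend('training data', '\lambda=0', '\lambda=1', '\lambda=10')
hold off
```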
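The `yrange` line in the code above writes out every power of `xrange` by hand. The built-in `polyval` function, available in both MATLAB and Octave, can evaluate the same polynomial instead; this is an alternative, not what the original code does. The coefficients must be reversed first, because `polyval` expects the highest power first while `sida`/`theta` stores $\theta_0$ first. The parameter values in this sketch are hypothetical.

```matlab
% Hypothetical parameters, stored theta_0 first (same layout as sida(:, i))
theta = [1; 0.5; -2; 0; 0.3; 0.1];
xrange = linspace(-1, 1, 100)';

% polyval wants [theta_5 ... theta_0], hence flipud
y_polyval = polyval(flipud(theta), xrange);

% Same polynomial via the design-matrix form (implicit expansion)
y_explicit = (xrange .^ (0:5)) * theta;

max(abs(y_polyval - y_explicit))   % round-off only
```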
