Linear Decoders

Sparse Autoencoder Recap

In the sparse autoencoder, we had 3 layers of neurons: an input layer, a hidden layer and an output layer. In our previous description of autoencoders (and of neural networks), every neuron in the neural network used the same activation function. In these notes, we describe a modified version of the autoencoder in which some of the neurons use a different activation function. This will result in a model that is sometimes simpler to apply, and can also be more robust to variations in the parameters.

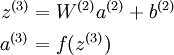

Recall that each neuron (in the output layer) computed the following:

where a(3) is the output. In the autoencoder, a(3) is our approximate reconstruction of the input x = a(1).

Because we used a sigmoid activation function for f(z(3)), we needed to constrain or scale the inputs to be in the range[0,1], since the sigmoid function outputs numbers in the range [0,1].

引入 —— 相同的activation function ,非线性映射会导致输入和输出不等

Linear Decoder

One easy fix for this problem is to set a(3) = z(3). Formally, this is achieved by having the output nodes use an activation function that's the identity function f(z) = z, so that a(3) = f(z(3)) = z(3). 输出结点不适用sigmoid 函数

This particular activation function

is called the linear activation function。Note however that in the hidden layer of the network, we still use a sigmoid (or tanh) activation function, so that the hidden unit activations are given by (say)

, where

is the sigmoid function, x is the input, and W(1) andb(1) are the weight and bias terms for the hidden units. It is only in the output layer that we use the linear activation function.

An autoencoder in this configuration--with a sigmoid (or tanh) hidden layer and a linear output layer--is called a linear decoder.

In this model, we have

. Because the output

is a now linear function of the hidden unit activations, by varying W(2), each output unit a(3) can be made to produce values greater than 1 or less than 0 as well. This allows us to train the sparse autoencoder real-valued inputs without needing to pre-scale every example to a specific range.

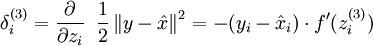

Since we have changed the activation function of the output units, the gradients of the output units also change. Recall that for each output unit, we had set set the error terms as follows:

where y = x is the desired output,

is the output of our autoencoder, and

is our activation function. Because in the output layer we now have f(z) = z, that implies f'(z) = 1 and thus the above now simplifies to:

output结点

Of course, when using backpropagation to compute the error terms for the hidden layer:

hidden结点

Because the hidden layer is using a sigmoid (or tanh) activation f, in the equation above

should still be the derivative of the sigmoid (or tanh) function.

Linear Decoders的更多相关文章

- (六)6.16 Neurons Networks linear decoders and its implements

Sparse AutoEncoder是一个三层结构的网络,分别为输入输出与隐层,前边自编码器的描述可知,神经网络中的神经元都采用相同的激励函数,Linear Decoders 修改了自编码器的定义,对 ...

- CS229 6.16 Neurons Networks linear decoders and its implements

Sparse AutoEncoder是一个三层结构的网络,分别为输入输出与隐层,前边自编码器的描述可知,神经网络中的神经元都采用相同的激励函数,Linear Decoders 修改了自编码器的定义,对 ...

- Unsupervised Feature Learning and Deep Learning(UFLDL) Exercise 总结

7.27 暑假开始后,稍有时间,“搞完”金融项目,便开始跑跑 Deep Learning的程序 Hinton 在Nature上文章的代码 跑了3天 也没跑完 后来Debug 把batch 从200改到 ...

- Deep learning:三十八(Stacked CNN简单介绍)

http://www.cnblogs.com/tornadomeet/archive/2013/05/05/3061457.html 前言: 本节主要是来简单介绍下stacked CNN(深度卷积网络 ...

- [UFLDL] Basic Concept

博客内容取材于:http://www.cnblogs.com/tornadomeet/archive/2012/06/24/2560261.html 参考资料: UFLDL wiki UFLDL St ...

- [UFLDL] Dimensionality Reduction

博客内容取材于:http://www.cnblogs.com/tornadomeet/archive/2012/06/24/2560261.html Deep learning:三十五(用NN实现数据 ...

- Deep Learning 教程翻译

Deep Learning 教程翻译 非常激动地宣告,Stanford 教授 Andrew Ng 的 Deep Learning 教程,于今日,2013年4月8日,全部翻译成中文.这是中国屌丝军团,从 ...

- ICLR 2013 International Conference on Learning Representations深度学习论文papers

ICLR 2013 International Conference on Learning Representations May 02 - 04, 2013, Scottsdale, Arizon ...

- Deep Learning基础--线性解码器、卷积、池化

本文主要是学习下Linear Decoder已经在大图片中经常采用的技术convolution和pooling,分别参考网页http://deeplearning.stanford.edu/wiki/ ...

随机推荐

- JavaScript,ES5和ES6的区别

什么是JavaScript JavaScript一种动态类型.弱类型.基于原型的客户端脚本语言,用来给HTML网页增加动态功能.(好吧,概念什么最讨厌了) 动态: 在运行时确定数据类型.变量使用之前不 ...

- Linux和Windows系统的远程桌面访问知识(转载)

为新手讲解Linux和Windows系统的远程桌面访问知识 很多新手都是使用Linux和Windows双系统的,它们之间的远程桌面访问是如何连接的,我们就为新手讲解Linux和Windows系统的 ...

- JS由Number与new Number的区别引发的思考

在回答园子问题的时候发现了不少新东西,写下来分享一下 == 下面的图就是此篇的概览,另外文章的解释不包括ES6新增的Symbol,话说这货有包装类型,但是不能new... 基于JS是面向对象的,所以我 ...

- TP5 belongsTo 和 hasOne的区别

hasOne和belongsTo这两种方法都可以应用在一对一关联上,但是他们也是有区别的: belongsTo: 从属关系:就是谁为主的问题 A:{id,name,sex} B:{id,name.A_ ...

- neo4j nosql图数据库学习

neo4j 文档:https://neo4j.com/docs/getting-started/current/cypher-intro/ 1.索引 # 给某类标签节点的属性添加索引 CREATE I ...

- 洛谷P2196 && caioj 1415 动态规划6:挖地雷

没看出来动规怎么做,看到n <= 20,直接一波暴搜,过了. #include<cstdio> #include<cstring> #include<algorit ...

- js实现复选框的操作-------Day41

不知道之前的一篇为什么一直处于审核阶段.难道有哪个词语是敏感词被河蟹了? 无论了,又一次写了这篇,也算是加深记忆吧. 首先要写的是今天在进行表格数据操作时用到的对复选框checkbox的全选和全不选, ...

- linux命令su与su-的差别

su命令和su -命令最大的本质差别就是: su仅仅是切换了root身份.但Shell环境仍然是普通用户的Shell. 而su -连用户和Shell环境一起切换成root身份了. 仅仅有切换了Shel ...

- vim使用(二):经常使用功能

1. vim经常使用功能 vim的经常使用功能.包含块的选择.复制,多文件的编辑.多窗体等功能. 2. vim块选择 块选择是将文档中的一块能够选择复制,粘贴,不用整行的处理. 按下 v , V . ...

- 烦人的Facebook分享授权

开发端授权app权限 facebook要求提交应用到他们平台, 并且还限制100mb, 坑爹死了, 果断使用google drive分享给他们, 最開始不确定分享给他们什么样的程序包, 结果审核没通过 ...