【转帖】You can now run a GPT-3-level AI model on your laptop, phone, and Raspberry Pi

https://arstechnica.com/information-technology/2023/03/you-can-now-run-a-gpt-3-level-ai-model-on-your-laptop-phone-and-raspberry-pi/

Things are moving at lightning speed in AI Land. On Friday, a software developer named Georgi Gerganov created a tool called "llama.cpp" that can run Meta's new GPT-3-class AI large language model, LLaMA, locally on a Mac laptop. Soon thereafter, people worked out how to run LLaMA on Windows as well. Then someone showed it running on a Pixel 6 phone, and next came a Raspberry Pi (albeit running very slowly).

If this keeps up, we may be looking at a pocket-sized ChatGPT competitor before we know it.

But let's back up a minute, because we're not quite there yet. (At least not today—as in literally today, March 13, 2023.) But what will arrive next week, no one knows.

Since ChatGPT launched, some people have been frustrated by the AI model's built-in limits that prevent it from discussing topics that OpenAI has deemed sensitive. Thus began the dream—in some quarters—of an open source large language model (LLM) that anyone could run locally without censorship and without paying API fees to OpenAI.

Open source solutions do exist (such as GPT-J), but they require a lot of GPU RAM and storage space. Other open source alternatives could not boast GPT-3-level performance on readily available consumer-level hardware.

Enter LLaMA, an LLM available in parameter sizes ranging from 7B to 65B (that's "B" as in "billion parameters," which are floating point numbers stored in matrices that represent what the model "knows"). LLaMA made a heady claim: that its smaller-sized models could match OpenAI's GPT-3, the foundational model that powers ChatGPT, in the quality and speed of its output. There was just one problem—Meta released the LLaMA code open source, but it held back the "weights" (the trained "knowledge" stored in a neural network) for qualified researchers only.

Advertisement

Flying at the speed of LLaMA

Meta's restrictions on LLaMA didn't last long, because on March 2, someone leaked the LLaMA weights on BitTorrent. Since then, there has been an explosion of development surrounding LLaMA. Independent AI researcher Simon Willison has compared this situation to the release of Stable Diffusion, an open source image synthesis model that launched last August. Here's what he wrote in a post on his blog:

It feels to me like that Stable Diffusion moment back in August kick-started the entire new wave of interest in generative AI—which was then pushed into over-drive by the release of ChatGPT at the end of November.

That Stable Diffusion moment is happening again right now, for large language models—the technology behind ChatGPT itself. This morning I ran a GPT-3 class language model on my own personal laptop for the first time!

AI stuff was weird already. It’s about to get a whole lot weirder.

Typically, running GPT-3 requires several datacenter-class A100 GPUs (also, the weights for GPT-3 are not public), but LLaMA made waves because it could run on a single beefy consumer GPU. And now, with optimizations that reduce the model size using a technique called quantization, LLaMA can run on an M1 Mac or a lesser Nvidia consumer GPU (although "llama.cpp" only runs on CPU at the moment—which is impressive and surprising in its own way).

Things are moving so quickly that it's sometimes difficult to keep up with the latest developments. (Regarding AI's rate of progress, a fellow AI reporter told Ars, "It's like those videos of dogs where you upend a crate of tennis balls on them. [They] don't know where to chase first and get lost in the confusion.")

For example, here's a list of notable LLaMA-related events based on a timeline Willison laid out in a Hacker News comment:

- February 24, 2023: Meta AI announces LLaMA.

- March 2, 2023: Someone leaks the LLaMA models via BitTorrent.

- March 10, 2023: Georgi Gerganov creates llama.cpp, which can run on an M1 Mac.

- March 11, 2023: Artem Andreenko runs LLaMA 7B (slowly) on a Raspberry Pi 4, 4GB RAM, 10 sec/token.

- March 12, 2023: LLaMA 7B running on NPX, a node.js execution tool.

- March 13, 2023: Someone gets llama.cpp running on a Pixel 6 phone, also very slowly.

- March 13, 2023, 2023: Stanford releases Alpaca 7B, an instruction-tuned version of LLaMA 7B that "behaves similarly to OpenAI's "text-davinci-003" but runs on much less powerful hardware.

Advertisement

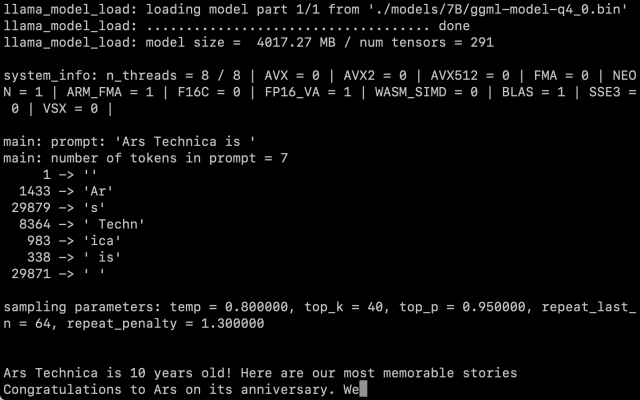

After obtaining the LLaMA weights ourselves, we followed Willison's instructions and got the 7B parameter version running on an M1 Macbook Air, and it runs at a reasonable rate of speed. You call it as a script on the command line with a prompt, and LLaMA does its best to complete it in a reasonable way.

There's still the question of how much the quantization affects the quality of the output. In our tests, LLaMA 7B trimmed down to 4-bit quantization was very impressive for running on a MacBook Air—but still not on par with what you might expect from ChatGPT. It's entirely possible that better prompting techniques might generate better results.

Also, optimizations and fine-tunings come quickly when everyone has their hands on the code and the weights—even though LLaMA is still saddled with some fairly restrictive terms of use. The release of Alpaca today by Stanford proves that fine tuning (additional training with a specific goal in mind) can improve performance, and it's still early days after LLaMA's release.

FURTHER READING

As of this writing, running LLaMA on a Mac remains a fairly technical exercise. You have to install Python and Xcode and be familiar with working on the command line. Willison has good step-by-step instructions for anyone who would like to attempt it. But that may soon change as developers continue to code away.

As for the implications of having this tech out in the wild—no one knows yet. While some worry about AI's impact as a tool for spam and misinformation, Willison says, "It’s not going to be un-invented, so I think our priority should be figuring out the most constructive possible ways to use it."

Right now, our only guarantee is that things will change rapidly.

【转帖】You can now run a GPT-3-level AI model on your laptop, phone, and Raspberry Pi的更多相关文章

- Raspberry Pi 3 --- Kernel Building and Run in A New Version Kernal

ABSTRACT There are two main methods for building the kernel. You can build locally on a Raspberry Pi ...

- OSMC Vs. OpenELEC Vs. LibreELEC – Kodi Operating System Comparison

Kodi's two slim-and-trim kid brothers LibreELEC and OpenELEC were once great solutions for getting t ...

- Jenkins报错Caused: java.io.IOException: Cannot run program "sh" (in directory "D:\Jenkins\Jenkins_home\workspace\jmeter_test"): CreateProcess error=2, 系统找不到指定的文件。

想在本地执行我的python文件,我本地搭建了一个Jenkins,使用了execute shell来运行我的脚本,发现报错 [jmeter_test] $ sh -xe D:\tomcat\apach ...

- FAT-fs (mmcblk0p1): Volume was not properly unmounted. Some data may be corrupt. Please run fsck.

/******************************************************************************** * FAT-fs (mmcblk0p ...

- (转载)如何创建一个以管理员权限运行exe 的快捷方式? How To Create a Shortcut That Lets a Standard User Run An Application as Administrator

How To Create a Shortcut That Lets a Standard User Run An Application as Administrator by Chris Hoff ...

- java.io.IOException: Cannot run program "/opt/jdk1.8.0_191/bin/java" (in directory "/var/lib/jenkins/workspace/xinguan"): error=2, No such file or directory

测试jenkins构建,报错如下 Parsing POMs Established TCP socket on 44463 [xinguan] $ /opt/jdk1.8.0_191/bin/java ...

- 【协作式原创】自己动手写docker之run代码解析

linux预备知识 urfave cli预备知识 准备工作 阿里云抢占式实例:centos7.4 每次实例释放后都要重新安装go wget https://dl.google.com/go/go1.1 ...

- FreeBSD 10 发布

发行注记:http://www.freebsd.org/releases/10.0R/relnotes.html 下文翻译中... 主要有安全问题修复.新的驱动与硬件支持.新的命名/选项.主要bug修 ...

- 【Xamarin挖墙脚系列:Mono项目的图标为啥叫Mono】

因为发起人大Boss :Miguel de lcaza 是西班牙人,喜欢猴子.................就跟Hadoop的创始人的闺女喜欢大象一样...................... 历 ...

- Win8.1重装win7或win10中途无法安装

一.有的是usb识别不了,因为新的机器可能都是USB3.0的,安装盘是Usb2.0的. F12更改系统BIOS设置,我改了三个地方: 1.设置启动顺序为U盘启动 2.关闭了USB3.0 control ...

随机推荐

- GaussDB技术解读丨数据库迁移创新实践

本文分享自华为云社区<DTCC 2023专家解读丨GaussDB技术解读系列之数据库迁移创新实践>,作者:GaussDB 数据库. 近日,以"数智赋能 共筑未来"为主题 ...

- 云小课|云数据库RDS实例连接失败了?送你7大妙招轻松应对

摘要:自从购买了RDS实例,连接失败的问题就伴随着我,真是太难了.不要害怕,不要着急,跟着小云妹,读了本篇云小课,让你风里雨里,实例连接自此畅通无阻! 顺着以下几个方面进行排查,问题就可以迎刃而解~ ...

- 华为云PB级数据库GaussDB(for Redis)介绍第四期:高斯 Geo的介绍与应用

摘要:高斯Redis的大规模地理位置信息存储的解决方案. 1.背景 LBS(Location Based Service,基于位置的服务)有非常广泛的应用场景,最常见的应用就是POI(Point of ...

- 探索开源工作流引擎Azkaban在MRS中的实践

摘要:本文主要介绍如何在华为云上从0-1搭建azkaban并指导用户如何提交作业至MRS. 本文分享自华为云社区<开源工作流引擎Azkaban在MRS中的实践>,作者:啊喔YeYe. 环境 ...

- M-SQL:超强的多任务表示学习方法

摘要:本篇文章将硬核讲解M-SQL:一种将自然语言转换为SQL语句的多任务表示学习方法的相关论文. 本文分享自华为云社区<[云驻共创]M-SQL,一种超强的多任务表示学习方法,你值得拥有> ...

- CNCF即将推出平台成熟度模型丨亮点导览

今年年初,云原生计算基金会(CNCF)发布了平台白皮书(点击这里查看中文版本).白皮书描述了云计算内部平台是什么,以及它们可以为企业提供的价值. 为了进一步挖掘平台对企业的价值,为企业提供一个可以评估 ...

- [BitSail] Connector开发详解系列四:Sink、Writer

更多技术交流.求职机会,欢迎关注字节跳动数据平台微信公众号,回复[1]进入官方交流群 Sink Connector BitSail Sink Connector交互流程介绍 Sink:数据写入组件的生 ...

- 火山引擎DataLeap数据质量动态探查及相关前端实现

更多技术交流.求职机会,欢迎关注字节跳动数据平台微信公众号,回复[1]进入官方交流群 需求背景 火山引擎DataLeap数据探查上线之前,数据验证都是通过写SQL方式进行查询的,从编写SQL,到解析运 ...

- 错误: -source 1.7 中不支持 lambda 表达式 (请使用 -source 8 或更高版本以启用 lambda 表达式)

Modules 把 Language level 调成 8

- java大纲图解