ResNet in Practice

# Resnet.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers, Sequential


class BasicBlock(layers.Layer):
    """Residual block: two 3x3 convolutions plus a shortcut connection."""

    def __init__(self, filter_num, stride=1):
        super(BasicBlock, self).__init__()
        self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')
        self.bn1 = layers.BatchNormalization()
        self.relu = layers.Activation('relu')
        self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')
        self.bn2 = layers.BatchNormalization()
        if stride != 1:
            # 1x1 convolution so the shortcut matches the main path's shape
            self.downsample = Sequential()
            self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))
        else:
            self.downsample = lambda x: x

    def call(self, inputs, training=None):
        # inputs: [b, h, w, c]
        out = self.conv1(inputs)
        out = self.bn1(out, training=training)
        out = self.relu(out)
        out = self.conv2(out)
        out = self.bn2(out, training=training)
        identity = self.downsample(inputs)
        output = layers.add([out, identity])
        output = tf.nn.relu(output)
        # return the sum with the shortcut, not the bare convolution branch `out`
        return output
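Why the shortcut needs a 1x1 convolution when `stride != 1` can be checked with plain shape arithmetic. The following is a minimal, framework-free sketch. The helper names `conv_out_size` and `basic_block_shapes` are hypothetical and do not appear in the original code. It assumes the usual padding rules: `'same'` gives `ceil(n / stride)` and `'valid'` gives `floor((n - k) / stride) + 1`.

```python
import math


def conv_out_size(n, kernel, stride, padding):
    """Spatial output size of a convolution along one axis."""
    if padding == "same":
        return math.ceil(n / stride)
    if padding == "valid":
        return (n - kernel) // stride + 1
    raise ValueError(f"unknown padding: {padding}")


def basic_block_shapes(h, w, c_in, filter_num, stride):
    """Return (main_path_shape, shortcut_shape) as (h, w, c) tuples."""
    # main path: conv1 (3x3, given stride, 'same'), then conv2 (3x3, stride 1, 'same')
    mh = conv_out_size(conv_out_size(h, 3, stride, "same"), 3, 1, "same")
    mw = conv_out_size(conv_out_size(w, 3, stride, "same"), 3, 1, "same")
    main = (mh, mw, filter_num)
    if stride != 1:
        # shortcut: 1x1 convolution with the same stride (default 'valid' padding)
        shortcut = (
            conv_out_size(h, 1, stride, "valid"),
            conv_out_size(w, 1, stride, "valid"),
            filter_num,
        )
    else:
        # shortcut: identity, so the input shape passes through unchanged
        shortcut = (h, w, c_in)
    return main, shortcut


if __name__ == "__main__":
    print(basic_block_shapes(30, 30, 64, 64, 1))
    print(basic_block_shapes(30, 30, 64, 128, 2))
```

The element-wise add only works when both tuples agree. Note that the identity shortcut preserves the channel count as well, so a stride-1 block is only valid when `c_in == filter_num`.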

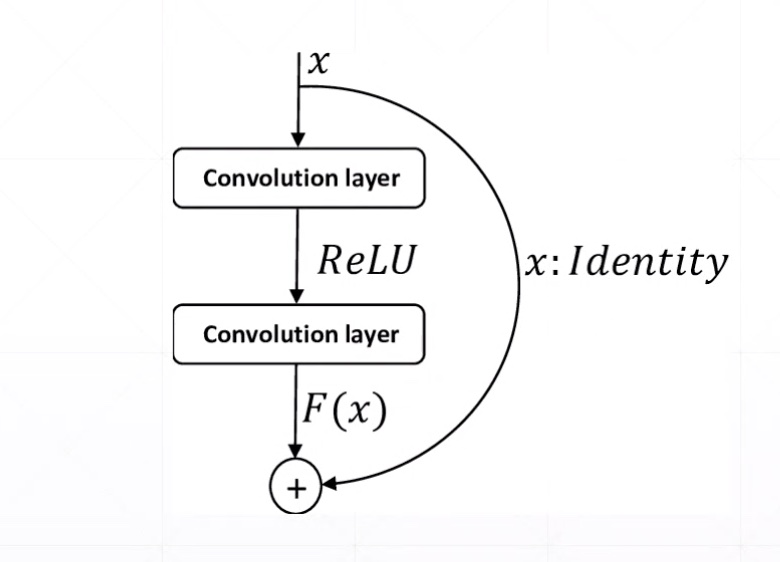

Res Block

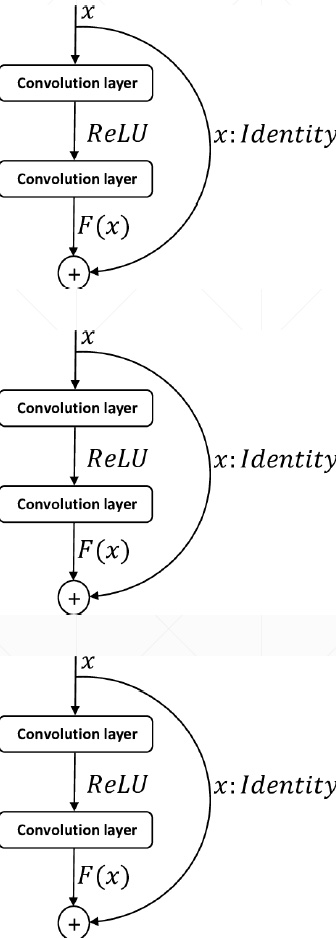

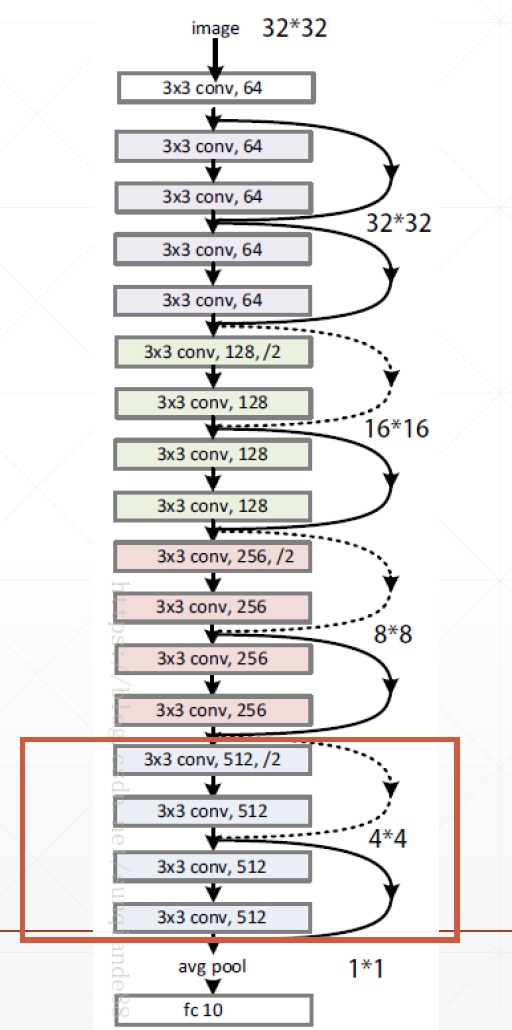

ResNet18


# Resnet.py (continued): the ResNet model, built from BasicBlock

class ResNet(keras.Model):

    def __init__(self, layer_dims, num_classes=100):  # layer_dims, e.g. [2, 2, 2, 2]
        super(ResNet, self).__init__()
        # stem
        self.stem = Sequential([
            layers.Conv2D(64, (3, 3), strides=(1, 1)),
            layers.BatchNormalization(),
            layers.Activation('relu'),
            layers.MaxPool2D(pool_size=(2, 2), strides=(1, 1), padding='same'),
        ])
        # 64, 128, 256, 512 are the channel counts of the four stages
        self.layer1 = self.build_resblock(64, layer_dims[0])
        self.layer2 = self.build_resblock(128, layer_dims[1], stride=2)
        self.layer3 = self.build_resblock(256, layer_dims[2], stride=2)
        self.layer4 = self.build_resblock(512, layer_dims[3], stride=2)
        # output of layer4: [b, h, w, 512]
        self.avgpool = layers.GlobalAveragePooling2D()
        self.fc = layers.Dense(num_classes)  # classification head

    def call(self, inputs, training=None):
        x = self.stem(inputs, training=training)
        x = self.layer1(x, training=training)
        x = self.layer2(x, training=training)
        x = self.layer3(x, training=training)
        x = self.layer4(x, training=training)
        # [b, h, w, 512] => [b, 512]
        x = self.avgpool(x)
        # [b, 512] => [b, num_classes]
        x = self.fc(x)
        return x

    def build_resblock(self, filter_num, blocks, stride=1):
        res_blocks = Sequential()
        # only the first block of a stage may downsample
        res_blocks.add(BasicBlock(filter_num, stride))
        for _ in range(1, blocks):
            res_blocks.add(BasicBlock(filter_num, stride=1))
        return res_blocks


def resnet18():
    return ResNet([2, 2, 2, 2])


def resnet34():
    return ResNet([3, 4, 6, 3])
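The names `resnet18` and `resnet34` follow from the `layer_dims` lists. A small sketch of the usual counting convention is shown here: one stem convolution, two 3x3 convolutions per `BasicBlock`, and one final dense layer. The 1x1 shortcut convolutions are not counted. The function name `weighted_layer_count` is hypothetical and only serves this illustration.

```python
def weighted_layer_count(layer_dims, convs_per_block=2):
    """Stem conv + convs inside all residual blocks + final dense layer."""
    stem_conv = 1
    dense = 1
    return stem_conv + convs_per_block * sum(layer_dims) + dense


if __name__ == "__main__":
    print(weighted_layer_count([2, 2, 2, 2]))
    print(weighted_layer_count([3, 4, 6, 3]))
```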

# resnet18_train.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-
import os

# set before importing TensorFlow so the C++ log level takes effect
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf
from tensorflow.keras import optimizers, datasets

from Resnet import resnet18

tf.random.set_seed(2345)


def preprocess(x, y):
    # scale pixels from [0, 255] to [-0.5, 0.5]
    x = tf.cast(x, dtype=tf.float32) / 255. - 0.5
    y = tf.cast(y, dtype=tf.int32)
    return x, y


(x, y), (x_test, y_test) = datasets.cifar100.load_data()
y = tf.squeeze(y, axis=1)
y_test = tf.squeeze(y_test, axis=1)
print(x.shape, y.shape, x_test.shape, y_test.shape)

train_db = tf.data.Dataset.from_tensor_slices((x, y))
train_db = train_db.shuffle(1000).map(preprocess).batch(512)

test_db = tf.data.Dataset.from_tensor_slices((x_test, y_test))
test_db = test_db.map(preprocess).batch(512)

sample = next(iter(train_db))
print('sample:', sample[0].shape, sample[1].shape,
      tf.reduce_min(sample[0]), tf.reduce_max(sample[0]))


def main():
    # [b, 32, 32, 3] => [b, 100]
    model = resnet18()
    model.build(input_shape=(None, 32, 32, 3))
    model.summary()
    # `lr` is a deprecated alias; use `learning_rate`
    optimizer = optimizers.Adam(learning_rate=1e-3)

    for epoch in range(500):
        for step, (x, y) in enumerate(train_db):
            with tf.GradientTape() as tape:
                # [b, 32, 32, 3] => [b, 100]; training=True so BatchNormalization uses batch statistics
                logits = model(x, training=True)
                # [b] => [b, 100]
                y_onehot = tf.one_hot(y, depth=100)
                # compute loss
                loss = tf.losses.categorical_crossentropy(y_onehot, logits, from_logits=True)
                loss = tf.reduce_mean(loss)

            grads = tape.gradient(loss, model.trainable_variables)
            optimizer.apply_gradients(zip(grads, model.trainable_variables))

            if step % 50 == 0:
                print(epoch, step, 'loss:', float(loss))

        # evaluate on the test set after each epoch
        total_num = 0
        total_correct = 0
        for x, y in test_db:
            # training=False so BatchNormalization uses its moving statistics
            logits = model(x, training=False)
            prob = tf.nn.softmax(logits, axis=1)
            pred = tf.argmax(prob, axis=1)
            pred = tf.cast(pred, dtype=tf.int32)

            correct = tf.cast(tf.equal(pred, y), dtype=tf.int32)
            correct = tf.reduce_sum(correct)

            total_num += x.shape[0]
            total_correct += int(correct)

        acc = total_correct / total_num
        print(epoch, 'acc:', acc)


if __name__ == '__main__':
    main()

(50000, 32, 32, 3) (50000,) (10000, 32, 32, 3) (10000,)

sample: (512, 32, 32, 3) (512,) tf.Tensor(-0.5, shape=(), dtype=float32) tf.Tensor(0.5, shape=(), dtype=float32)
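The sample line above reports a minimum of -0.5 and a maximum of 0.5, which is exactly what dividing by 255 and subtracting 0.5 produces. The sketch below contrasts that with a scaling that would reach [-1, 1]. Both function names are hypothetical, and the second formula is offered only as an alternative, not as what the script does.

```python
def scale_centered(pixel):
    """Scaling used by preprocess(): maps [0, 255] to [-0.5, 0.5]."""
    return pixel / 255.0 - 0.5


def scale_unit(pixel):
    """Alternative scaling: maps [0, 255] to [-1, 1]."""
    return 2.0 * pixel / 255.0 - 1.0


if __name__ == "__main__":
    for p in (0, 255):
        print(p, scale_centered(p), scale_unit(p))
```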

Model: "res_net"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
sequential (Sequential)      multiple                  2048
_________________________________________________________________
sequential_1 (Sequential)    multiple                  148736
_________________________________________________________________
sequential_2 (Sequential)    multiple                  526976
_________________________________________________________________
sequential_4 (Sequential)    multiple                  2102528
_________________________________________________________________
sequential_6 (Sequential)    multiple                  8399360
_________________________________________________________________
global_average_pooling2d (Gl multiple                  0
_________________________________________________________________
dense (Dense)                multiple                  51300
=================================================================
Total params: 11,230,948
Trainable params: 11,223,140
Non-trainable params: 7,808
_________________________________________________________________
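The parameter counts in the summary can be reproduced by hand. The sketch below is plain Python with hypothetical helper names. It assumes every convolution has a bias, every `BatchNormalization` layer holds four vectors per channel (gamma, beta, moving mean, moving variance), and the 1x1 shortcut convolution exists only in blocks with `stride != 1`, as in the model code.

```python
def conv_params(k, c_in, c_out):
    """Kernel weights plus one bias per output channel."""
    return k * k * c_in * c_out + c_out


def bn_params(c):
    """gamma, beta, moving mean, moving variance."""
    return 4 * c


def basic_block_params(c_in, c_out, stride):
    total = conv_params(3, c_in, c_out) + conv_params(3, c_out, c_out)
    total += 2 * bn_params(c_out)
    if stride != 1:
        total += conv_params(1, c_in, c_out)  # 1x1 shortcut convolution
    return total


def stage_params(c_in, c_out, blocks, stride):
    # first block may change channels and stride; the rest keep both fixed
    total = basic_block_params(c_in, c_out, stride)
    total += (blocks - 1) * basic_block_params(c_out, c_out, 1)
    return total


def resnet_params(layer_dims, num_classes=100):
    """Per-row parameter counts: stem, four stages, dense layer."""
    rows = [conv_params(3, 3, 64) + bn_params(64)]
    c_in = 64
    for c_out, blocks, stride in zip([64, 128, 256, 512], layer_dims, [1, 2, 2, 2]):
        rows.append(stage_params(c_in, c_out, blocks, stride))
        c_in = c_out
    rows.append(512 * num_classes + num_classes)
    return rows


if __name__ == "__main__":
    rows = resnet_params([2, 2, 2, 2])
    print(rows, sum(rows))
```

For `[2, 2, 2, 2]` the rows match the table (2048, 148736, 526976, 2102528, 8399360, 51300) and sum to 11,230,948.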

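WARNING: Logging before flag parsing goes to stderr.

W0601 16:59:57.619546 4664264128 optimizer_v2.py:928] Gradients does not exist for variables ['sequential_2/basic_block_2/sequential_3/conv2d_7/kernel:0', 'sequential_2/basic_block_2/sequential_3/conv2d_7/bias:0', 'sequential_4/basic_block_4/sequential_5/conv2d_12/kernel:0', 'sequential_4/basic_block_4/sequential_5/conv2d_12/bias:0', 'sequential_6/basic_block_6/sequential_7/conv2d_17/kernel:0', 'sequential_6/basic_block_6/sequential_7/conv2d_17/bias:0'] when minimizing the loss.

0 0 loss: 4.60512638092041

The variables named in the warning are the 1x1 downsample convolutions on the shortcut path. They receive no gradient whenever `BasicBlock.call` returns `out` (the convolution branch alone) instead of `output` (the sum with the shortcut), so a correctly wired residual block should not trigger this warning.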
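The first logged loss, about 4.605, is a useful sanity check: it is close to ln(100), the cross-entropy of a uniform prediction over 100 classes, which is roughly what an untrained classifier is expected to output. A minimal sketch, with a hypothetical function name:

```python
import math


def uniform_cross_entropy(num_classes):
    """Cross-entropy when every class gets probability 1 / num_classes."""
    return -math.log(1.0 / num_classes)


if __name__ == "__main__":
    print(uniform_cross_entropy(100))
```

A starting loss far from this value would suggest a problem in the labels, the loss call, or the output layer.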

Out of memory

- decrease the batch size

- shrink the network by tuning the `[2, 2, 2, 2]` block counts passed to `ResNet`

- try Google Colab

- buy a new NVIDIA GPU
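The first suggestion works because activation memory grows linearly with the batch size. The sketch below gives a rough estimate for a single float32 feature map of size 30x30x64, which is the shape assumed after the stem for a 32x32 input (a 3x3 convolution without padding, then stride-1 pooling). The function name is hypothetical, and the estimate ignores weights, gradients, and framework workspace. A real training step keeps many such tensors alive at once.

```python
def activation_bytes(batch, h, w, channels, bytes_per_value=4):
    """Memory for one activation tensor of shape [batch, h, w, channels]."""
    return batch * h * w * channels * bytes_per_value


if __name__ == "__main__":
    for batch in (512, 128, 32):
        mib = activation_bytes(batch, 30, 30, 64) / 2**20
        print(f"batch={batch}: {mib:.1f} MiB per 30x30x64 feature map")
```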
