Why Deep Learning Works – Key Insights and Saddle Points

A quality discussion on the theoretical motivations for deep learning, including distributed representation, deep architecture, and the easily escapable saddle point.

This post summarizes the key points of a recent blog post by Rinu Boney, based on a lecture by Dr. Yoshua Bengio from this year's Deep Learning Summer School in Montreal, which discusses the theoretical motivations for deep learning.

"To generalize locally, we need representative examples for all relevant variations."

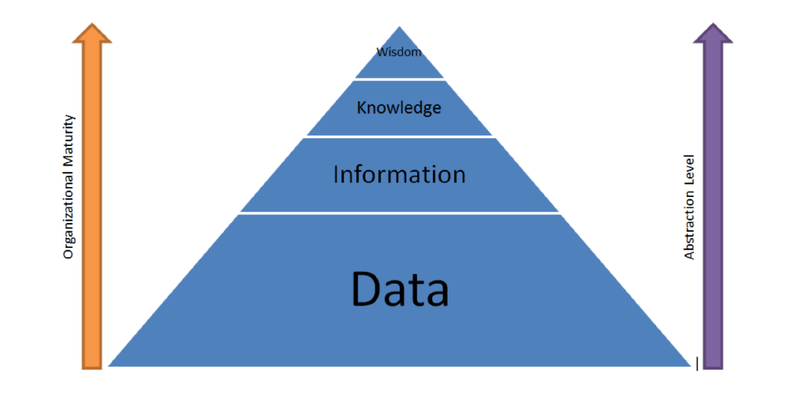

Deep learning is about learning multiple levels of representations, corresponding to multiple levels of abstractions. If we are able to learn these multiple levels of representation, we are able to generalize well.

After setting the general tone of the post with the above (paraphrased) statement, the author surveys a number of artificial intelligence (AI) strategies, from rule-based systems to deep learning, and notes the levels at which their components learn. He then states the three keys to moving from machine learning (ML) to true artificial intelligence: lots of data, very flexible models, and powerful priors. Since classical ML can handle the first two, his post deals with the third.

On the path toward AI from today's ML systems, we need learning, generalization, ways to fight the curse of dimensionality, and the ability to disentangle the underlying explanatory factors. Before explaining why non-parametric learning algorithms won't get us to true AI, he gives a nuanced definition of non-parametric. He explains why smoothness, a classical non-parametric approach, breaks down in high dimensions, and then provides the following insight about dimensionality:

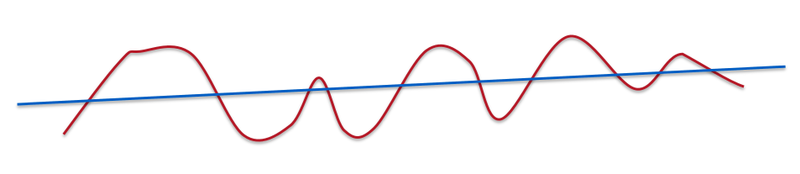

"If we dig deeper mathematically, it's not the number of dimensions but the number of variations of functions that we learn. In this case, smoothness is about how many ups and downs are present in the curve."

"A line is very smooth. A curve with some ups and downs is less smooth but still smooth."
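The link between "ups and downs" and the need for representative examples can be sketched with a toy experiment. This is an illustration only, not something taken from the lecture or from Boney's post: the sine target, the evenly spaced examples, and the helper names `target` and `nearest_neighbor_error` are all assumptions made here. A 1-nearest-neighbor predictor stands in for a learner that relies purely on local smoothness.

```python
import math


def target(x, bumps):
    """Toy target (assumed for illustration): a sine with `bumps` periods on [0, 1]."""
    return math.sin(2.0 * math.pi * bumps * x)


def nearest_neighbor_error(bumps, n_examples, n_test=1000):
    """Mean absolute error of a 1-nearest-neighbor predictor on the toy target."""
    # Evenly spaced training examples: a purely local learner only knows these values.
    train_x = [(j + 0.5) / n_examples for j in range(n_examples)]
    train_y = [target(x, bumps) for x in train_x]

    total = 0.0
    for i in range(n_test):
        x = (i + 0.5) / n_test
        # Predict by copying the value of the closest training example.
        nearest = min(range(n_examples), key=lambda j: abs(train_x[j] - x))
        total += abs(target(x, bumps) - train_y[nearest])
    return total / n_test


# Same curve (8 periods), increasing numbers of examples.
for n in (8, 40, 160):
    print(n, round(nearest_neighbor_error(bumps=8, n_examples=n), 3))
```

With only a handful of examples the predictor misses most of the variations, and the error drops only once there are several examples per up and down. In this particular setup the 8 examples happen to land on zero crossings, which makes the failure especially stark.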

So, it's clear that smoothness alone will not beat the curse of dimensionality. In fact, smoothness doesn't even apply to modern, complex problems like computer vision or natural language processing. After discussing the shortcomings of competing methods such as Gaussian kernels, Boney turns to moving past smoothness and why that is necessary:

"We want to be non-parametric in the sense that we want the family of functions to grow in flexibility as we get more data. In neural networks, we change the number of hidden units depending on the amount of data."

He notes that deep learning relies on two priors: distributed representations and deep architecture.

Why distributed representations?

"With distributed representations, it is possible to represent exponential number of regions with a linear number of parameters. The magic of distributed representation is that it can learn a very complicated function (with many ups and downs) with a low number of examples."

In distributed representations, features are individually and independently meaningful, and they remain so regardless of what the other features are. There may be some interactions, but most features are learned independently of each other. Boney states that neural networks are very good at learning representations that capture semantic aspects, and that their generalization power is derived from these representations. As a practical exploration of the topic, he recommends Christopher Olah's article for more on distributed representations and natural language processing.
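The "exponential number of regions with a linear number of parameters" claim can be made concrete with a deliberately simple sketch. The setup is an assumption made for illustration, not the construction used in the lecture: each of `n_features` detectors is a single threshold on its own input coordinate (one parameter each), and the helper names `distributed_code` and `count_regions` are hypothetical.

```python
from itertools import product


def distributed_code(point, thresholds):
    """Binary code of a point: one independent on/off feature per threshold."""
    return tuple(int(x > t) for x, t in zip(point, thresholds))


def count_regions(n_features):
    """Count distinct codes (regions) that n one-parameter features can tell apart."""
    thresholds = [0.5] * n_features  # n parameters in total
    # Probe one point on each side of every threshold, in every combination.
    probes = product([0.25, 0.75], repeat=n_features)
    return len({distributed_code(p, thresholds) for p in probes})


for n in (2, 4, 8):
    print(n, "features ->", count_regions(n), "regions")
```

Here the number of distinguishable regions doubles with every added feature, while the parameter count grows by one. A purely local representation, with one parameter per region, would need as many parameters as regions.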

There is a lot of misunderstanding about what depth means.

"Deeper networks does not correspond to a higher capacity. Deeper doesn't mean we can represent more functions. If the function we are trying to learn has a particular characteristic obtained through composition of many operations, then it is much better to approximate these functions with a deep neural network."
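One common way to illustrate the point about composition is a "tent" function built from two ReLU units and applied repeatedly. This example is not from the lecture or from Boney's post, and the function names are chosen here for illustration. Each extra layer adds two units but doubles the number of linear pieces, so a function with this compositional structure is far cheaper to express with depth than with a single wide layer.

```python
def relu(z):
    return max(z, 0.0)


def tent(x):
    """One layer with two ReLU units: rises 0 -> 1 on [0, 0.5], falls 1 -> 0 on [0.5, 1]."""
    return 2.0 * relu(x) - 4.0 * relu(x - 0.5)


def deep_tent(x, depth):
    """Compose the same two-unit layer `depth` times."""
    for _ in range(depth):
        x = tent(x)
    return x


def count_linear_pieces(f, n_grid=4096):
    """Count monotone (here: linear) pieces of f on [0, 1] by tracking slope sign flips."""
    ys = [f(i / n_grid) for i in range(n_grid + 1)]
    pieces, prev_sign = 1, None
    for a, b in zip(ys, ys[1:]):
        sign = (b > a) - (b < a)
        if sign == 0:
            continue
        if prev_sign is not None and sign != prev_sign:
            pieces += 1
        prev_sign = sign
    return pieces


for depth in (1, 2, 3, 5):
    pieces = count_linear_pieces(lambda x, d=depth: deep_tent(x, d))
    print("depth", depth, "units", 2 * depth, "linear pieces", pieces)
```

The piece count grows as 2 to the power of the depth while the unit count grows linearly. This is consistent with the quote: depth does not add capacity in general, it helps when the target really is a composition of many operations.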

Boney then comes full circle. He explains that one of the reasons neural network research was abandoned (once again) in the late 90s was that the optimization problem is non-convex. The realization from work in the 80s and 90s that neural networks have an exponential number of local minima, the risk that networks get stuck in poor solutions, and the breakout success of kernel machines all contributed to this decline. Recently, evidence has emerged that non-convexity may be a non-issue for neural networks.

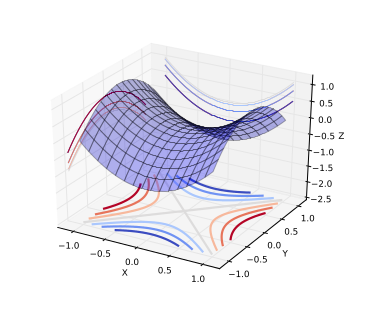

"A saddle point is illustrated in the image above. In a global or local minima, all the directions are going up and in a global or local maxima, all the directions are going down."

Saddle Points.

"Let us consider the optimization problem in low dimensions vs high dimensions. In low dimensions, it is true that there exists lots of local minima. However in high dimensions, local minima are not really the critical points that are the most prevalent in points of interest. When we optimize neural networks or any high dimensional function, for most of the trajectory we optimize, the critical points(the points where the derivative is zero or close to zero) are saddle points. Saddle points, unlike local minima, are easily escapable."

The intuition with saddle points is that, at a local minimum close to the global minimum, all directions climb upward; going further downward is not possible. Local minima exist, but they are very close to the global minimum in terms of objective value, and theoretical results suggest that, for some large families of functions, probability concentrates around a relationship between the index of the critical points and the value of the objective function. The index is the fraction of directions that move downward: an index of 0 is a local minimum, an index of 1 is a local maximum, and any value in between is a saddle point.

Boney goes on to say that there has been empirical validation corroborating this relationship between index and objective function, and that, while there is no proof the results apply to neural network optimization, some evidence suggests that the observed behavior may well correspond to the theoretical results. Stochastic gradient descent, in practice, almost always escapes from surfaces that are not local minima.
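The index and the escape behavior described above can be sketched on a small hand-picked function. Everything here is an assumption made for illustration: the function f(x, y) = x² + y⁴/4 − y²/2, the learning rate, and the tiny initial offset in y, which stands in for the noise of stochastic gradient descent. This function has a saddle at (0, 0) and minima at (0, ±1).

```python
def gradient(x, y):
    """Gradient of the toy objective f(x, y) = x**2 + y**4 / 4 - y**2 / 2."""
    return 2.0 * x, y ** 3 - y


def hessian_eigenvalues(x, y):
    """The Hessian of this f is diagonal, so its eigenvalues are the diagonal entries."""
    return [2.0, 3.0 * y ** 2 - 1.0]


def index(eigenvalues):
    """Fraction of directions that curve downward (negative eigenvalues)."""
    return sum(1 for e in eigenvalues if e < 0) / len(eigenvalues)


def gradient_descent(x, y, lr=0.1, steps=500):
    """Plain gradient descent; returns the final point."""
    for _ in range(steps):
        gx, gy = gradient(x, y)
        x, y = x - lr * gx, y - lr * gy
    return x, y


print("index at (0, 0):", index(hessian_eigenvalues(0.0, 0.0)))  # saddle
print("index at (0, 1):", index(hessian_eigenvalues(0.0, 1.0)))  # minimum

# Start almost on the line y = 0 that leads straight into the saddle;
# the 1e-6 offset plays the role of a small random perturbation.
print("end point:", gradient_descent(1.0, 1e-6))
```

The saddle has index 0.5 (one of two directions goes down) and the minimum has index 0. The descent first heads toward the saddle, then the tiny offset grows along the downward direction and the iterate ends near the minimum at (0, 1). Started with y exactly 0, the same loop would stall at the saddle, which is why some perturbation matters.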

This all suggests that local minima may not, in fact, be an issue: most of the critical points encountered are saddle points, which can be escaped.

Boney follows up his saddle point discussion by pointing out a few other priors that work with deep distributed representations: human learning, semi-supervised learning, and multi-task learning. He then lists a few related papers on saddle points.

Rinu Boney has written a detailed piece on the motivations for deep learning, including a good discussion of saddle points, all of which is difficult to do justice to with a few quotes and some summarization. If you are interested in a deeper discussion of the above points, visit Boney's blog and read the insightful and well-written piece yourself.

Bio: Matthew Mayo is a computer science graduate student currently working on his thesis on parallelizing machine learning algorithms. He is also a student of data mining, a data enthusiast, and an aspiring machine learning scientist.
