Starting spark-shell locally

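Spark 1.3 is a milestone release with many new features, so it is worth studying closely. Spark 1.3 requires Scala 2.10.x, but the system's default Scala was 2.9 and had to be upgraded (see "Installing Scala 2.10.x on Ubuntu"). Once the Scala environment is configured, download the CDH build of Spark.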

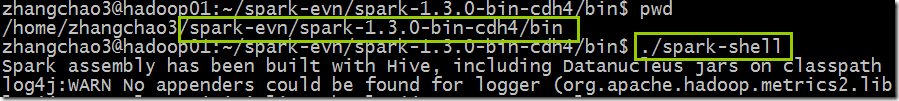
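
The Scala 2.10.x requirement above can be checked from the shell before going further. This is a minimal bash sketch, assuming `scala -version` prints a line of the form `Scala code runner version 2.10.4 -- Copyright ...` (the Scala 2.x runner writes it to stderr, hence the `2>&1`). The helpers `scala_version_from_banner` and `is_scala_210` are hypothetical and not part of Scala or Spark.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract the version number from a `scala -version` line,
# e.g. "Scala code runner version 2.10.4 -- Copyright 2002-2013, LAMP/EPFL".
scala_version_from_banner() {
  sed -n 's/.*version \([0-9][0-9.]*\).*/\1/p' <<< "$1"
}

# Hypothetical helper: succeed (status 0) only for a 2.10.x version string.
is_scala_210() {
  case "$1" in
    2.10.*) return 0 ;;
    *) return 1 ;;
  esac
}

# Check the scala on PATH, if one is installed (the version line goes to stderr).
if command -v scala >/dev/null 2>&1; then
  version=$(scala_version_from_banner "$(scala -version 2>&1)")
  if is_scala_210 "$version"; then
    echo "Scala $version: OK for Spark 1.3"
  else
    echo "Scala ${version:-unknown}: upgrade to 2.10.x" >&2
  fi
fi
```

On a machine still on the default 2.9 runner, this would report an upgrade is needed.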

After the download finishes, extract the archive and run ./spark-shell from the bin directory.
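
The extract-and-run steps can be wrapped in a small bash function. This is a sketch under assumptions: the archive name `spark-1.3.0-bin-cdh4.tgz` is a guess based on the directory name in the log below, and the tarball is assumed to unpack into a directory with the same base name. `spark_dir_for` and `launch_spark_shell` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Hypothetical helper: derive the unpacked directory name by stripping a
# .tgz or .tar.gz suffix (assumes the tarball's top directory has that name).
spark_dir_for() {
  local name
  name=$(basename "$1")
  name=${name%.tgz}
  name=${name%.tar.gz}
  printf '%s\n' "$name"
}

# Hypothetical helper: extract the archive next to itself, then start
# spark-shell from the bin directory.
launch_spark_shell() {
  local archive=$1 parent dir
  if [ ! -f "$archive" ]; then
    echo "archive not found: $archive" >&2
    return 1
  fi
  parent=$(dirname "$archive")
  dir=$(spark_dir_for "$archive")
  tar -xzf "$archive" -C "$parent" || return 1
  # Run in a subshell so the caller's working directory is left unchanged.
  (cd "$parent/$dir/bin" && ./spark-shell)
}
```

For example, `launch_spark_shell ~/spark-evn/spark-1.3.0-bin-cdh4.tgz` would unpack into `~/spark-evn/` and start the shell from `spark-1.3.0-bin-cdh4/bin`, matching the prompt in the log.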

The log output is as follows:

    zhangchao3@hadoop01:~/spark-evn/spark-1.3.0-bin-cdh4/bin$ ./spark-shell
    Spark assembly has been built with Hive, including Datanucleus jars on classpath
    log4j:WARN No appenders could be found for logger (org.apache.hadoop.metrics2.lib.MutableMetricsFactory).
    log4j:WARN Please initialize the log4j system properly.
    log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.
    Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties
    15/04/14 00:03:30 INFO SecurityManager: Changing view acls to: zhangchao3
    15/04/14 00:03:30 INFO SecurityManager: Changing modify acls to: zhangchao3
    15/04/14 00:03:30 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(zhangchao3); users with modify permissions: Set(zhangchao3)
    15/04/14 00:03:30 INFO HttpServer: Starting HTTP Server
    15/04/14 00:03:30 INFO Server: jetty-8.y.z-SNAPSHOT
    15/04/14 00:03:30 INFO AbstractConnector: Started SocketConnector@0.0.0.0:45918
    15/04/14 00:03:30 INFO Utils: Successfully started service 'HTTP class server' on port 45918.
    Welcome to
          ____              __
         / __/__  ___ _____/ /__
        _\ \/ _ \/ _ `/ __/  '_/
       /___/ .__/\_,_/_/ /_/\_\   version 1.3.0
          /_/

    Using Scala version 2.10.4 (OpenJDK 64-Bit Server VM, Java 1.7.0_75)
    Type in expressions to have them evaluated.
    Type :help for more information.
    15/04/14 00:03:33 WARN Utils: Your hostname, hadoop01 resolves to a loopback address: 127.0.1.1; using 172.18.147.71 instead (on interface em1)
    15/04/14 00:03:33 WARN Utils: Set SPARK_LOCAL_IP if you need to bind to another address
    15/04/14 00:03:33 INFO SparkContext: Running Spark version 1.3.0
    15/04/14 00:03:33 INFO SecurityManager: Changing view acls to: zhangchao3
    15/04/14 00:03:33 INFO SecurityManager: Changing modify acls to: zhangchao3
    15/04/14 00:03:33 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(zhangchao3); users with modify permissions: Set(zhangchao3)
    15/04/14 00:03:33 INFO Slf4jLogger: Slf4jLogger started
    15/04/14 00:03:33 INFO Remoting: Starting remoting
    15/04/14 00:03:33 INFO Remoting: Remoting started; listening on addresses :[akka.tcp://sparkDriver@172.18.147.71:51629]
    15/04/14 00:03:33 INFO Utils: Successfully started service 'sparkDriver' on port 51629.
    15/04/14 00:03:33 INFO SparkEnv: Registering MapOutputTracker
    15/04/14 00:03:33 INFO SparkEnv: Registering BlockManagerMaster
    15/04/14 00:03:33 INFO DiskBlockManager: Created local directory at /tmp/spark-d398c8f3-6345-41f9-a712-36cad4a45e67/blockmgr-255070a6-19a9-49a5-a117-e4e8733c250a
    15/04/14 00:03:33 INFO MemoryStore: MemoryStore started with capacity 265.4 MB
    15/04/14 00:03:33 INFO HttpFileServer: HTTP File server directory is /tmp/spark-296eb142-92fc-46e9-bea8-f6065aa8f49d/httpd-4d6e4295-dd96-48bc-84b8-c26815a9364f
    15/04/14 00:03:33 INFO HttpServer: Starting HTTP Server
    15/04/14 00:03:33 INFO Server: jetty-8.y.z-SNAPSHOT
    15/04/14 00:03:33 INFO AbstractConnector: Started SocketConnector@0.0.0.0:56529
    15/04/14 00:03:33 INFO Utils: Successfully started service 'HTTP file server' on port 56529.
    15/04/14 00:03:33 INFO SparkEnv: Registering OutputCommitCoordinator
    15/04/14 00:03:33 INFO Server: jetty-8.y.z-SNAPSHOT
    15/04/14 00:03:33 INFO AbstractConnector: Started SelectChannelConnector@0.0.0.0:4040
    15/04/14 00:03:33 INFO Utils: Successfully started service 'SparkUI' on port 4040.
    15/04/14 00:03:33 INFO SparkUI: Started SparkUI at http://172.18.147.71:4040
    15/04/14 00:03:33 INFO Executor: Starting executor ID <driver> on host localhost
    15/04/14 00:03:33 INFO Executor: Using REPL class URI: http://172.18.147.71:45918
    15/04/14 00:03:33 INFO AkkaUtils: Connecting to HeartbeatReceiver: akka.tcp://sparkDriver@172.18.147.71:51629/user/HeartbeatReceiver
    15/04/14 00:03:33 INFO NettyBlockTransferService: Server created on 55429
    15/04/14 00:03:33 INFO BlockManagerMaster: Trying to register BlockManager
    15/04/14 00:03:33 INFO BlockManagerMasterActor: Registering block manager localhost:55429 with 265.4 MB RAM, BlockManagerId(<driver>, localhost, 55429)
    15/04/14 00:03:33 INFO BlockManagerMaster: Registered BlockManager
    15/04/14 00:03:34 INFO SparkILoop: Created spark context..
    Spark context available as sc.
    15/04/14 00:03:34 INFO SparkILoop: Created sql context (with Hive support)..
    SQL context available as sqlContext.

    scala>
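
Two WARN lines in the log report that the hostname hadoop01 resolves to the loopback address 127.0.1.1, so Spark fell back to 172.18.147.71 on em1, and they suggest setting SPARK_LOCAL_IP to bind to another address. SPARK_LOCAL_IP is an environment variable Spark reads (it also appears in conf/spark-env.sh.template). A minimal sketch for picking a non-loopback IPv4 address follows, assuming the Debian/Ubuntu `hostname -I` option is available to list the host's addresses; `first_non_loopback` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first argument that looks like an IPv4
# address outside 127.0.0.0/8; return 1 if there is none.
first_non_loopback() {
  local ip
  for ip in "$@"; do
    case "$ip" in
      127.*) continue ;;                          # loopback, e.g. 127.0.1.1
      *.*.*.*) printf '%s\n' "$ip"; return 0 ;;   # dotted quad: use it
    esac                                          # anything else (IPv6): skip
  done
  return 1
}

# Usage (not run here): bind the driver to a LAN address for this session.
#   ip=$(first_non_loopback $(hostname -I)) && export SPARK_LOCAL_IP="$ip"
#   ./spark-shell
# Or persist it for every launch (run from the bin directory):
#   echo 'export SPARK_LOCAL_IP=172.18.147.71' >> ../conf/spark-env.sh
```

Persisting the value in conf/spark-env.sh avoids re-exporting it in every terminal, since the Spark launch scripts source that file.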

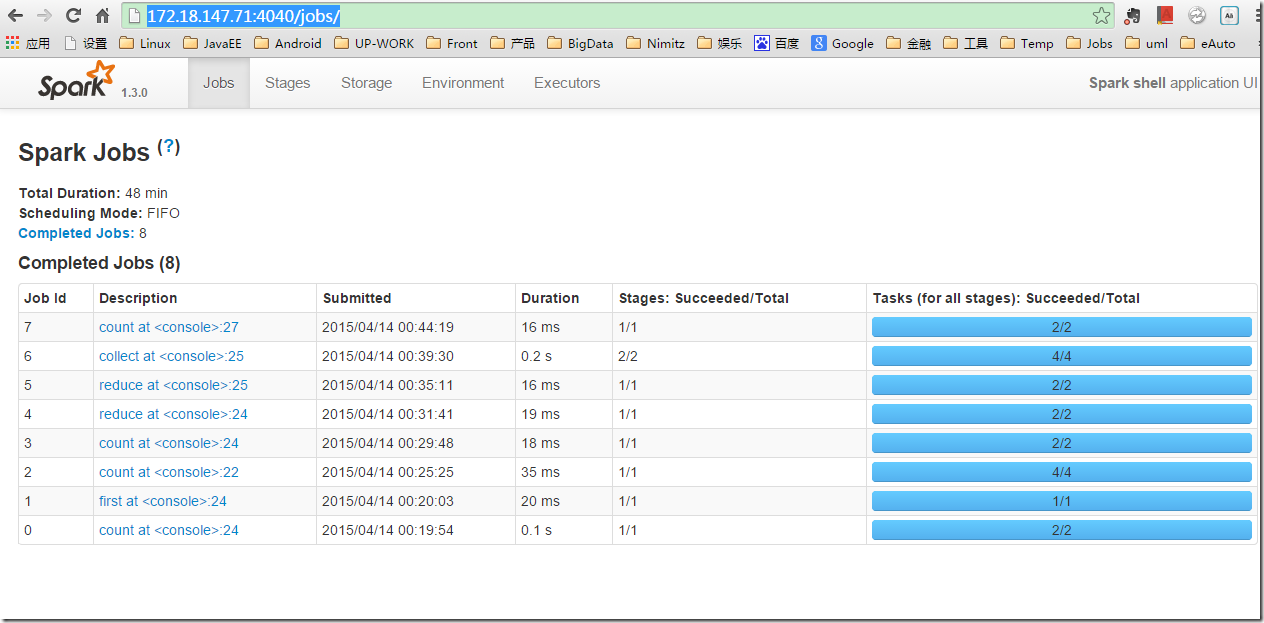

The web UI at http://172.18.147.71:4040/jobs/ shows the status of the running Spark application, as in the screenshot above.
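
To check the UI from a script instead of a browser, the sketch below polls the jobs page with curl until it answers. It assumes curl is installed and that the UI listens on port 4040 as in the 'SparkUI' log line (if 4040 is already taken, Spark tries the next port, so check that line). `spark_ui_url` and `wait_for_ui` are hypothetical helpers.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the jobs-page URL of the Spark web UI.
# Defaults: host localhost, port 4040 (the port reported in the log).
spark_ui_url() {
  printf 'http://%s:%s/jobs/\n' "${1:-localhost}" "${2:-4040}"
}

# Hypothetical helper: poll the UI once per second, up to N tries (default 10).
# `curl -sf` is silent and treats HTTP status 400 and above as failure.
wait_for_ui() {
  local url tries=${3:-10}
  url=$(spark_ui_url "$1" "$2")
  while [ "$tries" -gt 0 ]; do
    if curl -sf -o /dev/null "$url"; then
      echo "Spark UI is up at $url"
      return 0
    fi
    tries=$((tries - 1))
    sleep 1
  done
  echo "no response from $url" >&2
  return 1
}
```

For example, running `wait_for_ui 172.18.147.71` in a second terminal while spark-shell starts would report once the jobs page responds.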
