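Installing Hive on Hadoop

1. Preface

Note: Hive requires an existing Hadoop cluster. Hive only needs to be installed on the cluster's NameNode machines (on every NameNode, if there is more than one); it does not have to be installed on the DataNode machines. Editing the configuration files does not require Hadoop to be running, but this article uses hadoop commands, and both those commands and Hive itself need a running Hadoop. It is therefore best to start Hadoop first and then follow the steps below. How to install and start a Hadoop cluster is not covered in this article.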

Hive version to download: hive-2.3.4/

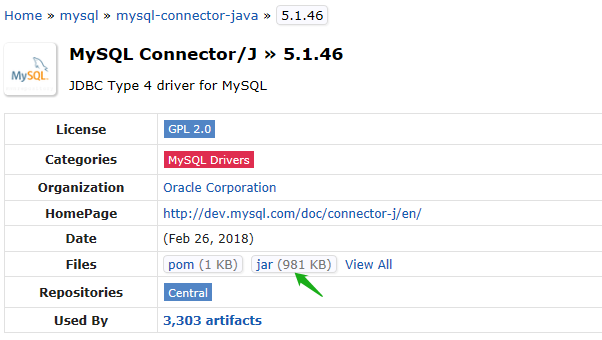

Download mysql-connector-java-5.1.46.jar:

https://mvnrepository.com/artifact/mysql/mysql-connector-java/5.1.46

Upload both packages to /home/hadoop/hive:

-rw-r--r--. 1 hadoop hadoop 232234292 Jan 6 06:49 apache-hive-2.3.4-bin.tar.gz

-rw-r--r--. 1 hadoop hadoop 1004838 Jan 6 06:49 mysql-connector-java-5.1.46.jar

Extract the archive:

tar -xvf apache-hive-2.3.4-bin.tar.gz

mv apache-hive-2.3.4-bin hive

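Then move mysql-connector-java-5.1.46.jar into hive/lib, the lib directory of the extracted Hive 2.3.4.

Move the hive directory to /home/hadoop, so that Hive lives in /home/hadoop/hive.

Edit /etc/profile (vi /etc/profile) and add the following: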

export HIVE_HOME=/home/hadoop/hive

export PATH=$PATH:$HIVE_HOME/bin

Apply the change:

source /etc/profile
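After sourcing the profile it is worth confirming that the new entry really is on PATH. The following is only a minimal sketch and not part of the original steps; the helper name path_contains is hypothetical, and the default HIVE_HOME is the one used in this article.

```shell
#!/bin/sh
# Hypothetical helper: succeed if directory $1 is an entry of the
# colon-separated list $2 (defaults to the current PATH).
path_contains() {
    case ":${2:-$PATH}:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Value taken from the article; adjust if Hive lives elsewhere.
HIVE_HOME=${HIVE_HOME:-/home/hadoop/hive}

if path_contains "$HIVE_HOME/bin"; then
    echo "OK: $HIVE_HOME/bin is on PATH"
else
    echo "Missing: $HIVE_HOME/bin is not on PATH; re-run 'source /etc/profile'"
fi
```

Wrapping both sides in colons lets the match work for the first and last PATH entries as well, without matching a mere prefix such as /home/hadoop/hive/bin2.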

Create the Hive configuration files from their templates (in the conf directory under $HIVE_HOME):

cp hive-env.sh.template hive-env.sh

cp hive-default.xml.template hive-site.xml

cp hive-log4j2.properties.template hive-log4j2.properties

cp hive-exec-log4j2.properties.template hive-exec-log4j2.properties
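If these steps are ever repeated, a plain cp would overwrite configuration files that have already been edited. As a hedged sketch, the four copies can be wrapped in a loop that skips any target that already exists; the function name copy_templates is made up for illustration, and the default directory is the one this article uses.

```shell
#!/bin/sh
# Sketch: create each config file from its .template without overwriting
# a file that is already there. copy_templates is a hypothetical name.
copy_templates() {
    conf_dir=$1
    for pair in \
        "hive-env.sh.template:hive-env.sh" \
        "hive-default.xml.template:hive-site.xml" \
        "hive-log4j2.properties.template:hive-log4j2.properties" \
        "hive-exec-log4j2.properties.template:hive-exec-log4j2.properties"
    do
        src=$conf_dir/${pair%%:*}   # part before the colon
        dst=$conf_dir/${pair##*:}   # part after the colon
        if [ ! -f "$src" ]; then
            echo "skip: $src not found"
        elif [ -e "$dst" ]; then
            echo "keep: $dst already exists"
        else
            cp "$src" "$dst" && echo "created: $dst"
        fi
    done
}

# Default taken from the article; override HIVE_CONF_DIR for other layouts.
CONF_DIR=${HIVE_CONF_DIR:-/home/hadoop/hive/conf}
copy_templates "$CONF_DIR"
```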

Add the following to hive-env.sh:

export JAVA_HOME=/usr/local/jdk1.8

export HADOOP_HOME=/home/hadoop/hadoop-2.7.3

export HIVE_HOME=/home/hadoop/hive

export HIVE_CONF_DIR=/home/hadoop/hive/conf

Create the directories Hive will use in HDFS and grant permissions on them.

Start Hadoop first, then run:

hdfs dfs -mkdir -p /user/hive/warehouse

hdfs dfs -mkdir -p /user/hive/tmp

hdfs dfs -mkdir -p /user/hive/log

hdfs dfs -chmod -R 777 /user/hive/warehouse

hdfs dfs -chmod -R 777 /user/hive/tmp

hdfs dfs -chmod -R 777 /user/hive/log

Verify:

[hadoop@master conf]$ hdfs dfs -ls /user/hive

Found 3 items

drwxrwxrwx - hadoop supergroup 0 2019-01-06 07:09 /user/hive/log

drwxrwxrwx - hadoop supergroup 0 2019-01-06 07:09 /user/hive/tmp

drwxrwxrwx - hadoop supergroup 0 2019-01-06 07:09 /user/hive/warehouse
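The six HDFS commands above follow one pattern, so they can be written as a loop. The sketch below is a dry run by default: the HDFS_CMD variable is an assumption of this example (not a Hadoop setting) and defaults to "echo hdfs", so the script only prints what it would do. Set HDFS_CMD=hdfs on a machine with a running cluster to execute the commands.

```shell
#!/bin/sh
# Sketch: create and open up the three HDFS directories in one loop.
# HDFS_CMD is a variable of this example only; "echo hdfs" means dry run.
HDFS_CMD=${HDFS_CMD:-echo hdfs}

make_hive_dirs() {
    for dir in /user/hive/warehouse /user/hive/tmp /user/hive/log; do
        # $HDFS_CMD is left unquoted on purpose so "echo hdfs" splits
        # into a command and its first argument.
        $HDFS_CMD dfs -mkdir -p "$dir"
        $HDFS_CMD dfs -chmod -R 777 "$dir"
    done
}

make_hive_dirs
```

Mode 777 matches the article and is convenient on a test cluster; on a shared cluster a narrower mode is usually preferable.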

Edit hive-site.xml and set the following properties:

<property>

<name>hive.exec.scratchdir</name>

<value>/user/hive/tmp</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.querylog.location</name>

<value>/user/hive/log</value>

</property>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&amp;characterEncoding=UTF-8&amp;useSSL=false</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>root</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>mysql</value> <!-- the MySQL password -->

</property>
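One detail of the ConnectionURL value is easy to miss: inside an XML file every & must be written as &amp;, otherwise hive-site.xml will not parse. The following sketch shows one way to escape a JDBC URL before pasting it into a value element; xml_escape is a hypothetical helper, not a Hive tool.

```shell
#!/bin/sh
# Hypothetical helper: escape the characters that are special in XML text
# so that a JDBC URL can be placed inside a <value> element.
xml_escape() {
    # '&' must be handled first so the entities added later are not re-escaped.
    printf '%s' "$1" | sed -e 's/&/\&amp;/g' -e 's/</\&lt;/g' -e 's/>/\&gt;/g'
}

url='jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&characterEncoding=UTF-8&useSSL=false'
echo "<value>$(xml_escape "$url")</value>"
```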

Create a local tmp directory and set its permissions:

mkdir -p /home/hadoop/apps/hive/tmp

chmod -R 777 /home/hadoop/apps/hive/tmp

In hive-site.xml, replace every ${system:java.io.tmpdir} with Hive's temporary directory, /home/hadoop/apps/hive/tmp.

Change every ${system:user.name} to ${user.name}.
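The file copied from hive-default.xml.template contains these placeholders in many places, so editing them by hand is tedious. As a hedged sketch, both replacements can be done with sed. The function name fix_placeholders is made up for this example, and the demo runs on a throwaway file rather than the real configuration.

```shell
#!/bin/sh
# Sketch: rewrite the two ${system:...} placeholders in a hive-site.xml.
# fix_placeholders is a hypothetical name. The temporary directory must
# not contain '|' or '&', which are special in this sed expression.
fix_placeholders() {
    site_file=$1
    hive_tmp=$2
    sed -e 's|[$]{system:java[.]io[.]tmpdir}|'"$hive_tmp"'|g' \
        -e 's|[$]{system:user[.]name}|${user.name}|g' \
        "$site_file" > "$site_file.new" && mv "$site_file.new" "$site_file"
}

# Demo on a temporary file; point the function at the real file once
# the result looks right.
demo=$(mktemp)
echo '<value>${system:java.io.tmpdir}/${system:user.name}</value>' > "$demo"
fix_placeholders "$demo" /home/hadoop/apps/hive/tmp
cat "$demo"
rm -f "$demo"
```

Writing to a new file and renaming it avoids sed -i, whose syntax differs between GNU and BSD sed.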

Initialize the metastore schema:

[hadoop@master conf]$ schematool -dbType mysql -initSchema

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/home/hadoop/hive/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/home/hadoop/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

Metastore connection URL: jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&characterEncoding=UTF-8&useSSL=false

Metastore Connection Driver : com.mysql.jdbc.Driver

Metastore connection User: root

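The SLF4J messages are caused by two jars providing the same binding. Delete one of them and run the initialization again: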

[hadoop@master tmp]$ cd /home/hadoop/hive/lib/

[hadoop@master lib]$ ll log4j-slf4j-impl-2.6.2.jar

-rw-r--r--. 1 hadoop hadoop 22927 Jan 6 06:57 log4j-slf4j-impl-2.6.2.jar

[hadoop@master lib]$ rm -rf log4j-slf4j-impl-2.6.2.jar

[hadoop@master conf]$ schematool -dbType mysql -initSchema

Metastore connection URL: jdbc:mysql://localhost:3306/hive?createDatabaseIfNotExist=true&characterEncoding=UTF-8&useSSL=false

Metastore Connection Driver : com.mysql.jdbc.Driver

Metastore connection User: root

Starting metastore schema initialization to 2.3.0

Initialization script hive-schema-2.3.0.mysql.sql

Initialization script completed

schemaTool completed
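Deleting the duplicate jar with rm cannot be undone. A more cautious variant of that clean-up step is to move the jar into a backup directory, so it can be restored if something else turns out to need it. The sketch below assumes nothing beyond standard shell tools; set_aside_binding and the backup path in the usage comment are hypothetical.

```shell
#!/bin/sh
# Sketch: move the duplicate SLF4J binding jar into a backup directory
# instead of deleting it. set_aside_binding is a hypothetical name.
set_aside_binding() {
    lib_dir=$1
    backup_dir=$2
    mkdir -p "$backup_dir"
    for jar in "$lib_dir"/log4j-slf4j-impl-*.jar; do
        [ -e "$jar" ] || continue      # the glob matched nothing
        mv "$jar" "$backup_dir"/ && echo "moved: $jar"
    done
}

# Usage with the article's layout (the backup path is an assumption):
#   set_aside_binding /home/hadoop/hive/lib /home/hadoop/hive/lib-disabled
```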

Starting Hive, method 1: the hive command line

[hadoop@master conf]$ hive

which: no hbase in (/usr/local/jdk1.8/bin:/usr/local/jdk1.8/jre/bin:/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/sbin:/home/hadoop/hive/bin:/home/hadoop/hadoop-2.7.3/sbin:/home/hadoop/hadoop-2.7.3/bin)

Logging initialized using configuration in file:/home/hadoop/hive/conf/hive-log4j2.properties Async: true

Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

hive> show databases;

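Starting Hive, method 2: beeline

Beeline connects through HiveServer2.

Hadoop's core-site.xml must be configured first. Add the following to the file and save it: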

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>
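In these property names the middle component (hadoop here) is the OS user that runs HiveServer2, so on a machine where Hive runs as a different user the names must change accordingly. The following sketch prints the two properties for any user name; proxyuser_properties is a hypothetical helper, and the wildcard values simply mirror the ones above.

```shell
#!/bin/sh
# Sketch: print the two proxy-user properties for a given OS user.
# proxyuser_properties is a hypothetical name; the property-name pattern
# hadoop.proxyuser.<user>.groups / .hosts is the one shown above.
proxyuser_properties() {
    user=$1
    for kind in groups hosts; do
        printf '<property>\n  <name>hadoop.proxyuser.%s.%s</name>\n  <value>*</value>\n</property>\n' \
            "$user" "$kind"
    done
}

# Print the snippet for the user running this script.
proxyuser_properties "$(id -un)"
```

Hadoop reads core-site.xml at startup, so the change only takes effect after the Hadoop daemons have been restarted.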

Start hiveserver2 first:

[hadoop@master conf]$ hiveserver2

which: no hbase in (/usr/local/jdk1.8/bin:/usr/local/jdk1.8/jre/bin:/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/sbin:/home/hadoop/hive/bin:/home/hadoop/hadoop-2.7.3/sbin:/home/hadoop/hadoop-2.7.3/bin)

-- ::: Starting HiveServer2

Then, in another terminal, start beeline:

[hadoop@master hadoop]$ beeline

Beeline version 2.3.4 by Apache Hive

beeline> !connect jdbc:hive2://master:10000

Connecting to jdbc:hive2://master:10000

Enter username for jdbc:hive2://master:10000: hadoop

Enter password for jdbc:hive2://master:10000: ******

Connected to: Apache Hive (version 2.3.4)

Driver: Hive JDBC (version 2.3.4)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://master:10000> show databases;

+----------------+

| database_name |

+----------------+

| default |

+----------------+

1 row selected (1.196 seconds)

0: jdbc:hive2://master:10000>
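HiveServer2 needs some time after "Starting HiveServer2" before it accepts connections on port 10000, so a beeline connection attempted too early is refused. In scripts this can be handled with a small retry loop. The sketch below is a generic helper under that assumption; retry is a hypothetical name, and the beeline line in the usage comment needs a running HiveServer2.

```shell
#!/bin/sh
# Hypothetical helper: run a command up to $1 times, sleeping $2 seconds
# between attempts, and stop at the first success.
retry() {
    attempts=$1
    delay=$2
    shift 2
    n=1
    while [ "$n" -le "$attempts" ]; do
        if "$@"; then
            return 0
        fi
        echo "attempt $n/$attempts failed" >&2
        n=$((n + 1))
        if [ "$n" -le "$attempts" ]; then
            sleep "$delay"
        fi
    done
    return 1
}

# Usage (host and port as in the article):
#   retry 30 2 beeline -u jdbc:hive2://master:10000 -n hadoop -e 'show databases;'
```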

Starting Hive, method 3: Web UI

Step 1: configure the web page

[hadoop@master conf]$ vi hive-site.xml

<property>

<name>hive.server2.webui.host</name>

<value>192.168.1.30</value> <!-- the host's IP address -->

<description>The host address the HiveServer2 WebUI will listen on</description>

</property>

<property>

<name>hive.server2.webui.port</name>

<value>10002</value>

<description>The port the HiveServer2 WebUI will listen on. This can be set to 0 or a negative integer to disable the web UI</description>

</property>

Step 2: remember that after changing hive-site.xml, the HiveServer2 service must be restarted.

[hadoop@master conf]$ hive --service hiveserver2 &

[1] 3731

[hadoop@master conf]$ which: no hbase in (/usr/local/jdk1.8/bin:/usr/local/jdk1.8/jre/bin:/usr/local/bin:/bin:/usr/bin:/usr/local/sbin:/usr/sbin:/sbin:/home/hadoop/hive/bin:/home/hadoop/hadoop-2.7.3/sbin:/home/hadoop/hadoop-2.7.3/bin)

2019-01-08 10:38:40: Starting HiveServer2

......
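A process started with a bare & stays attached to the terminal's session and writes its log lines into the prompt, as seen above. One common alternative is nohup with the output redirected to a file and the PID saved for later. This is only a sketch: start_detached and the file locations in the usage comment are assumptions, and whether the saved PID is that of the Java server depends on the launcher script replacing itself with the server process.

```shell
#!/bin/sh
# Sketch: start a long-running command detached from the terminal,
# send its output to a log file and record its PID.
# start_detached is a hypothetical name.
start_detached() {
    log_file=$1
    pid_file=$2
    shift 2
    nohup "$@" > "$log_file" 2>&1 &
    echo $! > "$pid_file"
}

# Usage (paths are illustrative):
#   start_detached /tmp/hiveserver2.log /tmp/hiveserver2.pid hive --service hiveserver2
#   tail -f /tmp/hiveserver2.log
```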

Step 3: open the following URL in a browser: http://192.168.1.30:10002/

[hadoop@master bin]$ beeline

Beeline version 2.3.4 by Apache Hive

beeline> !connect jdbc:hive2://master:10000 hadoop hadoop org.apache.hive.jdbc.HiveDriver

Connecting to jdbc:hive2://master:10000

Connected to: Apache Hive (version 2.3.4)

Driver: Hive JDBC (version 2.3.4)

Transaction isolation: TRANSACTION_REPEATABLE_READ

0: jdbc:hive2://master:10000> show databases;

OK

+----------------+

| database_name |

+----------------+

| db_hive |

| default |

+----------------+

2 rows selected (1.555 seconds)

0: jdbc:hive2://master:10000> use db_hive;

OK

No rows affected (0.153 seconds)

0: jdbc:hive2://master:10000> show tables;

OK

+-----------+

| tab_name |

+-----------+

| u2 |

| u4 |

+-----------+

2 rows selected (0.159 seconds)

0: jdbc:hive2://master:10000> select * from u2;

OK

+--------+----------+---------+-----------+---------+

| u2.id | u2.name | u2.age | u2.month | u2.day |

+--------+----------+---------+-----------+---------+

| | xm1 | | | |

| | xm2 | | | |

| | xm3 | | | |

| | xh4 | | | |

| | xh5 | | | |

| | xh6 | | | |

| | xh7 | | | |

| | xh8 | | | |

| | xh9 | | | |

+--------+----------+---------+-----------+---------+

9 rows selected (2.672 seconds)

0: jdbc:hive2://master:10000>

[hadoop@master bin]$ beeline -u jdbc:hive2://master:10000/default

Connecting to jdbc:hive2://master:10000/default

Connected to: Apache Hive (version 2.3.4)

Driver: Hive JDBC (version 2.3.4)

Transaction isolation: TRANSACTION_REPEATABLE_READ

Beeline version 2.3.4 by Apache Hive

0: jdbc:hive2://master:10000/default> show databases;

OK

+----------------+

| database_name |

+----------------+

| db_hive |

| default |

+----------------+

2 rows selected (0.208 seconds)
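The sessions above use JDBC URLs of the form jdbc:hive2://host:port/database. For scripted, non-interactive use it can help to build that URL in one place. The following is a small sketch with a hypothetical helper, hive_jdbc_url, whose defaults (port 10000, database default) are the values used in this article.

```shell
#!/bin/sh
# Hypothetical helper: assemble a HiveServer2 JDBC URL of the form
# jdbc:hive2://<host>:<port>/<database>.
hive_jdbc_url() {
    host=$1
    port=${2:-10000}     # port used in the sessions above
    db=${3:-default}     # database used in the sessions above
    printf 'jdbc:hive2://%s:%s/%s\n' "$host" "$port" "$db"
}

hive_jdbc_url master

# Non-interactive use (needs a running HiveServer2):
#   beeline -u "$(hive_jdbc_url master 10000 db_hive)" -n hadoop -e 'show tables;'
```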

The end.
