吴裕雄 Python neural networks: implementing the LeNet-5 model in TensorFlow for the MNIST handwritten digit dataset

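import os

import numpy as np
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Clear any graph left over from a previous run (TensorFlow 1.x API).
tf.reset_default_graph()

# Network parameters.
INPUT_NODE = 784
OUTPUT_NODE = 10

IMAGE_SIZE = 28
NUM_CHANNELS = 1
NUM_LABELS = 10

# Depth and filter size of the first convolutional layer.
CONV1_DEEP = 32
CONV1_SIZE = 5
# Depth and filter size of the second convolutional layer.
CONV2_DEEP = 64
CONV2_SIZE = 5
# Number of nodes in the first fully connected layer.
FC_SIZE = 512


def inference(input_tensor, train, regularizer):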

    # Layer 1: 5x5 convolution, 32 filters, stride 1, 'SAME' padding.
    with tf.variable_scope('layer1-conv1'):
        conv1_weights = tf.get_variable(
            "weight", [CONV1_SIZE, CONV1_SIZE, NUM_CHANNELS, CONV1_DEEP],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv1_biases = tf.get_variable(
            "bias", [CONV1_DEEP], initializer=tf.constant_initializer(0.0))
        conv1 = tf.nn.conv2d(
            input_tensor, conv1_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_biases))

    # Layer 2: 2x2 max pooling, stride 2.
    with tf.name_scope("layer2-pool1"):
        pool1 = tf.nn.max_pool(
            relu1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

    # Layer 3: 5x5 convolution, 64 filters, stride 1, 'SAME' padding.
    with tf.variable_scope("layer3-conv2"):
        conv2_weights = tf.get_variable(
            "weight", [CONV2_SIZE, CONV2_SIZE, CONV1_DEEP, CONV2_DEEP],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv2_biases = tf.get_variable(
            "bias", [CONV2_DEEP], initializer=tf.constant_initializer(0.0))
        conv2 = tf.nn.conv2d(
            pool1, conv2_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_biases))

    # Layer 4: 2x2 max pooling, then flatten to [batch, nodes].
    with tf.name_scope("layer4-pool2"):
        pool2 = tf.nn.max_pool(
            relu2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        pool_shape = pool2.get_shape().as_list()
        nodes = pool_shape[1] * pool_shape[2] * pool_shape[3]
        reshaped = tf.reshape(pool2, [pool_shape[0], nodes])

    # Layer 5: fully connected layer with optional dropout.
    with tf.variable_scope('layer5-fc1'):
        fc1_weights = tf.get_variable(
            "weight", [nodes, FC_SIZE],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc1_weights))
        fc1_biases = tf.get_variable(
            "bias", [FC_SIZE], initializer=tf.constant_initializer(0.1))
        fc1 = tf.nn.relu(tf.matmul(reshaped, fc1_weights) + fc1_biases)
        if train:
            fc1 = tf.nn.dropout(fc1, 0.5)

    # Layer 6: fully connected output layer producing the logits.
    with tf.variable_scope('layer6-fc2'):
        fc2_weights = tf.get_variable(
            "weight", [FC_SIZE, NUM_LABELS],
            initializer=tf.truncated_normal_initializer(stddev=0.1))
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc2_weights))
        fc2_biases = tf.get_variable(
            "bias", [NUM_LABELS], initializer=tf.constant_initializer(0.1))
        logit = tf.matmul(fc1, fc2_weights) + fc2_biases

    return logit


BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.01
LEARNING_RATE_DECAY = 0.99
REGULARIZATION_RATE = 0.0001
TRAINING_STEPS = 6000
MOVING_AVERAGE_DECAY = 0.99


def train(mnist):

# 定义输出为4维矩阵的placeholder

x = tf.placeholder(tf.float32, [BATCH_SIZE,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS],name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)

y = inference(x,False,regularizer)

global_step = tf.Variable(0, trainable=False) # 定义损失函数、学习率、滑动平均操作以及训练过程。

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

learning_rate = tf.train.exponential_decay(LEARNING_RATE_BASE,global_step,mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,staircase=True) train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train')

# 初始化TensorFlow持久化类。

saver = tf.train.Saver()

with tf.Session() as sess:

tf.global_variables_initializer().run()

for i in range(TRAINING_STEPS):

xs, ys = mnist.train.next_batch(BATCH_SIZE)

reshaped_xs = np.reshape(xs, (BATCH_SIZE,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS))

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: reshaped_xs, y_: ys})

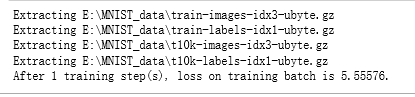

if i % 1000 == 0:

print("After %d training step(s), loss on training batch is %g." % (step, loss_value)) def main(argv=None):

    mnist = input_data.read_data_sets("E:\\MNIST_data\\", one_hot=True)
    train(mnist)


if __name__ == '__main__':
    main()
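
The first fully connected layer's input size (`nodes` in `inference`) follows from the layer strides. The sketch below traces the spatial size through the two convolution and two pooling layers in plain Python, without TensorFlow. It assumes that `'SAME'` padding yields an output size of ceil(input / stride); the helper names `same_out` and `trace_shapes` are introduced here for illustration and are not part of the original script.

```python
import math

# Constants copied from the script above.
IMAGE_SIZE = 28
CONV2_DEEP = 64


def same_out(size, stride):
    """Spatial output size under 'SAME' padding: ceil(size / stride)."""
    return math.ceil(size / stride)


def trace_shapes(image_size=IMAGE_SIZE):
    """Follow one spatial dimension through conv1, pool1, conv2, pool2."""
    side = same_out(image_size, 1)  # layer1-conv1, stride 1
    side = same_out(side, 2)        # layer2-pool1, stride 2
    side = same_out(side, 1)        # layer3-conv2, stride 1
    side = same_out(side, 2)        # layer4-pool2, stride 2
    nodes = side * side * CONV2_DEEP  # flattened length fed to layer5-fc1
    return side, nodes


side, nodes = trace_shapes()
print(side, nodes)  # 7 3136
```

Under these assumptions the `fc1_weights` matrix has shape `[3136, 512]`, which is where most of the model's parameters sit.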

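The learning-rate schedule used in `train` can also be checked by hand. With `staircase=True`, `tf.train.exponential_decay` computes `base * decay ** floor(global_step / decay_steps)`. The sketch below reproduces that formula in plain Python. It assumes the training split holds 55000 examples (the default split of `read_data_sets`), so the rate drops once every 550 steps; `NUM_TRAIN_EXAMPLES` and `staircase_lr` are hypothetical names used only for this illustration.

```python
import math

# Constants copied from the script above.
LEARNING_RATE_BASE = 0.01
LEARNING_RATE_DECAY = 0.99
BATCH_SIZE = 100
# Assumption: size of mnist.train with the default validation split.
NUM_TRAIN_EXAMPLES = 55000


def staircase_lr(global_step,
                 base=LEARNING_RATE_BASE,
                 decay=LEARNING_RATE_DECAY,
                 num_examples=NUM_TRAIN_EXAMPLES,
                 batch_size=BATCH_SIZE):
    """Staircase exponential decay: one decay step per pass over the data."""
    decay_steps = num_examples / batch_size
    return base * decay ** math.floor(global_step / decay_steps)


for step in (0, 549, 550, 6000):
    print(step, staircase_lr(step))
```

Under that assumption, the 6000 training steps cover about 10.9 passes over the data, so the rate is multiplied by 0.99 only ten times and ends near 0.009.
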