Machine Learning Week 6 Programming Assignment

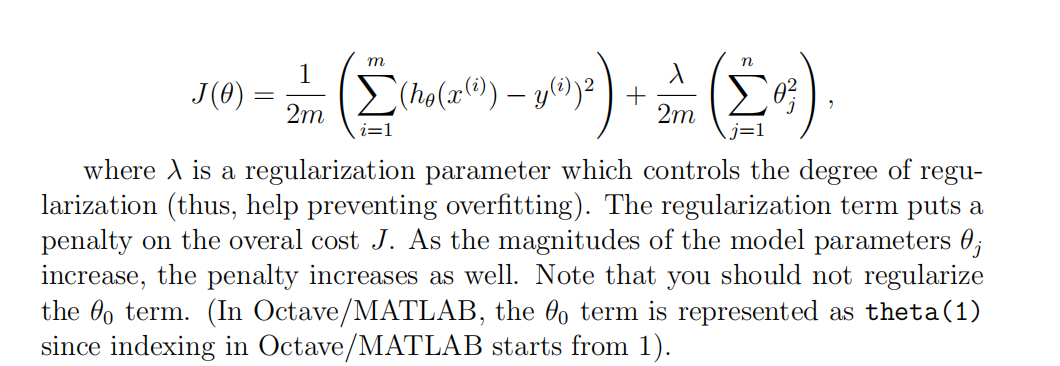

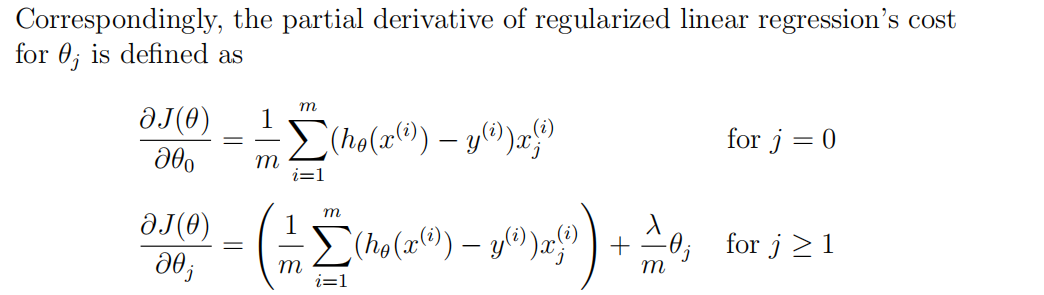

1. linearRegCostFunction

function [J, grad] = linearRegCostFunction(X, y, theta, lambda)

%LINEARREGCOSTFUNCTION Compute cost and gradient for regularized linear

%regression with multiple variables

% [J, grad] = LINEARREGCOSTFUNCTION(X, y, theta, lambda) computes the

% cost of using theta as the parameter for linear regression to fit the

% data points in X and y. Returns the cost in J and the gradient in grad

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

grad = zeros(size(theta));

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost and gradient of regularized linear

% regression for a particular choice of theta.

%

% You should set J to the cost and grad to the gradient.

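%

h = X * theta; % predictions for all m examples

% Unregularized squared-error cost

for i=1:m,

J=J+1/(2*m)*(h(i)-y(i))^2;

endfor

n = length(theta);

% Regularization term; theta(1) (the bias) is not penalized

for i=2:n,

J=J+lambda/(2*m)*theta(i)^2;

endfor

% Gradient; the bias component carries no regularization term

grad(1)=1/m*(h-y)'*X(:,1);

for i=2:n,

grad(i)=1/m*(h-y)'*X(:,i)+lambda/m*theta(i);

endfor

% =========================================================================

grad = grad(:);

end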
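The loops in linearRegCostFunction can also be written without explicit iteration. The following is a minimal vectorized sketch of the same cost and gradient, not the graded solution; the small `X`, `y`, `theta`, and `lambda` values are made up for illustration, and `reg` is a hypothetical helper variable.

```octave
% Illustrative inputs (assumed values, not from the assignment data).
X = [1 1; 1 2; 1 3];      % first column is the bias column of ones
y = [1; 2; 3];
theta = [1; 1];
lambda = 1;
m = length(y);

h = X * theta;            % predictions, m x 1

% Copy of theta with the bias entry zeroed, so it is not regularized.
reg = theta;
reg(1) = 0;

% Regularized squared-error cost and its gradient.
J = (sum((h - y) .^ 2) + lambda * sum(reg .^ 2)) / (2 * m);
grad = (X' * (h - y) + lambda * reg) / m;
```

Zeroing the first entry of `reg` plays the same role as starting the loops at `i=2`: the bias parameter contributes to neither the penalty nor the regularized part of the gradient.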

2. learningCurve

function [error_train, error_val] = ...

learningCurve(X, y, Xval, yval, lambda)

%LEARNINGCURVE Generates the train and cross validation set errors needed

%to plot a learning curve

% [error_train, error_val] = ...

% LEARNINGCURVE(X, y, Xval, yval, lambda) returns the train and

% cross validation set errors for a learning curve. In particular,

% it returns two vectors of the same length - error_train and

% error_val. Then, error_train(i) contains the training error for

% i examples (and similarly for error_val(i)).

%

% In this function, you will compute the train and test errors for

% dataset sizes from 1 up to m. In practice, when working with larger

% datasets, you might want to do this in larger intervals.

%

% Number of training examples

m = size(X, 1);

% You need to return these values correctly

error_train = zeros(m, 1);

error_val = zeros(m, 1);

% ====================== YOUR CODE HERE ======================

% Instructions: Fill in this function to return training errors in

% error_train and the cross validation errors in error_val.

% i.e., error_train(i) and

% error_val(i) should give you the errors

% obtained after training on i examples.

%

% Note: You should evaluate the training error on the first i training

% examples (i.e., X(1:i, :) and y(1:i)).

%

% For the cross-validation error, you should instead evaluate on

% the _entire_ cross validation set (Xval and yval).

%

% Note: If you are using your cost function (linearRegCostFunction)

% to compute the training and cross validation error, you should

% call the function with the lambda argument set to 0.

% Do note that you will still need to use lambda when running

% the training to obtain the theta parameters.

%

% Hint: You can loop over the examples with the following:

%

% for i = 1:m

% % Compute train/cross validation errors using training examples

% % X(1:i, :) and y(1:i), storing the result in

% % error_train(i) and error_val(i)

% ....

%

% end

%

% ---------------------- Sample Solution ----------------------

for i=1:m,

% Train on the first i examples with the given lambda

theta=trainLinearReg(X(1:i,:),y(1:i),lambda);

% Evaluate both errors with lambda = 0 so the penalty is excluded

error_train(i)=linearRegCostFunction(X(1:i,:),y(1:i),theta,0);

error_val(i)=linearRegCostFunction(Xval,yval,theta,0);

endfor

% -------------------------------------------------------------

% =========================================================================

end
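To try the learning-curve loop outside the assignment environment, here is a self-contained sketch. It makes several assumptions: `ridge_fit` is a hypothetical closed-form (normal equation) stand-in for the course's `trainLinearReg`, which uses an iterative optimizer; `sq_cost` is a hypothetical unregularized cost; and the data are synthetic and deterministic, not the assignment data set.

```octave
% Closed-form regularized least squares (assumed stand-in for trainLinearReg).
% The diag(...) term leaves the bias parameter unpenalized.
ridge_fit = @(X, y, lambda) ...
    pinv(X' * X + lambda * diag([0; ones(size(X, 2) - 1, 1)])) * (X' * y);

% Unregularized squared-error cost, i.e. the cost with lambda = 0.
sq_cost = @(X, y, theta) sum((X * theta - y) .^ 2) / (2 * size(X, 1));

% Synthetic data: a line plus a deterministic wiggle.
f = @(t) 2 * t + 1 + 0.5 * sin(3 * t);
x = (1:8)';
xv = (1.5:2:7.5)';
X = [ones(numel(x), 1), x];
y = f(x);
Xval = [ones(numel(xv), 1), xv];
yval = f(xv);

lambda = 0;
m = size(X, 1);
error_train = zeros(m, 1);
error_val = zeros(m, 1);

for i = 1:m
  % Train on the first i examples only.
  theta = ridge_fit(X(1:i, :), y(1:i), lambda);
  % Training error on those i examples; validation error on the full set.
  error_train(i) = sq_cost(X(1:i, :), y(1:i), theta);
  error_val(i) = sq_cost(Xval, yval, theta);
end

disp([error_train, error_val]);
```

With one or two training examples a line fits the data exactly, so the first entries of `error_train` are numerically zero; that is the usual left edge of a learning curve.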

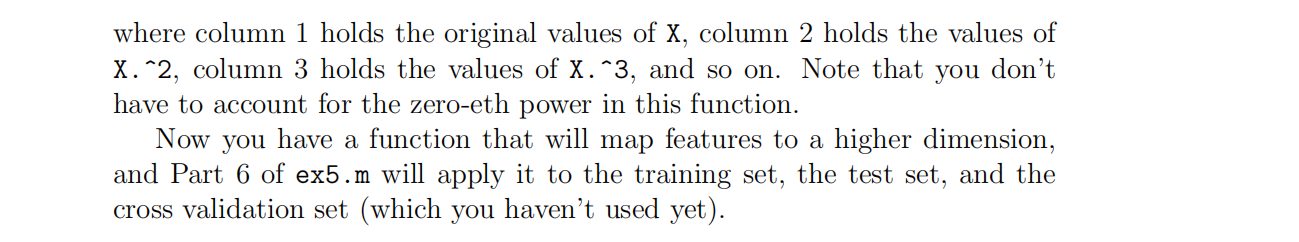

3. polyFeatures

function [X_poly] = polyFeatures(X, p)

%POLYFEATURES Maps X (1D vector) into the p-th power

% [X_poly] = POLYFEATURES(X, p) takes a data matrix X (size m x 1) and

% maps each example into its polynomial features where

% X_poly(i, :) = [X(i) X(i).^2 X(i).^3 ... X(i).^p];

%

% You need to return the following variables correctly.

X_poly = zeros(numel(X), p);

% ====================== YOUR CODE HERE ======================

% Instructions: Given a vector X, return a matrix X_poly where the p-th

% column of X_poly contains the values of X to the p-th power.

%

%

for i=1:p,

X_poly(:,i)=(X.^i);

endfor

% =========================================================================

end
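The column-by-column loop in polyFeatures can be replaced by a single element-wise power. The sketch below uses `bsxfun`, which is available in both Octave and MATLAB; the input vector is an illustrative assumption.

```octave
% Illustrative input (assumed values).
X = [1; 2; 3];
p = 3;

% Raise the m x 1 column to each exponent in the 1 x p row,
% producing an m x p matrix whose i-th column is X .^ i.
X_poly = bsxfun(@power, X(:), 1:p);

disp(X_poly);
```

For this input the rows are `[1 1 1]`, `[2 4 8]`, and `[3 9 27]`. Using `X(:)` forces a column vector, so the sketch also accepts a row-vector input.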

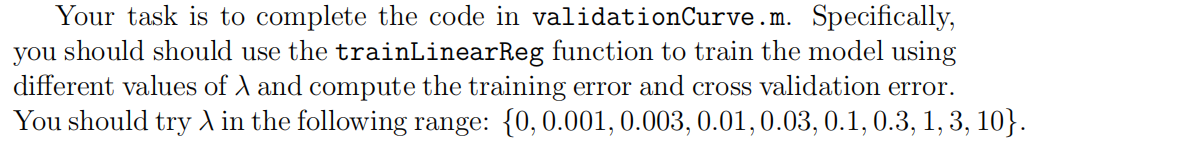

4. validationCurve

function [lambda_vec, error_train, error_val] = ...

validationCurve(X, y, Xval, yval)

%VALIDATIONCURVE Generate the train and validation errors needed to

%plot a validation curve that we can use to select lambda

% [lambda_vec, error_train, error_val] = ...

% VALIDATIONCURVE(X, y, Xval, yval) returns the train

% and validation errors (in error_train, error_val)

% for different values of lambda. You are given the training set (X,

% y) and validation set (Xval, yval).

%

% Selected values of lambda (you should not change this)

lambda_vec = [0 0.001 0.003 0.01 0.03 0.1 0.3 1 3 10]';

% You need to return these variables correctly.

error_train = zeros(length(lambda_vec), 1);

error_val = zeros(length(lambda_vec), 1);

% ====================== YOUR CODE HERE ======================

% Instructions: Fill in this function to return training errors in

% error_train and the validation errors in error_val. The

% vector lambda_vec contains the different lambda parameters

% to use for each calculation of the errors, i.e,

% error_train(i), and error_val(i) should give

% you the errors obtained after training with

% lambda = lambda_vec(i)

%

% Note: You can loop over lambda_vec with the following:

%

% for i = 1:length(lambda_vec)

% lambda = lambda_vec(i);

% % Compute train / val errors when training linear

% % regression with regularization parameter lambda

% % You should store the result in error_train(i)

% % and error_val(i)

% ....

%

% end

%

%

for i=1:length(lambda_vec),

Lam=lambda_vec(i);

% Train with the current lambda, then measure both errors with lambda = 0

theta=trainLinearReg(X,y,Lam);

error_train(i)=linearRegCostFunction(X,y,theta,0);

error_val(i)=linearRegCostFunction(Xval,yval,theta,0);

endfor

% =========================================================================

end
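Once the validation errors are computed, a common next step is to pick the lambda with the lowest validation error. The sketch below is self-contained and rests on assumptions: `ridge_fit` is a hypothetical closed-form stand-in for `trainLinearReg`, `sq_cost` and `poly_map` are hypothetical helpers, and the data are synthetic, so the selected value says nothing about the assignment data set.

```octave
% Closed-form regularized least squares (assumed stand-in for trainLinearReg).
ridge_fit = @(X, y, lambda) ...
    pinv(X' * X + lambda * diag([0; ones(size(X, 2) - 1, 1)])) * (X' * y);

% Unregularized squared-error cost.
sq_cost = @(X, y, theta) sum((X * theta - y) .^ 2) / (2 * size(X, 1));

% Bias column plus polynomial features up to degree 4.
poly_map = @(t) [ones(numel(t), 1), bsxfun(@power, t, 1:4)];

% Synthetic, deterministic training and validation data.
f = @(t) 1 + 2 * t + 0.3 * sin(8 * t);
x = linspace(-1, 1, 10)';
xv = linspace(-0.9, 0.9, 7)';
X = poly_map(x);
y = f(x);
Xval = poly_map(xv);
yval = f(xv);

lambda_vec = [0 0.001 0.003 0.01 0.03 0.1 0.3 1 3 10]';
error_val = zeros(length(lambda_vec), 1);

for i = 1:length(lambda_vec)
  % Train with lambda_vec(i); evaluate without the penalty term.
  theta = ridge_fit(X, y, lambda_vec(i));
  error_val(i) = sq_cost(Xval, yval, theta);
end

% Pick the lambda with the smallest validation error.
[best_err, best_idx] = min(error_val);
best_lambda = lambda_vec(best_idx);
fprintf('best lambda = %g (validation error %g)\n', best_lambda, best_err);
```

Selecting on the validation error rather than the training error matters because the training error is lowest at `lambda = 0` by construction, which would always favor the least regularized model.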
