(Repost) WTF is computer vision?

Someone across the room throws you a ball and you catch it. Simple, right?

Actually, this is one of the most complex processes we’ve ever attempted to comprehend – let alone recreate. Inventing a machine that sees like we do is a deceptively difficult task, not just because it’s hard to make computers do it, but because we’re not entirely sure how we do it in the first place.

What actually happens is roughly this: the image of the ball passes through your eye and strikes your retina, which does some elementary analysis and sends the result along to the brain, where the visual cortex analyzes the image more thoroughly. The visual cortex then passes it on to the rest of the cortex, which compares it to everything it already knows, classifies the objects and dimensions, and finally decides on something to do: raise your hand and catch the ball (having predicted its path). This takes place in a tiny fraction of a second, with almost no conscious effort, and almost never fails. So recreating human vision isn’t just a hard problem, it’s a set of them, each of which relies on the others.

Well, no one ever said this would be easy. Except, perhaps, AI pioneer Marvin Minsky, who famously instructed a graduate student in 1966 to “connect a camera to a computer and have it describe what it sees.” Pity the kid: 50 years later, we’re still working on it.

Serious research began in the 50s and started along three distinct lines: replicating the eye (difficult); replicating the visual cortex (very difficult); and replicating the rest of the brain (arguably the most difficult problem ever attempted).

To see

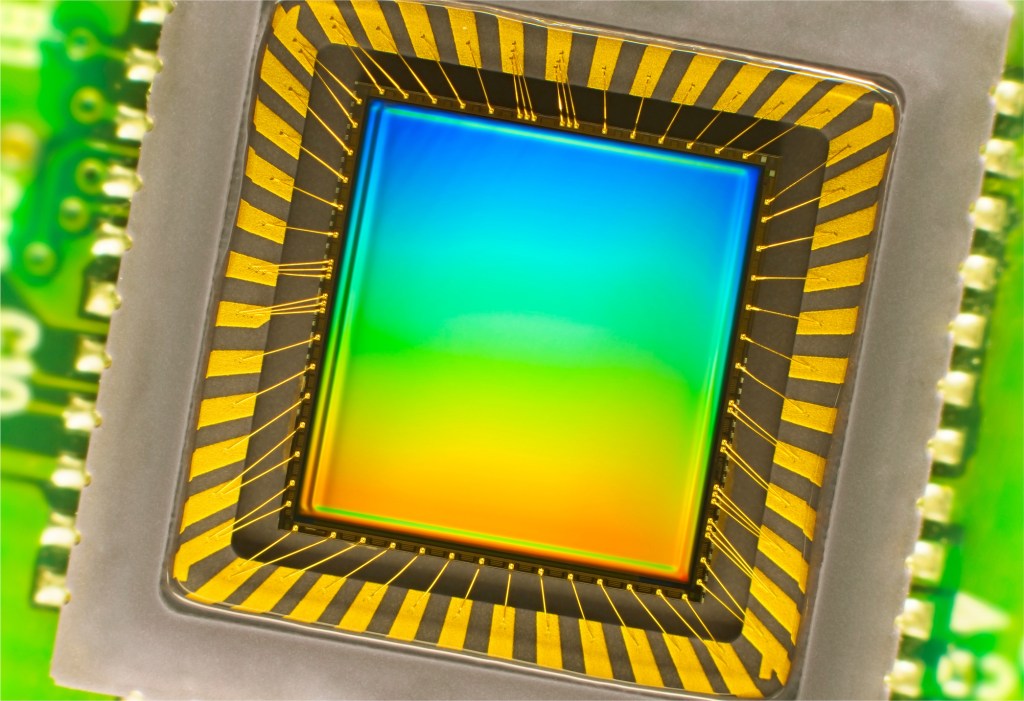

Reinventing the eye is the area where we’ve had the most success. Over the past few decades, we have created sensors and image processors that match and in some ways exceed the human eye’s capabilities. With larger, more optically perfect lenses and semiconductor subpixels fabricated at nanometer scales, the precision and sensitivity of modern cameras are nothing short of incredible. Cameras can also record thousands of images per second and detect distances with great precision.

An image sensor one might find in a digital camera.

Yet despite the high fidelity of their outputs, these devices are in many ways no better than a pinhole camera from the 19th century: They merely record the distribution of photons coming in a given direction. The best camera sensor ever made couldn’t recognize a ball — much less be able to catch it.

The hardware, in other words, is severely limited without the software — which, it turns out, is by far the greater problem to solve. But modern camera technology does provide a rich and flexible platform on which to work.

To describe

This isn’t the place for a complete course on visual neuroanatomy, but suffice it to say that our brains are built from the ground up with seeing in mind, so to speak. More of the brain is dedicated to vision than any other task, and that specialization goes all the way down to the cells themselves. Billions of them work together to extract patterns from the noisy, disorganized signal from the retina.

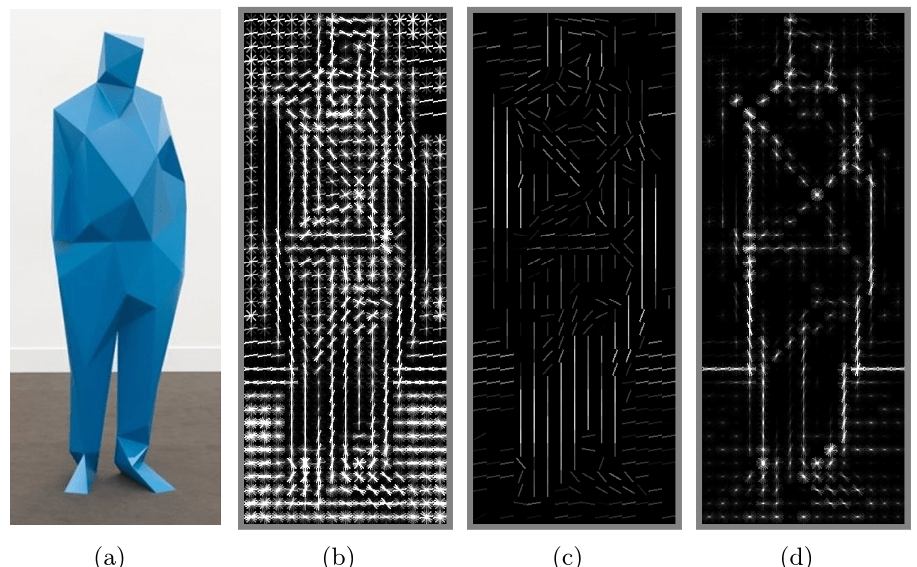

Sets of neurons excite one another if there’s contrast along a line at a certain angle, say, or rapid motion in a certain direction. Higher-level networks aggregate these patterns into meta-patterns: a circle, moving upwards. Another network chimes in: the circle is white, with red lines. Another: it is growing in size. A picture begins to emerge from these crude but complementary descriptions.

A “histogram of oriented gradients,” finding edges and other features using a technique like that found in the brain’s visual areas.
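To make the idea in that caption a little more concrete, here is a minimal sketch of the core step: measure how brightness changes around each pixel, then tally which directions those changes point in. It is a simplification, not a full histogram-of-oriented-gradients pipeline (which also splits the image into cells and normalizes across blocks), and the function name `orientation_histogram` and the tiny test image are assumptions made up for illustration.

```python
import math

def orientation_histogram(image, bins=4):
    """Histogram of gradient orientations, weighted by gradient magnitude.

    `image` is a list of rows of grayscale values. Orientations are
    unsigned (0 to 180 degrees), so opposite directions share a bin.
    """
    height, width = len(image), len(image[0])
    hist = [0.0] * bins
    bin_width = 180.0 / bins
    # Skip the border so every pixel has neighbours on all four sides.
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = image[y][x + 1] - image[y][x - 1]  # horizontal change
            gy = image[y + 1][x] - image[y - 1][x]  # vertical change
            magnitude = math.hypot(gx, gy)
            if magnitude == 0:
                continue  # flat region: no contrast, nothing to count
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            hist[int(angle // bin_width) % bins] += magnitude
    return hist

# Hypothetical 5x5 image: dark on the left, bright on the right.
vertical_edge = [[0, 0, 0, 255, 255] for _ in range(5)]
hist = orientation_histogram(vertical_edge)
```

For this image all of the weight lands in the first bin, because brightness only changes from left to right. That is loosely analogous to the neurons described above that respond to contrast along a line at one particular angle.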

Early research into computer vision, considering these networks as being unfathomably complex, took a different approach: “top-down” reasoning — a book looks like /this/, so watch for /this/ pattern, unless it’s on its side, in which case it looks more like /this/. A car looks like /this/ and moves like /this/.

For a few objects in controlled situations, this worked well, but imagine trying to describe every object around you, from every angle, with variations for lighting and motion and a hundred other things. It became clear that to achieve even toddler-like levels of recognition would require impractically large sets of data.

A “bottom-up” approach mimicking what is found in the brain is more promising. A computer can apply a series of transformations to an image and discover edges, the objects they imply, perspective and movement when presented with multiple pictures, and so on. The processes involve a great deal of math and statistics, but they amount to the computer trying to match the shapes it sees with shapes it has been trained to recognize — trained on other images, the way our brains were.
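The "match what it sees against what it was trained on" idea can be sketched in a few lines. This is a toy nearest-centroid classifier, one of the simplest possible stand-ins and far cruder than the neural networks the field actually uses. The two-number features (roundness, elongation), the labels and the function names are all hypothetical, chosen only for illustration.

```python
import math

def centroid(vectors):
    """Average a list of equal-length feature vectors component by component."""
    count = len(vectors)
    return [sum(column) / count for column in zip(*vectors)]

def train(examples):
    """Build one average feature vector per label from {label: [vectors]}."""
    return {label: centroid(vectors) for label, vectors in examples.items()}

def classify(model, features):
    """Return the label whose centroid is closest to `features`."""
    return min(model, key=lambda label: math.dist(model[label], features))

# Hypothetical features: (roundness, elongation), both between 0 and 1.
training_examples = {
    "ball": [[0.9, 0.1], [0.8, 0.2], [1.0, 0.1]],
    "book": [[0.2, 0.7], [0.1, 0.9], [0.3, 0.8]],
}
model = train(training_examples)
label = classify(model, [0.85, 0.15])  # a round, compact shape
```

Here `label` comes out as `"ball"`, because the new shape sits much closer to the average ball than to the average book. Note that the system knows nothing except what its training examples covered: show it a shape unlike either and it will still pick one of the two labels.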

An image like the one above (from Purdue University’s E-lab) shows the computer reporting that, by its calculations, the highlighted objects look and act like other examples of that kind of object, to a certain level of statistical certainty.
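As for where a "level of statistical certainty" might come from: one common approach (an assumption here, since the article doesn't say what E-lab's system does) is to turn a recognizer's raw scores into probabilities with a softmax function. The scores and labels below are invented for illustration.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    highest = max(scores.values())
    # Subtracting the maximum avoids overflow without changing the result.
    exps = {label: math.exp(s - highest) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

# Hypothetical raw scores a recognizer might assign to one image region.
scores = {"ball": 4.0, "apple": 2.5, "book": 0.5}
confidence = softmax(scores)
best = max(confidence, key=confidence.get)
```

With these made-up scores, `best` is `"ball"` and it gets the bulk of the probability, with the remainder split between the other two labels. The number expresses how strongly the system prefers one label over the alternatives it knows about, not whether it understands what a ball is.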

Proponents of bottom-up architecture might have said “I told you so.” Except that until recent years, creating and operating artificial neural networks was impractical because of the immense amount of computation they require. Advances in parallel computing have eroded those barriers, and the last few years have seen an explosion of research into, and applications of, systems that imitate (still very approximately) the ones in our brain. The process of pattern recognition has been sped up by orders of magnitude, and we’re making more progress every day.

To understand

Of course, you could build a system that recognizes every variety of apple, from every angle, in any situation, at rest or in motion, with bites taken out of it, anything, and it still wouldn’t be able to recognize an orange. For that matter, it couldn’t even tell you what an apple is, whether it’s edible, how big it is or what it’s used for.

The problem is that even good hardware and software aren’t much use without an operating system.

For us, that’s the rest of our minds: short- and long-term memory, input from our other senses, attention and cognition, a billion lessons learned from a trillion interactions with the world, written with methods we barely understand to a network of interconnected neurons more complex than anything we’ve ever encountered.

This is where the frontiers of computer science and more general artificial intelligence converge — and where we’re currently spinning our wheels. Between computer scientists, engineers, psychologists, neuroscientists and philosophers, we can barely come up with a working definition of how our minds work, much less how to simulate it.

That doesn’t mean we’re at a dead end. The future of computer vision is in integrating the powerful but specific systems we’ve created with broader ones that are focused on concepts that are a bit harder to pin down: context, attention, intention.

That said, computer vision even in its nascent stage is still incredibly useful. It’s in our cameras, recognizing faces and smiles. It’s in self-driving cars, reading traffic signs and watching for pedestrians. It’s in factory robots, monitoring for problems and navigating around human co-workers. There’s still a long way to go before they see like we do — if it’s even possible — but considering the scale of the task at hand, it’s amazing that they see at all.

FEATURED IMAGE: BRYCE DURBIN
