K8s Cluster Installation and Checks (Lessons Learned)

I. Checking by component

1. Master node:

2. Node: none

II. Checking by service

1. Master node:

root>> systemctl status etcd

root>> systemctl status kube-apiserver

root>> systemctl status kube-controller-manager

root>> systemctl status kube-scheduler

2. Node:

root>> systemctl status flanneld

root>> systemctl status kube-proxy

root>> systemctl status kubelet

root>> systemctl status docker

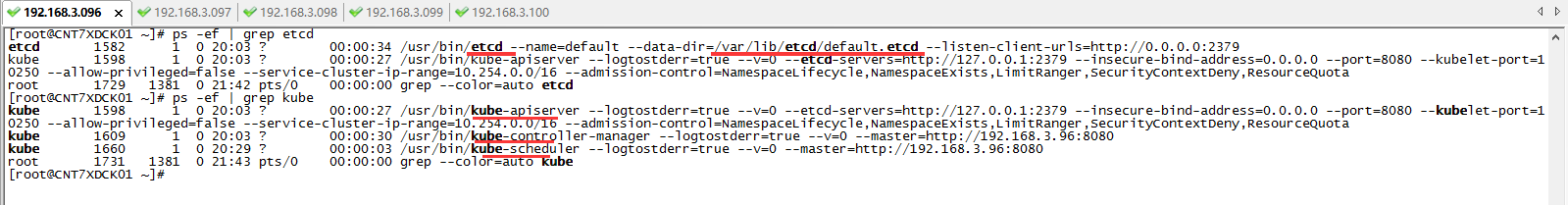
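
The per-service checks above can be folded into one small helper. This is only a sketch, assuming `systemctl is-active` is available (as it is on systemd hosts such as CentOS 7); the function name `check_units` is made up for illustration and is not part of any tool.

```shell
#!/bin/sh
# Print the state of every systemd unit given as an argument.
# Returns non-zero if at least one unit is not "active".
# check_units is a hypothetical helper name, not a standard command.
check_units() {
    failed=0
    for unit in "$@"; do
        # `systemctl is-active` prints the unit state (active, inactive, failed, ...)
        # and exits non-zero when the unit is not active, hence the `|| true`.
        state=$(systemctl is-active "$unit" 2>/dev/null) || true
        [ -n "$state" ] || state=unknown
        printf '%-26s %s\n' "$unit" "$state"
        [ "$state" = "active" ] || failed=1
    done
    return "$failed"
}
```

On the Master this could be called as `check_units etcd kube-apiserver kube-controller-manager kube-scheduler`, and on a Node as `check_units flanneld kube-proxy kubelet docker`.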

III. Checking by process

1. Master node:

root>> ps -ef | grep etcd

root>> ps -ef | grep kube

2. Node:

root>> ps -ef | grep flannel

root>> ps -ef | grep kube

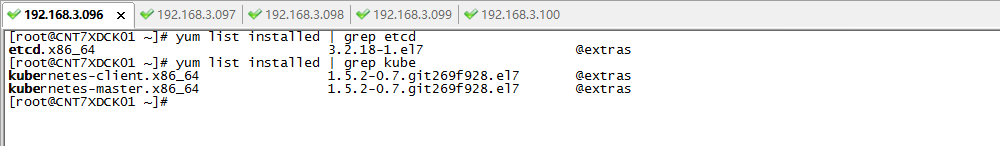

IV. Checking by installed package

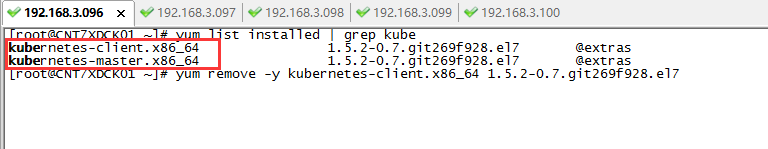

1. Master node:

root>> yum list installed | grep etcd

root>> yum list installed | grep kube

2. Node:

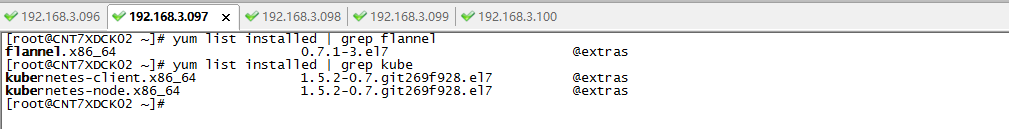

root>> yum list installed | grep flannel

root>> yum list installed | grep kube
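
When the exact package names are known, `rpm -q` gives a yes/no answer per package, which is easier to script than grepping the `yum list installed` output. A minimal sketch; `check_packages` is a hypothetical helper name, and the package names in the usage note are the ones that appear in this article.

```shell
#!/bin/sh
# Report whether each named RPM package is installed.
# Returns non-zero if at least one package is missing.
# check_packages is a hypothetical helper name.
check_packages() {
    missing=0
    for pkg in "$@"; do
        # `rpm -q` prints name-version-release.arch and exits 0 when installed;
        # otherwise it exits non-zero.
        if version=$(rpm -q "$pkg" 2>/dev/null); then
            echo "$pkg: $version"
        else
            echo "$pkg: not installed"
            missing=1
        fi
    done
    return "$missing"
}
```

For example, `check_packages etcd kubernetes-master kubernetes-client` on the Master, or `check_packages flannel kubernetes-node kubernetes-client` on a Node.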

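V. Appendix: commands for resetting the environment and reinstalling k8s after a failed first installation of the cluster.

5.1 Master node

1. Uninstall the existing components

[root@CNT7XDCK01 ~]# yum list installed | grep kube  # first, list the installed components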

kubernetes-client.x86_64 1.5.2-0.7.git269f928.el7 @extras

kubernetes-master.x86_64 1.5.2-0.7.git269f928.el7 @extras

[root@CNT7XDCK01 ~]# yum remove -y kubernetes-client.x86_64

[root@CNT7XDCK01 ~]# yum remove -y kubernetes-master.x86_64

2. Reinstall the components

[root@CNT7XDCK01 ~]# yum -y install etcd

[root@CNT7XDCK01 ~]# yum -y install kubernetes-master

3. Edit the kube configuration files

Edit /etc/etcd/etcd.conf:

ETCD_NAME="default"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

ETCD_ADVERTISE_CLIENT_URLS="http://localhost:2379"

Edit /etc/kubernetes/apiserver:

###

# kubernetes system config

#

# The following values are used to configure the kube-apiserver

#

# The address on the local server to listen to.

KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

# The port on the local server to listen on.

KUBE_API_PORT="--port=8080"

# Port minions listen on

KUBELET_PORT="--kubelet-port=10250"

# Comma separated list of nodes in the etcd cluster

KUBE_ETCD_SERVERS="--etcd-servers=http://127.0.0.1:2379"

# Address range to use for services

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"

# default admission control policies

# KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota"

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,SecurityContextDeny,ResourceQuota"

# Add your own!

KUBE_API_ARGS=""

4. Re-enable, restart, and check the components' system services

[root@CNT7XDCK01 ~]# systemctl enable etcd

[root@CNT7XDCK01 ~]# systemctl enable kube-apiserver

[root@CNT7XDCK01 ~]# systemctl enable kube-controller-manager

[root@CNT7XDCK01 ~]# systemctl enable kube-scheduler

[root@CNT7XDCK01 ~]# systemctl restart etcd

[root@CNT7XDCK01 ~]# systemctl restart kube-apiserver

[root@CNT7XDCK01 ~]# systemctl restart kube-controller-manager

[root@CNT7XDCK01 ~]# systemctl restart kube-scheduler

[root@CNT7XDCK01 ~]# systemctl status etcd

[root@CNT7XDCK01 ~]# systemctl status kube-apiserver

[root@CNT7XDCK01 ~]# systemctl status kube-controller-manager

[root@CNT7XDCK01 ~]# systemctl status kube-scheduler
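
`systemctl status` only shows that the processes are up. To confirm that the apiserver actually answers requests, its `/healthz` endpoint can be polled on the insecure port configured above (8080). This is a sketch under that assumption; `wait_for_apiserver`, the default of 30 attempts, and the 2-second timeout are illustrative choices, not values from the original setup.

```shell
#!/bin/sh
# Poll the apiserver /healthz endpoint until it returns "ok".
# $1: URL to poll (defaults to the insecure port from /etc/kubernetes/apiserver)
# $2: maximum number of attempts, one per second (30 is an arbitrary default)
# wait_for_apiserver is a hypothetical helper name.
wait_for_apiserver() {
    url=${1:-http://127.0.0.1:8080/healthz}
    tries=${2:-30}
    i=0
    while [ "$i" -lt "$tries" ]; do
        # -f: fail on HTTP errors, -sS: quiet but still report errors
        if [ "$(curl -fsS --max-time 2 "$url" 2>/dev/null)" = "ok" ]; then
            echo "apiserver healthy"
            return 0
        fi
        i=$((i + 1))
        sleep 1
    done
    echo "apiserver not healthy after $tries attempts" >&2
    return 1
}
```

Running `wait_for_apiserver` right after the restarts gives a clear pass or fail before moving on to the Node machines.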

====================================================================

5.2 Node

1. Uninstall the existing components

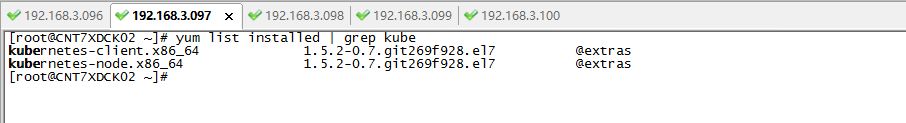

[root@CNT7XDCK02 ~]# yum list installed | grep kube

kubernetes-client.x86_64 1.5.2-0.7.git269f928.el7 @extras

kubernetes-node.x86_64 1.5.2-0.7.git269f928.el7 @extras

[root@CNT7XDCK02 ~]# yum remove -y kubernetes-client.x86_64

[root@CNT7XDCK02 ~]# yum remove -y kubernetes-node.x86_64

2. Reinstall the components

[root@CNT7XDCK02 ~]# yum -y install flannel

[root@CNT7XDCK02 ~]# yum -y install kubernetes-node

3. Edit the kube configuration files

Edit /etc/sysconfig/flanneld:

# Flanneld configuration options

# etcd url location. Point this to the server where etcd runs

FLANNEL_ETCD_ENDPOINTS="http://192.168.3.96:2379"

# etcd config key. This is the configuration key that flannel queries

# For address range assignment

FLANNEL_ETCD_PREFIX="/atomic.io/network"

# Any additional options that you want to pass

#FLANNEL_OPTIONS=""
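
flanneld reads its network definition from the key `config` under `FLANNEL_ETCD_PREFIX`, so that key has to exist in etcd before flanneld can start. The sketch below shows how it might be inspected or written. It assumes `etcdctl` is speaking the etcd v2 API (where `get` and `set` are the commands); the function names are hypothetical, and the 172.17.0.0/16 range in the usage note is purely an illustrative value that does not come from this article.

```shell
#!/bin/sh
# Values copied from /etc/sysconfig/flanneld above.
FLANNEL_ETCD_ENDPOINTS="http://192.168.3.96:2379"
FLANNEL_ETCD_PREFIX="/atomic.io/network"

# flannel looks for its network definition at <prefix>/config.
flannel_config_key() {
    printf '%s/config\n' "$FLANNEL_ETCD_PREFIX"
}

# Show the network definition currently stored in etcd (etcd v2 API assumed).
show_flannel_network() {
    etcdctl --endpoints "$FLANNEL_ETCD_ENDPOINTS" get "$(flannel_config_key)"
}

# Store a network definition; $1 is the overlay CIDR.
# ASSUMPTION: the caller chooses the CIDR; the article does not specify one.
set_flannel_network() {
    etcdctl --endpoints "$FLANNEL_ETCD_ENDPOINTS" set "$(flannel_config_key)" \
        "{\"Network\": \"$1\"}"
}
```

For instance, `set_flannel_network 172.17.0.0/16` followed by `show_flannel_network` would write and read back the definition, where the CIDR is again only an example.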

Edit /etc/kubernetes/config:

###

# kubernetes system config

#

# The following values are used to configure various aspects of all

# kubernetes services, including

#

# kube-apiserver.service

# kube-controller-manager.service

# kube-scheduler.service

# kubelet.service

# kube-proxy.service

# logging to stderr means we get it in the systemd journal

KUBE_LOGTOSTDERR="--logtostderr=true"

# journal message level, 0 is debug

KUBE_LOG_LEVEL="--v=0"

# Should this cluster be allowed to run privileged docker containers

KUBE_ALLOW_PRIV="--allow-privileged=false"

# How the controller-manager, scheduler, and proxy find the apiserver

KUBE_MASTER="--master=http://192.168.3.96:8080"

Edit /etc/kubernetes/kubelet:

###

# kubernetes kubelet (minion) config

# The address for the info server to serve on (set to 0.0.0.0 or "" for all interfaces)

KUBELET_ADDRESS="--address=0.0.0.0"

# The port for the info server to serve on

KUBELET_PORT="--port=10250"

# You may leave this blank to use the actual hostname

# (set here to the IP of the node machine)

KUBELET_HOSTNAME="--hostname-override=192.168.3.97"

# location of the api-server (set here to the IP of the master machine)

KUBELET_API_SERVER="--api-servers=http://192.168.3.96:8080"

# pod infrastructure container

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

# Add your own!

KUBELET_ARGS=""

4. Re-enable, restart, and check the components' system services

[root@CNT7XDCK02 ~]# systemctl enable flanneld

[root@CNT7XDCK02 ~]# systemctl enable kube-proxy

[root@CNT7XDCK02 ~]# systemctl enable kubelet

[root@CNT7XDCK02 ~]# systemctl enable docker

[root@CNT7XDCK02 ~]# systemctl restart flanneld

[root@CNT7XDCK02 ~]# systemctl restart kube-proxy

[root@CNT7XDCK02 ~]# systemctl restart kubelet

[root@CNT7XDCK02 ~]# systemctl restart docker

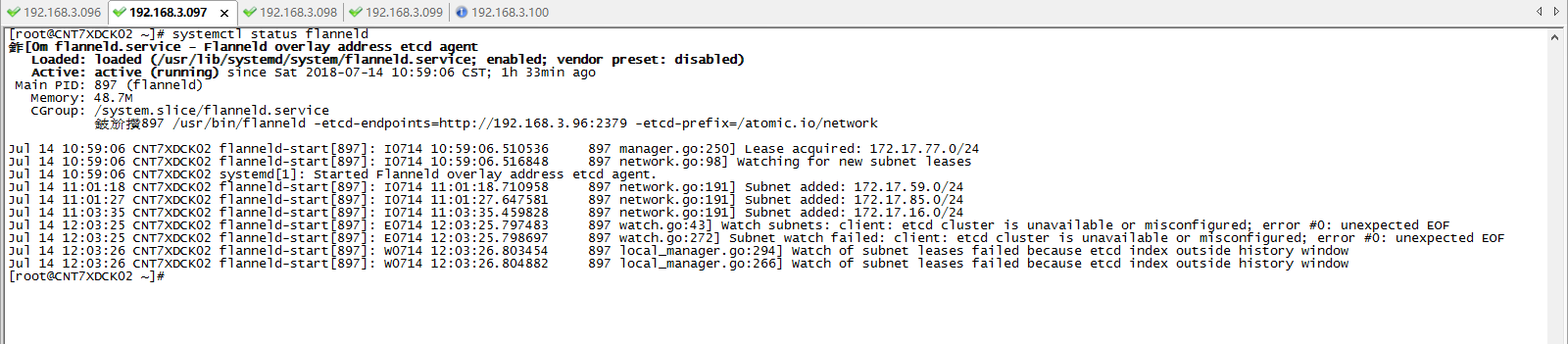

[root@CNT7XDCK02 ~]# systemctl status flanneld

[root@CNT7XDCK02 ~]# systemctl status kube-proxy

[root@CNT7XDCK02 ~]# systemctl status kubelet

[root@CNT7XDCK02 ~]# systemctl status docker

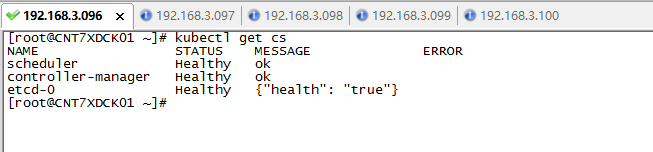

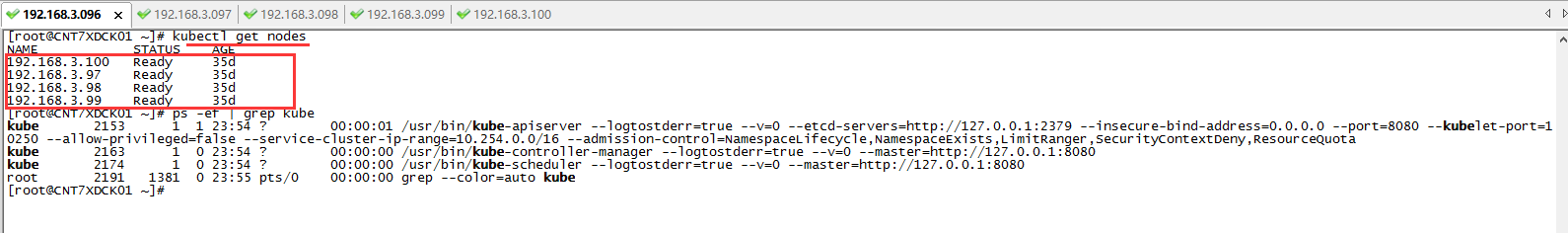

VI. Finally, check the K8s installation result on the Master machine

[root@CNT7XDCK01 ~]# kubectl get nodes

NAME STATUS AGE

192.168.3.100 Ready 35d

192.168.3.97 Ready 35d

192.168.3.98 Ready 35d

192.168.3.99 Ready 35d

As the output above shows, the master has four node machines, and all of them are in the normal Ready state.
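
The same check can be scripted by counting the rows whose STATUS column is `Ready`. A minimal sketch, assuming the three-column `NAME STATUS AGE` layout shown above; `count_ready_nodes` is a hypothetical helper name.

```shell
#!/bin/sh
# Count nodes whose STATUS column is exactly "Ready".
# Reads `kubectl get nodes` output on stdin and skips the header line.
# count_ready_nodes is a hypothetical helper name.
count_ready_nodes() {
    awk 'NR > 1 && $2 == "Ready" { n++ } END { print n + 0 }'
}
```

It is meant to be used as `kubectl get nodes | count_ready_nodes`, and the result can then be compared with the number of Node machines that were set up.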
