吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用激活函数

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 # 输入节点

OUTPUT_NODE = 10 # 输出节点

LAYER1_NODE = 500 # 隐藏层数 BATCH_SIZE = 100 # 每次batch打包的样本个数 # 模型相关的参数

LEARNING_RATE_BASE = 0.01

LEARNING_RATE_DECAY = 0.99

REGULARAZTION_RATE = 0.0001

TRAINING_STEPS = 5000

MOVING_AVERAGE_DECAY = 0.99 def inference(input_tensor, avg_class, weights1, biases1, weights2, biases2):

# 不使用滑动平均类

if avg_class == None:

layer1 = tf.matmul(input_tensor, weights1) + biases1

return tf.matmul(layer1, weights2) + biases2

else:

# 使用滑动平均类

layer1 = tf.matmul(input_tensor, avg_class.average(weights1)) + avg_class.average(biases1)

return tf.matmul(layer1, avg_class.average(weights2)) + avg_class.average(biases2) def train(mnist):

x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

# 生成隐藏层的参数。

weights1 = tf.Variable(tf.truncated_normal([INPUT_NODE, LAYER1_NODE], stddev=0.1))

biases1 = tf.Variable(tf.constant(0.1, shape=[LAYER1_NODE]))

# 生成输出层的参数。

weights2 = tf.Variable(tf.truncated_normal([LAYER1_NODE, OUTPUT_NODE], stddev=0.1))

biases2 = tf.Variable(tf.constant(0.1, shape=[OUTPUT_NODE])) # 计算不含滑动平均类的前向传播结果

y = inference(x, None, weights1, biases1, weights2, biases2) # 定义训练轮数及相关的滑动平均类

global_step = tf.Variable(0, trainable=False)

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

average_y = inference(x, variable_averages, weights1, biases1, weights2, biases2) # 计算交叉熵及其平均值

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy) # 损失函数的计算

regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)

regularaztion = regularizer(weights1) + regularizer(weights2)

loss = cross_entropy_mean + regularaztion # 设置指数衰减的学习率。

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples / BATCH_SIZE,

LEARNING_RATE_DECAY,

staircase=True) # 优化损失函数

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step) # 反向传播更新参数和更新每一个参数的滑动平均值

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train') # 计算正确率

correct_prediction = tf.equal(tf.argmax(average_y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) # 初始化会话,并开始训练过程。

with tf.Session() as sess:

tf.global_variables_initializer().run()

validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels}

test_feed = {x: mnist.test.images, y_: mnist.test.labels} # 循环的训练神经网络。

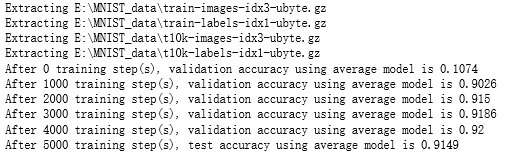

for i in range(TRAINING_STEPS):

if i % 1000 == 0:

validate_acc = sess.run(accuracy, feed_dict=validate_feed)

print("After %d training step(s), validation accuracy using average model is %g " % (i, validate_acc))

xs,ys=mnist.train.next_batch(BATCH_SIZE)

sess.run(train_op,feed_dict={x:xs,y_:ys})

test_acc=sess.run(accuracy,feed_dict=test_feed)

print(("After %d training step(s), test accuracy using average model is %g" %(TRAINING_STEPS, test_acc))) def main(argv=None):

mnist = input_data.read_data_sets("E:\\MNIST_data", one_hot=True)

train(mnist) if __name__=='__main__':

main()

吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用激活函数的更多相关文章

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用滑动平均

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用隐藏层

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用指数衰减的学习率

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:不使用正则化

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:全模型

import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:花瓣识别

import os import glob import os.path import numpy as np import tensorflow as tf from tensorflow.pyth ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:MNIST最佳实践

import os import tensorflow as tf from tensorflow.examples.tutorials.mnist import input_data INPUT_N ...

- 吴裕雄 python 神经网络——TensorFlow训练神经网络:卷积层、池化层样例

import numpy as np import tensorflow as tf M = np.array([ [[1],[-1],[0]], [[-1],[2],[1]], [[0],[2],[ ...

- 吴裕雄--天生自然 Tensorflow卷积神经网络:花朵图片识别

import os import numpy as np import matplotlib.pyplot as plt from PIL import Image, ImageChops from ...

随机推荐

- Go之第三方库ini

文章转自 快速开始 my.ini # possible values : production, development app_mode = development [paths] # Path t ...

- hdu 1045 Fire Net(二分图)

题目连接:http://acm.hdu.edu.cn/showproblem.php?pid=1045 题目大意为给定一个最大为4*4的棋盘,棋盘可以放置堡垒,处在同一行或者同一列的堡垒可以相互攻击, ...

- 210. 课程表 II

Q: 现在你总共有 n 门课需要选,记为 0 到 n-1. 在选修某些课程之前需要一些先修课程. 例如,想要学习课程 0 ,你需要先完成课程 1 ,我们用一个匹配来表示他们: [0,1] 给定课程总量 ...

- python"TypeError: 'NoneType' object is not iterable"错误解析

尊重原创博主,原文链接:https://blog.csdn.net/dataspark/article/details/9953225 [解析] 一般是函数返回值为None,并被赋给了多个变量. 实例 ...

- 对C#面向对象三大特性的一点总结

一.三大特性 封装: 把客观事物封装成类,并把类内部的实现隐藏,以保证数据的完整性 继承:通过继承可以复用父类的代码 多态:允许将子对象赋值给父对象的一种能力 二.[封装]特性 把类内部的数据隐藏,不 ...

- 网站调用qq第三方登录

1. 准备工作 (1) 接入QQ登录前,网站需首先进行申请,获得对应的appid与appkey,以保证后续流程中可正确对网站与用户进行验证与授权. ① 注册QQ互联开发者账号 网址 https:/ ...

- python开发基础04-列表、元组、字典操作练习

练习1: # l1 = [11,22,33]# l2 = [22,33,44]# a. 获取内容相同的元素列表# b. 获取 l1 中有, l2 中没有的元素列表# c. 获取 l2 中有, l1 中 ...

- Adobe PS

1. ctrl + Tab 切换视图窗口 2.shift 拖拽图片,将 2 张图片放在一起 3.切换显示方式 /全屏/带有工具栏 快捷键:F 4. 缩小/放大工具 快捷键: alt + 鼠标滑轮 5 ...

- Python 摄像头 树莓派 USB mjpb

import cv2 import urllib.request import numpy as np import sys host = "192.168.1.109:8080" ...

- requests使用小结(不定期更新)

request是python的第三方库,使用上比urllib和urllib2要更方便. 0x01 使用session发送:能保存一条流中获取的cookie,并自动添加到http头中 s = reque ...