The difference between reduceByKey and groupByKey

Let's first look at the source code of reduceByKey and groupByKey in PairRDDFunctions.scala:

    /**
     * Merge the values for each key using an associative reduce function. This will also perform
     * the merging locally on each mapper before sending results to a reducer, similarly to a
     * "combiner" in MapReduce. Output will be hash-partitioned with the existing partitioner/
     * parallelism level.
     */
    def reduceByKey(func: (V, V) => V): RDD[(K, V)] = {
      reduceByKey(defaultPartitioner(self), func)
    }

    /**
     * Group the values for each key in the RDD into a single sequence. Allows controlling the
     * partitioning of the resulting key-value pair RDD by passing a Partitioner.
     * The ordering of elements within each group is not guaranteed, and may even differ
     * each time the resulting RDD is evaluated.
     *
     * Note: This operation may be very expensive. If you are grouping in order to perform an
     * aggregation (such as a sum or average) over each key, using [[PairRDDFunctions.aggregateByKey]]
     * or [[PairRDDFunctions.reduceByKey]] will provide much better performance.
     *
     * Note: As currently implemented, groupByKey must be able to hold all the key-value pairs for any
     * key in memory. If a key has too many values, it can result in an [[OutOfMemoryError]].
     */
    def groupByKey(partitioner: Partitioner): RDD[(K, Iterable[V])] = {
      // groupByKey shouldn't use map side combine because map side combine does not
      // reduce the amount of data shuffled and requires all map side data be inserted
      // into a hash table, leading to more objects in the old gen.
      val createCombiner = (v: V) => CompactBuffer(v)
      val mergeValue = (buf: CompactBuffer[V], v: V) => buf += v
      val mergeCombiners = (c1: CompactBuffer[V], c2: CompactBuffer[V]) => c1 ++= c2
      val bufs = combineByKey[CompactBuffer[V]](
        createCombiner, mergeValue, mergeCombiners, partitioner, mapSideCombine=false)
      bufs.asInstanceOf[RDD[(K, Iterable[V])]]
    }

From the source code we can see the following:

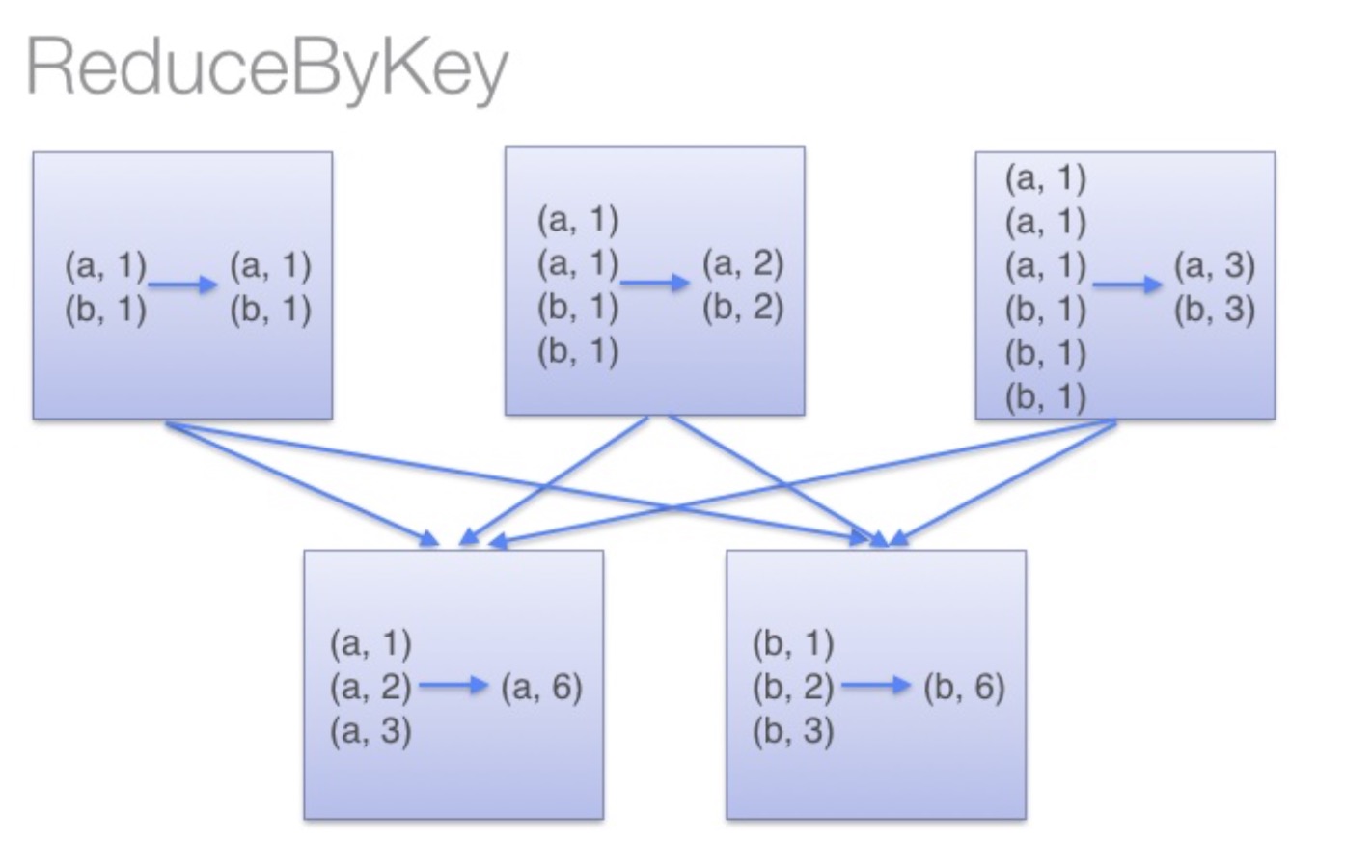

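reduceByKey: before results are sent to the reducer, reduceByKey merges the values locally on each mapper, much like a combiner in MapReduce. The benefit is that after this map-side reduce, each mapper emits at most one record per key, so the amount of data usually shrinks considerably when keys repeat within a partition. Less data is shuffled over the network, and the reduce side can compute the final result faster.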
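To make the effect of the map-side merge concrete, here is a minimal sketch in plain Scala (no Spark involved) that imitates it on in-memory collections. The object name, the sample data, and the helper names are all hypothetical, and `groupMapReduce` assumes Scala 2.13 or later. It only illustrates the idea and is not how Spark implements the shuffle.

```scala
object MapSideCombineSketch {
  // Hypothetical input: each inner Seq stands for the records held by one mapper.
  val partitions: Seq[Seq[(String, Int)]] = Seq(
    Seq("a" -> 1, "b" -> 1, "a" -> 1),
    Seq("a" -> 1, "b" -> 1, "b" -> 1)
  )

  // Merge the values per key inside a single partition (the "combiner" step).
  def combineLocally(part: Seq[(String, Int)], func: (Int, Int) => Int): Map[String, Int] =
    part.groupMapReduce(_._1)(_._2)(func)

  // Records that would cross the network when every partition is combined first.
  def shuffledWithCombine(func: (Int, Int) => Int): Seq[(String, Int)] =
    partitions.flatMap(p => combineLocally(p, func).toSeq)

  // Final merge on the reduce side, applied to whatever was shuffled.
  def reduceSide(records: Seq[(String, Int)], func: (Int, Int) => Int): Map[String, Int] =
    records.groupMapReduce(_._1)(_._2)(func)

  def main(args: Array[String]): Unit = {
    val shuffled = shuffledWithCombine(_ + _)
    println(s"records shuffled without combine: ${partitions.map(_.size).sum}")
    println(s"records shuffled with combine:    ${shuffled.size}")
    println(s"final result: ${reduceSide(shuffled, _ + _)}")
  }
}
```

With this sample data, six input records collapse to four shuffled records (one per key per partition), and the final sums are unchanged. The saving grows with the number of duplicate keys inside each partition.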

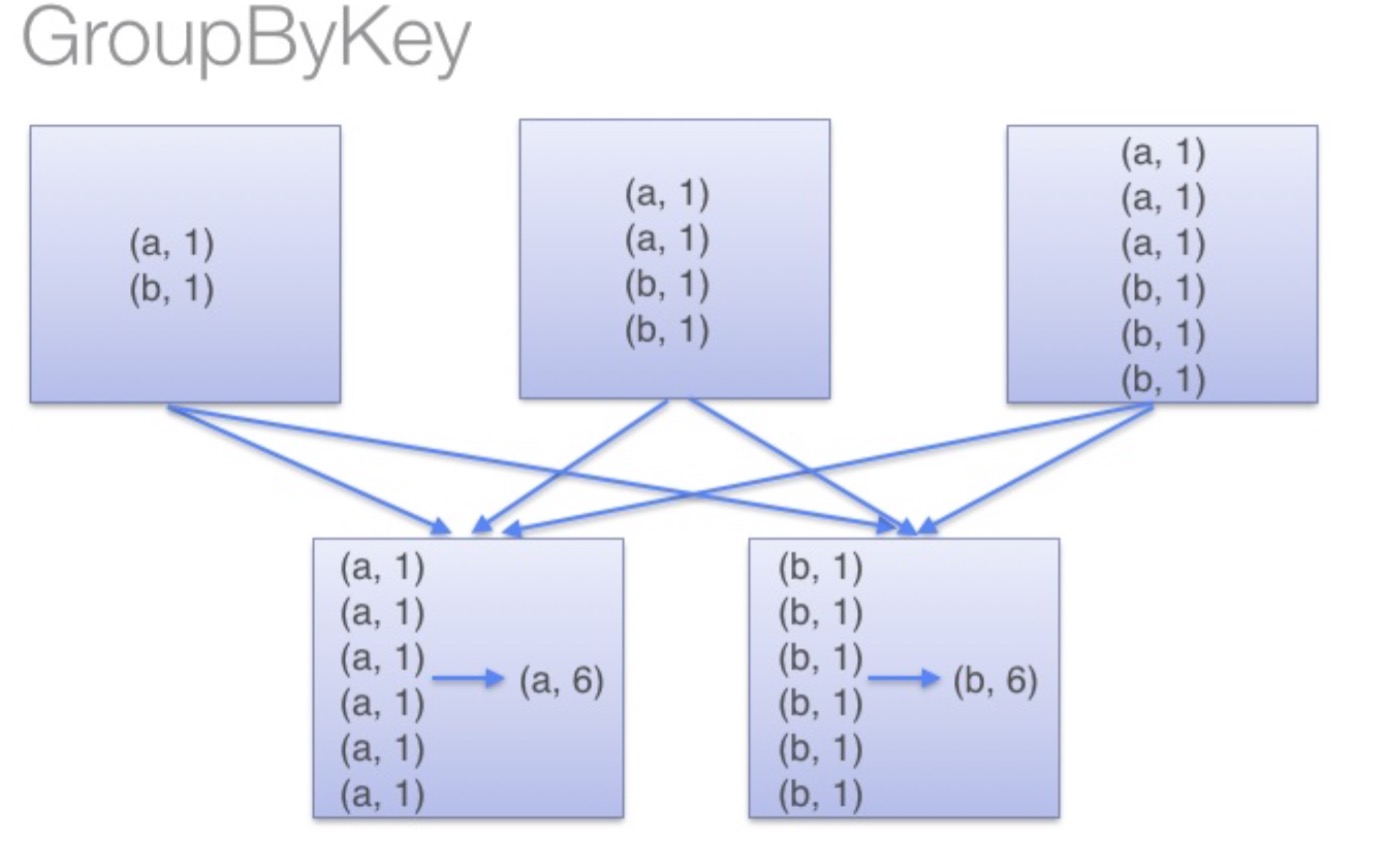

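groupByKey: groupByKey collects all the values for each key in the RDD into a single sequence (an Iterable[V]). Because map-side combine is disabled, the grouping happens on the reduce side, so every key-value pair has to be transferred over the network; when all you need is an aggregate, that traffic is wasted. In addition, as the source comment notes, all the values for any one key must fit in memory, so a key with too many values can cause an OutOfMemoryError.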
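The same kind of plain-Scala sketch can illustrate what happens without a map-side combine. The names and the skewed sample data below are hypothetical. The per-key buffer loosely mirrors the createCombiner and mergeValue functions in the groupByKey source quoted earlier, but this is a simulation on local collections rather than Spark's actual shuffle.

```scala
object GroupWithoutCombineSketch {
  // Hypothetical skewed input: key "hot" has many values, key "cold" has one.
  val partitions: Seq[Seq[(String, Int)]] = Seq(
    Seq.fill(3)("hot" -> 1) :+ ("cold" -> 1),
    Seq.fill(2)("hot" -> 1)
  )

  // Without a map-side combine, every record is shuffled unchanged.
  def shuffled: Seq[(String, Int)] = partitions.flatten

  // Reduce side: append each value to its key's buffer, so the buffer for a key
  // grows to hold every value of that key at once.
  def groupOnReducer(records: Seq[(String, Int)]): Map[String, Vector[Int]] =
    records.foldLeft(Map.empty[String, Vector[Int]]) { case (acc, (k, v)) =>
      acc.updated(k, acc.getOrElse(k, Vector.empty[Int]) :+ v)
    }

  def main(args: Array[String]): Unit = {
    val grouped = groupOnReducer(shuffled)
    println(s"records shuffled: ${shuffled.size}")
    grouped.foreach { case (k, vs) => println(s"$k -> buffer of ${vs.size} values") }
  }
}
```

Here all six records are shuffled, and the buffer for "hot" holds five values at once. With a heavily skewed key in a real job, that single buffer is what may exhaust memory.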

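From this comparison, reduceByKey is the recommended choice when reducing large amounts of data by key. It is not only faster, it also avoids the out-of-memory problems that groupByKey can cause.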
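As a rough illustration of that recommendation, the sketch below computes the same per-key counts both ways with the Spark RDD API. It assumes Spark is on the classpath and runs in local mode; the object name, helper names, and sample words are hypothetical.

```scala
import org.apache.spark.{SparkConf, SparkContext}
import org.apache.spark.rdd.RDD

object ReduceVsGroupSketch {
  // Preferred: values are merged on each mapper before the shuffle.
  def countsViaReduce(pairs: RDD[(String, Int)]): Map[String, Int] =
    pairs.reduceByKey(_ + _).collect().toMap

  // Same result, but every (word, 1) pair is shuffled and buffered per key first.
  def countsViaGroup(pairs: RDD[(String, Int)]): Map[String, Int] =
    pairs.groupByKey().mapValues(_.sum).collect().toMap

  def main(args: Array[String]): Unit = {
    // Local master and app name are assumptions for a quick experiment.
    val conf = new SparkConf().setAppName("reduce-vs-group").setMaster("local[2]")
    val sc = new SparkContext(conf)
    try {
      val pairs = sc.parallelize(Seq("a", "b", "a", "c", "b", "a")).map(w => (w, 1))
      println(countsViaReduce(pairs))
      println(countsViaGroup(pairs))
    } finally {
      sc.stop()
    }
  }
}
```

Both helpers return the same counts; the difference is in how much data is shuffled and buffered along the way, which only becomes visible on larger inputs (for example in the shuffle metrics of the Spark UI).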
