Machine learning 第5周编程作业

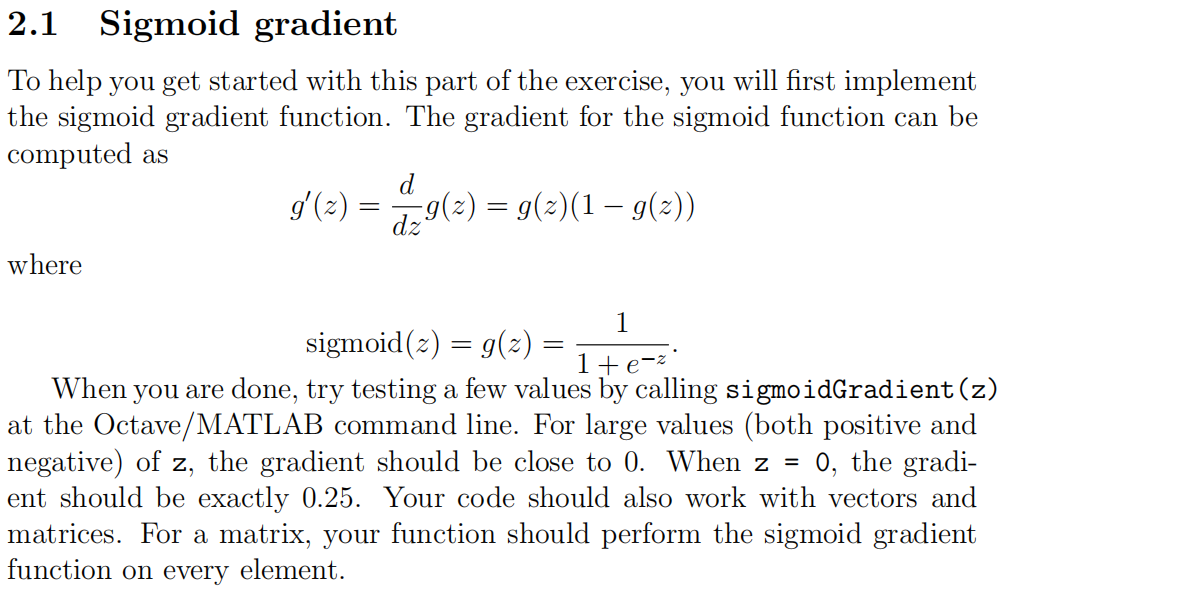

1.Sigmoid Gradient

function g = sigmoidGradient(z)

%SIGMOIDGRADIENT returns the gradient of the sigmoid function

%evaluated at z

% g = SIGMOIDGRADIENT(z) computes the gradient of the sigmoid function

% evaluated at z. This should work regardless if z is a matrix or a

% vector. In particular, if z is a vector or matrix, you should return

% the gradient for each element. g = zeros(size(z)); % ====================== YOUR CODE HERE ======================

% Instructions: Compute the gradient of the sigmoid function evaluated at

% each value of z (z can be a matrix, vector or scalar). g=sigmoid(z).*(1-sigmoid(z)); % ============================================================= end

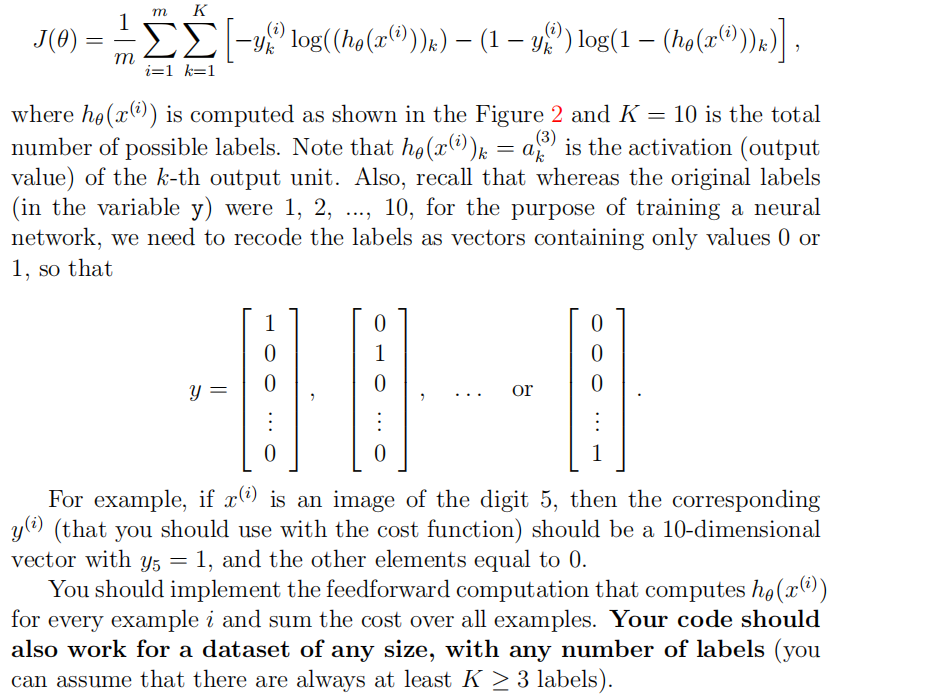

2.nnCostFunction

这是一道综合问题;

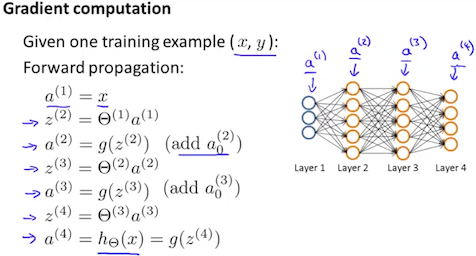

Ⅰ:计算代价函数J(前向传播)

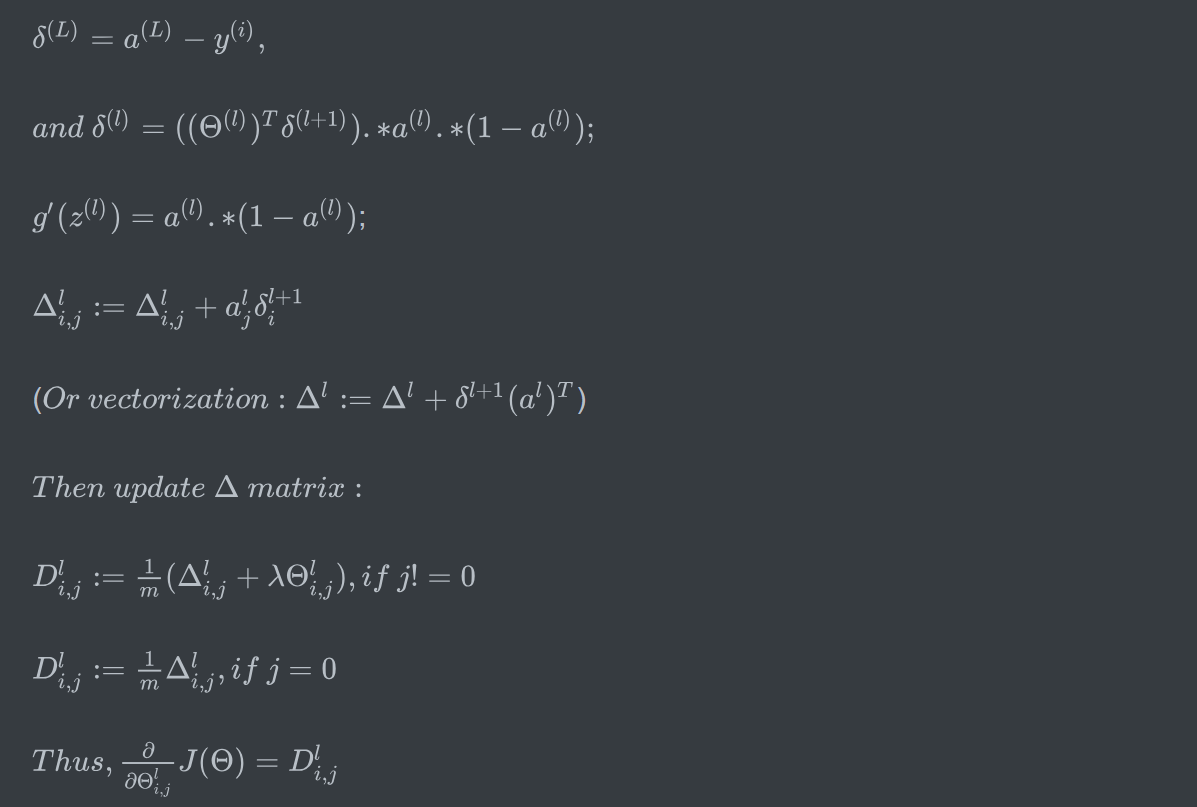

Ⅱ:BackPropagation

Ⅲ:正则化;

function [J grad] = nnCostFunction(nn_params, ...

input_layer_size, ...

hidden_layer_size, ...

num_labels, ...

X, y, lambda)

%NNCOSTFUNCTION Implements the neural network cost function for a two layer

%neural network which performs classification

% [J grad] = NNCOSTFUNCTON(nn_params, hidden_layer_size, num_labels, ...

% X, y, lambda) computes the cost and gradient of the neural network. The

% parameters for the neural network are "unrolled" into the vector

% nn_params and need to be converted back into the weight matrices.

%

% The returned parameter grad should be a "unrolled" vector of the

% partial derivatives of the neural network.

% % Reshape nn_params back into the parameters Theta1 and Theta2, the weight matrices

% for our 2 layer neural network

Theta1 = reshape(nn_params(1:hidden_layer_size * (input_layer_size + 1)), ...

hidden_layer_size, (input_layer_size + 1)); Theta2 = reshape(nn_params((1 + (hidden_layer_size * (input_layer_size + 1))):end), ...

num_labels, (hidden_layer_size + 1)); % Setup some useful variables

m = size(X, 1); % You need to return the following variables correctly

J = 0;

Theta1_grad = zeros(size(Theta1));

Theta2_grad = zeros(size(Theta2)); % ====================== YOUR CODE HERE ======================

% Instructions: You should complete the code by working through the

% following parts.

%

% Part 1: Feedforward the neural network and return the cost in the

% variable J. After implementing Part 1, you can verify that your

% cost function computation is correct by verifying the cost

% computed in ex4.m

%

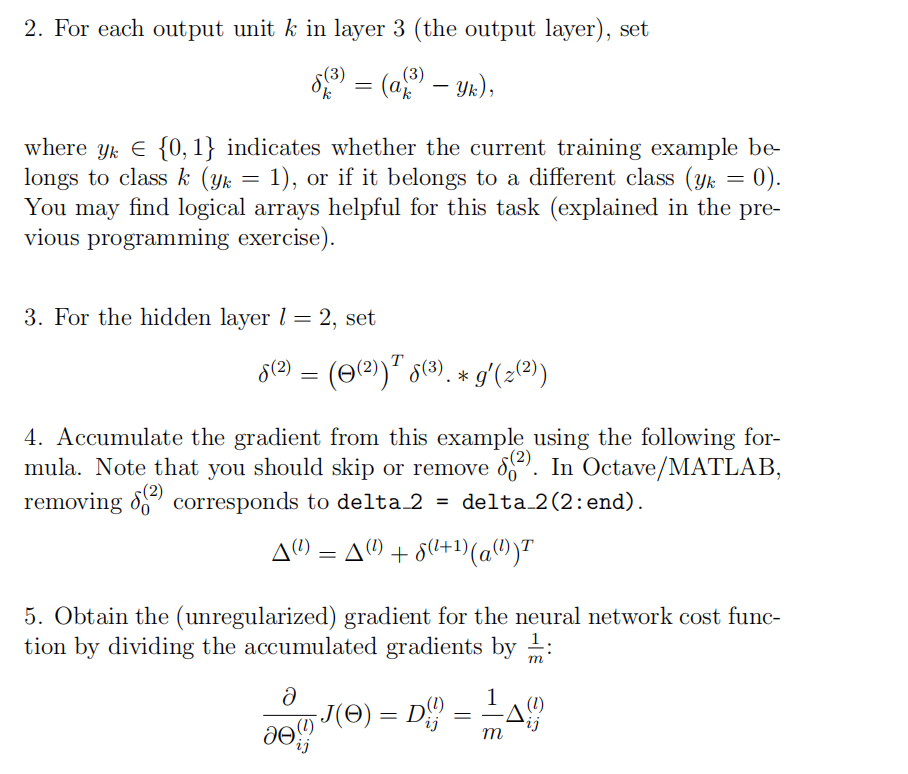

% Part 2: Implement the backpropagation algorithm to compute the gradients

% Theta1_grad and Theta2_grad. You should return the partial derivatives of

% the cost function with respect to Theta1 and Theta2 in Theta1_grad and

% Theta2_grad, respectively. After implementing Part 2, you can check

% that your implementation is correct by running checkNNGradients

%

% Note: The vector y passed into the function is a vector of labels

% containing values from 1..K. You need to map this vector into a

% binary vector of 1's and 0's to be used with the neural network

% cost function.

%

% Hint: We recommend implementing backpropagation using a for-loop

% over the training examples if you are implementing it for the

% first time.

%

% Part 3: Implement regularization with the cost function and gradients.

%

% Hint: You can implement this around the code for

% backpropagation. That is, you can compute the gradients for

% the regularization separately and then add them to Theta1_grad

% and Theta2_grad from Part 2.

% X=[ones(m,1) X];

a1=Theta1*X';

z1=[ones(m,1),sigmoid(a1)'];

a2=Theta2*z1';

h=sigmoid(a2); yy=zeros(m,num_labels);

for i=1:m,

yy(i,y(i))=1;

endfor

J=1/m*sum( sum( (-yy).*log(h')-(1-yy).*log(1-h') ) ); J=J+lambda/(2*m)*( sum(sum(Theta1(:,2:end).^2))+sum(sum(Theta2(:,2:end).^2))); for i=1:m,

a1=X(i,:)';

z2=Theta1*a1;

a2=[1;sigmoid(z2)];

z3=Theta2*a2;

a3=sigmoid(z3);

tmpy=yy(i,:);

dlt3=a3-tmpy';

dlt2=(Theta2(:,2:end)'*dlt3.*sigmoidGradient(z2)); Theta1_grad=Theta1_grad+dlt2*a1';

Theta2_grad=Theta2_grad+dlt3*a2';

endfor Theta1_grad=Theta1_grad./m;

Theta2_grad=Theta2_grad./m; Theta1(:,1)=0;

Theta2(:,1)=0; Theta1_grad=Theta1_grad+lambda/m*Theta1;

Theta2_grad=Theta2_grad+lambda/m*Theta2; % ------------------------------------------------------------- % ========================================================================= % Unroll gradients

grad = [Theta1_grad(:) ; Theta2_grad(:)]; end

Machine learning 第5周编程作业的更多相关文章

- Machine learning 第7周编程作业 SVM

1.Gaussian Kernel function sim = gaussianKernel(x1, x2, sigma) %RBFKERNEL returns a radial basis fun ...

- Machine learning第6周编程作业

1.linearRegCostFunction: function [J, grad] = linearRegCostFunction(X, y, theta, lambda) %LINEARREGC ...

- Machine learning 第8周编程作业 K-means and PCA

1.findClosestCentroids function idx = findClosestCentroids(X, centroids) %FINDCLOSESTCENTROIDS compu ...

- Machine learning第四周code 编程作业

1.lrCostFunction: 和第三周的那个一样的: function [J, grad] = lrCostFunction(theta, X, y, lambda) %LRCOSTFUNCTI ...

- 吴恩达深度学习第4课第3周编程作业 + PIL + Python3 + Anaconda环境 + Ubuntu + 导入PIL报错的解决

问题描述: 做吴恩达深度学习第4课第3周编程作业时导入PIL包报错. 我的环境: 已经安装了Tensorflow GPU 版本 Python3 Anaconda 解决办法: 安装pillow模块,而不 ...

- 吴恩达深度学习第2课第2周编程作业 的坑(Optimization Methods)

我python2.7, 做吴恩达深度学习第2课第2周编程作业 Optimization Methods 时有2个坑: 第一坑 需将辅助文件 opt_utils.py 的 nitialize_param ...

- c++ 西安交通大学 mooc 第十三周基础练习&第十三周编程作业

做题记录 风影影,景色明明,淡淡云雾中,小鸟轻灵. c++的文件操作已经好玩起来了,不过掌握好控制结构显得更为重要了. 我这也不做啥题目分析了,直接就题干-代码. 总结--留着自己看 1. 流是指从一 ...

- Machine Learning - 第7周(Support Vector Machines)

SVMs are considered by many to be the most powerful 'black box' learning algorithm, and by posing构建 ...

- Machine Learning - 第6周(Advice for Applying Machine Learning、Machine Learning System Design)

In Week 6, you will be learning about systematically improving your learning algorithm. The videos f ...

随机推荐

- 《OpenGL超级宝典》编程环境配置

最近在接触OpenGL,使用的书籍就是那本<OpenGL超级宝典>,不过编程环境的搭建和设置还是比较麻烦的,在网上找了很多资料,找不到GLTools.lib这个库.没办法自己就借助源码自己 ...

- 修复PlatformToolsets丢失问题(为VS2013以上版本安装VC90,VC100编译器)

前段时间测试VS2017的IDE时不小心弄丢了 MSBuild\Microsoft.Cpp\v4.0\Platforms\Win32\PlatformToolsets 下的VC90以及VC100的编译 ...

- Monokai风格的EditPlus配色方案

EditPlus的配置文件editplus_u.ini,该文件默认在:系统盘:\Users\用户名\AppData\Roaming\EditPlus目录中.将其中的内容替换为如下即可: [Option ...

- 用Swift实现一款天气预报APP(三)

这个系列的目录: 用Swift实现一款天气预报APP(一) 用Swift实现一款天气预报APP(二) 用Swift实现一款天气预报APP(三) 通过前面的学习,一个天气预报的APP已经基本可用了.至少 ...

- 机器学习—SVM

一.原理部分: 依然是图片~ 二.sklearn实现: import pandas as pd import numpy as np import matplotlib.pyplot as plt i ...

- 机器学习—K近邻

一.算法原理 还是图片格式~ 二.sklearn实现 import pandas as pd import numpy as np import matplotlib.pyplot as plt im ...

- EBS增加客制应用CUX:Custom Application

1. 创建数据库文件和帐号 [root@ebs12vis oracle]# su - oracle[oracle@ebs12vis ~]$ sqlplus / as sysdba SQL*Plus: ...

- Android-GsonUtil工具类

JSON解析封装相关工具类 public class GsonUtil { private static Gson gson = null; static { if (gson == null) { ...

- [转载]MVC、MVP以及Model2(上)

对于大部分面向最终用户的应用来说,它们都需要具有一个可视化的UI与用户进行交互,我们将这个UI称为视图(View).在早期,我们倾向于将所有与视图相关的逻辑糅合在一起,这些逻辑包括数据的呈现.用户操作 ...

- C# 实用小类

/// <summary> /// 汉字转换拼音 /// </summary> /// <param name="PinYin"></pa ...