Wu Yuxiong: Python Deep Learning and Practice (15)

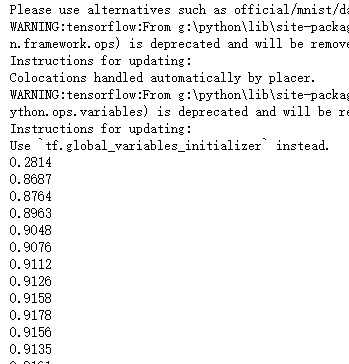

import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

# Load MNIST with one-hot labels (TensorFlow 1.x tutorial loader).
mnist = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

# Single-layer softmax model: 784 input pixels -> 10 classes.
x_data = tf.placeholder("float32", [None, 784])
weight = tf.Variable(tf.ones([784, 10]))
bias = tf.Variable(tf.ones([10]))
y_model = tf.nn.softmax(tf.matmul(x_data, weight) + bias)
y_data = tf.placeholder("float32", [None, 10])

# Sum-of-squares loss, minimized with plain gradient descent.
loss = tf.reduce_sum(tf.pow((y_model - y_data), 2))
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(loss)

# Build the accuracy ops once, outside the loop, so the graph does not grow.
correct_prediction = tf.equal(tf.argmax(y_model, 1), tf.argmax(y_data, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

init = tf.global_variables_initializer()
sess = tf.Session()
sess.run(init)

for step in range(1000):
    batch_xs, batch_ys = mnist.train.next_batch(100)
    sess.run(train_step, feed_dict={x_data: batch_xs, y_data: batch_ys})
    if step % 50 == 0:
        # Report test-set accuracy every 50 steps.
        print(sess.run(accuracy, feed_dict={x_data: mnist.test.images, y_data: mnist.test.labels}))
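
As a side illustration (not part of the original post), the sketch below shows what the all-ones initialization in the listing above implies for the very first forward pass: every class receives the same logit, so softmax returns a uniform 0.1 per class. The `pixels` values are made up, and the `softmax` helper is a plain-Python stand-in for `tf.nn.softmax`.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# A toy "image" with 784 pixel intensities in [0, 1] (made-up values).
pixels = [(i % 7) / 6.0 for i in range(784)]

# With weight = ones([784, 10]) and bias = ones([10]), every class logit
# is the same number: sum(pixels) + 1.
logits = [sum(pixels) + 1.0 for _ in range(10)]
probs = softmax(logits)

print(probs)  # ten identical probabilities
```

The outputs only start to differ between classes once the gradient steps have pushed the ten weight columns apart.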

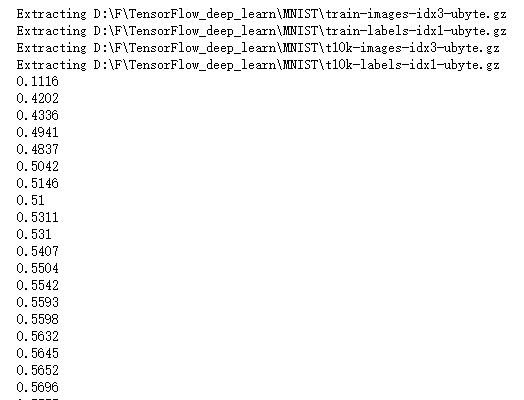

import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

mnist = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

# Same single-layer model, but with a ReLU output instead of softmax.
x_data = tf.placeholder("float32", [None, 784])
weight = tf.Variable(tf.ones([784, 10]))
bias = tf.Variable(tf.ones([10]))
y_model = tf.nn.relu(tf.matmul(x_data, weight) + bias)
y_data = tf.placeholder("float32", [None, 10])

# Cross-entropy-style loss. Note: ReLU outputs are not normalized
# probabilities, so this loss is unbounded below and training may diverge.
loss = -tf.reduce_sum(y_data * tf.log(y_model))
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(loss)

# Build the accuracy ops once, outside the loop, so the graph does not grow.
correct_prediction = tf.equal(tf.argmax(y_model, 1), tf.argmax(y_data, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

init = tf.global_variables_initializer()
sess = tf.Session()
sess.run(init)

for step in range(1000):
    batch_xs, batch_ys = mnist.train.next_batch(50)
    sess.run(train_step, feed_dict={x_data: batch_xs, y_data: batch_ys})
    if step % 50 == 0:
        # Report test-set accuracy every 50 steps.
        print(sess.run(accuracy, feed_dict={x_data: mnist.test.images, y_data: mnist.test.labels}))
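
To make the effect of pairing a ReLU output with a `-sum(y * log(y_model))` loss concrete, here is a minimal plain-Python sketch. The label and output vectors are hypothetical toy values, and `cross_entropy` is an illustrative helper, not a TensorFlow function. With softmax the true-class output is at most 1, so the loss is never negative; with ReLU the output can grow without limit, and the loss keeps decreasing as it does.

```python
import math


def cross_entropy(one_hot, outputs):
    """-sum(t * log(o)); terms with t == 0 contribute nothing and are skipped."""
    return -sum(t * math.log(o) for t, o in zip(one_hot, outputs) if t > 0)


label = [0.0, 1.0, 0.0]  # hypothetical one-hot label

# Softmax-like outputs: they sum to 1, so the true-class value is <= 1.
loss_softmax = cross_entropy(label, [0.2, 0.6, 0.2])

# ReLU-like outputs: nothing stops the true-class value from growing.
loss_relu_small = cross_entropy(label, [1.0, 2.0, 1.0])
loss_relu_large = cross_entropy(label, [1.0, 50.0, 1.0])

print(loss_softmax, loss_relu_small, loss_relu_large)
```

Because the optimizer can always lower this loss further by inflating the outputs, a low loss value here says little about classification quality.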

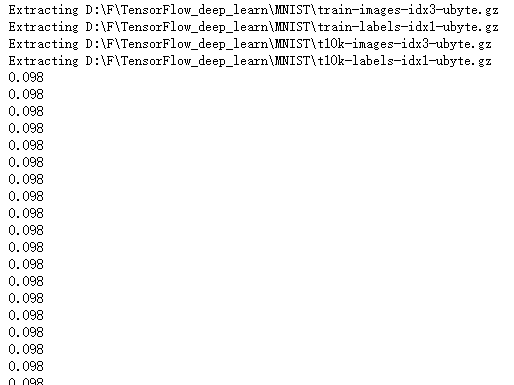

import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

mnist = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

x_data = tf.placeholder("float32", [None, 784])

# Hidden layer with 256 units (no activation function is applied).
weight1 = tf.Variable(tf.ones([784, 256]))
bias1 = tf.Variable(tf.ones([256]))
y1_model1 = tf.matmul(x_data, weight1) + bias1

# Output layer: 256 -> 10 classes, followed by softmax.
weight2 = tf.Variable(tf.ones([256, 10]))
bias2 = tf.Variable(tf.ones([10]))
y_model = tf.nn.softmax(tf.matmul(y1_model1, weight2) + bias2)
y_data = tf.placeholder("float32", [None, 10])

# Cross-entropy loss, minimized with plain gradient descent.
loss = -tf.reduce_sum(y_data * tf.log(y_model))
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(loss)

# Build the accuracy ops once, outside the loop, so the graph does not grow.
correct_prediction = tf.equal(tf.argmax(y_model, 1), tf.argmax(y_data, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

init = tf.global_variables_initializer()
sess = tf.Session()
sess.run(init)

for step in range(1000):
    batch_xs, batch_ys = mnist.train.next_batch(50)
    sess.run(train_step, feed_dict={x_data: batch_xs, y_data: batch_ys})
    if step % 50 == 0:
        # Report test-set accuracy every 50 steps.
        print(sess.run(accuracy, feed_dict={x_data: mnist.test.images, y_data: mnist.test.labels}))
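
One property of the two-layer listing above is worth spelling out: since no activation sits between the two `matmul` calls, the stack is mathematically equivalent to a single affine layer feeding the softmax. The sketch below checks this on hypothetical toy sizes (3 inputs, 2 hidden units, 2 outputs) with made-up numbers; `matmul` and `add_bias` are small illustrative helpers, not library functions.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def add_bias(m, bias):
    """Add a bias vector to every row of a matrix."""
    return [[v + bb for v, bb in zip(row, bias)] for row in m]


# Hypothetical toy values: 3 inputs -> 2 hidden units -> 2 outputs.
x = [[1.0, 2.0, 3.0]]
w1 = [[1.0, -1.0], [0.5, 2.0], [0.0, 1.0]]
b1 = [0.5, -0.5]
w2 = [[2.0, 0.0], [1.0, -1.0]]
b2 = [1.0, 1.0]

# Two stacked affine layers with no activation in between.
two_layer = add_bias(matmul(add_bias(matmul(x, w1), b1), w2), b2)

# The same map written as one affine layer: W = W1 W2, b = b1 W2 + b2.
w = matmul(w1, w2)
b = add_bias(matmul([b1], w2), b2)[0]
one_layer = add_bias(matmul(x, w), b)

print(two_layer, one_layer)
```

Inserting a nonlinearity such as ReLU after the first layer is what would make the hidden layer add representational power.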

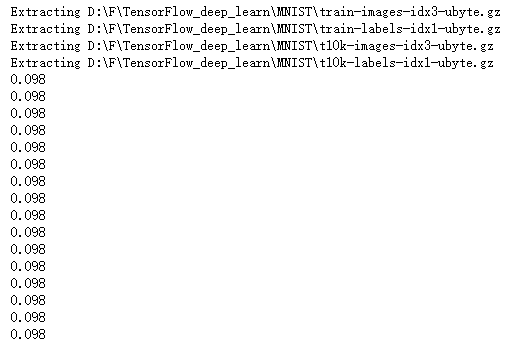

import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

mnist = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

# Reshape the flat 784-pixel input into 28x28 single-channel images.
x_data = tf.placeholder("float32", [None, 784])
x_image = tf.reshape(x_data, [-1, 28, 28, 1])

# Convolution layer: 5x5 kernels, 1 input channel, 32 output channels.
# SAME padding keeps 28x28 after the convolution; 2x2 pooling halves it to 14x14.
w_conv = tf.Variable(tf.ones([5, 5, 1, 32]))
b_conv = tf.Variable(tf.ones([32]))
h_conv = tf.nn.relu(tf.nn.conv2d(x_image, w_conv, strides=[1, 1, 1, 1], padding='SAME') + b_conv)
h_pool = tf.nn.max_pool(h_conv, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Fully connected layer on the flattened 14x14x32 feature maps.
w_fc = tf.Variable(tf.ones([14*14*32, 1024]))
b_fc = tf.Variable(tf.ones([1024]))
h_pool_flat = tf.reshape(h_pool, [-1, 14*14*32])
h_fc = tf.nn.relu(tf.matmul(h_pool_flat, w_fc) + b_fc)

# Output layer: 1024 -> 10 classes, followed by softmax.
W_fc2 = tf.Variable(tf.ones([1024, 10]))
b_fc2 = tf.Variable(tf.ones([10]))
y_model = tf.nn.softmax(tf.matmul(h_fc, W_fc2) + b_fc2)
y_data = tf.placeholder("float32", [None, 10])

loss = -tf.reduce_sum(y_data * tf.log(y_model))
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(loss)

# Build the accuracy ops once, outside the loop, so the graph does not grow.
correct_prediction = tf.equal(tf.argmax(y_model, 1), tf.argmax(y_data, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

init = tf.global_variables_initializer()
sess = tf.Session()
sess.run(init)

for step in range(1000):
    batch_xs, batch_ys = mnist.train.next_batch(200)
    sess.run(train_step, feed_dict={x_data: batch_xs, y_data: batch_ys})
    if step % 50 == 0:
        # Report test-set accuracy every 50 steps.
        print(sess.run(accuracy, feed_dict={x_data: mnist.test.images, y_data: mnist.test.labels}))
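
The `14*14*32` in the listing above follows from how `padding='SAME'` sizes its output: the spatial size is the input size divided by the stride, rounded up, regardless of the kernel size. A short sketch of that arithmetic, where `same_output_size` is an illustrative helper name rather than a TensorFlow function:

```python
import math


def same_output_size(size, stride):
    """Spatial output size for padding='SAME': ceil(size / stride)."""
    return math.ceil(size / stride)


after_conv = same_output_size(28, 1)          # 5x5 convolution, stride 1
after_pool = same_output_size(after_conv, 2)  # 2x2 max pooling, stride 2
flat_features = after_pool * after_pool * 32  # 32 output channels

print(after_conv, after_pool, flat_features)
```

The flattened size must match the first dimension of `w_fc`, otherwise the `tf.reshape` and `tf.matmul` calls would disagree on shapes.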

import tensorflow as tf
import tensorflow.examples.tutorials.mnist.input_data as input_data

mnist = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

x_data = tf.placeholder("float", shape=[None, 784])
y_data = tf.placeholder("float", shape=[None, 10])


def weight_variable(shape):
    # Small random weights instead of the all-ones initialization used earlier.
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='VALID')


def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='VALID')


# First convolution + pooling layer: 28x28x1 -> 24x24x32 -> 12x12x32.
W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
x_image = tf.reshape(x_data, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)

# Second convolution + pooling layer: 12x12x32 -> 8x8x64 -> 4x4x64.
W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

# Fully connected layer on the flattened 4x4x64 feature maps.
W_fc1 = weight_variable([4 * 4 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 4*4*64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Dropout: keep_prob is fed as 0.5 for training and 1.0 for evaluation.
keep_prob = tf.placeholder("float")
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# Output layer: 1024 -> 10 classes, followed by softmax.
W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

cross_entropy = -tf.reduce_sum(y_data * tf.log(y_conv))
train_step = tf.train.AdamOptimizer(1e-2).minimize(cross_entropy)
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_data, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

sess = tf.Session()
sess.run(tf.global_variables_initializer())

for i in range(1000):
    batch = mnist.train.next_batch(50)
    if i % 5 == 0:
        # Report accuracy on the current training batch, with dropout disabled.
        train_accuracy = sess.run(accuracy, feed_dict={x_data: batch[0], y_data: batch[1], keep_prob: 1.0})
        print("step %d, training accuracy %g" % (i, train_accuracy))
    sess.run(train_step, feed_dict={x_data: batch[0], y_data: batch[1], keep_prob: 0.5})
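
The `4 * 4 * 64` input size of the fully connected layer in the listing above can be checked by hand. With `padding='VALID'`, each convolution or pooling step shrinks the spatial size to `(size - window) // stride + 1`. The sketch below walks the four steps; `valid_output_size` is an illustrative helper name, not a TensorFlow function.

```python
def valid_output_size(size, window, stride):
    """Spatial output size for padding='VALID'."""
    return (size - window) // stride + 1


size = 28
sizes = [size]
# (window, stride) for: conv 5x5, pool 2x2, conv 5x5, pool 2x2.
for window, stride in [(5, 1), (2, 2), (5, 1), (2, 2)]:
    size = valid_output_size(size, window, stride)
    sizes.append(size)

flat_features = size * size * 64  # 64 channels after the second convolution

print(sizes, flat_features)
```

Switching the helpers to `padding='SAME'` would change these sizes, so the reshape and `W_fc1` dimensions would need to change with it.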
