吴裕雄 Python Machine Learning: the KNN Algorithm (1)

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def createDataSet():

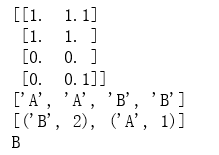

group = np.array([[1.0,1.1],[1.0,1.0],[0,0],[0,0.1]])

labels = ['A','A','B','B']

return group, labels data,labels = createDataSet()

print(data)

print(labels) test = np.array([[0,0.5]])

result = classify0(test,data,labels,3)

print(result)
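The `classify0` function above builds the difference matrix with `np.tile` and counts votes in a plain dictionary. As a minimal sketch of the same idea, NumPy broadcasting and `collections.Counter` can do both jobs; the name `knn_classify` is hypothetical and not part of the original listing.

```python
from collections import Counter

import numpy as np


def knn_classify(query, samples, labels, k):
    """Return the majority label among the k samples closest to query."""
    samples = np.asarray(samples, dtype=float)
    # Broadcasting subtracts the query from every row, so np.tile is not needed.
    diffs = samples - np.asarray(query, dtype=float)
    distances = np.sqrt((diffs ** 2).sum(axis=1))
    nearest = np.argsort(distances)[:k]
    # Tally the labels of the k nearest samples and take the most frequent one.
    votes = Counter(labels[i] for i in nearest)
    return votes.most_common(1)[0][0]


group = np.array([[1.0, 1.1], [1.0, 1.0], [0.0, 0.0], [0.0, 0.1]])
labels = ['A', 'A', 'B', 'B']
print(knn_classify([0, 0.5], group, labels, 3))  # prints B
```

For the query `[0, 0.5]` the three nearest points are `[0, 0.1]`, `[0, 0]` and `[1.0, 1.0]`, so the vote is two `B` against one `A`.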

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(int(listFromLine[-1]))

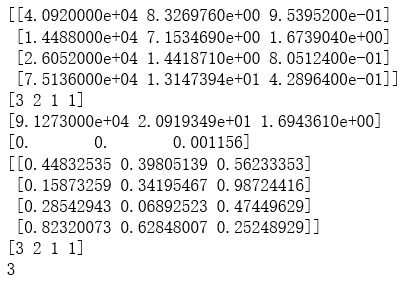

return np.array(returnMat),np.array(classLabelVector) trainData,trainLabel = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet2.txt")

print(trainData[0:4])

print(trainLabel[0:4]) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

return normDataSet, ranges, minVals normDataSet, ranges, minVals = autoNorm(trainData)

print(ranges)

print(minVals)

print(normDataSet[0:4])

print(trainLabel[0:4]) testData = np.array([[0.5,0.3,0.5]])

result = classify0(testData, normDataSet, trainLabel, 5)

print(result)
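`autoNorm` above rescales each column with `(value - min) / (max - min)`. The sketch below shows the same min-max scaling written with broadcasting. The function name `min_max_scale` and the handling of constant columns are assumptions added for illustration; the original listing divides by the range directly and would produce `nan` for a column whose values are all equal.

```python
import numpy as np


def min_max_scale(data):
    """Scale each column to [0, 1]; returns (scaled, ranges, min_vals)."""
    data = np.asarray(data, dtype=float)
    min_vals = data.min(axis=0)
    ranges = data.max(axis=0) - min_vals
    # Assumption: a constant column (range 0) maps to 0 instead of dividing by zero.
    safe_ranges = np.where(ranges == 0, 1.0, ranges)
    return (data - min_vals) / safe_ranges, ranges, min_vals


# Made-up sample values, not taken from the dating data file.
sample = np.array([[10.0, 200.0, 5.0],
                   [20.0, 400.0, 5.0],
                   [30.0, 800.0, 5.0]])
scaled, ranges, min_vals = min_max_scale(sample)
print(scaled)
print(ranges)
```

A new query point should be scaled with the training set's `min_vals` and `ranges` before it is passed to the classifier, otherwise its distances are measured on a different scale from the training rows.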

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(listFromLine[-1])

return np.array(returnMat),np.array(classLabelVector) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

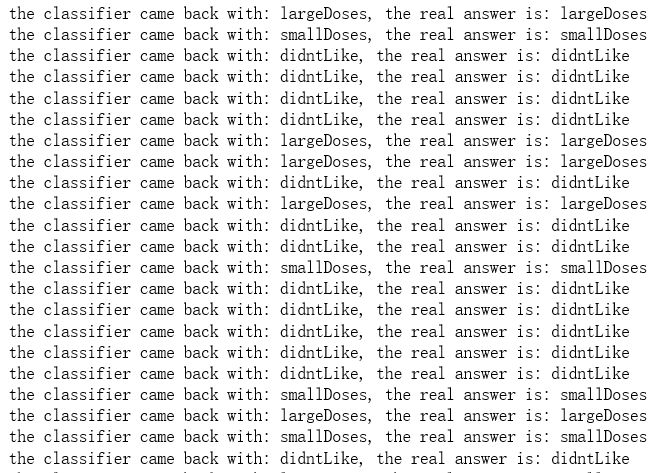

return normDataSet, ranges, minVals normDataSet, ranges, minVals = autoNorm(trainData) def datingClassTest():

hoRatio = 0.10 #hold out 10%

datingDataMat,datingLabels = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet.txt")

normMat, ranges, minVals = autoNorm(datingDataMat)

m = normMat.shape[0]

numTestVecs = int(m*hoRatio)

errorCount = 0.0

for i in range(numTestVecs):

classifierResult = classify0(normMat[i,:],normMat[numTestVecs:m,:],datingLabels[numTestVecs:m],3)

print(('the classifier came back with: %s, the real answer is: %s') % (classifierResult, datingLabels[i]))

if (classifierResult != datingLabels[i]):

errorCount += 1.0

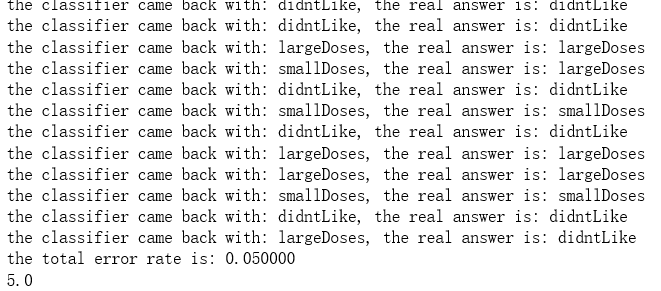

print(('the total error rate is: %f') % (errorCount/float(numTestVecs)))

print(errorCount) datingClassTest()
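`datingClassTest` above holds out the first 10% of the rows as a test set and classifies each of them against the remaining 90%. The sketch below reproduces that hold-out evaluation on synthetic data so it runs without the dating file. The names `knn_predict` and `holdout_error_rate`, the shuffling step, and the two-cluster data are all assumptions added for illustration; the original takes the first rows of the file without shuffling.

```python
import numpy as np


def knn_predict(query, samples, labels, k):
    """Majority label among the k samples nearest to query (Euclidean distance)."""
    distances = np.sqrt(((samples - query) ** 2).sum(axis=1))
    nearest_labels = labels[np.argsort(distances)[:k]]
    values, counts = np.unique(nearest_labels, return_counts=True)
    return values[np.argmax(counts)]


def holdout_error_rate(samples, labels, ratio=0.10, k=3, seed=0):
    """Shuffle, hold out the first `ratio` of the rows, and score kNN on them."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(samples))  # assumption: shuffle before splitting
    samples, labels = samples[order], labels[order]
    n_test = int(len(samples) * ratio)
    errors = 0
    for i in range(n_test):
        # Test rows are never part of the training slice samples[n_test:].
        predicted = knn_predict(samples[i], samples[n_test:], labels[n_test:], k)
        if predicted != labels[i]:
            errors += 1
    return errors / n_test


# Synthetic stand-in data: two well-separated clusters (not the dating file).
cluster_a = np.arange(20, dtype=float).reshape(10, 2) * 0.01
cluster_b = cluster_a + 5.0
samples = np.vstack([cluster_a, cluster_b])
labels = np.array(['A'] * 10 + ['B'] * 10)
print(holdout_error_rate(samples, labels, ratio=0.25, k=3))
```

Because the two clusters are far apart, every held-out point is classified correctly and the reported error rate is zero; real data such as the dating set will generally give a non-zero rate.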


import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(int(listFromLine[-1]))

return np.array(returnMat),np.array(classLabelVector) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

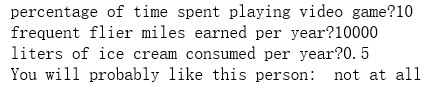

return normDataSet, ranges, minVals def classifyPerson():

resultList = ["not at all", "in samll doses", "in large doses"]

percentTats = float(input("percentage of time spent playing video game?"))

ffMiles = float(input("frequent flier miles earned per year?"))

iceCream = float(input("liters of ice cream consumed per year?"))

testData = np.array([percentTats,ffMiles,iceCream])

trainData,trainLabel = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet2.txt")

normDataSet, ranges, minVals = autoNorm(trainData)

result = classify0((testData-minVals)/ranges, normDataSet, trainLabel, 3)

print("You will probably like this person: ",resultList[result-1]) classifyPerson()


import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def img2vector(filename):

returnVect = []

fr = open(filename)

for i in range(32):

lineStr = fr.readline()

for j in range(32):

returnVect.append(int(lineStr[j]))

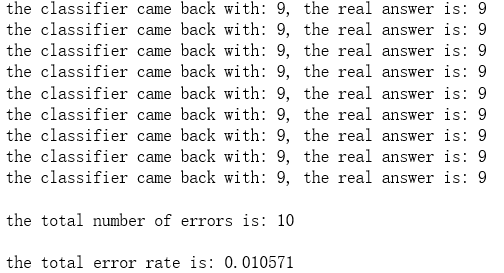

return np.array([returnVect]) def handwritingClassTest():

hwLabels = []

trainingFileList = listdir('D:\\LearningResource\\machinelearninginaction\\Ch02\\trainingDigits') #load the training set

m = len(trainingFileList)

trainingMat = np.zeros((m,1024))

for i in range(m):

fileNameStr = trainingFileList[i]

fileStr = fileNameStr.split('.')[0] #take off .txt

classNumStr = int(fileStr.split('_')[0])

hwLabels.append(classNumStr)

trainingMat[i,:] = img2vector('D:\\LearningResource\\machinelearninginaction\\Ch02\\trainingDigits\\%s' % fileNameStr)

testFileList = listdir('D:\\LearningResource\\machinelearninginaction\\Ch02\\testDigits') #iterate through the test set

mTest = len(testFileList)

errorCount = 0.0

for i in range(mTest):

fileNameStr = testFileList[i]

fileStr = fileNameStr.split('.')[0] #take off .txt

classNumStr = int(fileStr.split('_')[0])

vectorUnderTest = img2vector('D:\\LearningResource\\machinelearninginaction\\Ch02\\testDigits\\%s' % fileNameStr)

classifierResult = classify0(vectorUnderTest, trainingMat, hwLabels, 3)

print("the classifier came back with: %d, the real answer is: %d" % (classifierResult, classNumStr))

if (classifierResult != classNumStr):

errorCount += 1.0

print("\nthe total number of errors is: %d" % errorCount)

print("\nthe total error rate is: %f" % (errorCount/float(mTest))) handwritingClassTest()
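`img2vector` above turns a 32x32 text image of `0` and `1` characters into a single row of 1024 values, and `handwritingClassTest` reads the true digit from the part of the file name before the underscore. The sketch below shows both steps on in-memory data so they can be tried without the digit folders. The names `text_image_to_vector` and `label_from_filename`, the 4x4 sample image, and the file name `9_45.txt` are hypothetical examples.

```python
import numpy as np


def text_image_to_vector(lines, size=32):
    """Flatten `size` lines of '0'/'1' characters into a (1, size*size) array."""
    rows = [[int(ch) for ch in line.strip()[:size]] for line in lines[:size]]
    return np.array(rows, dtype=float).reshape(1, size * size)


def label_from_filename(name):
    """Extract the digit from a name shaped like <digit>_<index>.txt."""
    return int(name.split('.')[0].split('_')[0])


# A made-up 4x4 image standing in for a 32x32 digit file.
tiny = ["0110",
        "1001",
        "1001",
        "0110"]
vec = text_image_to_vector(tiny, size=4)
print(vec.shape)                        # prints (1, 16)
print(label_from_filename("9_45.txt"))  # prints 9
```

Each image becomes one row, so a folder of images stacks into a matrix with one row per file, which is the layout `classify0` expects for its training data.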
