吴裕雄--天生自然 Python Data Analysis: Historical Daily Prices and Volumes of All U.S. Stocks and ETFs

# This Python 3 environment comes with many helpful analytics libraries installed

# It is defined by the kaggle/python docker image: https://github.com/kaggle/docker-python

# For example, here's several helpful packages to load in

import matplotlib.pyplot as plt

import statsmodels.tsa.seasonal as smt

import numpy as np # linear algebra

import pandas as pd # data processing, CSV file I/O (e.g. pd.read_csv)

import random

import datetime as dt

from sklearn import linear_model

from sklearn.metrics import mean_absolute_error

import plotly.graph_objs

import plotly.offline

# import the relevant Keras modules

from keras.models import Sequential

from keras.layers import Activation, Dense

from keras.layers import LSTM

from keras.layers import Dropout

# Input data files are available in the "../input/" directory.

# For example, running this (by clicking run or pressing Shift+Enter) will list the files in the input directory

from subprocess import check_output

import os

os.chdir('F:\\kaggleDataSet\\price-volume\\Stocks')

#read data

# kernels let us navigate through the zipfile as if it were a directory

# trying to read a file of size zero will throw an error, so skip them

# filenames = [x for x in os.listdir() if x.endswith('.txt') and os.path.getsize(x) > 0]

# filenames = random.sample(filenames,1)

filenames = ['prk.us.txt', 'bgr.us.txt', 'jci.us.txt', 'aa.us.txt', 'fr.us.txt', 'star.us.txt', 'sons.us.txt', 'ipl_d.us.txt', 'sna.us.txt', 'utg.us.txt']

filenames = [filenames[1]]

print(filenames)

data = []

for filename in filenames:
    df = pd.read_csv(filename, sep=',')
    label, _, _ = filename.split(sep='.')
    # Tag every row with its source file so each series can be told apart later
    df['Label'] = filename
    df['Date'] = pd.to_datetime(df['Date'])
    data.append(df)

traces = []

for df in data:
    df = df.sort_values('Date')
    label = df['Label'].iloc[0]
    # WebGL scatter trace of the closing price, named after its source file
    trace = plotly.graph_objs.Scattergl(x=df['Date'], y=df['Close'], name=label)
    traces.append(trace)

layout = plotly.graph_objs.Layout(title='Plot')

fig = plotly.graph_objs.Figure(data=traces, layout=layout)

plotly.offline.init_notebook_mode(connected=True)

plotly.offline.iplot(fig, filename='dataplot')

df = data[0]

window_len = 10

# Create a data point (i.e. a date) which splits the training and testing set
split_date = list(data[0]["Date"][-(2*window_len+1):])[0]

# Split the training and test set
training_set, test_set = df[df['Date'] < split_date], df[df['Date'] >= split_date]

# Pass axis as a keyword: the positional form was removed in pandas 2.0
training_set = training_set.drop(['Date', 'Label', 'OpenInt'], axis=1)

test_set = test_set.drop(['Date', 'Label', 'OpenInt'], axis=1)

# Create windows for training

LSTM_training_inputs = []

for i in range(len(training_set) - window_len):
    temp_set = training_set[i:(i + window_len)].copy()
    # Normalise each column to the first row of its window
    for col in list(temp_set):
        temp_set[col] = temp_set[col] / temp_set[col].iloc[0] - 1
    LSTM_training_inputs.append(temp_set)

# Target: the close right after each window, relative to the window's first close
LSTM_training_outputs = (training_set['Close'][window_len:].values / training_set['Close'][:-window_len].values) - 1

LSTM_training_inputs = [np.array(LSTM_training_input) for LSTM_training_input in LSTM_training_inputs]

LSTM_training_inputs = np.array(LSTM_training_inputs)

# Create windows for testing

LSTM_test_inputs = []

for i in range(len(test_set) - window_len):
    temp_set = test_set[i:(i + window_len)].copy()
    # Normalise each column to the first row of its window
    for col in list(temp_set):
        temp_set[col] = temp_set[col] / temp_set[col].iloc[0] - 1
    LSTM_test_inputs.append(temp_set)

# Target: the close right after each window, relative to the window's first close
LSTM_test_outputs = (test_set['Close'][window_len:].values / test_set['Close'][:-window_len].values) - 1

LSTM_test_inputs = [np.array(LSTM_test_input) for LSTM_test_input in LSTM_test_inputs]

LSTM_test_inputs = np.array(LSTM_test_inputs)
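The windowing above can be condensed into one helper. The following is a minimal sketch on synthetic data, not the code used in this post: `make_windows` and the `toy` array are hypothetical names, and the sketch assumes that column 0 holds the closing price. Note that a zero in the first row of a window (for example a zero volume) would cause a division by zero in this scheme.

```python
import numpy as np

def make_windows(values, window_len):
    """Build normalised windows and targets from a 2-D array (rows = days).

    Assumption of this sketch: column 0 holds the closing price.
    """
    values = np.asarray(values, dtype=float)
    inputs, targets = [], []
    for i in range(len(values) - window_len):
        window = values[i:i + window_len]
        # Express every column relative to the first row of the window.
        inputs.append(window / window[0] - 1)
        # Target: the close right after the window, relative to the window's first close.
        targets.append(values[i + window_len, 0] / values[i, 0] - 1)
    return np.array(inputs), np.array(targets)

# Synthetic close and volume columns, for illustration only.
toy = np.column_stack([np.linspace(10.0, 12.0, 15), np.linspace(100.0, 130.0, 15)])
X, y = make_windows(toy, window_len=10)
print(X.shape, y.shape)  # (5, 10, 2) (5,)
```

The resulting input array has the `(samples, timesteps, features)` layout that the LSTM layer below reads through `inputs.shape[1]` and `inputs.shape[2]`.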

def build_model(inputs, output_size, neurons, activ_func="linear", dropout=0.10, loss="mae", optimizer="adam"):
    model = Sequential()
    # One LSTM layer reading windows of shape (timesteps, features)
    model.add(LSTM(neurons, input_shape=(inputs.shape[1], inputs.shape[2])))
    model.add(Dropout(dropout))
    model.add(Dense(units=output_size))
    model.add(Activation(activ_func))
    model.compile(loss=loss, optimizer=optimizer)
    return model

# initialise model architecture

nn_model = build_model(LSTM_training_inputs, output_size=1, neurons = 32)

# model output is next price normalised to 10th previous closing price

# train model on data

# note: nn_history contains information on the training error per epoch

nn_history = nn_model.fit(LSTM_training_inputs, LSTM_training_outputs, epochs=5, batch_size=1, verbose=2, shuffle=True)

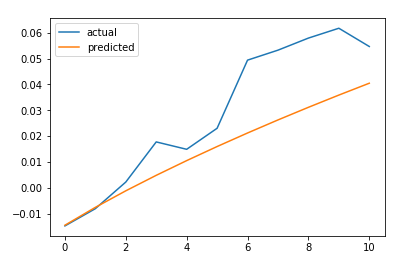

plt.plot(LSTM_test_outputs, label = "actual")

plt.plot(nn_model.predict(LSTM_test_inputs), label = "predicted")

plt.legend()

plt.show()

MAE = mean_absolute_error(LSTM_test_outputs, nn_model.predict(LSTM_test_inputs))

print('The Mean Absolute Error is: {}'.format(MAE))
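The MAE above is measured in normalised units, because the model output is the next close relative to the first close of its window. A minimal sketch of mapping such values back to prices follows; `to_prices` is a hypothetical helper and the numbers are made up for illustration. In the code above, the matching base prices would be `test_set['Close'][:-window_len].values`.

```python
import numpy as np

def to_prices(normalised, first_closes):
    """Invert y = close / first_close - 1 to recover absolute prices."""
    normalised = np.asarray(normalised, dtype=float)
    first_closes = np.asarray(first_closes, dtype=float)
    return (normalised + 1) * first_closes

# Made-up normalised outputs and the first close of each window.
example_prices = to_prices([0.0, 0.05, -0.02], [10.0, 20.0, 50.0])
print(example_prices)
```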

#https://github.com/llSourcell/How-to-Predict-Stock-Prices-Easily-Demo/blob/master/lstm.py

def predict_sequence_full(model, data, window_size):
    # Shift the window by 1 new prediction each time, re-run predictions on new window
    curr_frame = data[0]
    predicted = []
    for i in range(len(data)):
        predicted.append(model.predict(curr_frame[np.newaxis, :, :])[0, 0])
        curr_frame = curr_frame[1:]
        curr_frame = np.insert(curr_frame, [window_size - 1], predicted[-1], axis=0)
    return predicted

predictions = predict_sequence_full(nn_model, LSTM_test_inputs, 10)

plt.plot(LSTM_test_outputs, label="actual")

plt.plot(predictions, label="predicted")

plt.legend()

plt.show()

MAE = mean_absolute_error(LSTM_test_outputs, predictions)

print('The Mean Absolute Error is: {}'.format(MAE))

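Conclusion

LSTM cannot solve the time series forecasting problem. Its prediction one time step ahead is not much better than a lag model. If we increase the number of time steps predicted, performance does not degrade as quickly as with other, more traditional methods. In this case, however, our error increased by a factor of about 4.5. It grows superlinearly with the number of time steps we try to predict.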
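For reference, the lag model mentioned above can be scored with the same normalisation as the LSTM targets. The following is a minimal sketch under assumptions: `lag_baseline_mae` is a hypothetical helper, the lag model is taken to mean "the next close equals the last close inside the window", and the prices are made up. No result from the post is reproduced here.

```python
import numpy as np

def lag_baseline_mae(close, window_len):
    """MAE of a lag model, in the same normalised units as the LSTM targets."""
    close = np.asarray(close, dtype=float)
    base = close[:-window_len]                    # first close of each window
    actual = close[window_len:] / base - 1        # same target definition as above
    # Lag model (assumed): the next close equals the last close inside the window.
    lagged = close[window_len - 1:-1] / base - 1
    return np.mean(np.abs(actual - lagged))

# Made-up closing prices, for illustration only.
toy_close = [10.0, 11.0, 12.0, 13.0, 14.0]
baseline_mae = lag_baseline_mae(toy_close, window_len=2)
print('Lag baseline MAE: {}'.format(baseline_mae))
```

Calling it with `test_set['Close'].values` and `window_len` would give a number directly comparable to the one-step MAE printed earlier.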
